
Ship Agents that Work

AI & Agent Engineering Platform. One place for development, observability, and evaluation.

Powering the world’s leading AI teams

1 trillion spans processed

50 million evals per month

5 million downloads per month

One platform.

Close the loop between AI development and production.

Integrate development and production to enable a data-driven iteration cycle: real production data powers better development, and production observability is aligned with trusted evaluations.

Arize AX: Observability built for enterprise.

AX gives your organization the power to manage and improve AI offerings at scale.

Explore Arize AI Observability for:

01

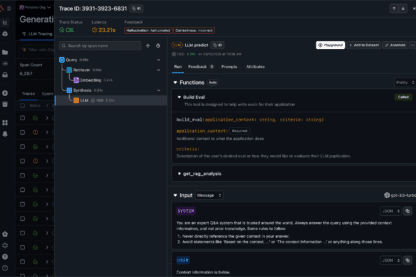

Development tools to build high-quality agents and AI apps

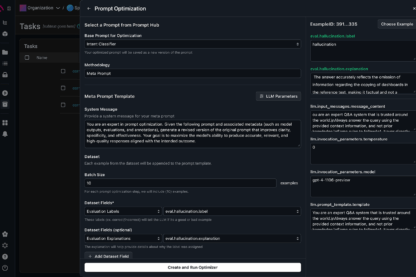

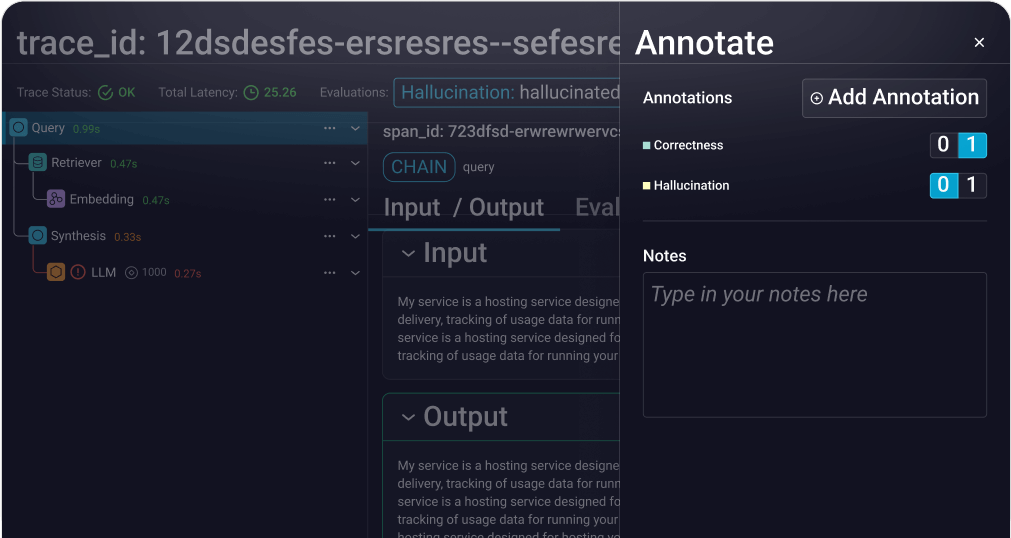

Prompt Optimization

Make agents self-improving with automatic optimization using evaluations and annotations


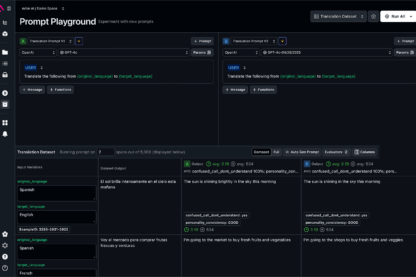

Replay in Playground

Replay, debug, and perfect your prompts with a playground designed for development


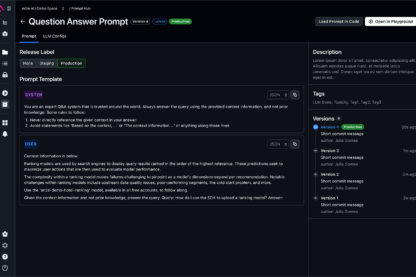

Prompt Serving and Management

Manage prompts, serve optimizations fast, and empower everyone to make changes


02

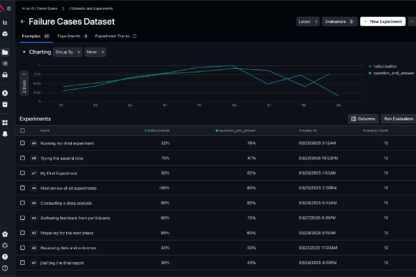

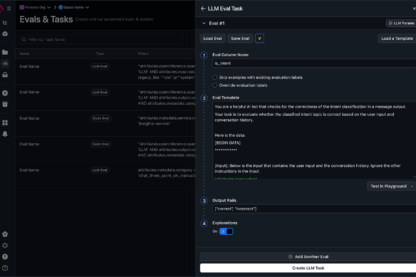

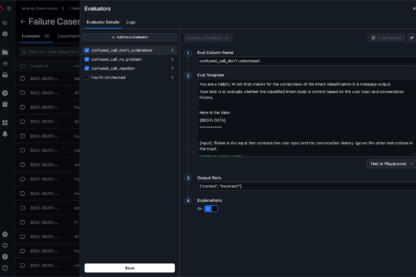

Evaluation that powers reliable, production-ready AI applications and agents

03

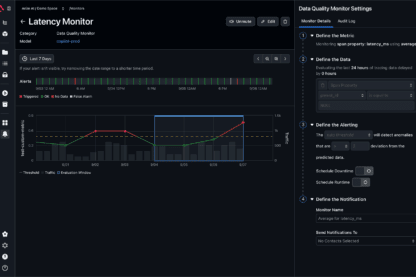

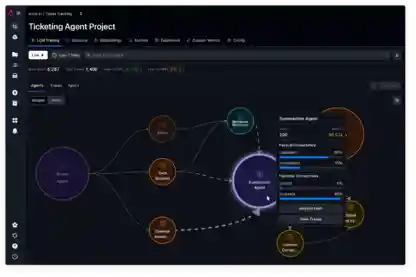

Observability to debug, trace, and improve your AI agents and applications

Complete Visibility into ML Model Performance

Pinpoint model failures and root causes.

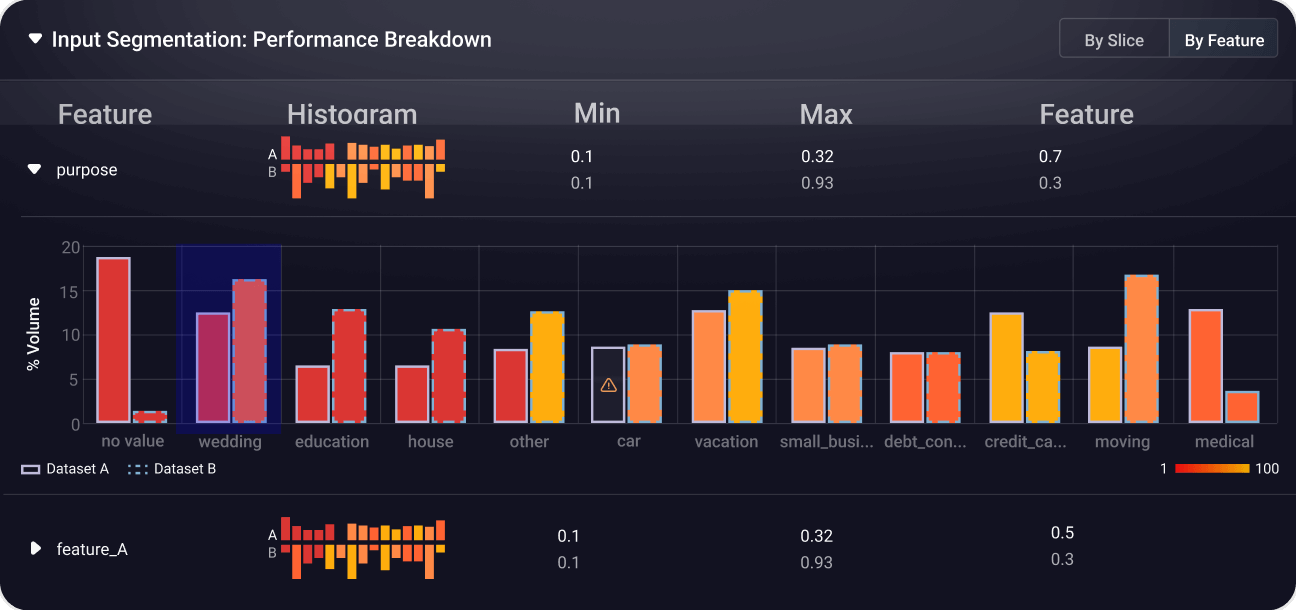

Quickly surface failure modes with heatmaps, identify underperforming slices, and understand why models make specific decisions, so you can optimize performance and reduce bias.


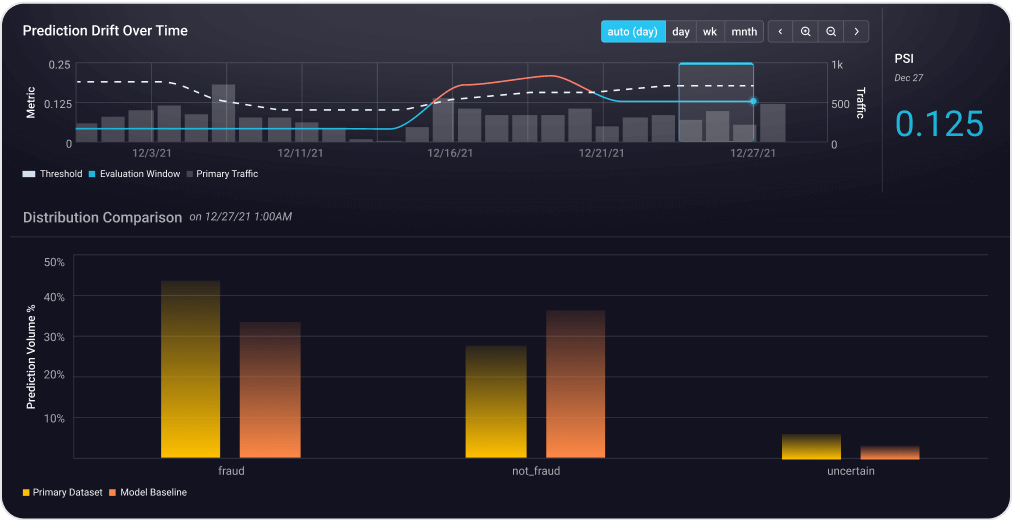

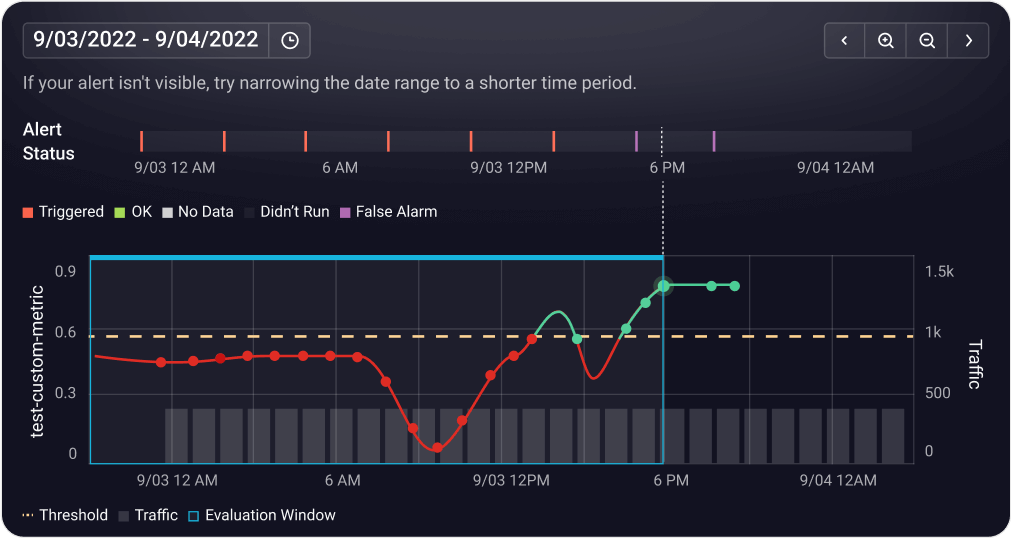

Detect and address model drift early.

Continuously monitor feature and model drift across training, validation, and production environments to catch unexpected shifts before they impact performance.
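This page does not say how drift is scored. As one illustration, the Population Stability Index (PSI) is a widely used drift metric: bin a feature using the baseline (for example, training) distribution, then sum (q_i - p_i) * ln(q_i / p_i) over the bins, where p_i and q_i are the baseline and production fractions in bin i. The following is a minimal, stdlib-only sketch under stated assumptions (equal-width bins over the baseline range, a small epsilon floor to avoid log(0)); `psi` and `_bin_fractions` are hypothetical names, and this is not Arize's implementation.

```python
import bisect
import math
from typing import List, Sequence


def _bin_fractions(values: Sequence[float], edges: List[float], eps: float = 1e-6) -> List[float]:
    """Fraction of `values` in each bin; out-of-range values are clamped into the edge bins."""
    counts = [0] * (len(edges) - 1)
    for v in values:
        idx = bisect.bisect_right(edges, v) - 1
        idx = min(max(idx, 0), len(counts) - 1)  # clamp to first/last bin
        counts[idx] += 1
    total = len(values)
    # Floor empty bins at eps so the log ratio stays finite (an assumed smoothing choice).
    return [max(c / total, eps) for c in counts]


def psi(baseline: Sequence[float], production: Sequence[float], n_bins: int = 10) -> float:
    """Population Stability Index between a baseline and a production sample (hypothetical helper)."""
    if not baseline or not production:
        raise ValueError("both samples must be non-empty")
    lo, hi = min(baseline), max(baseline)
    if hi == lo:
        raise ValueError("baseline has zero range; cannot build bins")
    width = (hi - lo) / n_bins
    # Equal-width edges over the baseline range (assumption; quantile bins are also common).
    edges = [lo + i * width for i in range(n_bins + 1)]
    p = _bin_fractions(baseline, edges)
    q = _bin_fractions(production, edges)
    # Each term (q - p) * ln(q / p) is >= 0, so PSI is >= 0 and is 0 for identical binned distributions.
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))
```

Identical samples give a PSI of zero, and the score grows as production mass moves into bins that were sparse in the baseline. A commonly cited rule of thumb treats values above roughly 0.25 as significant drift, but any alerting threshold is a judgment call for your data.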


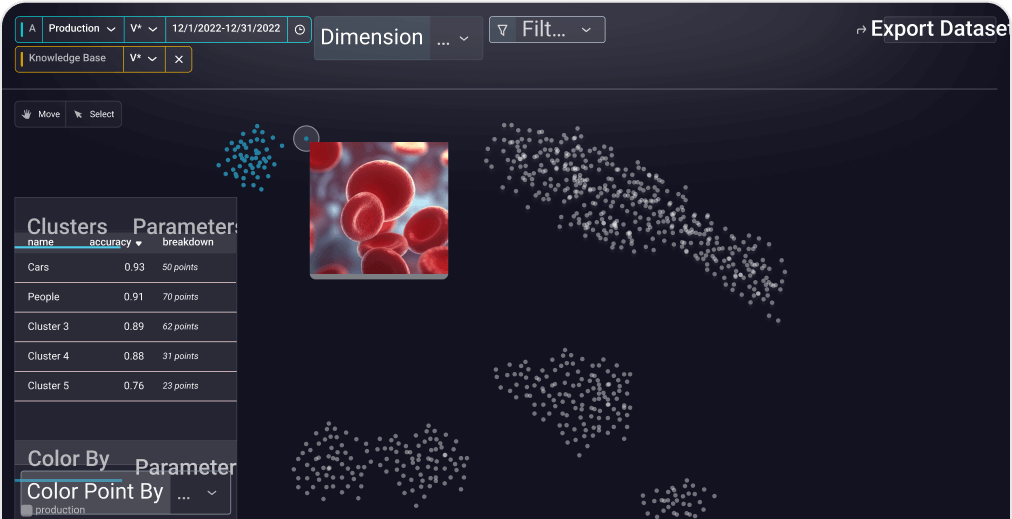

Find and analyze critical data patterns.

Leverage AI-driven cluster search to uncover anomalies, identify edge cases, and curate datasets for deeper analysis and model improvement.


Monitor embeddings to prevent silent failures.

Track embedding drift across NLP, computer vision, and multi-modal models to maintain stable, high-quality feature representations.
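The page does not specify how embedding drift is measured. One simple, commonly used approach compares the centroid (mean vector) of a baseline window of embeddings with the centroid of a production window using Euclidean distance. The following is a hedged, stdlib-only sketch of that idea; `centroid` and `embedding_drift` are hypothetical names, not part of any Arize SDK.

```python
import math
from typing import List, Sequence


def centroid(vectors: Sequence[Sequence[float]]) -> List[float]:
    """Component-wise mean of a non-empty list of equal-length embedding vectors."""
    if not vectors:
        raise ValueError("need at least one vector")
    dim = len(vectors[0])
    sums = [0.0] * dim
    for v in vectors:
        if len(v) != dim:
            raise ValueError("all embeddings must have the same dimension")
        for i, x in enumerate(v):
            sums[i] += x
    return [s / len(vectors) for s in sums]


def embedding_drift(
    baseline: Sequence[Sequence[float]], production: Sequence[Sequence[float]]
) -> float:
    """Euclidean distance between baseline and production centroids (hypothetical helper).

    math.dist raises ValueError if the two windows use different embedding dimensions.
    """
    return math.dist(centroid(baseline), centroid(production))
```

A centroid distance is cheap to compute and easy to chart over time, but it can miss changes that leave the mean unchanged (for example, a distribution that spreads out). Cosine distance or distribution-level tests are reasonable alternatives depending on the embedding model.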


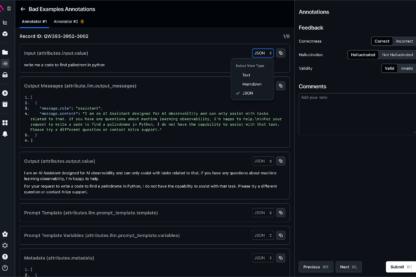

Improve model performance with better data.

Augment your datasets with human feedback, labels, and metadata while systematically curating data for experimentation, A/B testing, and iterative model improvements.


A Platform Built for Production AI

Data foundation and intelligent agent for building, evaluating, and improving AI.

Building & Evaluating AI Agents.

Continue your journey into AI specialization with advanced learning hubs.

Built on open source & open standards.

As AI engineers, we believe in total control and transparency.

Just the tools you need to do your job, interoperable with the rest of your stack.

No black box eval models.

From evaluation libraries to eval models, it’s all open-source for you to access, assess, and apply as you see fit.

See the evals library

No proprietary frameworks.

Built on top of OpenTelemetry, Arize’s LLM observability is vendor-, framework-, and language-agnostic, giving you flexibility in an evolving generative AI landscape.

OpenInference conventions

No data lock-in.

Standard data file formats enable unparalleled interoperability and easy integration with other tools and systems, so you stay in complete control of your data.

Arize Phoenix OSS

Created by AI engineers, for AI engineers.