DYMO-Hair

Generalizable Volumetric DYnamics MOdeling for Robot Hair Manipulation

Team

1 Carnegie Mellon University

2 Epic Games

3 Meta Codec Avatars Lab

4 Bosch Center for AI

TL;DR

- A study of model-based approaches for robot hair manipulation.

- DYMO-Hair, a unified, model-based robot system for visual goal-conditioned hair styling, evaluated across diverse hairstyles in simulation and real-world settings.

- A 3D volumetric dynamics model for hair combing, generalizable to diverse unseen hairstyles, enabled by a compact 3D latent space of diverse hairstyles and an action-conditioned latent state editing mechanism.

- A hair simulator with a novel Position-Based Dynamics (PBD) method for strand-level, contact-rich hair-combing simulation.

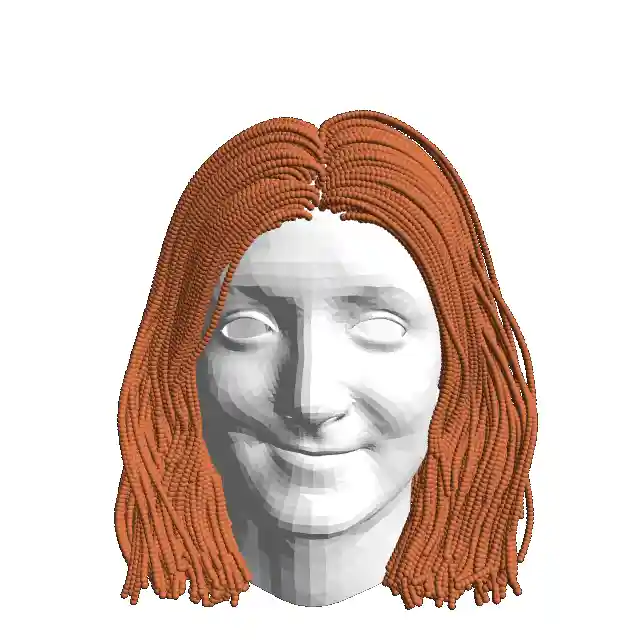

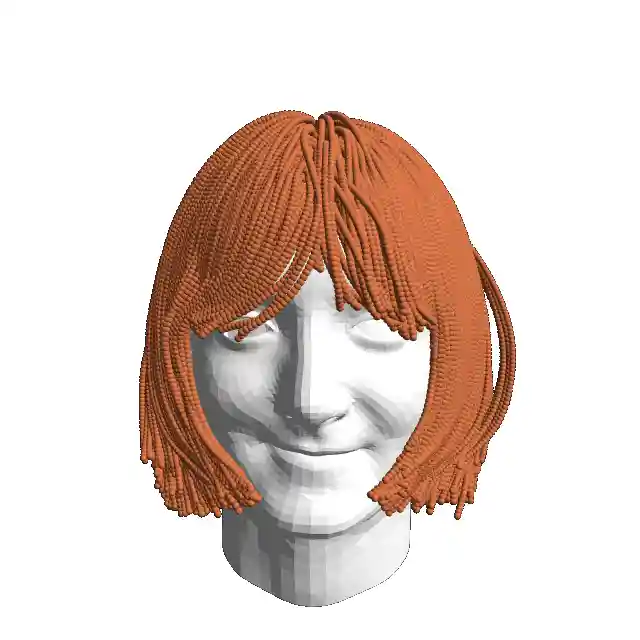

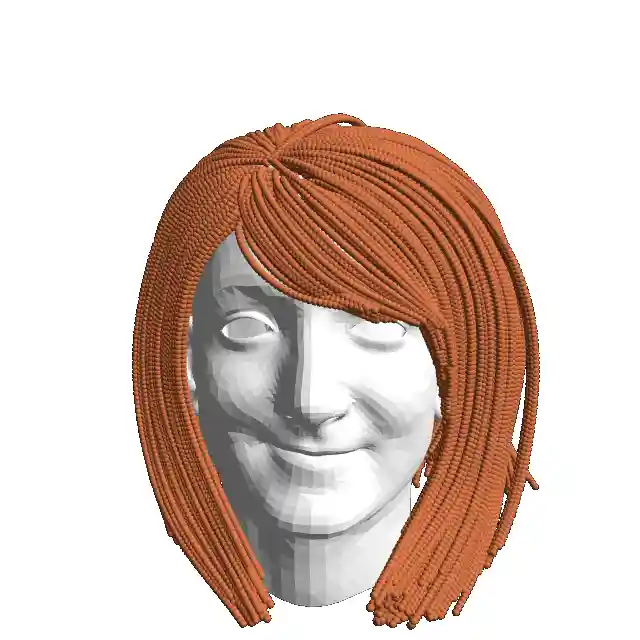

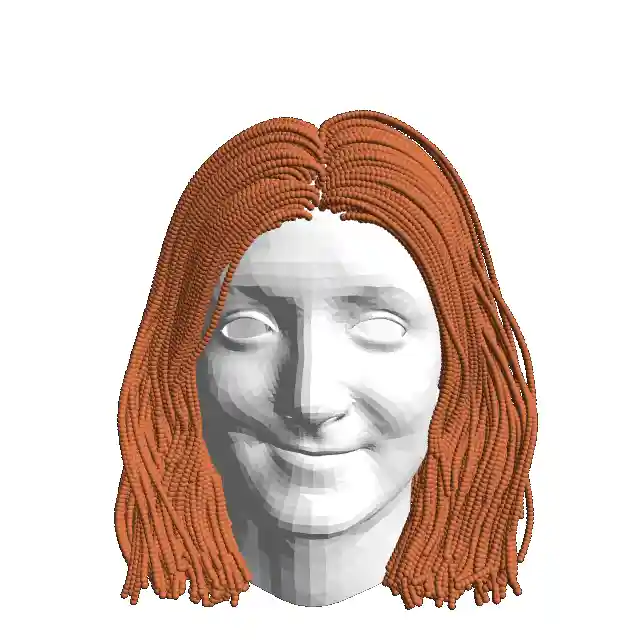

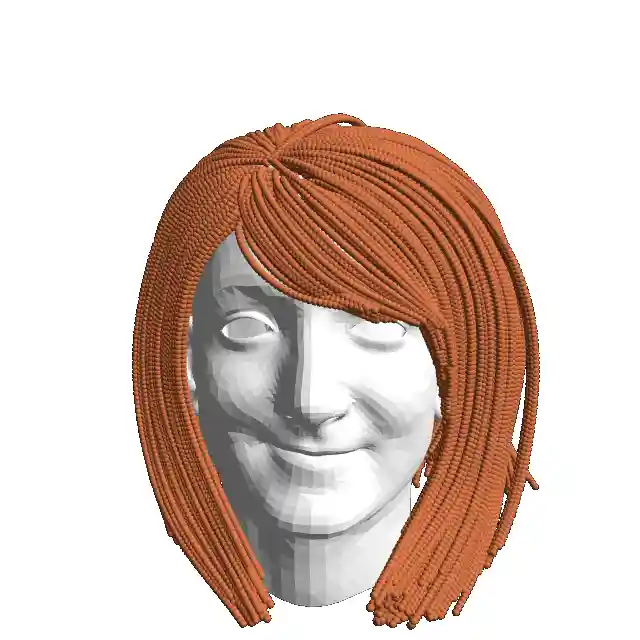

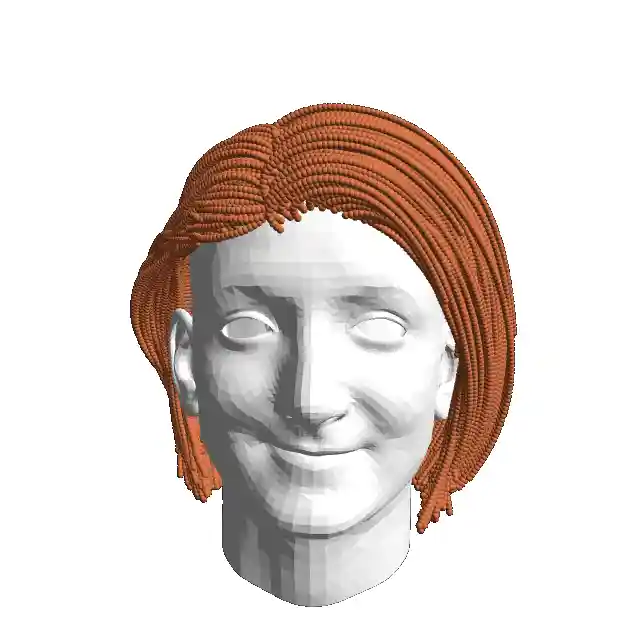

Hair State Representation for Dynamics Modeling

[Figure: "Static State" and "Dynamics Process" panels, each labeled "Grid", "Corresponding Strand Segments", and "[Proposed]".]

Design Highlight

Representing hair state as a high-resolution volumetric occupancy grid with a 3D orientation field, enabling:

- Capturing both the spatial distribution and the flow direction of hair, achieving high fidelity.

- Efficient and robust state estimation from multi-view RGB-D data in the real world.

- More structured processing with highly optimized neural operators and dense voxel-level supervision for learning.

- Maintaining acceptable accuracy when resolution is sufficiently high.
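To make the representation concrete, here is a minimal sketch of rasterizing strand polylines into an occupancy grid plus a per-voxel orientation field. It is an illustration under stated assumptions, not the system's actual pipeline: the function name `voxelize_strands`, the cubic `bounds`, the low `grid_res`, and the midpoint sampling rule are all hypothetical choices made for this example.

```python
import numpy as np

def voxelize_strands(strands, grid_res=32, bounds=(-1.0, 1.0)):
    """Rasterize strand polylines into an occupancy grid and an orientation field.

    strands: list of (N_i, 3) arrays of points ordered root to tip.
    Returns occupancy (R, R, R) bool and orientation (R, R, R, 3) unit vectors.
    Assumption: each segment is sampled only at its midpoint, so segments
    should be shorter than one voxel for gap-free occupancy.
    """
    lo, hi = bounds
    occ_count = np.zeros((grid_res,) * 3, dtype=np.int64)
    orient_sum = np.zeros((grid_res,) * 3 + (3,), dtype=np.float64)

    for pts in strands:
        pts = np.asarray(pts, dtype=np.float64)
        seg = pts[1:] - pts[:-1]                      # segment vectors, root to tip
        length = np.linalg.norm(seg, axis=1)
        keep = length > 1e-12                         # drop degenerate segments
        mid = (0.5 * (pts[1:] + pts[:-1]))[keep]
        direction = seg[keep] / length[keep, None]    # unit flow direction
        idx = np.floor((mid - lo) / (hi - lo) * grid_res).astype(int)
        inside = np.all((idx >= 0) & (idx < grid_res), axis=1)
        idx, direction = idx[inside], direction[inside]
        # Accumulate with add.at so repeated voxel indices are all counted.
        np.add.at(occ_count, (idx[:, 0], idx[:, 1], idx[:, 2]), 1)
        np.add.at(orient_sum, (idx[:, 0], idx[:, 1], idx[:, 2]), direction)

    occupancy = occ_count > 0
    norm = np.linalg.norm(orient_sum, axis=-1, keepdims=True)
    # Average direction per occupied voxel; empty voxels get a zero vector.
    orientation = np.where(norm > 1e-12, orient_sum / np.maximum(norm, 1e-12), 0.0)
    return occupancy, orientation

# Usage: one straight strand hanging along -z through the grid center.
z = np.linspace(0.9, -0.9, 50)
strand = np.stack([np.zeros_like(z), np.zeros_like(z), z], axis=1)
occupancy, orientation = voxelize_strands([strand], grid_res=16)
print(occupancy.sum(), orientation[occupancy][0])
```

Averaging directions per voxel is one simple way to obtain a flow direction; it loses information where strands cross within a voxel, which is why resolution matters for accuracy.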

Hair Combing Dynamics Learning

Design Highlight

A novel two-phase neural dynamics learning paradigm, designed for generalizable volumetric hair dynamics modeling.

- Phase 1: Pre-training a compact 3D latent space for diverse hair states (various hairstyles and deformations).

- Phase 2: Adapting the pre-trained model with a ControlNet-style framework for dynamics learning, formulating the dynamics as an action-conditioned latent state editing process.
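The ControlNet-style idea in Phase 2 can be sketched in a few lines: keep the pre-trained model frozen, add a trainable branch that also sees the action, and join the two through a zero-initialized projection so that training starts from the pre-trained behavior. The classes below are hypothetical stand-ins with single dense layers; the page does not describe the actual architecture, which presumably operates on 3D latent volumes.

```python
import numpy as np

rng = np.random.default_rng(0)

class FrozenLatentModel:
    """Stand-in for the Phase 1 pre-trained model (weights are never updated)."""
    def __init__(self, dim):
        self.W = rng.normal(scale=0.1, size=(dim, dim))

    def __call__(self, z):
        return np.tanh(z @ self.W)

class ControlBranch:
    """Trainable branch: starts as a copy of the frozen weights, takes the action
    as an extra input, and feeds back through a zero-initialized projection."""
    def __init__(self, frozen, action_dim):
        dim = frozen.W.shape[0]
        self.W = frozen.W.copy()                        # trainable copy
        self.W_action = rng.normal(scale=0.1, size=(action_dim, dim))
        self.W_zero = np.zeros((dim, dim))              # zero-initialized output layer

    def __call__(self, z, action):
        h = np.tanh(z @ self.W + action @ self.W_action)
        return h @ self.W_zero

def edit_latent(frozen, branch, z, action):
    """Action-conditioned latent edit: frozen path plus the control residual."""
    return frozen(z) + branch(z, action)

# Usage: before any training, the edit reproduces the frozen model exactly.
dim, action_dim = 8, 4
frozen = FrozenLatentModel(dim)
branch = ControlBranch(frozen, action_dim)
z = rng.normal(size=(2, dim))
action = rng.normal(size=(2, action_dim))
z_next_init = edit_latent(frozen, branch, z, action)

# Simulate a training update by making the projection non-zero.
branch.W_zero = 0.1 * np.eye(dim)
z_next_trained = edit_latent(frozen, branch, z, action)
```

The zero initialization means the action-conditioned branch cannot disturb the pre-trained latent space at the start of Phase 2; it only learns how actions should edit the latent state.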

DYMO-Hair System: Visual Goal-conditioned Hair Styling

Design Highlight

- A model-based, closed-loop system, capable of handling diverse hairstyles and visual goal configurations.
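A model-based, closed-loop controller can be sketched as a loop that observes the state, scores candidate actions with the dynamics model against the goal, executes the best one, and repeats. The sketch below uses random-shooting planning on a toy 2D state purely for illustration; the planner, cost, action space, and every name here (`plan_action`, `run_closed_loop`, `ToyEnv`) are assumptions, not the method used by DYMO-Hair.

```python
import numpy as np

def plan_action(state, goal, dynamics_model, candidates, cost_fn):
    """Pick the candidate whose predicted next state is closest to the goal."""
    costs = [cost_fn(dynamics_model(state, a), goal) for a in candidates]
    return candidates[int(np.argmin(costs))]

def run_closed_loop(observe, execute, goal, dynamics_model, sample_candidates,
                    cost_fn, max_steps=30, tol=0.05, seed=0):
    """Re-observe and re-plan after every executed action."""
    rng = np.random.default_rng(seed)
    for _ in range(max_steps):
        state = observe()
        if cost_fn(state, goal) < tol:
            break
        action = plan_action(state, goal, dynamics_model,
                             sample_candidates(rng), cost_fn)
        execute(action)
    return observe()

class ToyEnv:
    """Toy world whose true response is weaker than the model predicts."""
    def __init__(self):
        self.state = np.zeros(2)

    def observe(self):
        return self.state.copy()

    def execute(self, action):
        self.state = self.state + 0.9 * action   # deliberate model mismatch

def toy_model(state, action):
    return state + action

def toy_cost(state, goal):
    return float(np.linalg.norm(state - goal))

def toy_candidates(rng, n=64):
    samples = rng.uniform(-0.2, 0.2, size=(n, 2))
    # Include the "do nothing" action so the plan never predicts a worse state.
    return np.vstack([np.zeros((1, 2)), samples])

goal = np.array([1.0, -0.5])
env = ToyEnv()
initial_cost = toy_cost(env.observe(), goal)
final_state = run_closed_loop(env.observe, env.execute, goal,
                              toy_model, toy_candidates, toy_cost)
final_cost = toy_cost(final_state, goal)
print(initial_cost, final_cost)
```

Because the loop re-observes after each action, the mismatch between `toy_model` and `ToyEnv` is corrected step by step instead of accumulating, which is the practical benefit of closing the loop.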

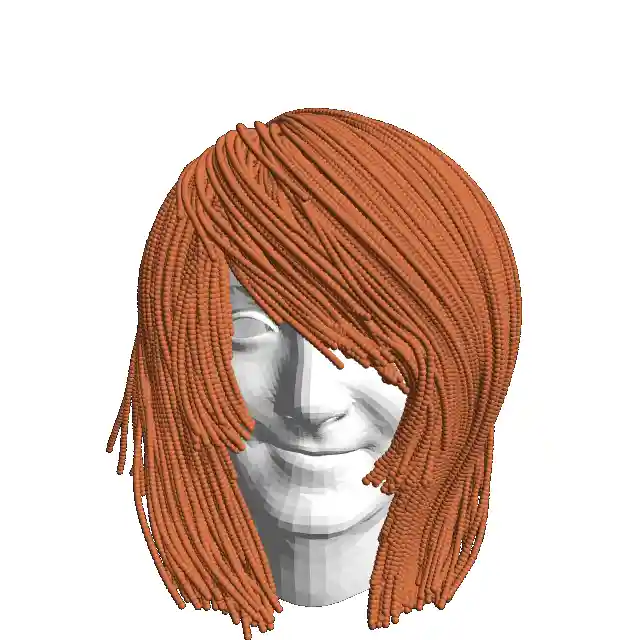

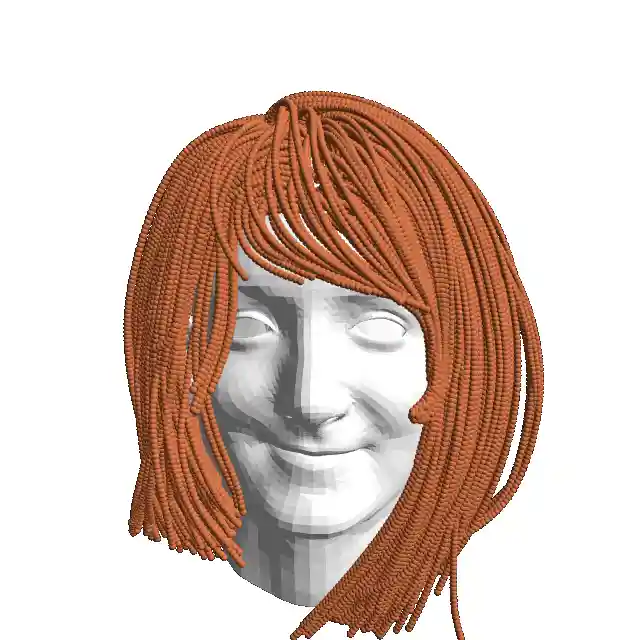

Strand-level, Contact-rich Hair Combing Simulation

Design Highlight

- With all three constraints, the hair maintains a realistic shape.

- The twist constraint, in particular, preserves the strand's curvature and prevents gravity-induced oversmoothing.
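For readers unfamiliar with Position-Based Dynamics, the sketch below shows one generic PBD step for a single strand using only a distance (stretch) constraint with a pinned root. It is the textbook scheme, not the novel method of this work: the three constraints referred to above are not specified on this page, and the twist constraint in particular is not implemented here. The function name `pbd_step` and all parameters are illustrative assumptions.

```python
import numpy as np

def pbd_step(pos, vel, rest_len, dt=1.0 / 60.0, gravity=(0.0, 0.0, -9.81), iters=50):
    """One PBD step for a strand with equal-mass particles and a pinned root.

    pos, vel: (N, 3) arrays; rest_len: (N - 1,) rest lengths of the segments.
    """
    # 1. Predict positions from velocities and external forces.
    pred = pos + dt * (vel + dt * np.asarray(gravity, dtype=np.float64))
    pred[0] = pos[0]                                  # root stays attached
    # 2. Iteratively project the distance constraints (Gauss-Seidel order).
    for _ in range(iters):
        for i in range(len(pred) - 1):
            d = pred[i + 1] - pred[i]
            dist = np.linalg.norm(d)
            if dist < 1e-12:
                continue
            corr = (dist - rest_len[i]) * d / dist
            if i == 0:
                pred[i + 1] -= corr                   # root has infinite mass
            else:
                pred[i] += 0.5 * corr
                pred[i + 1] -= 0.5 * corr
    # 3. Derive velocities from the corrected positions.
    new_vel = (pred - pos) / dt
    return pred, new_vel

# Usage: a horizontal strand released under gravity for half a second.
n = 6
pos = np.stack([np.linspace(0.0, 0.5, n), np.zeros(n), np.zeros(n)], axis=1)
vel = np.zeros_like(pos)
rest_len = np.full(n - 1, 0.1)
for _ in range(30):
    pos, vel = pbd_step(pos, vel, rest_len)
seg_len = np.linalg.norm(np.diff(pos, axis=0), axis=1)
print(pos[-1], seg_len)
```

With only a distance constraint the strand behaves like a limp chain and straightens under gravity, which gives some intuition for why additional constraints are needed to preserve a strand's curvature.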

Dynamics Learning Results on Unseen Hairstyles

Key Takeaway

- Our model outperforms all baselines in capturing local deformation on unseen hairstyles, highlighting its effectiveness and generalizability for hair-combing dynamics modeling.

Hair Styling Simulation Results on Unseen Hairstyles

Comparison of All Methods on Hard Cases

DYMO-Hair (Ours) on a Wider Variety of Messy Cases

Key Takeaway

- DYMO-Hair successfully styles a wide range of unseen hairstyles with varying difficulty, surpassing both the state-of-the-art rule-based system and all model-based baselines.

- Compared to the state-of-the-art rule-based system, DYMO-Hair reduces the final geometric error by 22% and achieves a 42% higher absolute success rate on average.

- This highlights the benefit of incorporating a dynamics model and shows that a more capable model improves closed-loop manipulation at the system level.

Hair Styling Real-world Tests on Wigs

Key Takeaway

- Real-world tests exhibit zero-shot transferability of DYMO-Hair to wigs, achieving consistent success on challenging unseen hairstyles where the state-of-the-art rule-based system fails.

- Compared to the state-of-the-art rule-based system, DYMO-Hair is more robust to environmental variations, generalizes across hair lengths, and can effectively capture spatially long-horizon goals.

Acknowledgements

We would like to thank Feiyu Zhu and John Z. Zhang for their valuable feedback and discussions. This work was supported by NSF IIS-2112633 and the NSF Graduate Research Fellowship under Grant No. DGE2140739.

Contact

If you have any questions, please feel free to contact Chengyang Zhao.