DreamControl: Human-Inspired Whole-Body Humanoid Control for Scene Interaction via Guided Diffusion

Abstract

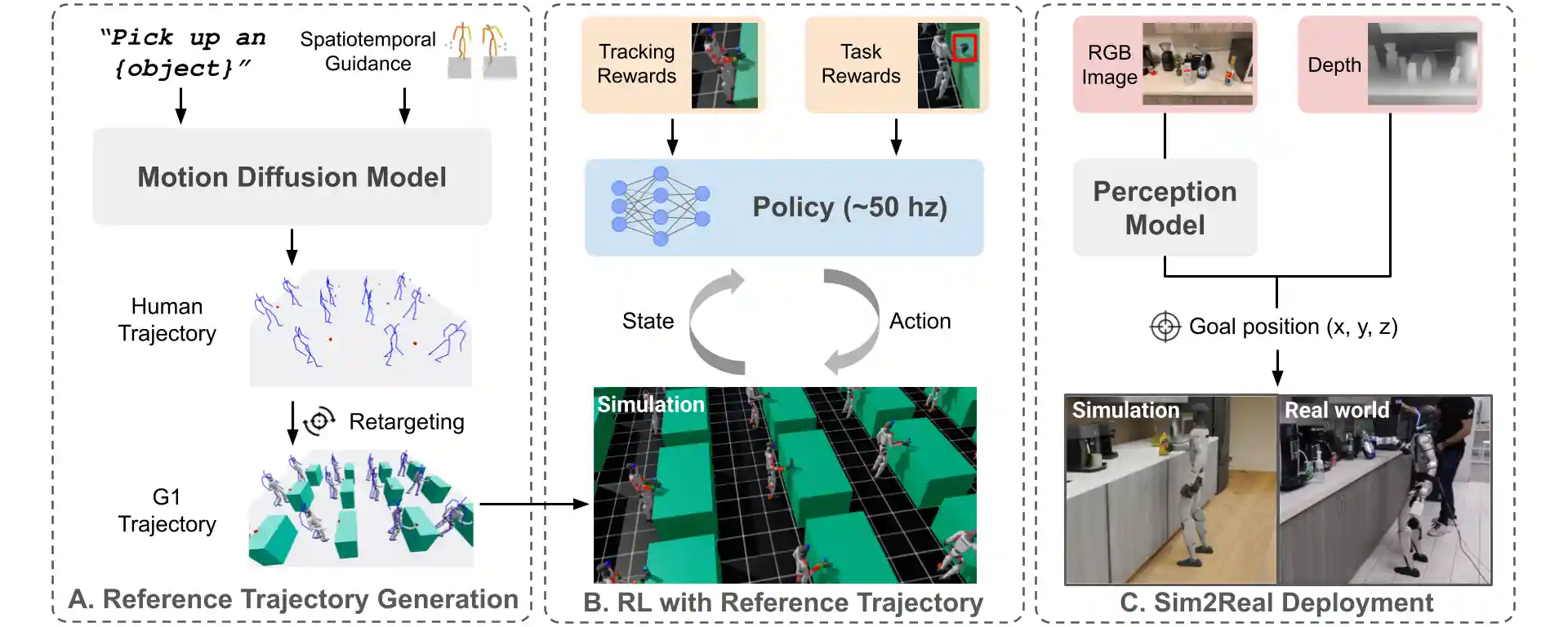

We introduce DreamControl, a novel methodology for learning autonomous whole-body humanoid skills. DreamControl leverages the strengths of diffusion models and reinforcement learning (RL): our core innovation is the use of a diffusion prior trained on human motion data, which subsequently guides an RL policy in simulation to complete specific tasks of interest (e.g., opening a drawer or picking up an object). We demonstrate that this human motion-informed prior allows RL to discover solutions unattainable by direct RL, and that diffusion models inherently promote natural-looking motions, aiding in sim-to-real transfer. We validate DreamControl's effectiveness on a Unitree G1 robot across a diverse set of challenging tasks involving simultaneous lower and upper body control and object interaction.
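The abstract says only that the diffusion prior "guides" the RL policy; it does not give the reward. As a minimal sketch, assuming the prior supplies a reference joint configuration at each step and the policy is rewarded both for tracking it and for completing the task (a common reference-tracking pattern, not necessarily DreamControl's exact formulation), the reward shaping could look like the following. All names and weights here (`tracking_reward`, `shaped_reward`, `sigma`, `w_track`, `w_task`) are hypothetical.

```python
import math
from typing import Sequence


def tracking_reward(q: Sequence[float], q_ref: Sequence[float], sigma: float = 0.5) -> float:
    """Hypothetical Gaussian-kernel tracking term in (0, 1].

    Returns 1.0 when the robot's joint vector q matches the reference q_ref
    (assumed here to come from the diffusion prior) and decays as the squared
    error grows; sigma sets how quickly it decays.
    """
    if len(q) != len(q_ref):
        raise ValueError("joint vectors must have the same length")
    sq_err = sum((a - b) ** 2 for a, b in zip(q, q_ref))
    return math.exp(-sq_err / sigma ** 2)


def shaped_reward(
    q: Sequence[float],
    q_ref: Sequence[float],
    task_success: bool,
    w_track: float = 0.5,
    w_task: float = 1.0,
    sigma: float = 0.5,
) -> float:
    """Hypothetical total reward: weighted tracking term plus a binary task term."""
    return w_track * tracking_reward(q, q_ref, sigma) + w_task * float(task_success)


if __name__ == "__main__":
    q_ref = [0.0, 0.1, -0.2]  # illustrative reference pose, not real data
    print(shaped_reward([0.0, 0.1, -0.2], q_ref, task_success=True))   # perfect tracking + success
    print(shaped_reward([0.3, 0.1, -0.2], q_ref, task_success=False))  # some tracking error, no success
```

The dense tracking term gives RL a signal toward human-like motion even before the sparse task term ever fires, which is one plausible way a motion prior can help RL reach solutions it would not find on its own.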

Teaser Video

Skills deployed on hardware

"Open top drawer"

"Open 3rd drawer from top"

"Open top drawer" (loaded drawer)

"Open drawer" (loaded drawer)

"Pick and handover vanilla syrup"

"Pick and handover dishwasher soap"

"Pick and handover teabag"

"Pick and handover Cheetos packet"

"Start microwave"

"Open microwave"

"Press button to call elevator"

"Open microwave"

"Start microwave"

"Press elevator button"

"Bimanual lift box with 1 book"

"Bimanual lift box with 2 books"

"Bimanual lift box with 4 books"

"Bimanual lift box with 1 book"

"Bimanual lift box with 2 books"

"Bimanual lift box with 4 books"

"Punch at the given target"

"Punch the given target"

"Punch the given target"

"Punch the given target"

"Perform a full squat"

"Perform a half squat"

"Perform a full squat"

"Perform a half squat"

Skills deployed in simulation

"Pick the mustard bottle on platform"

"Pick the Cheez-It packet on ground" (top grasp)

"Pick the bottle on ground" (side grasp)

"Pick the sugar packet on platform"

"Pick the tomato can on platform"

"Pick the mug on platform"

"Open top drawer"

"Open 2nd drawer from top"

"Open 3rd drawer from top"

"Open top drawer"

"Open 2nd drawer from top"

"Open 3rd drawer from top"

"Press button to call elevator"

"Press button to call elevator"

"Press button to call elevator"

"Press button to call elevator"

"Punch the given target"

"Punch the given target"

"Punch the given target"

"Punch the given target"

"Kick the given target"

"Kick the given target"

"Kick the given target"

"Kick the given target"

"Bimanual lift the box"

"Jump over the obstacle"

"Sit at the given position"

"Bimanual lift the box"

"Jump over the obstacle"

"Sit at the given position"

DreamControl Methodology

Acknowledgements

We would like to thank Yasvi Patel for her help in recording and editing all the videos; Brandon Rishi for his help with the hardware; and Viswesh Nagaswamy Rajesh, Srujan Deolasee, and Geordie Moffatt for their help with recording videos of the robot.

BibTeX

@article{Kalaria2025DreamControlHW,
  title={DreamControl: Human-Inspired Whole-Body Humanoid Control for Scene Interaction via Guided Diffusion},
  author={Dvij Kalaria and Sudarshan S. Harithas and Pushkal Katara and Sangkyung Kwak and Sarthak Bhagat and Shankar Sastry and Srinath Sridhar and Sai H. Vemprala and Ashish Kapoor and Jonathan Chung-Kuan Huang},
  journal={ArXiv},
  year={2025},
  volume={abs/2509.14353}
}