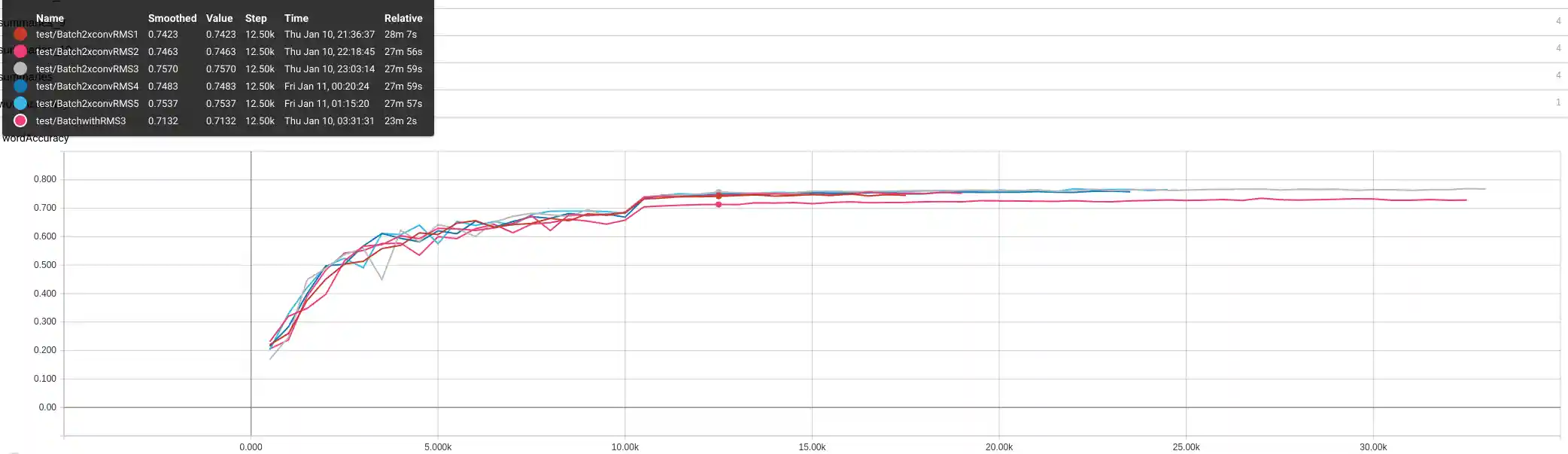

Doubling the number of conv layers improves accuracy

I'm not sure the title will surprise anyone, but with a deeper network (two conv layers per block instead of one) I cleared 76% word accuracy. More interestingly, stopping the deeper network at around epoch 25 already yields a 74-75% word accuracy model, which is both more accurate and faster to reach than training the smaller network to the bitter end.
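The post doesn't show the training loop. As a minimal sketch, assuming hypothetical `train_epoch` and `validate` callables (the latter returning validation word accuracy), capping a run at a fixed epoch budget while keeping patience-based early stopping could look like this. `max_epochs=25` mirrors the epoch mentioned above; `patience=5` is an arbitrary assumed value.

```python
from typing import Callable, List, Tuple


def train_with_budget(
    train_epoch: Callable[[int], None],  # hypothetical: runs one training epoch
    validate: Callable[[int], float],    # hypothetical: returns validation word accuracy
    max_epochs: int = 25,                # assumed budget, echoing "around epoch 25"
    patience: int = 5,                   # assumed: stop after this many epochs without improvement
) -> Tuple[int, float, List[float]]:
    """Train until max_epochs is reached or accuracy stalls for `patience` epochs.

    Returns (best_epoch, best_accuracy, per-epoch accuracy history).
    """
    best_epoch, best_acc = 0, float("-inf")
    history: List[float] = []
    epochs_without_improvement = 0
    for epoch in range(1, max_epochs + 1):
        train_epoch(epoch)
        acc = validate(epoch)
        history.append(acc)
        if acc > best_acc:
            # New best: a real loop would save a checkpoint here
            best_epoch, best_acc = epoch, acc
            epochs_without_improvement = 0
        else:
            epochs_without_improvement += 1
            if epochs_without_improvement >= patience:
                break  # accuracy has stalled; stop before the budget runs out
    return best_epoch, best_acc, history
```

In practice you would restore the checkpoint from `best_epoch` at the end, since the last epoch run is not necessarily the best one.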

Relevant code:

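```python
import tensorflow as tf  # TF 1.x API (tf.layers, tf.truncated_normal)

# `pool` must already hold the input to the first layer when the loop starts.
# tf.layers.batch_normalization puts its moving-average updates in
# tf.GraphKeys.UPDATE_OPS, so the training op has to depend on those ops.
for i in range(numLayers):
    # First conv of the block: featureVals[i] -> featureVals[i + 1] channels
    kernel = tf.Variable(tf.truncated_normal([kernelVals[i], kernelVals[i], featureVals[i], featureVals[i + 1]], stddev=0.1))
    conv = tf.nn.conv2d(pool, kernel, padding='SAME', strides=(1, 1, 1, 1))
    conv_norm = tf.layers.batch_normalization(conv, training=self.is_train)
    relu = tf.nn.relu(conv_norm)

    # Added second conv: same kernel size, featureVals[i + 1] -> featureVals[i + 1] channels
    kernel2 = tf.Variable(tf.truncated_normal([kernelVals[i], kernelVals[i], featureVals[i + 1], featureVals[i + 1]], stddev=0.1))
    conv2 = tf.nn.conv2d(relu, kernel2, padding='SAME', strides=(1, 1, 1, 1))
    conv_norm2 = tf.layers.batch_normalization(conv2, training=self.is_train)
    relu2 = tf.nn.relu(conv_norm2)

    # SAME-padded stride-1 convs keep the spatial size, so only this pool downsamples
    pool = tf.nn.max_pool(relu2, (1, poolVals[i][0], poolVals[i][1], 1), (1, strideVals[i][0], strideVals[i][1], 1), 'VALID')
```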
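To get a feel for what the second conv per block costs, here is a small sketch that counts conv kernel weights for the one-conv and two-conv versions. The `kernel_vals` and `feature_vals` below are hypothetical settings for a 5-block CNN chosen for illustration (the post doesn't list them), and batch-norm parameters are left out; the convs in the loop have no bias terms.

```python
from typing import List, Tuple


def conv_weight_counts(kernel_vals: List[int], feature_vals: List[int]) -> Tuple[int, int]:
    """Return (one-conv-per-block weights, two-conv-per-block weights).

    Counts only conv kernel weights; batch-norm parameters are ignored.
    """
    single = 0
    extra = 0
    for i, k in enumerate(kernel_vals):
        c_in, c_out = feature_vals[i], feature_vals[i + 1]
        single += k * k * c_in * c_out  # shape of `kernel`: [k, k, c_in, c_out]
        extra += k * k * c_out * c_out  # shape of `kernel2`: [k, k, c_out, c_out]
    return single, single + extra


# Hypothetical layer settings (assumed, not from the post)
kernel_vals = [5, 5, 3, 3, 3]
feature_vals = [1, 32, 64, 128, 128, 256]
single, doubled = conv_weight_counts(kernel_vals, feature_vals)
print(single, doubled)  # 568096 1580832
```

Under these assumed settings the doubled network has roughly 2.8x as many conv weights, and the single largest addition is the last block's 256-to-256 conv (589,824 weights).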