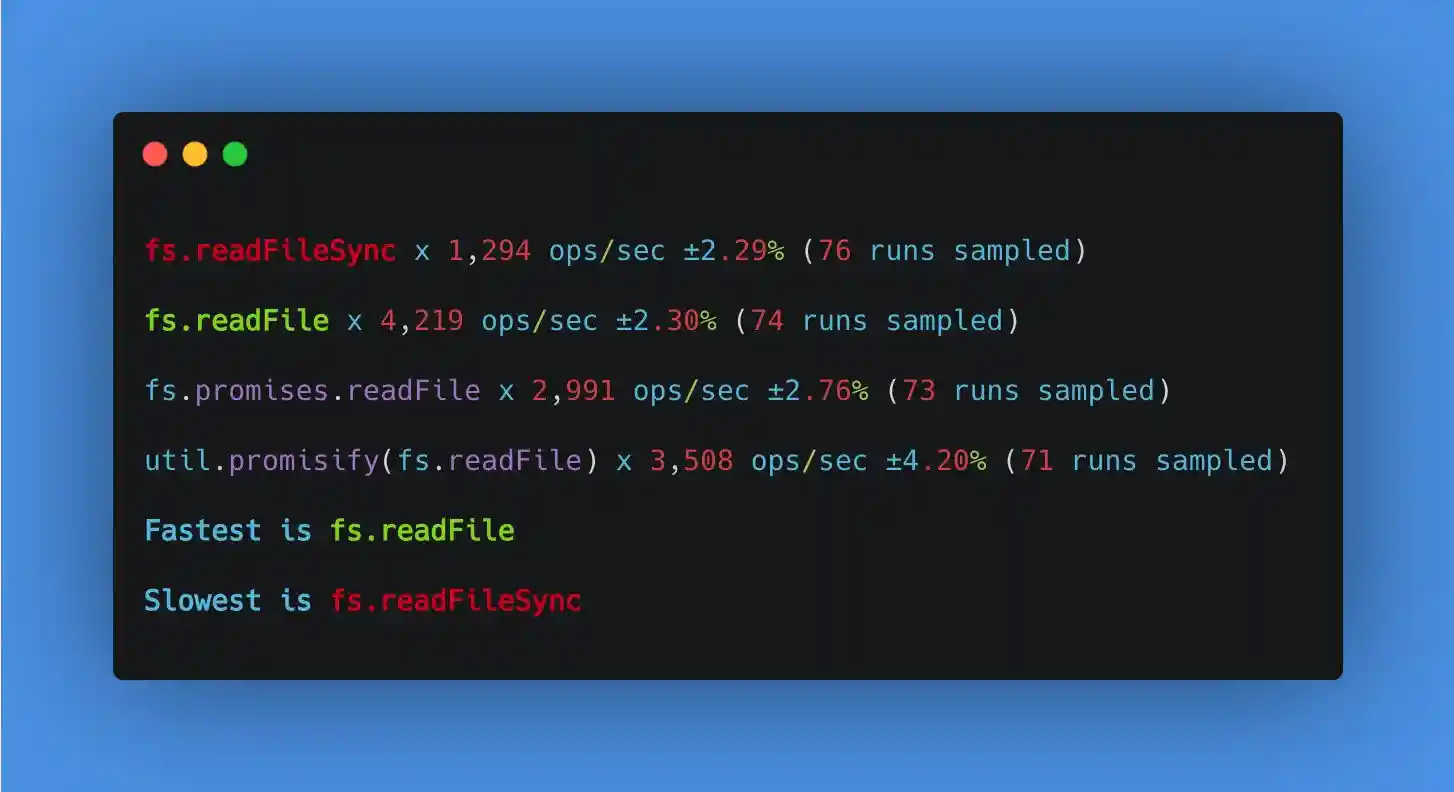

fs.promises.readFile is 40% slower than fs.readFile

- Version: v15.10.0

- Platform: macOS 11.2.1

- Subsystem: fs

What steps will reproduce the bug?

Run this benchmark on a 1 MB file (big.file):

```js
const Benchmark = require("benchmark");
const fs = require("fs");
const path = require("path");
const chalk = require("chalk"); // chalk@4.x; chalk@5+ is published as ESM only
const util = require("util");

const promisified = util.promisify(fs.readFile);
const bigFilepath = path.resolve(__dirname, "./big.file");
const suite = new Benchmark.Suite("fs");

// Every case is deferred: a cycle ends when defer.resolve() is called.
suite
  .add(
    "fs.readFileSync",
    (defer) => {
      // Only this case decodes to a string; the async cases return a Buffer.
      fs.readFileSync(bigFilepath, "utf8");
      defer.resolve();
    },
    { defer: true }
  )
  .add(
    "fs.readFile",
    (defer) => {
      fs.readFile(bigFilepath, (err) => {
        if (err) throw err;
        defer.resolve();
      });
    },
    { defer: true }
  )
  .add(
    "fs.promises.readFile",
    (defer) => {
      fs.promises.readFile(bigFilepath).then(() => {
        defer.resolve();
      });
    },
    { defer: true }
  )
  .add(
    "util.promisify(fs.readFile)",
    (defer) => {
      promisified(bigFilepath).then(() => {
        defer.resolve();
      });
    },
    { defer: true }
  )
  .on("cycle", function (event) {
    console.log(String(event.target));
  })
  .on("complete", function () {
    console.log("Fastest is " + chalk.green(this.filter("fastest").map("name")));
    console.log("Slowest is " + chalk.red(this.filter("slowest").map("name")));
  })
  .run({ async: true });
```

To create a 1 MB file (fs.promises.readFile is ~40% slower than fs.readFile at this size):

```sh
dd if=/dev/zero of=big.file count=1024 bs=1024
```

To create a 20 KB file (~55% slower at this size):

```sh
dd if=/dev/zero of=big.file count=20 bs=1024
```
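As a cross-check that does not depend on benchmark or chalk, the two async APIs can also be timed directly. This is a sketch: `compareReadFile`, `timeReads` and `readFileCallback` are hypothetical helpers, not part of the original report. It times sequential awaited reads with `performance.now()` from `perf_hooks`, and wraps the callback-based `fs.readFile` in a Promise so both APIs run in the same loop, assuming the wrapper's own cost is small next to the read itself.

```js
const fs = require("fs");
const { performance } = require("perf_hooks");

// Callback-based fs.readFile wrapped in a Promise so both APIs can be awaited
// in the same loop (the wrapper's own overhead is assumed to be small).
function readFileCallback(file) {
  return new Promise((resolve, reject) => {
    fs.readFile(file, (err, data) => (err ? reject(err) : resolve(data)));
  });
}

// Run `read` sequentially `iterations` times and report operations per second.
async function timeReads(label, read, iterations) {
  const start = performance.now();
  for (let i = 0; i < iterations; i++) {
    await read();
  }
  const elapsedMs = performance.now() - start;
  return { label, opsPerSec: (iterations / elapsedMs) * 1000 };
}

// Time fs.readFile and fs.promises.readFile on the same file and print both.
async function compareReadFile(file, iterations = 200) {
  // One untimed warm-up read per API.
  await readFileCallback(file);
  await fs.promises.readFile(file);
  const results = [
    await timeReads("fs.readFile", () => readFileCallback(file), iterations),
    await timeReads("fs.promises.readFile", () => fs.promises.readFile(file), iterations),
  ];
  for (const { label, opsPerSec } of results) {
    console.log(`${label}: ${opsPerSec.toFixed(1)} ops/sec`);
  }
  return results;
}
```

For example, `compareReadFile(require("path").resolve(__dirname, "big.file"))`. It reports only a mean over sequential reads, with no variance or statistical checks, so treat it as a sanity check on the direction of the gap rather than a replacement for the benchmark.js numbers; swapping the order of the two measurements is a cheap way to check for warm-up bias.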

How often does it reproduce? Is there a required condition?

Always.

What is the expected behavior?

fs.promises.readFile should perform similarly to fs.readFile.

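What do you see instead?

fs.promises.readFile is consistently slower than fs.readFile: about 40% slower on the 1 MB file and about 55% slower on the 20 KB file.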

Additional information

I suspect the cause is right here: https://github.com/nodejs/node/blob/master/lib/internal/fs/promises.js#L319-L339

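Instead of allocating a new Buffer for every chunk, collecting the chunks in a temporary array, and joining them with Buffer.concat at the end, it could fstat the file, allocate a single Buffer of that size, and read directly into it. That would avoid the per-chunk allocations and the extra copy that Buffer.concat makes.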
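The two strategies can be contrasted with a minimal sketch that uses only the public FileHandle API (`fs.promises.open`, `filehandle.stat`, `filehandle.read`). `readFileChunked` is a simplified stand-in for the per-chunk pattern described above, not Node's actual source, and `CHUNK_SIZE` is a hypothetical value. `readFileSingleBuffer` illustrates the proposed single-allocation approach, assuming a regular file whose size does not grow between `stat()` and the reads.

```js
const fs = require("fs");

// Hypothetical chunk size for this sketch; Node's internal value may differ.
const CHUNK_SIZE = 16 * 1024;

// Per-chunk pattern: a new Buffer for every read, kept in a temporary array,
// then copied once more into a single Buffer by Buffer.concat.
async function readFileChunked(file) {
  const handle = await fs.promises.open(file, "r");
  try {
    const chunks = [];
    let total = 0;
    for (;;) {
      const chunk = Buffer.allocUnsafe(CHUNK_SIZE);
      const { bytesRead } = await handle.read(chunk, 0, CHUNK_SIZE, null);
      if (bytesRead === 0) break; // EOF
      chunks.push(chunk.subarray(0, bytesRead));
      total += bytesRead;
    }
    return Buffer.concat(chunks, total);
  } finally {
    await handle.close();
  }
}

// Single-buffer alternative: size one Buffer from fstat and read straight into it.
// Assumes a regular file; files that report size 0 (pipes, some /proc entries)
// or that grow while being read would still need a chunked fallback.
async function readFileSingleBuffer(file) {
  const handle = await fs.promises.open(file, "r");
  try {
    const { size } = await handle.stat();
    // allocUnsafe skips zero-filling; only bytes written by read() are returned.
    const buffer = Buffer.allocUnsafe(size);
    let offset = 0;
    while (offset < size) {
      const { bytesRead } = await handle.read(buffer, offset, size - offset, null);
      if (bytesRead === 0) break; // file shrank after stat()
      offset += bytesRead;
    }
    return offset === size ? buffer : buffer.subarray(0, offset);
  } finally {
    await handle.close();
  }
}
```

Besides skipping the per-chunk allocations and the final Buffer.concat copy, the single-buffer version typically needs far fewer `read()` calls for a regular file, and each of those calls is a separate asynchronous round trip.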