GitHub - wb-droid/DeepLearningStepByStep

This is a summary of projects that I developed while studying Deep Learning.

There are many frameworks and libraries out there. Fastai is a good starting point. Huggingface has a huge number of models ready for use. From-scratch projects are very good for learning the fundamentals.

There are so many ML concepts, designs and models to learn and try. I hope to grasp this revolutionary technology and make use of AI to solve real-world problems.

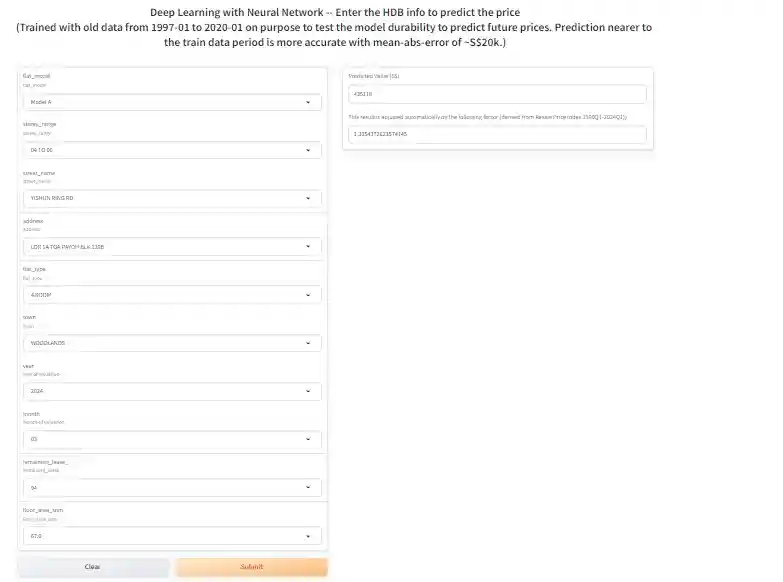

HDB price predictor

There are some very good existing HDB price predictor projects using RandomForests. I tried something different -- training a neural network to predict HDB prices. I only trained with a small portion of old data, from 1997-01 to 2020-01, for evaluation. The result is pretty good, with a mean absolute error of S$14k. For predictions after the training period, the mean absolute error is S$20k. When using this model to predict current prices at 2024-03, after adjusting by the Resale Price Index, the predicted price has a somewhat higher mean absolute error of S$37k.

Try the model at Huggingface Spaces: HDB price predictor.

Data analysis, model, training and evaluation details can be found in this Jupyter notebook.

References:

This model uses fastai's framework.

There is a very good Exploratory Data Analysis on the HDB data by teyang-lau. His model was built with Linear Regression and RandomForest.

Data is from data.gov.sg.

Chinese poem generator

NLP Transformer models are very popular. There are many existing implementations and pretrained models. Creating one from scratch is good learning and practice. To keep it manageable and useful, the target is a Chinese poem generator.

a) A basic MLP model from scratch.

Data is from https://github.com/Werneror/Poetry.

First, I created a Chinese character-level tokenizer. Then I built a simple model with just token embedding, position embedding and an MLP.

Model/training details can be found here.
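A character-level tokenizer of this kind can be sketched in a few lines. This is a minimal illustration, not the project's actual code: the class name `CharTokenizer` and its methods are hypothetical, and the toy corpus is just one of the generated lines quoted in this section.

```python
class CharTokenizer:
    """Character-level tokenizer: one integer id per distinct character."""

    def __init__(self, corpus):
        # Sort so that id assignment is deterministic for a given corpus.
        self.chars = sorted(set(corpus))
        self.stoi = {ch: i for i, ch in enumerate(self.chars)}
        self.itos = {i: ch for ch, i in self.stoi.items()}

    @property
    def vocab_size(self):
        return len(self.chars)

    def encode(self, text):
        # Raises KeyError for characters that were not in the corpus.
        return [self.stoi[ch] for ch in text]

    def decode(self, ids):
        return "".join(self.itos[i] for i in ids)


# Toy corpus (assumption: the real vocabulary is built from the full poetry dataset).
corpus = "终南汞懒飞收。俗始闻夜门。谁常波漫春"
tok = CharTokenizer(corpus)
ids = tok.encode("终南")
```

Because every Chinese character is its own token, the vocabulary is large but sequences stay short, which suits short poems.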

After training for a while, the following can be generated.

generate('终南') -- '终南汞懒飞收。俗始闻夜门。谁常波漫春'

generate('灵者') -- '灵者轩愁。月看曲,贱朱光受,书初去雨'

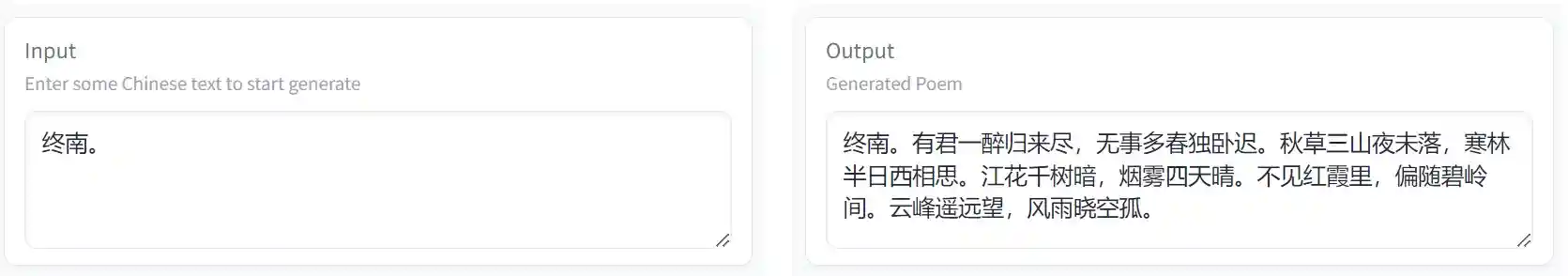

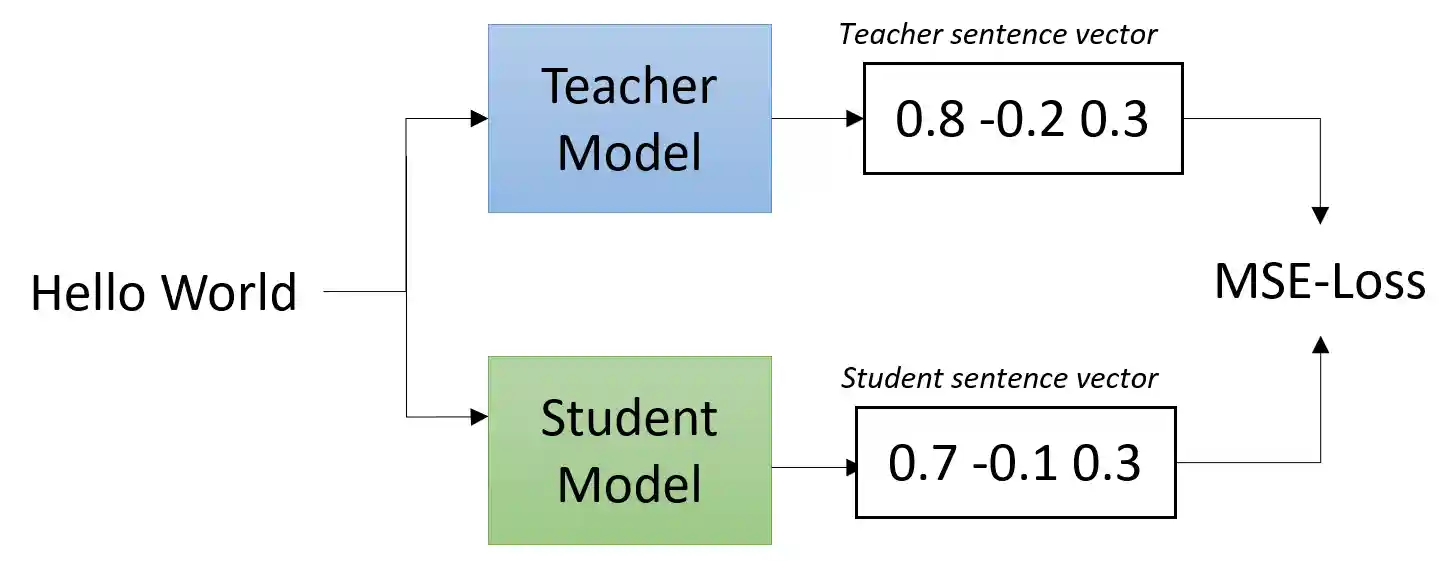

b) A GPT2-like model from scratch.

This follows the original GPT2 design and the "Attention is all you need" paper. On top of the MLP model above, it adds all the critical components such as causal self-attention, layer norm, dropout, skip connections, etc. After training for a while, the following nice poems can be generated.
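To illustrate what "causal" means in causal self-attention, here is a small sketch in plain Python rather than the tensor code a real model would use. The function name `causal_attention_weights` is hypothetical. Each position's softmax only covers itself and earlier positions, so a token never attends to the future.

```python
import math


def causal_attention_weights(scores):
    """Row-wise softmax over scores[i][j], with positions j > i masked out."""
    weights = []
    for i, row in enumerate(scores):
        visible = row[: i + 1]            # token i may only see tokens 0..i
        m = max(visible)                  # subtract the max for numerical stability
        exps = [math.exp(s - m) for s in visible]
        total = sum(exps)
        # Masked (future) positions get exactly zero weight.
        weights.append([e / total for e in exps] + [0.0] * (len(row) - i - 1))
    return weights


# With equal raw scores, each token spreads its attention evenly over the past.
scores = [[0.0, 0.0, 0.0] for _ in range(3)]
w = causal_attention_weights(scores)
```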

generate('终南') -- '终南岸,水碧绿槐无处色。云雨初寒落月,江风满天。秋景遥,夜深烟暮春。一望青山里,千嶂孤城下,何远近东流。古人不见长空愁,万般心生泪难尽。'

generate('灵者') -- '灵者,寒暑气凝空濛长。风雨如霜月,万顷不闻钟客归。白纻初行尽柳枝,黄花满衣无愁懒。春色,残红芳兰深,一声兮,九陌上,相思君王。'

Model and training details can be found in https://github.com/wb-droid/myGPT/tree/main/GPT2_like.

Try it at Huggingface Space: https://huggingface.co/spaces/wb-droid/myGPT.

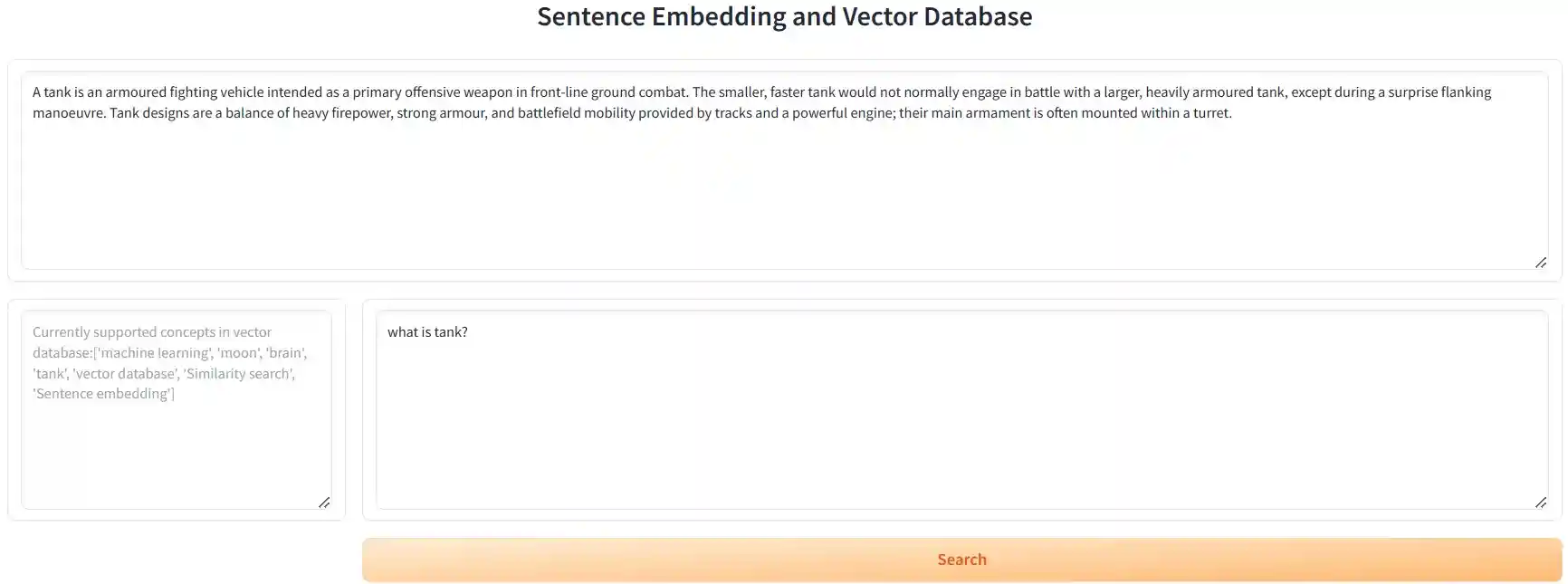

Sentence Embedding, Vector Database and Semantic Search

Besides being useful in semantic search applications, this project is also a good foundation for the Retrieval Augmented Generation app I plan to build later. It is also very similar to the CLIP model, which performs contrastive training on image and text embeddings.

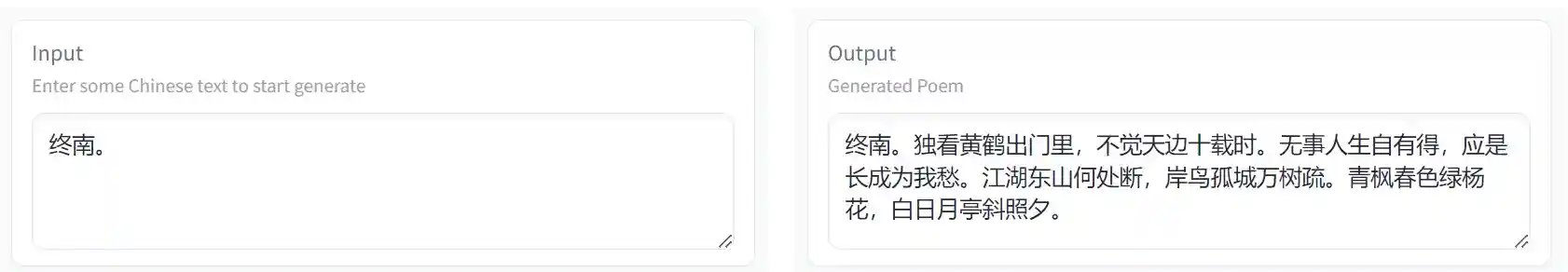

a) Build a text embedding model with "BERT + mean pooling".

b) Build a contrastive training model following the paper Sentence-BERT: Sentence Embeddings using Siamese BERT-Networks. Train it to improve semantic search accuracy.

c) Then use the trained model to implement a vector database (using data from a few wiki pages, chunked by sentence). It supports cosine similarity for similarity search.

When testing with the model untrained, the search is not accurate.

search_document("what is BERT?") returns Research in similarity search is dominated by the inherent problems of searching over complex objects., etc.

When testing with the trained model, the search results improve a lot.

search_document("what is BERT?") returns In practice however, BERT's sentence embedding with the [CLS] token achieves poor performance, often worse than simply averaging non-contextual word embeddings., etc.

More details on the model, training and inference can be found here.
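The mean pooling from step a) and the cosine similarity search from step c) can be sketched as follows. This is a simplified illustration in plain Python: the function names are hypothetical, and the 2-dimensional vectors stand in for real BERT outputs.

```python
import math


def mean_pool(token_vectors, attention_mask):
    """Average the token vectors whose mask is 1, ignoring padding positions."""
    dim = len(token_vectors[0])
    kept = [v for v, m in zip(token_vectors, attention_mask) if m]
    return [sum(v[d] for v in kept) / len(kept) for d in range(dim)]


def cosine_similarity(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def search(query_vec, store, top_k=2):
    """Return the top_k (score, sentence) pairs, best match first."""
    scored = [(cosine_similarity(query_vec, vec), text) for text, vec in store]
    return sorted(scored, key=lambda pair: pair[0], reverse=True)[:top_k]


# Toy vector store: (sentence, embedding) pairs with made-up 2-d embeddings.
store = [
    ("sentence about BERT", [1.0, 0.1]),
    ("sentence about search", [0.1, 1.0]),
]
# The third token is padding (mask 0), so it must not affect the pooled vector.
query = mean_pool([[1.0, 0.0], [0.8, 0.2], [9.0, 9.0]], [1, 1, 0])
results = search(query, store, top_k=1)
```

A real vector database would precompute and normalize the stored embeddings so that each search reduces to a matrix-vector product.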

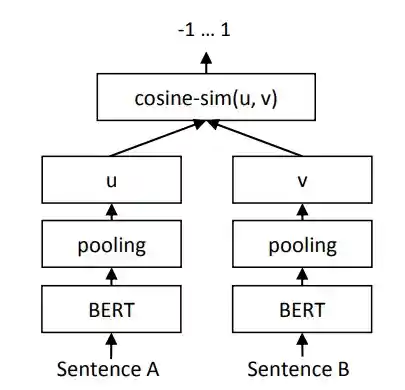

d) This model can be made faster and lighter. Embedding dimension reduction can help to reduce embedding storage but does not reduce model memory. I already used quantization before on ChatGLM. So this time I will try model distillation, which uses the bigger model (teacher) to train a smaller model (student), as illustrated below.

This diagram source is from sentence-transformers.

The student model is built by reducing the BertEncoder from 12 BertLayers to 6. The model size drops from 430MB to 260MB, a reduction of about 40%. Training is implemented as shown in the diagram. After training, the student model performs similarly to the teacher, with matching top-2 similarity search results. More details on the models, training and inference can be found here.
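The core of this kind of distillation is a loss that pulls the student's sentence embeddings toward the teacher's. The sketch below assumes a mean squared error objective, which is what the sentence-transformers distillation setup uses. Whether the project's training uses exactly this loss is an assumption, and `mse_distillation_loss` is a hypothetical name.

```python
def mse_distillation_loss(teacher_embeddings, student_embeddings):
    """Mean squared error between teacher and student sentence embeddings."""
    total, count = 0.0, 0
    for t_vec, s_vec in zip(teacher_embeddings, student_embeddings):
        for t, s in zip(t_vec, s_vec):
            total += (t - s) ** 2
            count += 1
    return total / count


# Toy batch of two sentences with 2-d embeddings (made-up numbers).
teacher = [[1.0, 0.0], [0.0, 1.0]]
student = [[0.5, 0.0], [0.0, 1.0]]
loss = mse_distillation_loss(teacher, student)
```

Only the student receives gradients from this loss. The teacher is kept frozen and simply provides target embeddings.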

e) Build a huggingface space app to demo this model. An example vector database is pre-built with concepts searched from wiki, by this script. User enters a question related to the concept. The app will use the question to do semantic search in the vector database and return the result.

Try it at Huggingface Space here.

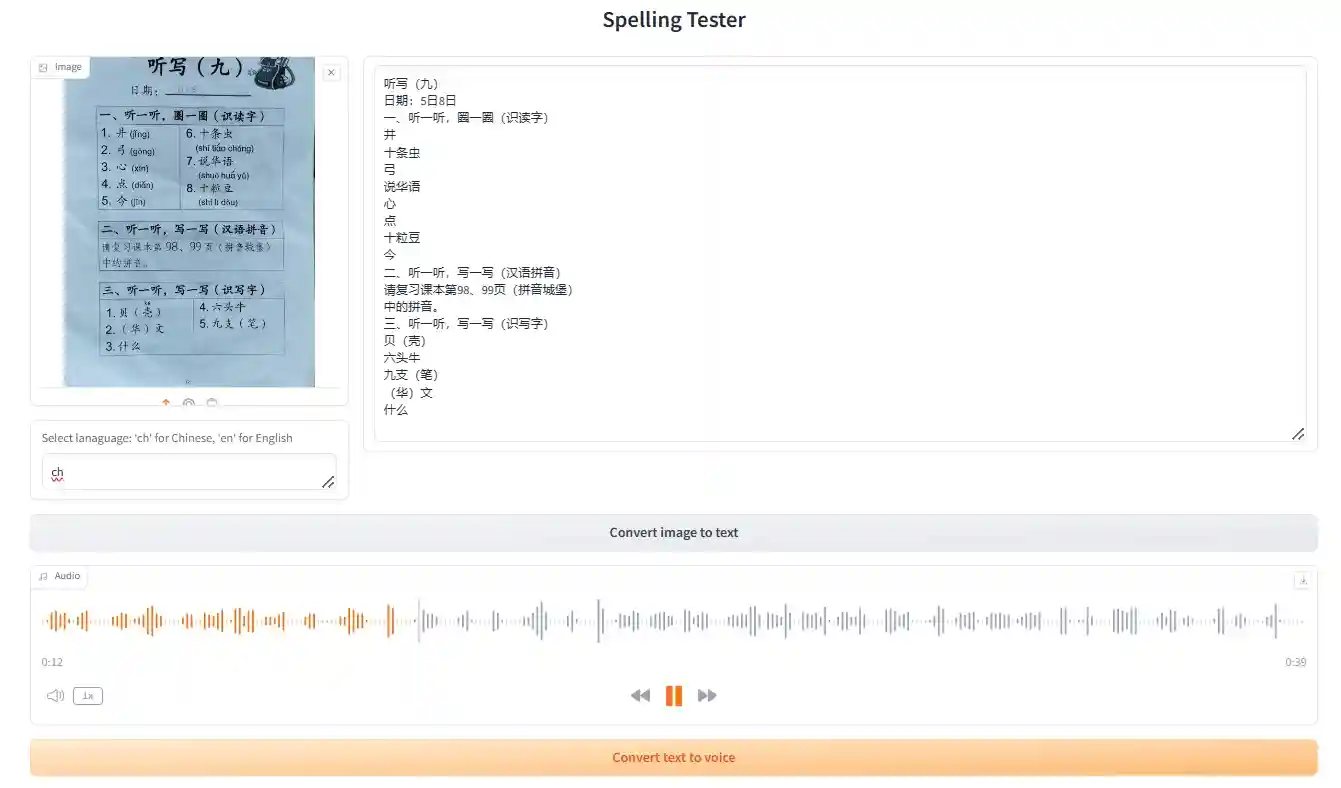

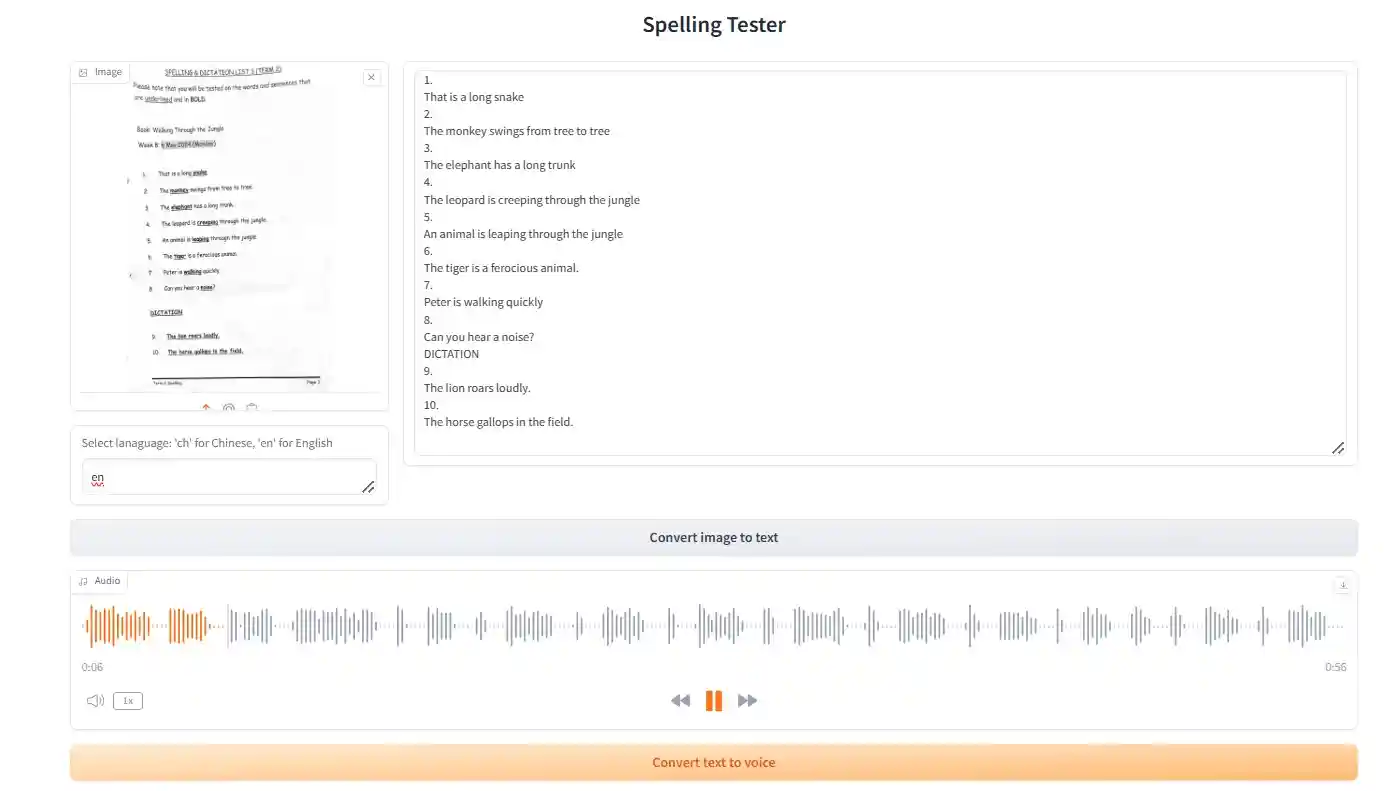

Spelling Tester App

I often need to help my son prepare for his school spelling test, reading the words/dictations to him multiple times. This is a task better done by AI, to save me some time and to have better pronunciation than mine. My son can also practise when I'm not around.

a) The main component is text-to-voice. I evaluated bark first. It's a GPT-style transformer model similar to the myGPT model I did above. The voice generation procedure and result can be found here. The generated voice is pretty good. It requires a lot of GPU VRAM and takes a bit of time to generate. The voice generated is natural but not studio quality.

I also tested edge-tts. It uses Microsoft Edge's online text-to-speech service, so the model behind it is not clear to me. The voice generation procedure and result can be found here. The generated voice is of better quality but not as natural. It does not use local resources and generates faster. But it needs internet access, and depends on the Microsoft service, which may not be available/free forever.

My son is happy with the mp3 generated as he can pause, rewind or adjust speed in the mp3 player. He now uses it to prepare for the next Chinese spelling test by himself.

b) The next step is to use OCR to convert a scanned image of the spelling list to text, to save the effort of typing. Optical Character Recognition (OCR) is mature, with high accuracy. There are many open-source solutions.

I first tested EasyOCR. Its pipeline makes use of several models, such as CRAFT for detection, ResNet for feature extraction, LSTM for sequence labeling and CTC for decoding. The detection result has some errors as shown here.

Next I tested PaddleOCR. Its pipeline uses even more models. The result is much better as shown here. All Chinese characters and English letters are recognized correctly. So I will choose PaddleOCR.

c) Create an app to do everything together: image-to-text, text-to-voice, and playing the generated audio to run a mock test. Try it at Huggingface Space here.

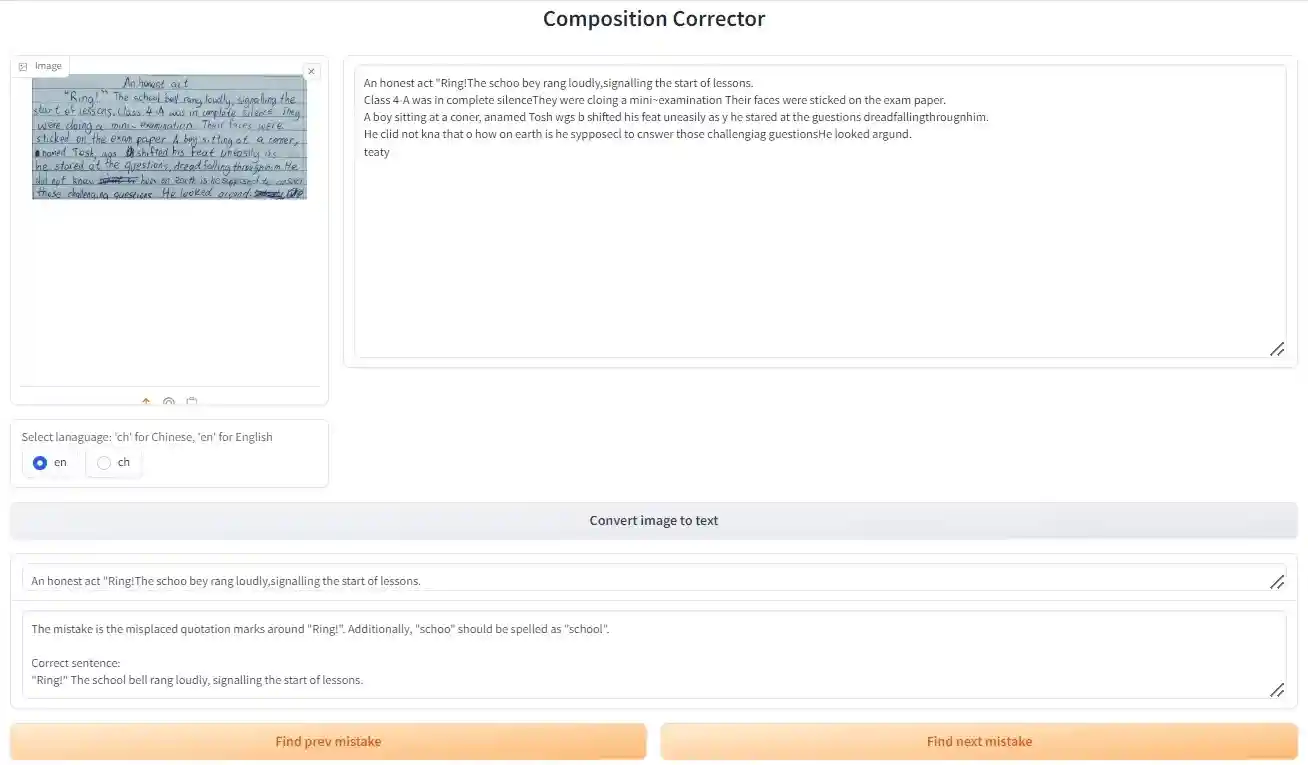

Composition Corrector App

Composition is another area where my son needs extra help. But I'm not good at it either. LLMs are already very advanced and should do a better job than me. This "Composition Corrector app" is designed to find mistakes, suggest corrections and make improvements.

a) LLM setup

The easiest way to use a good LLM in an application is to use the ChatGPT API. But it's not free and not open source.

Another way is to run an LLM such as Llama or ChatGLM locally. With my small GPU, this is still doable with some extra effort, as shown in the "model quantization" section below.

But I'm choosing a third way -- Huggingface's Inference API. It has the same effect as running pretrained models locally, but it is free, more flexible and easier to maintain.

The app will use the latest and greatest Llama 3 as the language model.

b) Prompt Engineering

System prompt is "You are a helpful and honest assistant. Please, respond concisely and truthfully."

I first search for sentences with errors, using this prompt -- "Only answer Yes or No. Is there grammatical or logical mistake in the sentence:".

Then, the details of the mistake and its correction are queried with this prompt -- "What is the mistake and what is the correct sentence?"
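The two-step prompting above could be wired together roughly as follows. This is a sketch under assumptions: `ask_llm` is a hypothetical callable that takes a chat-style message list and returns the model's reply as a string, and keeping the first exchange in the history for the follow-up question is one possible design rather than a confirmed detail of the app.

```python
SYSTEM_PROMPT = "You are a helpful and honest assistant. Please, respond concisely and truthfully."
DETECT_PROMPT = "Only answer Yes or No. Is there grammatical or logical mistake in the sentence:"
EXPLAIN_PROMPT = "What is the mistake and what is the correct sentence?"


def check_sentence(sentence, ask_llm):
    """Run the two-step check; returns the explanation, or None if no mistake is reported."""
    messages = [
        {"role": "system", "content": SYSTEM_PROMPT},
        {"role": "user", "content": f"{DETECT_PROMPT} {sentence}"},
    ]
    verdict = ask_llm(messages)
    if not verdict.strip().lower().startswith("yes"):
        return None
    # Keep the first exchange in the history so the follow-up question has context.
    messages.append({"role": "assistant", "content": verdict})
    messages.append({"role": "user", "content": EXPLAIN_PROMPT})
    return ask_llm(messages)


def fake_llm(messages):
    """Stand-in for a real model call, used only to exercise the control flow."""
    return "Yes" if len(messages) == 2 else "stub explanation"


explanation = check_sentence("He go to school.", fake_llm)
```

Asking for a strict Yes/No first keeps the expensive explanation query for sentences that actually need it.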

c) Create the App to put everything together: image-to-text, then text queries to the LLM to find mistakes and get corrections. Indeed, it can find more mistakes than I can, and the suggested corrections are better than what I usually come up with. Also, after making corrections, make sure to run the text through the Corrector again. It may still detect a few extra errors that are more subtle.

Try it at Huggingface Space here.

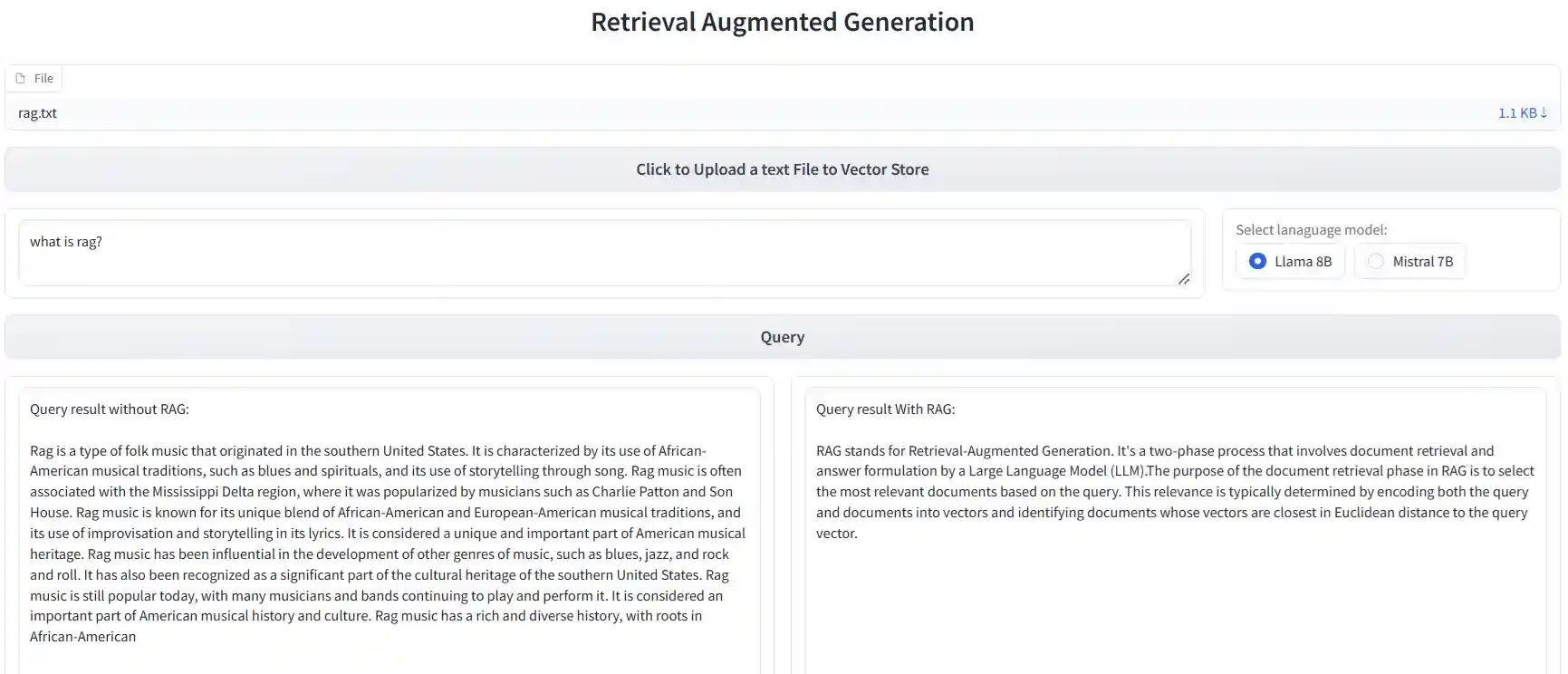

Retrieval Augmented Generation App

An LLM can learn new concepts and be customized with prompt engineering, fine-tuning, and Retrieval Augmented Generation (RAG). RAG can be built with the from-scratch embedding model and vector store I implemented earlier. However, for learning, this time I will use the LangChain framework, which is more flexible and scalable.

For this App, the following components are used. VectorStore - FAISS. Embedding - "BAAI/bge-base-en-v1.5". The LLM can be either mistral or llama. Queries with RAG and without RAG are run together, and the results are displayed side by side to showcase the great improvement achieved with RAG.

Try it at Huggingface Space here.

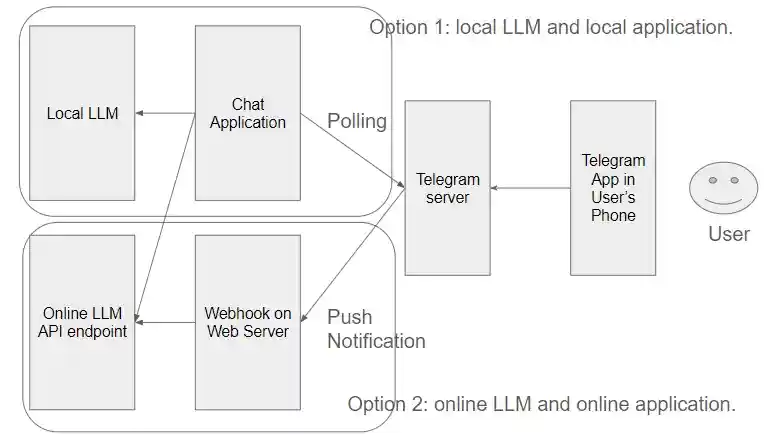

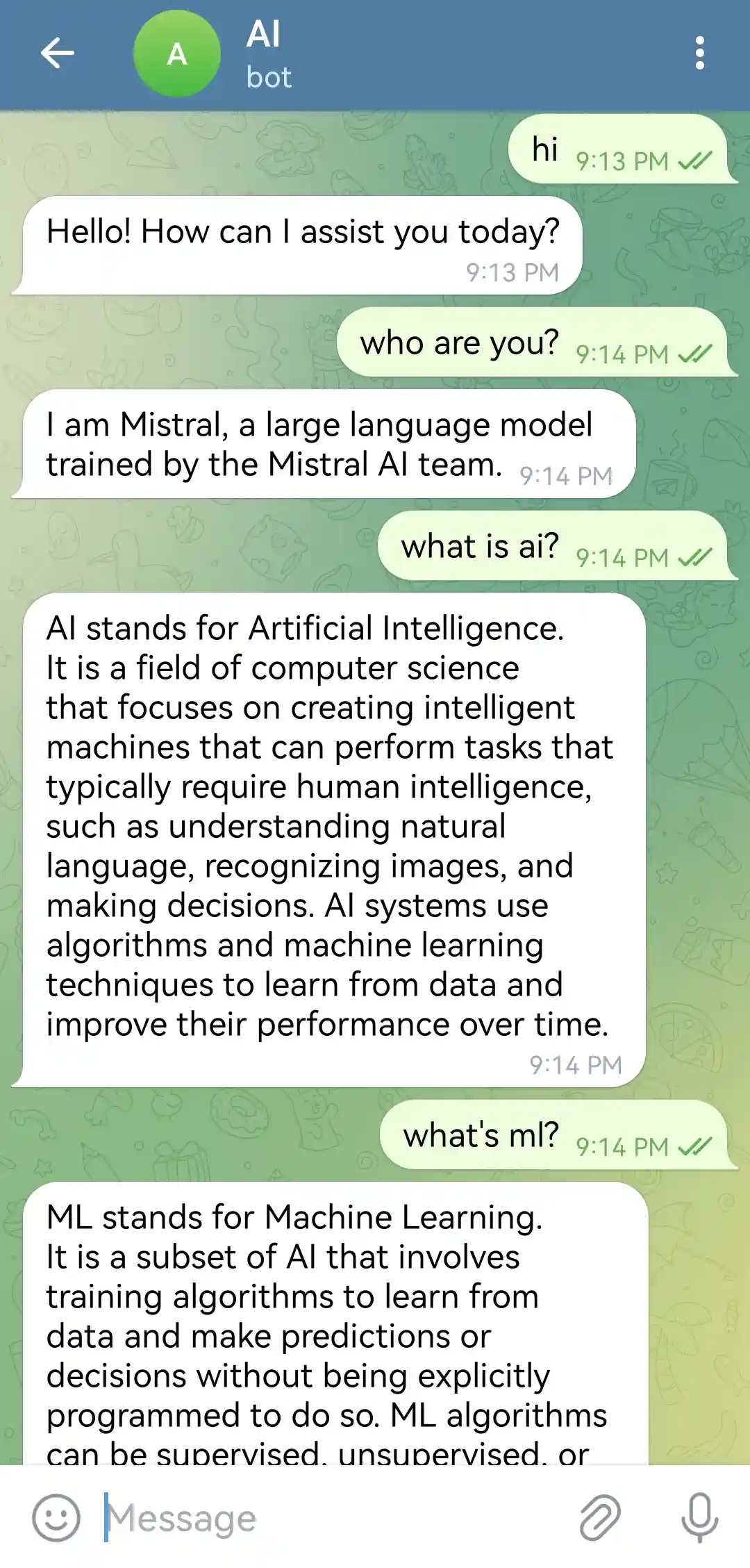

Telegram Chat Bot with Local LLM/application

The previously developed LLM generation/chat apps are web based. A chat bot in a phone app such as Telegram is more accessible and has more options to customize. There are two ways to build such a chat bot, as shown below. A local LLM/application is easier to deploy, gives the developer full control, and is more secure. On the other hand, an online LLM/application makes it easier to use multiple LLMs and does not require local resources or maintenance. This project uses the first option: local LLM/application. The next project uses the second option.

The local LLM can be set up similarly to my previous projects. But this time, I will use text-generation-webui for its maturity and features. Use the command below to enable the API endpoint and load a quantized Mistral 7B model.

start_wsl.bat --api --model TheBloke_Mistral-7B-Instruct-v0.1-GPTQ

The local chat application can be developed with a Telegram API library, such as python-telegram-bot. For this project, I will use chatgpt-mirai-qq-bot because it is easy to customize and full of features. Start with this config.cfg and update its bot_token with your own Telegram Bot Token (refer to https://core.telegram.org/bots). Then, run the command below.

docker run --name mirai-chatgpt-bot -v ./config.cfg:/app/config.cfg --network host lss233/chatgpt-mirai-qq-bot:browser-version

Now talk to the Mistral AI with Telegram like you talk to another person.

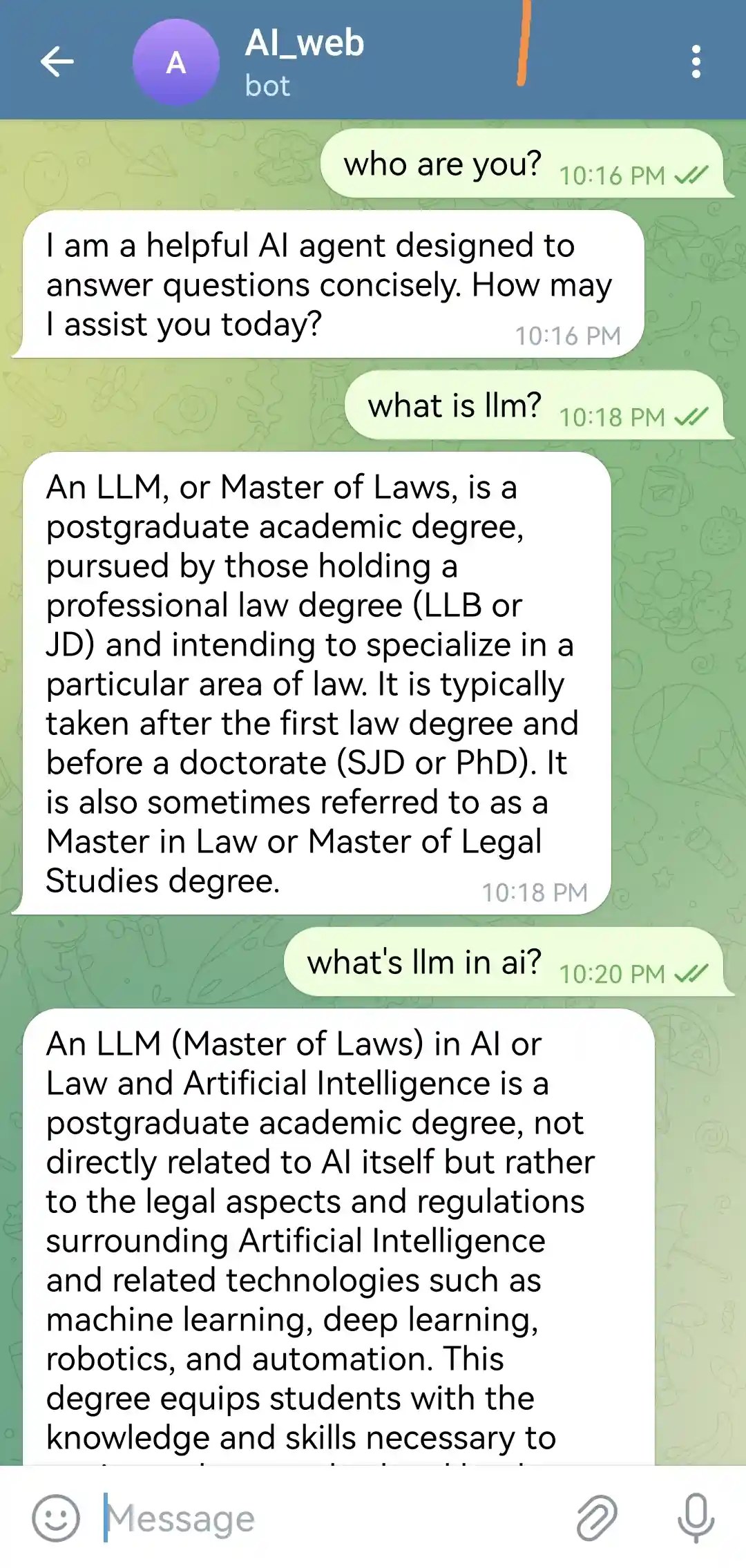

Telegram Chat Bot with Online LLM/Webhook

The previous Telegram Bot needs local resources to run the local LLM/application. To save my resources, I also tried to build the 2nd option, "Online LLM/Webhook", using only free online resources.

a) The attempt on Huggingface is only partially successful.

The Telegram webhook webserver is set up on Huggingface here: https://wb-droid-telegramwebhook.hf.space/webhook. After it's running, use the following to register this webhook with Telegram: https://api.telegram.org/bot{YOUR_TOKEN}/setWebhook?url={YOUR_WEBHOOK_ENDPOINT}. This webhook works and can receive Telegram messages. But it cannot send reply messages out, because Huggingface does not support connections to api.telegram.org and the request fails with "No address associated with hostname".
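As a small convenience, the registration URL above can be built programmatically. The sketch below only constructs the URL from the template given in the text. The helper name `set_webhook_url` is hypothetical, and `YOUR_TOKEN` is a placeholder for a real bot token.

```python
from urllib.parse import quote


def set_webhook_url(bot_token, webhook_endpoint):
    """Build the setWebhook URL for a bot token and a webhook endpoint."""
    # The endpoint travels inside a query parameter, so percent-encode it fully.
    encoded = quote(webhook_endpoint, safe="")
    return f"https://api.telegram.org/bot{bot_token}/setWebhook?url={encoded}"


url = set_webhook_url("YOUR_TOKEN", "https://wb-droid-telegramwebhook.hf.space/webhook")
```

Opening the resulting URL in a browser, or requesting it with any HTTP client, registers the webhook.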

b) Cloudflare worker works successfully.

To work around the limitation of the previous Huggingface webhook, I built another Telegram webhook as a Cloudflare worker. This webhook also calls an online Mistral LLM endpoint for text generation/chat responses. The webhook script can be found here. After registering this webhook with Telegram, I can chat with the Telegram AI bot without using any local resources, and for free.

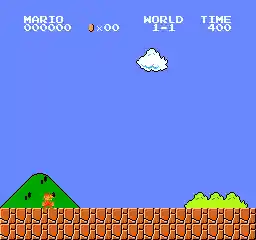

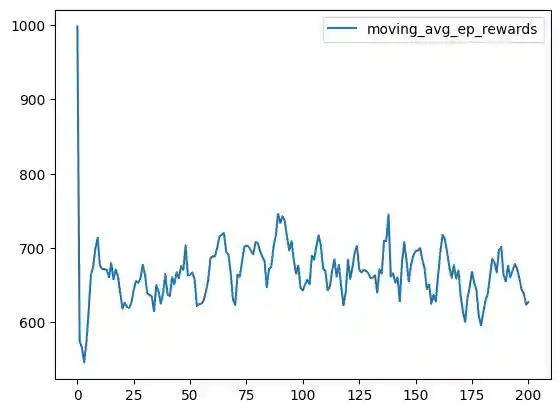

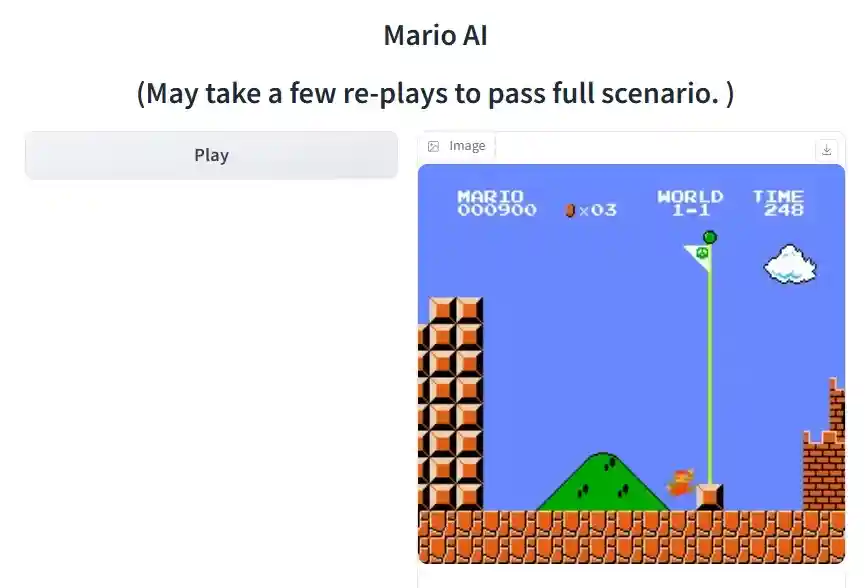

MyMarioAI with Deep Reinforcement Learning

AI can play games better than humans. There are various Mario AI implementations, such as PPO AI and DDQN AI. I like MadMario's elegant design and implementation, which uses DDQN (Double Deep Q Network). But when I tried to train it, the training did not progress very well. This diagram shows that total rewards barely improved after 8,000 episodes of training.

The author suggested 40,000 episodes of training loops to properly train the AI.

I made some adjustments: More balanced exploration and exploitation. Reduced burn-in to start training faster. Enhanced learning for Mario death cases. Weighted learning experience to focus more on newer actions/states. Then the training is faster.
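For context, the Double DQN idea behind this agent is that the online network chooses the next action while the target network evaluates it, which reduces over-estimation of Q values. The sketch below shows that target computation for a single transition in plain Python. The function name and the discount `gamma=0.9` are illustrative assumptions, and it does not include the adjustments listed above.

```python
def double_dqn_target(reward, done, next_q_online, next_q_target, gamma=0.9):
    """TD target for one transition under Double DQN."""
    if done:
        return reward  # no bootstrapping from a terminal state (e.g. Mario died)
    # The online network picks the action, the target network evaluates it.
    best_action = max(range(len(next_q_online)), key=lambda a: next_q_online[a])
    return reward + gamma * next_q_target[best_action]


# The online net prefers action 1, so the target net's value for action 1 is used.
target = double_dqn_target(1.0, False, [0.2, 0.8], [0.5, 0.3], gamma=0.9)
terminal = double_dqn_target(-15.0, True, [0.2, 0.8], [0.5, 0.3])
```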

Training and playback scripts can be found here.

A Huggingface app is built to demo the Mario AI in action. Try it here.

Variational Autoencoder

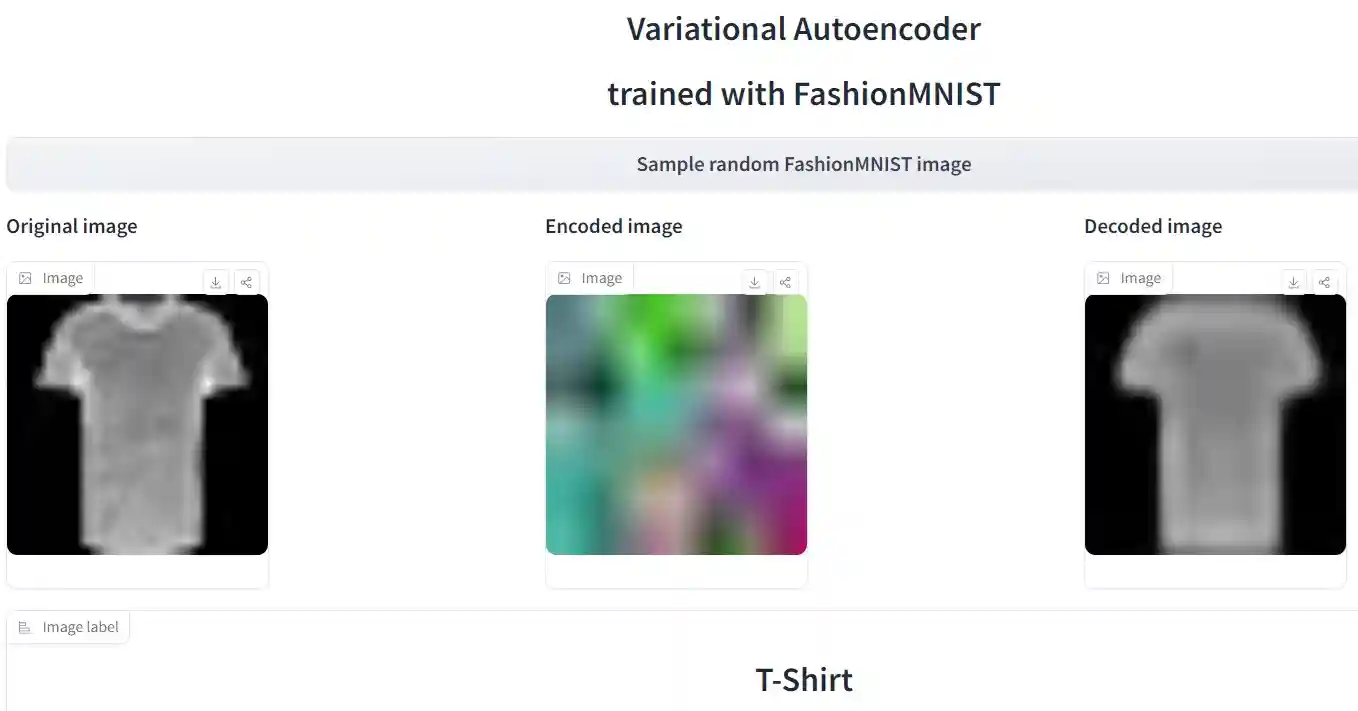

VAE (Variational Autoencoder) is one of the 3 component models of stable diffusion. After diffusion generates a new image in latent space, the VAE decodes the latent image representation back into the original image space.

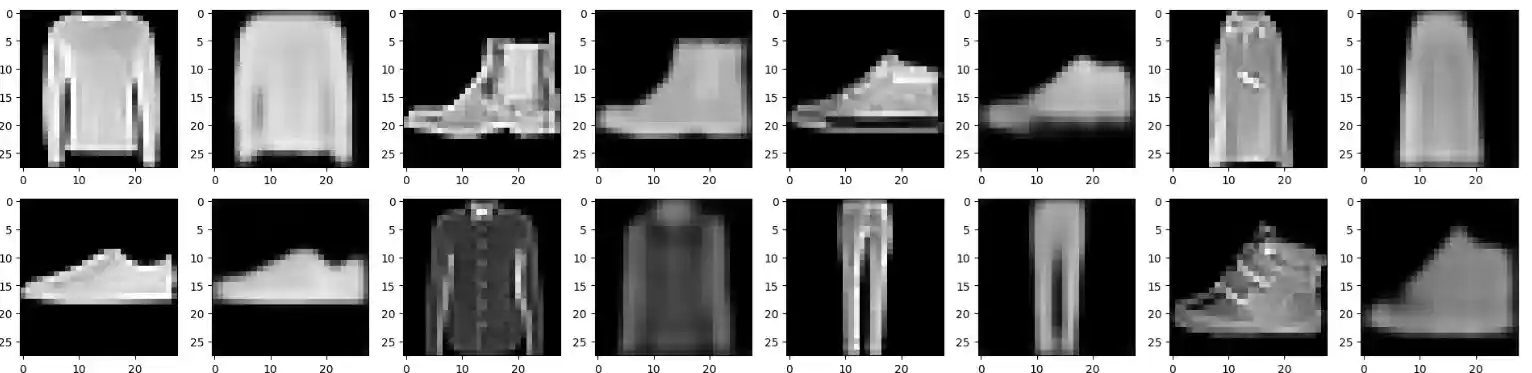

A VAE has an encoder (down sampler) and a decoder (up sampler). I implemented a simple VAE for the FashionMNIST dataset. The result below (original image and generated image side by side) shows that the VAE can capture the main features of the original images and use them to restore an image close to the original.

The model, training and inference code can be found here.
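Two ingredients that a VAE typically relies on are the reparameterization trick for sampling the latent vector and the KL term that keeps the latent distribution close to a standard normal. The sketch below shows both in plain Python. It is a generic illustration with hypothetical function names, not the project's model code.

```python
import math
import random


def reparameterize(mu, log_var, rng):
    """Sample z = mu + sigma * eps with eps ~ N(0, 1), where sigma = exp(0.5 * log_var)."""
    return [m + math.exp(0.5 * lv) * rng.gauss(0.0, 1.0) for m, lv in zip(mu, log_var)]


def kl_to_standard_normal(mu, log_var):
    """KL( N(mu, sigma^2) || N(0, 1) ), summed over the latent dimensions."""
    return -0.5 * sum(1.0 + lv - m * m - math.exp(lv) for m, lv in zip(mu, log_var))


rng = random.Random(0)  # seeded only to make the sketch repeatable
z = reparameterize([0.0, 1.0], [0.0, -2.0], rng)
kl_zero = kl_to_standard_normal([0.0, 0.0], [0.0, 0.0])
kl_shifted = kl_to_standard_normal([1.0, 0.0], [0.0, 0.0])
```

Writing the sample as `mu + sigma * eps` keeps the randomness in `eps`, so gradients can flow through `mu` and `log_var` during training.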

A Huggingface app is also built to showcase the VAE. Try it here.

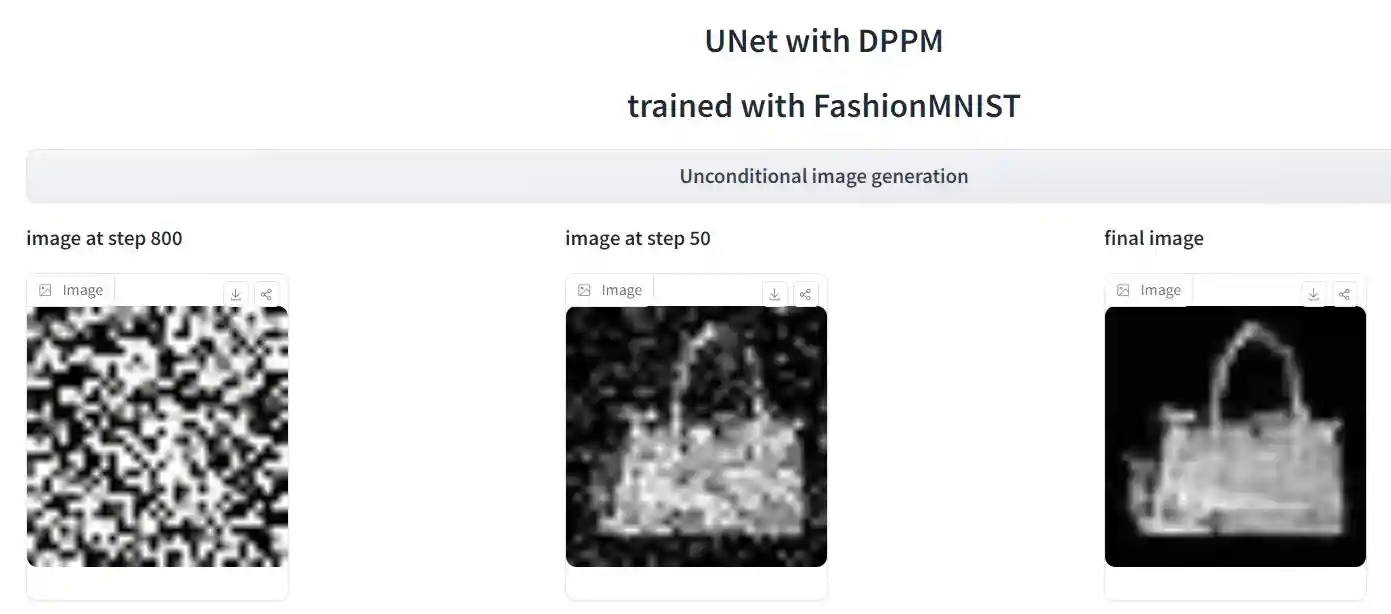

UNet with DDPM

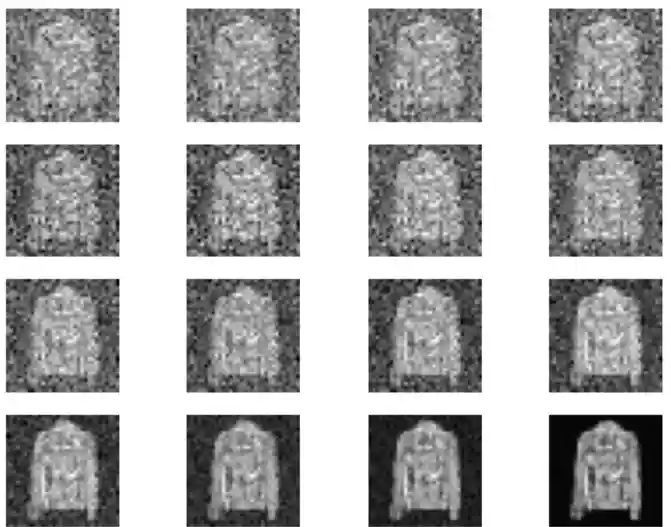

UNet with DDPM (Denoising Diffusion Probabilistic Model) is another one of the 3 component models of stable diffusion. The UNet is trained on noised images to predict the noise. Then the trained UNet is used for image generation. It goes through 1000 time steps of the denoising process to generate a new image.

I implemented a simple UNet using UNet2DModel and trained it with the FashionMNIST dataset. I tried DDPMScheduler but could not make it generate correct images. So I used fastai's DDPM sampler implementation instead. The result below shows the DDPM denoising process, which removes noise step by step to generate the final image.
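The "noisified images" used for training come from the DDPM forward process, which mixes a clean image with Gaussian noise according to a schedule. The sketch below uses the linear beta schedule from the DDPM paper (1e-4 to 0.02 over 1000 steps). Whether the project uses exactly these values is an assumption, and the function names are hypothetical.

```python
import math
import random


def linear_betas(num_steps=1000, beta_start=1e-4, beta_end=0.02):
    step = (beta_end - beta_start) / (num_steps - 1)
    return [beta_start + i * step for i in range(num_steps)]


def alpha_bars(betas):
    """Cumulative product of (1 - beta_t): how much of the signal survives at step t."""
    out, prod = [], 1.0
    for b in betas:
        prod *= 1.0 - b
        out.append(prod)
    return out


def add_noise(x0, t, abar, rng):
    """Sample x_t = sqrt(abar_t) * x0 + sqrt(1 - abar_t) * eps, returning x_t and eps."""
    eps = [rng.gauss(0.0, 1.0) for _ in x0]
    a, s = math.sqrt(abar[t]), math.sqrt(1.0 - abar[t])
    return [a * x + s * e for x, e in zip(x0, eps)], eps


abar = alpha_bars(linear_betas())
x0 = [0.5, -0.5, 1.0]  # three "pixels" standing in for an image
x_t, eps = add_noise(x0, t=999, abar=abar, rng=random.Random(0))
```

During training, the UNet receives `x_t` and `t` and is asked to predict `eps`. At the last step almost none of the original signal remains, which is why generation can start from pure noise.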

The model, training and inference code can be found here.

A Huggingface app is also built to showcase the diffusion process. Try it here.

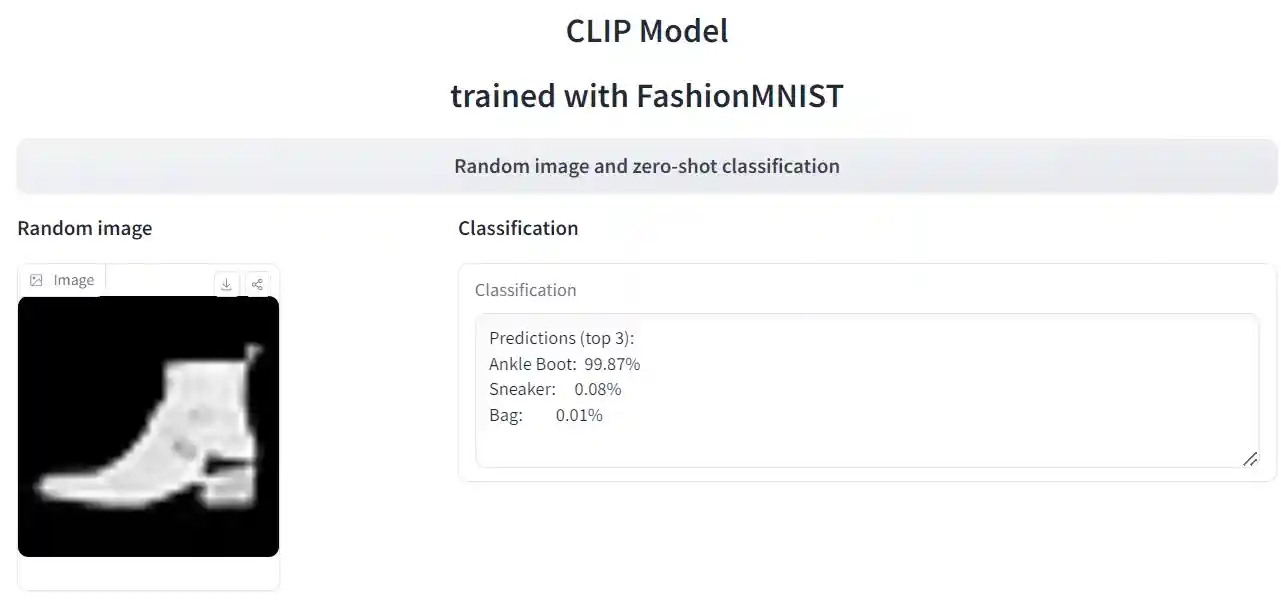

CLIP model

CLIP (Contrastive Language-Image Pretraining) is the 3rd of the 3 component models of stable diffusion. CLIP is used to align text embeddings and image embeddings in latent space. CLIP can perform zero-shot image classification/image captioning. It's also used to generate text/image embeddings that can guide image generation in diffusion.

I implemented a simple CLIP model. The image encoder reuses the VAE encoder implemented earlier. The text encoder is a label class embedding. I trained it with the FashionMNIST dataset. The zero-shot image classification achieved >90% accuracy.

The model, training and inference code can be found here.

A Huggingface app is built to showcase the CLIP model. Try it here.

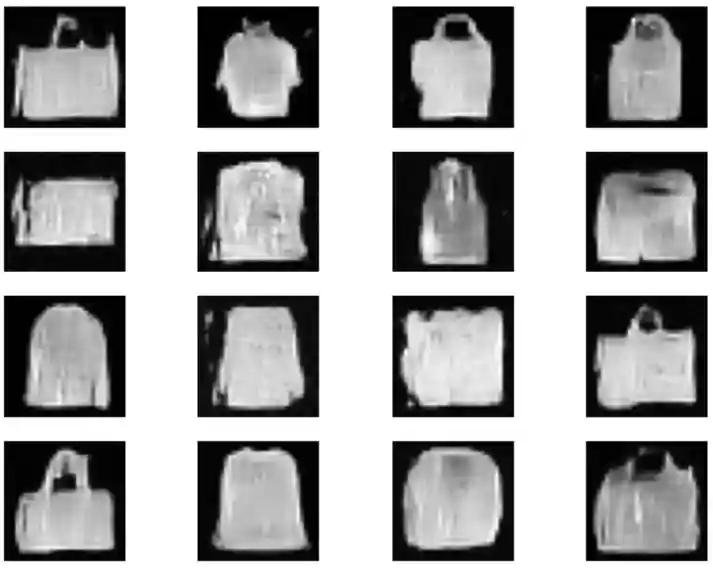

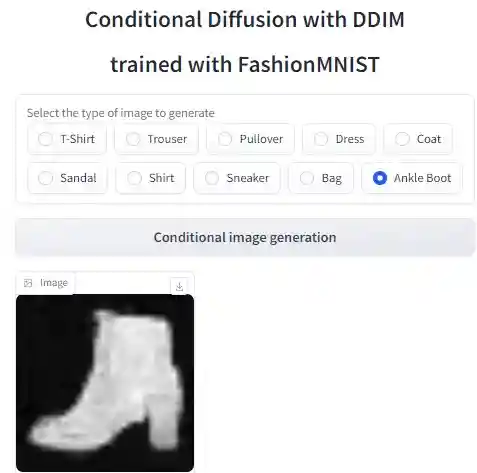

Conditional Diffusion with DDPM/DDIM

The previous unconditional diffusion randomly generates samples from the UNet model's learned distribution. A more useful thing to do is to generate a targeted image that has certain properties or concepts. Conditional diffusion can generate this kind of image, guided by a conditional embedding that is an additional input to the model.

I implemented such a model with UNet2DConditionModel and trained it with the FashionMNIST dataset. The conditional embedding is produced by simply tokenizing the image label (as a label index) and passing it through an embedding layer, so that the model can generate the type of image dictated by the input.

DDIM (Denoising Diffusion Implicit Model) is a more efficient way to generate images than DDPM. Both DDPM and DDIM generation results for "T-Shirt" are shown below.

The model, training and inference code can be found here.

Another interesting use of this model is to condition on multiple features, to generate images that have multiple properties. For example, a "Bag" that looks a bit like a "T-Shirt" can be generated this way, as shown below.

A Huggingface app is built to showcase the conditional diffusion model. Try it here.

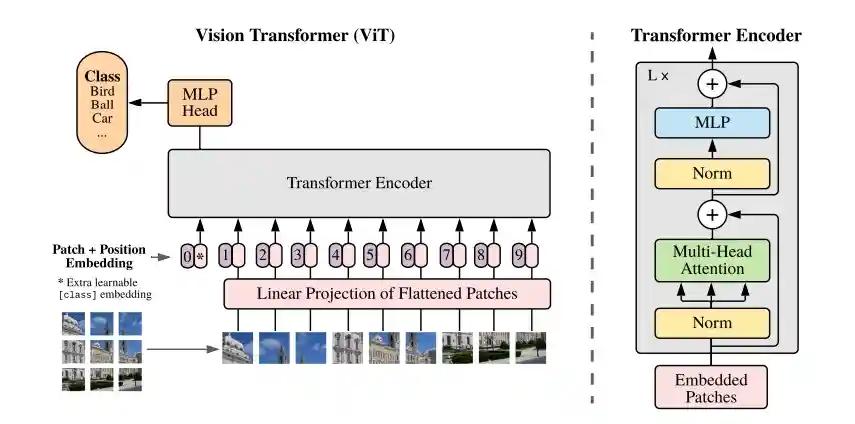

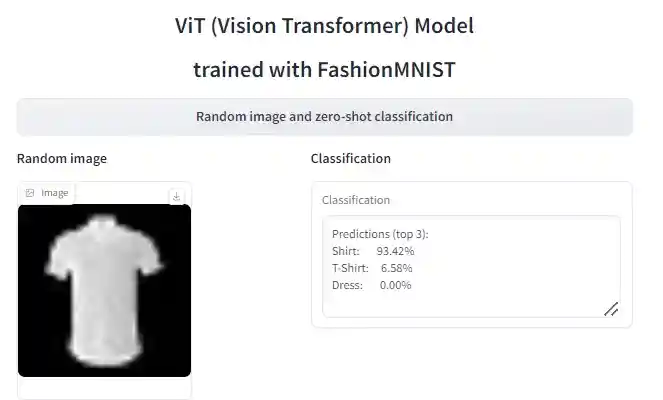

Vision Transformer

ViT (Vision Transformer) has been shown to achieve state-of-the-art performance comparable to CNNs. It reuses the transformer architecture originally used for NLP. I implemented a ViT from scratch, following the paper "TRANSFORMERS FOR IMAGE RECOGNITION AT SCALE" and its model.

The main difference from GPT (such as myGPT above) is that the input is a sequence of image patches instead of a sequence of tokens. Also, the attention mask is not needed, since relations between all patches should be discovered. Lastly, in MyViT the CLS token is replaced by mean pooling over the patches for classification. I trained MyViT with the FashionMNIST dataset and achieved 90% accuracy in image classification after 10 epochs.
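Turning an image into a "sequence of patches" can be sketched as below, using nested lists instead of tensors. The patch size of 7 is an assumption chosen because it divides the 28 x 28 FashionMNIST image evenly, and `patchify` is a hypothetical name.

```python
def patchify(image, patch_size):
    """Split an H x W image (list of rows) into flattened, non-overlapping patches."""
    height, width = len(image), len(image[0])
    if height % patch_size or width % patch_size:
        raise ValueError("image size must be a multiple of patch_size")
    patches = []
    for top in range(0, height, patch_size):          # row-major order over patches
        for left in range(0, width, patch_size):
            patch = [image[r][c]
                     for r in range(top, top + patch_size)
                     for c in range(left, left + patch_size)]
            patches.append(patch)
    return patches


# A 28 x 28 image filled with dummy values (each pixel holds its own flat index).
image = [[r * 28 + c for c in range(28)] for r in range(28)]
patches = patchify(image, patch_size=7)
```

Each flattened patch is then linearly projected to the model dimension and given a position embedding, just like a token embedding in GPT.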

The model, training and inference code can be found here.

A Huggingface app is built to showcase the ViT model. Try it here.

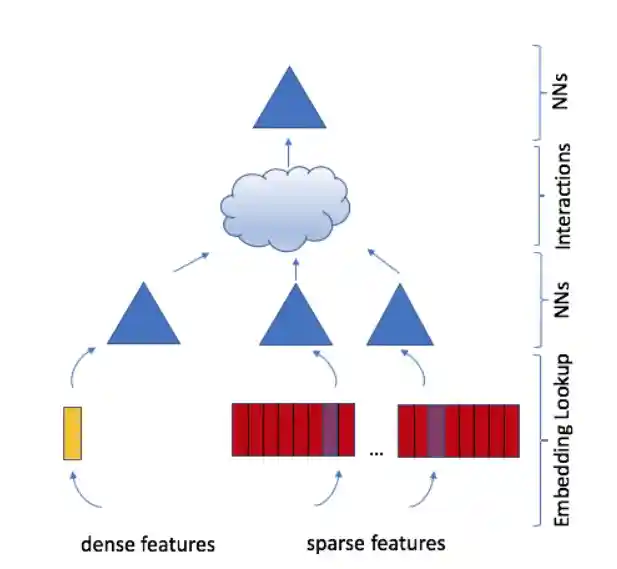

Deep Learning Recommendation Model

Facebook (Meta) AI's DLRM (Deep Learning Recommendation Model) is widely used for recommendation systems. It improves on collaborative filtering by handling multiple sparse (categorical) features and dense (continuous) features that interact with each other. I implemented a DLRM from scratch following the paper and its model below.

The model is trained with the MovieLens dataset. 3 sparse features (user, movie, genre) and 1 dense feature (timestamp) are used as input to predict the movie ratings. It was trained for 10 epochs before the model started to overfit.
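The feature interaction at the heart of DLRM can be sketched as pairwise dot products between the processed dense vector and the sparse-feature embeddings, concatenated back with the dense vector before the top MLP. The code below is a plain-Python illustration with made-up 2-d vectors and hypothetical names, not the project's implementation.

```python
from itertools import combinations


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def interact(dense_vec, sparse_vecs):
    """Concatenate the dense vector with all pairwise dot products of the feature vectors."""
    vectors = [dense_vec] + sparse_vecs
    pairwise = [dot(a, b) for a, b in combinations(vectors, 2)]
    return dense_vec + pairwise


dense = [1.0, 0.0]                                   # bottom-MLP output for the timestamp
user, movie, genre = [1.0, 1.0], [0.0, 2.0], [3.0, 0.0]
features = interact(dense, [user, movie, genre])
```

With 4 vectors there are 6 pairs, so the top MLP here would receive 2 + 6 = 8 inputs.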

The model, training and inference code can be found here.

An additional feature of the model is that model parallelism (for the embeddings) and data parallelism (for the MLPs) can be applied to different parts of the model.

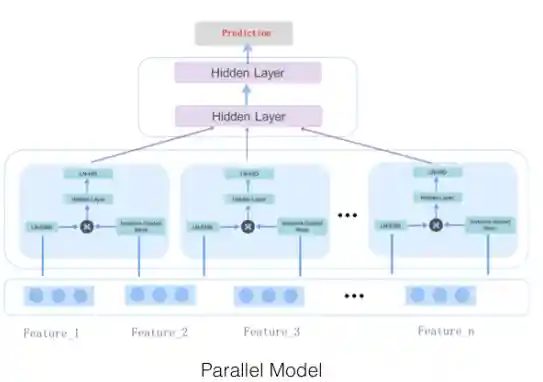

MaskNet Model

MaskNet is another recommendation model that is widely used for CTR ranking. For example, Twitter (X) uses Parallel MaskNet for its Heavy Ranker to rank tweets. Alibaba also includes MaskNet in its EasyRec recommendation framework. MaskNet has a serial variation and a parallel variation. The Parallel MaskNet is similar to a mixture-of-experts design such as MMoE (Multi-gate Mixture-of-Experts), where each MaskBlock is an expert paying attention to specific kinds of important features or feature interactions. I implemented a Parallel MaskNet from scratch following the paper and its model below.

The model is trained with the MovieLens dataset. 3 sparse features (user, movie, genre) are used as input to predict the movie ratings. It was trained for 10 epochs before the model started to overfit.

The model, training and inference code can be found here.

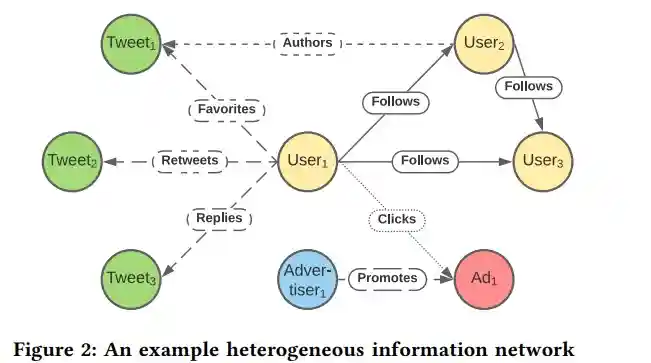

Knowledge Graph Embedding

Besides token/text/sentence embedding, image embedding and feature embedding, Knowledge Graph Embedding (KGE) is another interesting model that is used widely in prediction and recommendation tasks.

TwHIN is Twitter (X)'s production KGE model to capture Twitter information network's entities and relations/interactions, as illustrated below. As the embedding table is huge, torchrec's EmbeddingBagCollection is used to support DistributedModelParallel. One special new design in the model is that the scoring function for logits is ((source_embedding + relation_embedding) * target_embedding).sum(-1). Another special design is the negative sampling used to create fake negative labels for training -- "Negative edges are sampled by corrupting positive edges via replacing either the source or target entity".
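The scoring function and the negative sampling described above can be sketched for a single edge in plain Python. The function names are hypothetical, and excluding the original id when corrupting an edge is a choice made for this sketch. A production sampler may simply draw ids at random.

```python
import random


def score(source_emb, relation_emb, target_emb):
    """((source + relation) * target).sum(-1) for a single edge."""
    return sum((s + r) * t for s, r, t in zip(source_emb, relation_emb, target_emb))


def corrupt_edge(edge, num_sources, num_targets, rng):
    """Make a negative edge by replacing either the source or the target entity id."""
    source, relation, target = edge
    if rng.random() < 0.5:
        source = rng.choice([i for i in range(num_sources) if i != source])
    else:
        target = rng.choice([i for i in range(num_targets) if i != target])
    return (source, relation, target)


logit = score([1.0, 2.0], [0.5, -1.0], [2.0, 3.0])
positive = (0, 0, 1)  # (user id, relation id, tweet id)
negative = corrupt_edge(positive, num_sources=4, num_targets=5, rng=random.Random(0))
```

Positive edges are trained toward a high logit and corrupted edges toward a low one, typically with a binary cross-entropy loss.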

I implemented the KGE model following the paper. The model is trained with the TwitterFaveGraph dataset. The source entity is a user, the target entity is a tweet, and the relation is "favorite".

The model, training and inference code can be found here.

Code Generator

One very useful application of AI models is automatic code generation. Since a programming language is also a language, NLP models can be used for code generation too. This time, I use the Huggingface transformers framework to save effort and reuse pre-trained GPT2 models.

The target is a python code generator. The first step is to build/train a python code tokenizer, since it's more efficient than the pre-trained GPT2 tokenizer at handling python code. The "code_search_net" dataset is used to fine-tune the tokenizer. The next step is to fine-tune the pretrained GPT2 model. The "codeparrot-ds" dataset is used for the training. The dataset's python code is first chunked and tokenized with the fine-tuned python tokenizer, then fed into the GPT2 model to fine-tune it. The resulting model can then be used to generate python code based on input prompt code/comments.
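The chunking step can be illustrated with a small helper that cuts a long sequence of token ids into fixed-length training examples. Dropping the final partial chunk is an assumption made for this sketch, as is the helper name `chunk_token_ids`. The real pipeline works on tokenizer output rather than a plain list.

```python
def chunk_token_ids(token_ids, context_length):
    """Split one long id sequence into full-length chunks, dropping the remainder."""
    chunks = []
    for start in range(0, len(token_ids) - context_length + 1, context_length):
        chunks.append(token_ids[start:start + context_length])
    return chunks


# Ten dummy token ids with a context length of 4: the last two ids are dropped.
chunks = chunk_token_ids(list(range(10)), context_length=4)
```

Keeping every training example at exactly the context length avoids padding, so no compute is wasted on pad tokens.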

The model, training and inference code can be found here.