When to Use Graphs in RAG: A Comprehensive Analysis for Graph Retrieval-Augmented Generation

Zhishang Xiang†¹, Chuanjie Wu†¹, Qinggang Zhang*¹, Shengyuan Chen², Zijin Hong², Xiao Huang², Jinsong Su*¹

¹Xiamen University, ²The Hong Kong Polytechnic University

†Equal contribution, *Corresponding author

🎉 News

[2025-06-06] We introduce GraphRAG-Bench, a comprehensive benchmark for evaluating Graph Retrieval-Augmented Generation models.

[2025-05-25] Leaderboard is on!

[2025-05-14] We release the GraphRAG-Bench dataset.

[2025-01-21] We release the GraphRAG survey.

📖 About

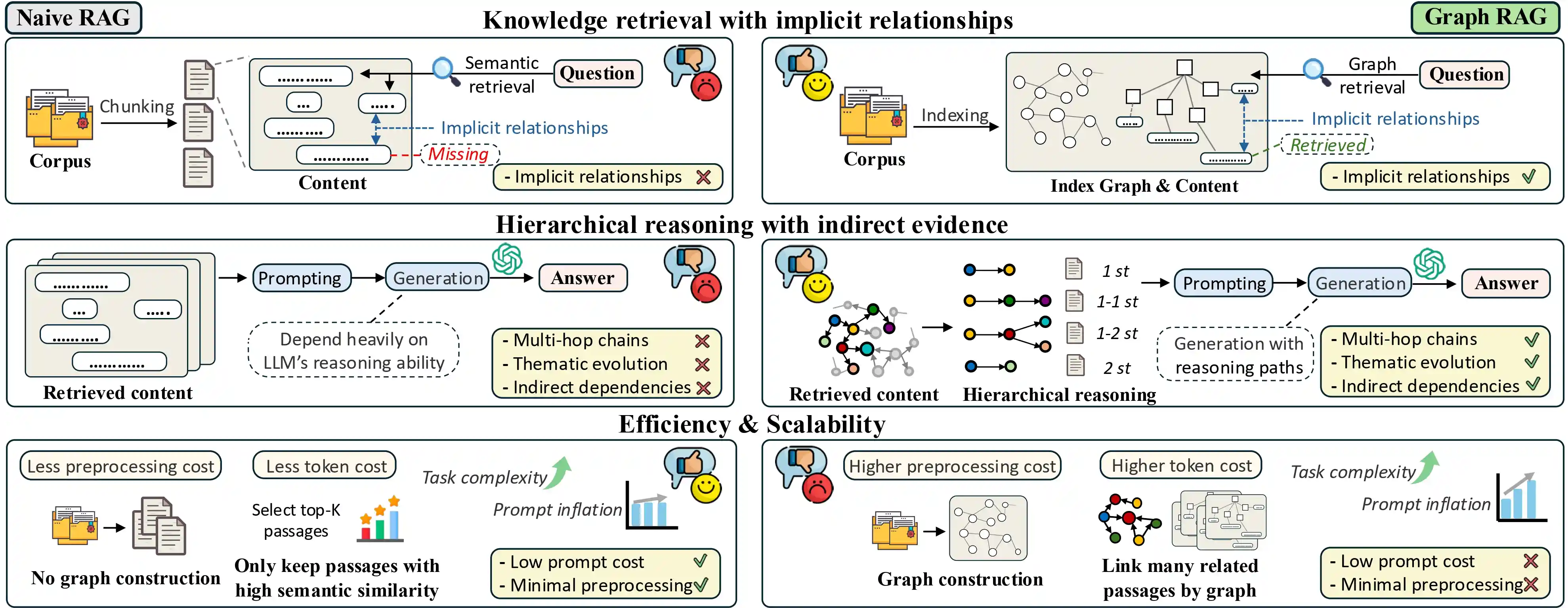

Graph retrieval-augmented generation (GraphRAG) has emerged as a powerful paradigm for enhancing large language models (LLMs) with external knowledge. It leverages graphs to model the hierarchical structure between specific concepts, enabling more coherent and effective knowledge retrieval for accurate reasoning. Despite its conceptual promise, recent studies report that GraphRAG frequently underperforms vanilla RAG on many real-world tasks. This raises a critical question: Is GraphRAG really effective, and in which scenarios do graph structures provide measurable benefits for RAG systems? To address this, we propose GraphRAG-Bench, a comprehensive benchmark designed to evaluate GraphRAG models on both hierarchical knowledge retrieval and deep contextual reasoning. GraphRAG-Bench features a comprehensive dataset with tasks of increasing difficulty, covering fact retrieval, complex reasoning, contextual summarization, and creative generation, and a systematic evaluation across the entire pipeline, from graph construction and knowledge retrieval to final generation. Leveraging this novel benchmark, we systematically investigate the conditions under which GraphRAG surpasses traditional RAG and the underlying reasons for its success, offering guidelines for its practical application.

🏆 Leaderboard

GraphRAG-Bench (Novel)

| Model | Rank | Average | Date | Fact Retrieval ACC | Fact Retrieval ROUGE-L | Complex Reasoning ACC | Complex Reasoning ROUGE-L | Contextual Summarize ACC | Contextual Summarize Cov | Creative Generation ACC | Creative Generation FS | Creative Generation Cov | Link |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

GraphRAG-Bench (Medical)

| Model | Rank | Average | Date | Fact Retrieval ACC | Fact Retrieval ROUGE-L | Complex Reasoning ACC | Complex Reasoning ROUGE-L | Contextual Summarize ACC | Contextual Summarize Cov | Creative Generation ACC | Creative Generation FS | Creative Generation Cov | Link |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
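The leaderboards report ROUGE-L for the Fact Retrieval and Complex Reasoning tasks. As a rough illustration of what that metric measures, the sketch below computes a ROUGE-L F-score from the longest common subsequence (LCS) of tokens shared by a generated answer and a reference answer. It is not the benchmark's scoring code: the whitespace tokenization, lowercasing, the balanced weighting (`beta=1.0`), and the function names `lcs_length` and `rouge_l` are all assumptions made for this example.

```python
def lcs_length(a, b):
    """Length of the longest common subsequence of two token lists."""
    prev = [0] * (len(b) + 1)
    for x in a:
        curr = [0]
        for j, y in enumerate(b, start=1):
            if x == y:
                curr.append(prev[j - 1] + 1)
            else:
                curr.append(max(prev[j], curr[j - 1]))
        prev = curr
    return prev[-1]


def rouge_l(candidate, reference, beta=1.0):
    """ROUGE-L F-score between two strings.

    Assumptions (not taken from the benchmark): lowercase,
    whitespace tokenization, and beta=1.0 (precision and recall
    weighted equally).
    """
    cand = candidate.lower().split()
    ref = reference.lower().split()
    if not cand or not ref:
        return 0.0
    lcs = lcs_length(cand, ref)
    if lcs == 0:
        return 0.0
    precision = lcs / len(cand)  # share of candidate tokens in the LCS
    recall = lcs / len(ref)      # share of reference tokens in the LCS
    return (1 + beta**2) * precision * recall / (recall + beta**2 * precision)


if __name__ == "__main__":
    # Identical strings score 1.0; strings with no shared tokens score 0.0.
    print(rouge_l("the cat sat", "the cat sat"))
    print(rouge_l("alpha beta", "gamma delta"))
```

Because the score is based on a subsequence rather than contiguous n-grams, it rewards answers that keep the reference's word order even when extra words are interleaved.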

🧩 Examples

| Category | Task Name | Brief Description | Example |
|---|---|---|---|
| Level 1 | Fact Retrieval | Require retrieving isolated knowledge points with minimal reasoning; mainly test precise keyword matching. | Which region of France is Mont St. Michel located? |
| Level 2 | Complex Reasoning | Require chaining multiple knowledge points across documents via logical connections. | How did Hinze’s agreement with Felicia relate to the perception of England’s rulers? |
| Level 3 | Contextual Summarize | Involve synthesizing fragmented information into a coherent, structured answer; emphasize logical coherence and context. | What role does John Curgenven play as a Cornish boatman for the visitors exploring this region? |
| Level 4 | Creative Generation | Require inference beyond retrieved content, often involving hypothetical or novel scenarios. | Retell the scene of King Arthur’s comparison to John Curgenven and the exploration of the Cornish coastline as a newspaper article. |

📝 Citation


@article{xiang2025use,
  title={When to use Graphs in RAG: A Comprehensive Analysis for Graph Retrieval-Augmented Generation},
  author={Xiang, Zhishang and Wu, Chuanjie and Zhang, Qinggang and Chen, Shengyuan and Hong, Zijin and Huang, Xiao and Su, Jinsong},
  journal={arXiv preprint arXiv:2506.05690},
  year={2025}
}