Lucas Resck

I am a second-year PhD student in Computation, Cognition and Language at the Language Technology Lab at the University of Cambridge, supervised by Prof. Anna Korhonen and Prof. Isabelle Augenstein under the ELLIS PhD Program. I am a Girton College member and a Cambridge Trust scholar. My research interests are machine learning, explainability, interpretability and multilingual NLP.

Previously, I was a researcher at the Visual Data Science Lab at Fundação Getulio Vargas (FGV) in Rio de Janeiro, Brazil, conducting research on similar topics. I hold an MSc in Mathematical Modeling (2024) and a BSc in Applied Mathematics (2021) from the School of Applied Mathematics at FGV.

In 2022, I was a visiting researcher at the Vision, Language, and Learning Lab at Rice University, Houston. I also have experience applying computational methods to solve legal problems.

-

EMNLP 2025

Explainability and Interpretability of Multilingual Large Language Models: A Survey

In Proceedings of the 2025 Conference on Empirical Methods in Natural Language Processing, Nov 2025

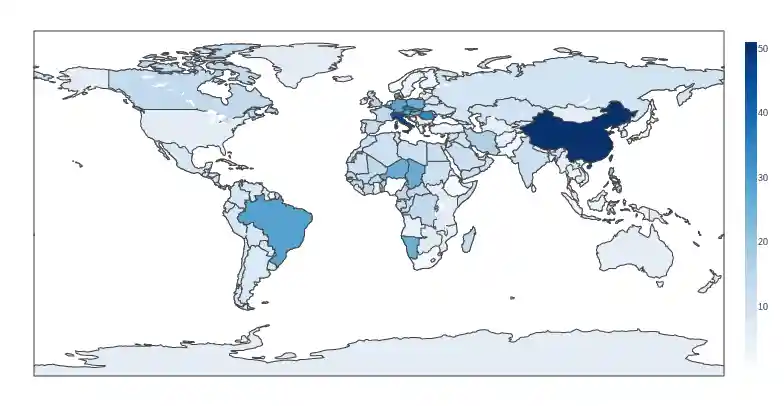

Multilingual large language models (MLLMs) demonstrate state-of-the-art capabilities across diverse cross-lingual and multilingual tasks. Their complex internal mechanisms, however, often lack transparency, posing significant challenges in elucidating their internal processing of multilingualism, cross-lingual transfer dynamics and handling of language-specific features. This paper addresses this critical gap by presenting a survey of current explainability and interpretability methods specifically for MLLMs. To our knowledge, it is the first comprehensive review of its kind. Existing literature is categorised according to the explainability techniques employed, the multilingual tasks addressed, the languages investigated and available resources. The survey further identifies key challenges, distils core findings and outlines promising avenues for future research within this rapidly evolving domain.

-

Exploring the Trade-off Between Model Performance and Explanation Plausibility of Text Classifiers Using Human Rationales

Lucas Resck, Marcos M. Raimundo, and Jorge Poco

In Findings of the Association for Computational Linguistics: NAACL 2024. Also presented as a poster at the LatinX in NLP at NAACL 2024 workshop, Jun 2024

Saliency post-hoc explainability methods are important tools for understanding increasingly complex NLP models. While these methods can reflect the model’s reasoning, they may not align with human intuition, making the explanations not plausible. In this work, we present a methodology for incorporating rationales, which are text annotations explaining human decisions, into text classification models. This incorporation enhances the plausibility of post-hoc explanations while preserving their faithfulness. Our approach is agnostic to model architectures and explainability methods. We introduce the rationales during model training by augmenting the standard cross-entropy loss with a novel loss function inspired by contrastive learning. By leveraging a multi-objective optimization algorithm, we explore the trade-off between the two loss functions and generate a Pareto-optimal frontier of models that balance performance and plausibility. Through extensive experiments involving diverse models, datasets, and explainability methods, we demonstrate that our approach significantly enhances the quality of model explanations without causing substantial (sometimes negligible) degradation in the original model’s performance.

-

Distill n’ Explain: explaining graph neural networks using simple surrogates

Tamara Pereira, Erik Nascimento, Lucas E. Resck, Diego Mesquita, and Amauri Souza

In Proceedings of The 26th International Conference on Artificial Intelligence and Statistics, Apr 2023

Explaining node predictions in graph neural networks (GNNs) often boils down to finding graph substructures that preserve predictions. Finding these structures usually implies back-propagating through the GNN, bonding the complexity (e.g., number of layers) of the GNN to the cost of explaining it. This naturally begs the question: Can we break this bond by explaining a simpler surrogate GNN? To answer the question, we propose Distill n’ Explain (DnX). First, DnX learns a surrogate GNN via knowledge distillation. Then, DnX extracts node or edge-level explanations by solving a simple convex program. We also propose FastDnX, a faster version of DnX that leverages the linear decomposition of our surrogate model. Experiments show that DnX and FastDnX often outperform state-of-the-art GNN explainers while being orders of magnitude faster. Additionally, we support our empirical findings with theoretical results linking the quality of the surrogate model (i.e., distillation error) to the faithfulness of explanations.