Gaussian Deformation Fields for Real-time Dynamic Novel View Synthesis

WACV 2025

1Brown University, 2Meta Reality Lab

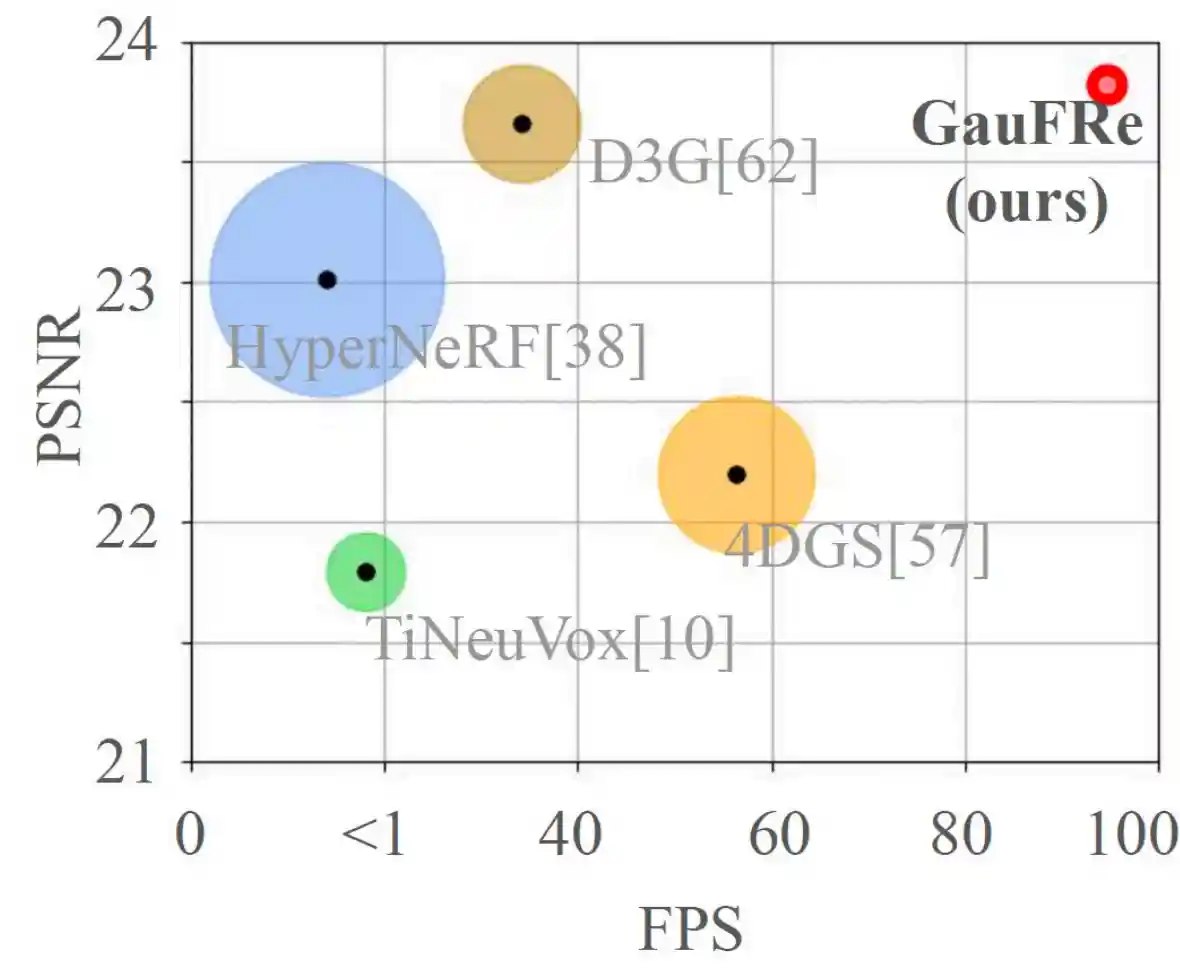

GauFRe reconstructs dynamic scenes from casually captured monocular video. Our representation renders in real time (> 30 FPS) while achieving high rendering quality. GauFRe also separates static and dynamic regions without extra supervision.

Our method, GauFRe, enables high-quality 3D dynamic scene rendering from monocular video.

On real-world data, we achieve 96 FPS, and train faster than previous methods.

Circle size denotes training time. All methods are trained on a single RTX 3090 GPU.

Results on the Real-world NeRF-DS Dataset

Baseline method (left) vs. GauFRe (right).

Training Speed Comparison with D3G

GauFRe (right) achieves quality similar to D3G (left) while converging much faster. Here, we render test views every 10th optimization iteration. Our method starts with a larger number of Gaussians, so it renders more slowly in the early stages, but it quickly overtakes D3G in overall training-time performance.

Results on the Synthetic D-NeRF Dataset

Baseline method (left) vs. GauFRe (right).

Our forward-warping deformation field design allows explicit motion modeling. Below we plot the trajectories of a subset of Gaussians.

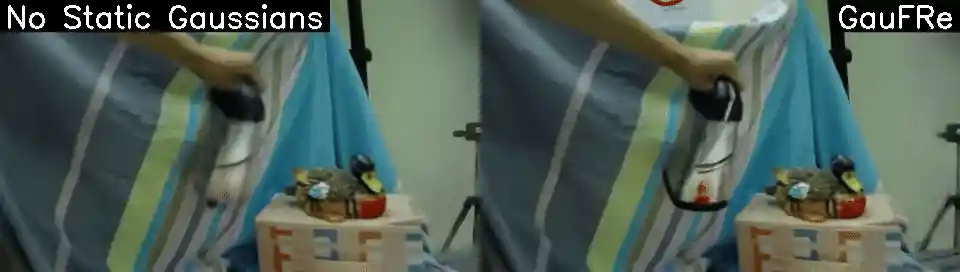
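To illustrate why forward warping makes trajectories easy to extract, here is a minimal sketch. It assumes Gaussian centers are stored in a canonical space and moved to each timestamp by a deformation function. The function `toy_deformation` is a hypothetical analytic stand-in for a learned deformation field, and `trace_trajectories` is an illustrative helper. Neither is taken from the GauFRe code.

```python
import numpy as np


def toy_deformation(canonical_xyz, t):
    """Hypothetical stand-in for a learned deformation field.

    Returns a per-Gaussian 3D offset at normalized time t in [0, 1].
    Here each point moves on a small circle in the xy-plane whose radius
    depends on its canonical z coordinate; the offset is zero at t = 0.
    """
    amp = 0.1 * (1.0 + canonical_xyz[:, 2:3])      # (N, 1) per-point radius
    dx = amp * np.cos(2.0 * np.pi * t) - amp       # zero offset at t = 0
    dy = amp * np.sin(2.0 * np.pi * t)
    dz = np.zeros_like(amp)                        # no motion along z
    return np.concatenate([dx, dy, dz], axis=1)    # (N, 3)


def trace_trajectories(canonical_xyz, timestamps, deform=toy_deformation):
    """Forward-warp canonical centers to every timestamp.

    Because the warp maps canonical -> time t, the same Gaussian index
    refers to the same point at every timestamp, so stacking the warped
    positions directly yields one trajectory per Gaussian.
    """
    return np.stack(
        [canonical_xyz + deform(canonical_xyz, t) for t in timestamps],
        axis=0,
    )                                              # (T, N, 3)


# Assumed toy data: five random canonical centers, eleven timestamps.
rng = np.random.default_rng(0)
canonical_xyz = rng.uniform(-1.0, 1.0, size=(5, 3))
timestamps = np.linspace(0.0, 1.0, 11)
trajectories = trace_trajectories(canonical_xyz, timestamps)
```

Each slice `trajectories[:, i]` is the path of Gaussian `i` over time and can be passed straight to a line plot. A backward-warping field (time t to canonical) would not give this correspondence without inverting the warp.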

Method Ablations

Ablated variant (left) overlaid on GauFRe (right).

Bibtex

@inproceedings{liang2024gaufregaussiandeformationfields,
  author    = {Liang, Yiqing and Khan, Numair and Li, Zhengqin and Nguyen-Phuoc, Thu and Lanman, Douglas and Tompkin, James and Xiao, Lei},
  title     = {GauFRe: Gaussian Deformation Fields for Real-time Dynamic Novel View Synthesis},
  booktitle = {Proc. IEEE/CVF Winter Conference on Applications of Computer Vision (WACV)},
  year      = {2025},
}