Nicolás Violante Grezzi

About

I'm currently a postdoc at Imagine, École Nationale des Ponts et Chaussées, working with Vincent Lepetit. I completed my Ph.D. at Inria Sophia Antipolis with the GraphDeco group, supervised by George Drettakis. I earned my master's degree in Mathematics, Vision, and Learning from ENS Paris-Saclay, graduating with highest honors (mention très bien). I also earned a degree in Electrical Engineering from Universidad de la República in Uruguay.

News

05/2025

Our paper "Splat and Replace: 3D Reconstruction with Repetitive Elements" was accepted at SIGGRAPH 2025.

09/2024

I started an internship at Adobe, San Francisco, under the supervision of Thibault Groueix.

Research

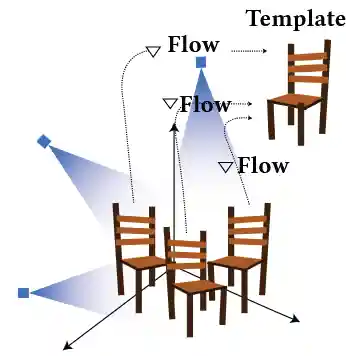

Splat and Replace: 3D Reconstruction with Repetitive Elements

N. Violante, A. Meuleman, A. Gauthier, F. Durand, T. Groueix, G. Drettakis

SIGGRAPH 2025

We leverage repetitive elements in 3D scenes to improve novel view synthesis. Neural Radiance Fields (NeRF) and 3D Gaussian Splatting (3DGS) have greatly improved novel view synthesis, but renderings of unseen and occluded parts remain low-quality if the training views are not exhaustive enough. Our key observation is that our environment is often full of repetitive elements. We propose to leverage those repetitions to improve the reconstruction of parts of the scene that are low-quality due to poor coverage and occlusions. Our method segments each repeated instance in a 3DGS reconstruction, registers the instances together, and allows information to be shared among them. It improves the geometry while also accounting for appearance variations across instances. We demonstrate our method on a variety of synthetic and real scenes with typical repetitive elements, leading to a substantial improvement in the quality of novel view synthesis.

Physically-based Lighting of 3D Generative Models of Cars

N. Violante, A. Gauthier, S. Diolatzis, T. Leimkühler, G. Drettakis

Computer Graphics Forum (Eurographics) 2024

Recent work has demonstrated that Generative Adversarial Networks (GANs) can be trained to generate 3D content from 2D image collections by synthesizing features for neural radiance field rendering. However, most such solutions generate radiance, with lighting entangled with materials. This results in unrealistic appearance, since lighting cannot be changed and view-dependent effects such as reflections do not move correctly with the viewpoint. In addition, many methods have difficulty with full 360° rotations, since they are often designed for mainly front-facing scenes such as faces. We introduce a new 3D GAN framework that addresses these shortcomings, allowing multi-view coherent 360° viewing and at the same time relighting for objects with shiny reflections, which we exemplify using a car dataset. The success of our solution stems from three main contributions. First, we estimate initial camera poses for a dataset of car images, and then learn to refine the distribution of camera parameters while training the GAN. Second, we propose an efficient Image-Based Lighting model that we use in a 3D GAN to generate disentangled reflectance, as opposed to the radiance synthesized in most previous work. The material is used for physically-based rendering with a dataset of environment maps. Third, we improve the 3D GAN architecture compared to previous work and design a careful training strategy that allows effective disentanglement. Our model is the first that generates a variety of 3D cars that are multi-view consistent and that can be relit interactively with any environment map.