Shengqu Cai

Mode Seeking meets Mean Seeking for Fast Long Video Generation

Shengqu Cai, Weili Nie*, Chao Liu*, Julius Berner, Lvmin Zhang, Nanye Ma, Hansheng Chen, Maneesh Agrawala, Leonidas Guibas, Gordon Wetzstein, Arash Vahdat

In arXiv 2026

[Project Page][Paper]

Decouples local realism from long-range coherence for fast long-video generation by combining mode-seeking teacher distillation with mean-seeking long-video supervision.

Mixture of Contexts for Long Video Generation

Shengqu Cai, Ceyuan Yang, Lvmin Zhang, Yuwei Guo, Junfei Xiao, Ziyan Yang, Yinghao Xu, Zhenheng Yang, Alan Yuille, Leonidas Guibas, Maneesh Agrawala, Lu Jiang, Gordon Wetzstein

In ICLR 2026

[Project Page][Paper]

Learnable sparse attention routing enables minute-long context at short-video cost, pruning most token pairs while preserving long-range coherence.

FramePack: Frame Context Packing and Drift Prevention in Next-Frame-Prediction Video Diffusion Models

Lvmin Zhang, Shengqu Cai, Muyang Li, Gordon Wetzstein, Maneesh Agrawala

In NeurIPS 2025 (Spotlight)

[Project Page] [Paper] [Code]

Frame context packing for next-frame prediction enables longer contexts within fixed sequence budgets, with drift prevention to reduce error accumulation.

BulletTime: Decoupled Control of Time and Camera Pose for Video Generation

Yiming Wang, Qihang Zhang*, Shengqu Cai*, Tong Wu†, Jan Ackermann†, Zhengfei Kuang†, Yang Zheng†, Frano Rajič†, Siyu Tang, Gordon Wetzstein

In CVPR 2026

[Project Page][Paper]

Purely implicit time- and camera-controlled 4D video diffusion that enables decoupled control over world time and camera pose (bullet-time effects).

Generated Reality: Human-centric World Simulation using Interactive Video Generation with Hand and Camera Control

Linxi Xie*, Lisong C. Sun*, Ashley Neall, Tong Wu, Shengqu Cai, Gordon Wetzstein

In CVPR Findings 2026

[Project Page] [Paper]

Interactive human-centric video world simulation conditioned on tracked head pose and joint-level hand poses.

Diffusion Self-Distillation for Zero-Shot Customized Image Generation

Shengqu Cai, Eric Ryan Chan, Yunzhi Zhang, Leonidas Guibas, Jiajun Wu, Gordon Wetzstein

In CVPR 2025

[Project Page][Paper][Code][Demo]

Training-free customized image generation model that scales to any instance and any context.

Captain Cinema: Towards Short Movie Generation

Junfei Xiao, Ceyuan Yang, Lvmin Zhang, Shengqu Cai, Yang Zhao, Yuwei Guo, Gordon Wetzstein, Maneesh Agrawala, Alan Yuille, Lu Jiang

In ICLR 2026

[Project Page] [Paper]

Top-down keyframe planning with bottom-up long-context video synthesis, using interleaved training to adapt MM-DiT for stable and efficient multi-scene generation.

CL-Splats: Continual Learning of Gaussian Splatting with Local Optimization

Jan Ackermann, Jonas Kulhanek, Shengqu Cai, Haofei Xu, Marc Pollefeys, Gordon Wetzstein, Leonidas Guibas, Songyou Peng

In ICCV 2025

[Project Page][Paper][Code]

Efficiently updates Gaussian splatting-based 3D scene reconstructions from incremental images via change detection and local optimization.

ByteMorph: Benchmarking Instruction-Guided Image Editing with Non-Rigid Motions

Di Chang*, Mingdeng Cao*, Yichun Shi, Bo Liu, Shengqu Cai, Shijie Zhou, Weilin Huang, Gordon Wetzstein, Mohammad Soleymani, Peng Wang

In arXiv 2025

[Project Page] [Paper] [Code]

Large-scale benchmark and baseline for instruction-guided image editing with complex non-rigid motions such as viewpoint changes, articulations, and deformations.

ReStyle3D: Scene-level Appearance Transfer with Semantic Correspondences

Liyuan Zhu, Shengqu Cai*, Shengyu Huang*, Gordon Wetzstein, Naji Khosravan, Iro Armeni

In SIGGRAPH 2025

[Project Page][Paper][Code]

Scene-level appearance transfer from a single style image to multi-view real-world scenes with semantic correspondences.

X-Dyna: Expressive Dynamic Human Image Animation

Di Chang, Hongyi Xu*, You Xie*, Yipeng Gao*, Zhengfei Kuang*, Shengqu Cai*, Chenxu Zhang*, Guoxian Song, Chao Wang, Yichun Shi, Zeyuan Chen, Shijie Zhou, Linjie Luo, Gordon Wetzstein, Mohammad Soleymani

In CVPR 2025 (Highlight)

[Project Page][Paper][Code]

Human image animation using facial expressions and body movements derived from a driving video.

Collaborative Video Diffusion: Consistent Multi-video Generation with Camera Control

Zhengfei Kuang*, Shengqu Cai*, Hao He, Yinghao Xu, Hongsheng Li, Leonidas Guibas, Gordon Wetzstein

In NeurIPS 2024

[Project Page][Paper][Code]

Multi-view/multi-trajectory generation of videos sharing the same underlying content and dynamics.

Robust Symmetry Detection via Riemannian Langevin Dynamics

Jihyeon Je*, Jiayi Liu*, Guandao Yang*, Boyang Deng*, Shengqu Cai, Gordon Wetzstein, Or Litany, Leonidas Guibas

In SIGGRAPH Asia 2024

[Project Page][Paper]

Generative Rendering: Controllable 4D-Guided Video Generation with 2D Diffusion Models

Shengqu Cai, Duygu Ceylan*, Matheus Gadelha*, Chun-Hao Paul Huang, Tuanfeng Y. Wang, Gordon Wetzstein

In CVPR 2024

[Project Page][Paper]

Renders low-fidelity animated meshes directly into animations using pre-trained 2D diffusion models, without any further training or distillation.

DiffDreamer: Towards Consistent Unsupervised Single-view Scene Extrapolation with Conditional Diffusion Models

Shengqu Cai, Eric Ryan Chan, Songyou Peng, Mohamad Shahbazi, Anton Obukhov, Luc Van Gool, Gordon Wetzstein

In ICCV 2023

[Project Page][Paper][Code]

A diffusion-model-based unsupervised framework that synthesizes novel views along a long camera trajectory flying into an input image.

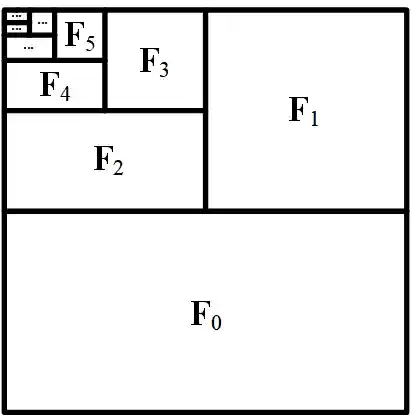

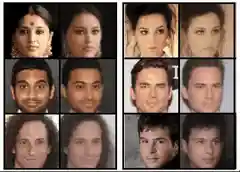

Pix2NeRF: Unsupervised Conditional π-GAN for Single Image to Neural Radiance Fields Translation

Shengqu Cai, Anton Obukhov, Dengxin Dai, Luc Van Gool

In CVPR 2022

[Paper][Code]

3D-free, unsupervised single-view NeRF-based novel view synthesis via conditional NeRF-GAN training and inversion.