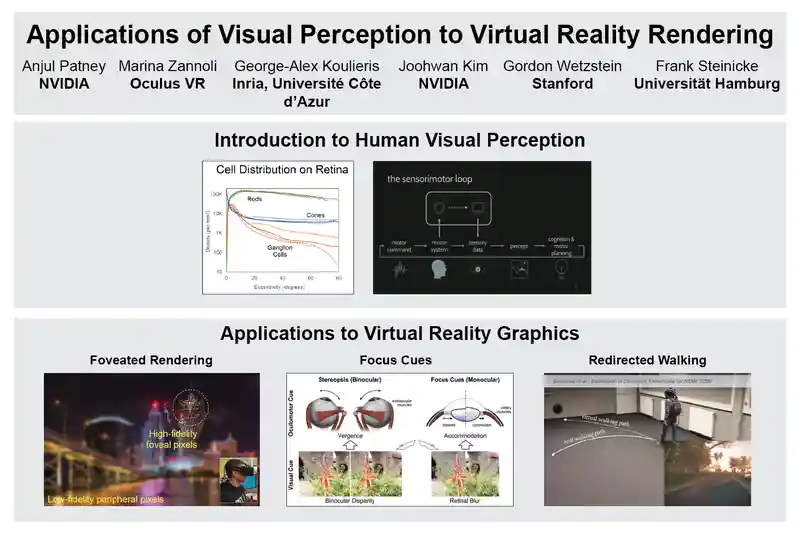

Applications of Visual Perception to Virtual Reality Rendering

SIGGRAPH 2017 Course

Sunday, 30 July, Los Angeles Convention Center, Room 153

Abstract

Over the past few years, virtual reality (VR) has transitioned from the realm of expensive research prototypes and military installations into widely available consumer devices. These devices enable experiences that are highly immersive and entertaining, and have the potential to redefine the future of computer graphics. Yet, several challenges limit the practicality and accessibility of modern virtual reality Head-Mounted Displays (HMDs), including:

- Performance: The high pixel counts and frame rates of modern HMDs increase rendering costs by up to 7 times compared to 1920 × 1080, 30 Hz gaming, and next-generation HMDs could easily double or triple costs again.

- Visual Quality / Immersion: Visual immersion using contemporary HMDs is limited by several factors, including image resolution and field of view. Users may also experience discomfort and even sickness because of various sparsely explored factors such as incorrect visual cues and system latency.

- Physical Design and Ergonomics: Modern HMDs tend to be unwieldy and unsuitable for hours of continuous use. Further, while room-scale VR experiences successfully permit intuitive locomotion, they are limited by the size of the physical room.
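The "up to 7 times" figure in the performance item can be approximated with simple pixel-throughput arithmetic. The sketch below is illustrative only: the HMD panel resolution (2160 × 1200 at 90 Hz) and the 1.4× per-axis render-target scale are assumptions typical of 2016-era consumer headsets, not numbers stated in this abstract, and pixel rate is only a rough proxy for rendering cost.

```python
def pixel_rate(width, height, hz):
    """Pixels rendered per second for a given resolution and refresh rate."""
    return width * height * hz

# Baseline named in the abstract: 1920 x 1080 at 30 Hz.
baseline = pixel_rate(1920, 1080, 30)

# Assumed HMD panel (both eyes combined): 2160 x 1200 at 90 Hz.
panel = pixel_rate(2160, 1200, 90)

# Assumed render target: 1.4x larger per axis than the panel, a common
# allowance for lens-distortion correction (an assumption, not from the source).
scale = 1.4
render_target = pixel_rate(round(2160 * scale), round(1200 * scale), 90)

panel_ratio = panel / baseline
render_ratio = render_target / baseline

print(f"Panel pixels/s vs. baseline:         {panel_ratio:.2f}x")
print(f"Render-target pixels/s vs. baseline: {render_ratio:.2f}x")
```

Under these assumptions the panel alone needs roughly 3.75 times the baseline pixel rate, and the oversized render target brings that to a little over 7 times, which is consistent with the figure quoted above.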

This course explores the role of ongoing and future research in visual perception to solve the above challenges. Human visual perception has repeatedly been shown to be an important consideration in improving the quality of computer graphics while keeping up with its performance requirements. Thus, an understanding of visual perception and its applications in real-time VR graphics is vital for HMD designers, application developers, and content creators.

Course Schedule

| Time | Session | Speaker | Slides |
|---|---|---|---|
| 9:00 AM | Welcome | Anjul Patney, NVIDIA Research | Slides |
| 9:10 AM | A Framework for Perception-driven Advancement of VR systems | Marina Zannoli, Oculus Research | Slides |
| 9:40 AM | A Brief Dive into Human Visual Perception | George-Alex Koulieris, Inria, Université Côte d'Azur | Slides |
| 10:10 AM | Case study: Foveated Rendering | Joohwan Kim, NVIDIA Research | Slides |
| 10:40 AM | Break | | -- |
| 10:50 AM | Case study: Incorporating focus cues into VR displays | Prof. Gordon Wetzstein, Stanford University | Slides |
| 11:20 AM | Case study: Redirected walking in VR | Prof. Dr. Frank Steinicke, Universität Hamburg | Slides |
| 11:50 AM | Summary / Q&A | Moderated by Anjul Patney, NVIDIA Research | Slides (same as introduction) |

Slides will be available after July 30.

Site last updated: August 4, 2017