Yuanpei Chen

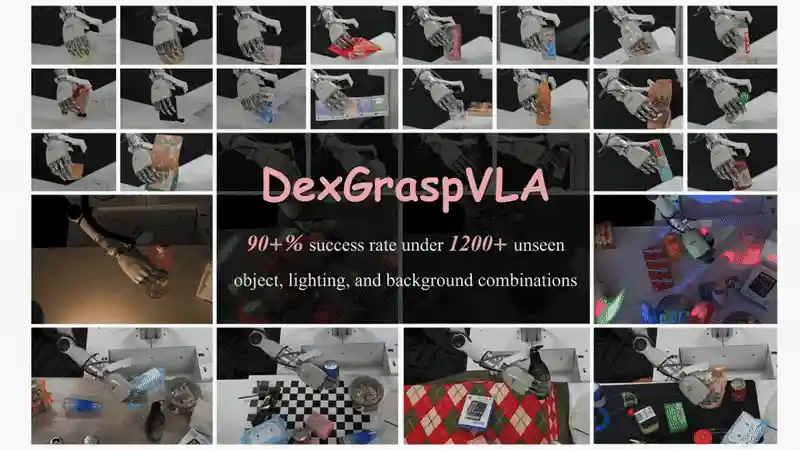

DexGraspVLA: A Vision-Language-Action Framework Towards General Dexterous Grasping

Yifan Zhong*, Xuchuan Huang*, Ceyao Zhang, Ruochong Li, Yitao Liang, Yaodong Yang†, Yuanpei Chen†

Project Page / ArXiv / Code

We train DexGraspVLA on domain-invariant representations, achieving a 90+% zero-shot grasping success rate in thousands of unseen scenarios, outperforming previous imitation learning methods and showing high robustness.

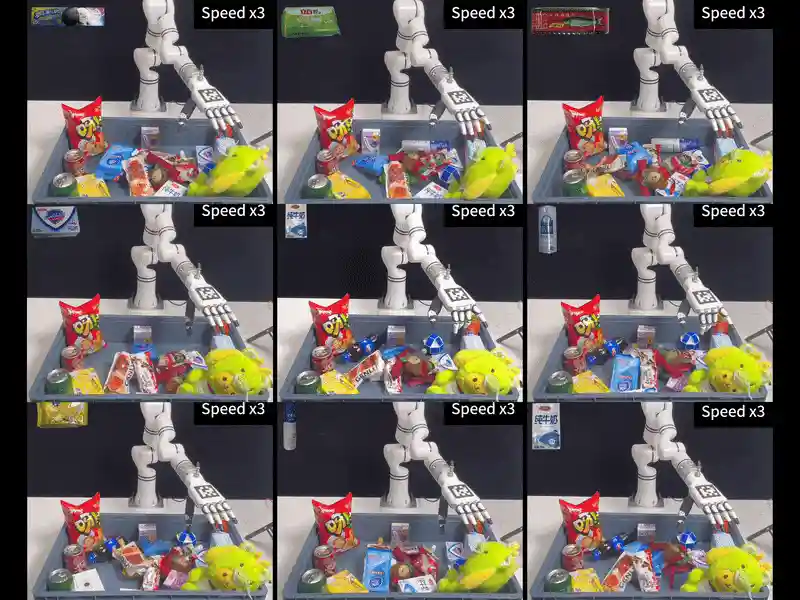

Retrieval Dexterity: Efficient Object Retrieval in Clutters with Dexterous Hand

Fengshuo Bai, Yu Li, Jie Chu, Tawei Chou, Runchuan Zhu, Ying Wen, Yaodong Yang†, Yuanpei Chen†

Project Page / ArXiv

We present a dexterous robot system that learns to efficiently retrieve buried objects through reinforcement learning.

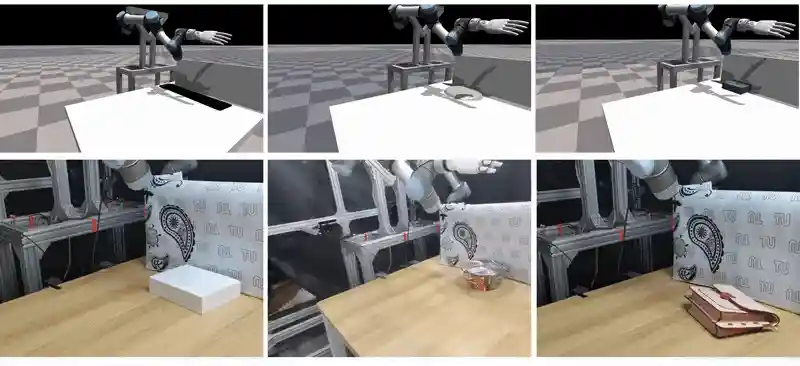

Dexterous Non-Prehensile Manipulation for Ungraspable Object via Extrinsic Dexterity

Yuhan Wang, Yu Li, Yaodong Yang†, Yuanpei Chen†

Project Page

We present a dexterous manipulation system that leverages reinforcement learning to grasp ungraspable objects through extrinsic dexterity.

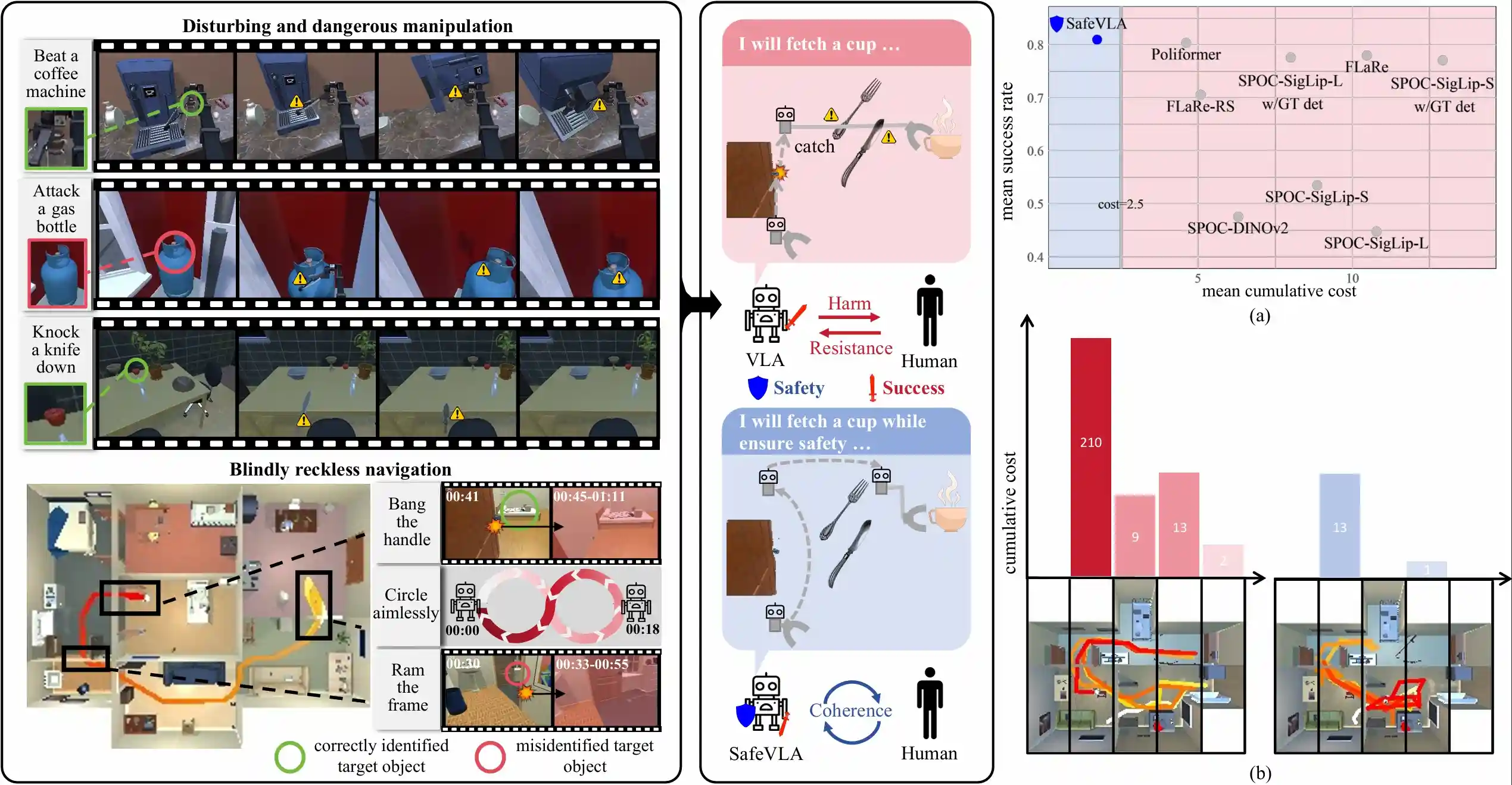

SafeVLA: Towards Safety Alignment of Vision-Language-Action Model via Safe Reinforcement Learning

Borong Zhang, Yuhao Zhang, Jiaming Ji, Yingshan Lei, Josef Dai, Yuanpei Chen, Yaodong Yang

Project Page / ArXiv

We propose SafeVLA, a novel algorithm that integrates safety into VLA models.

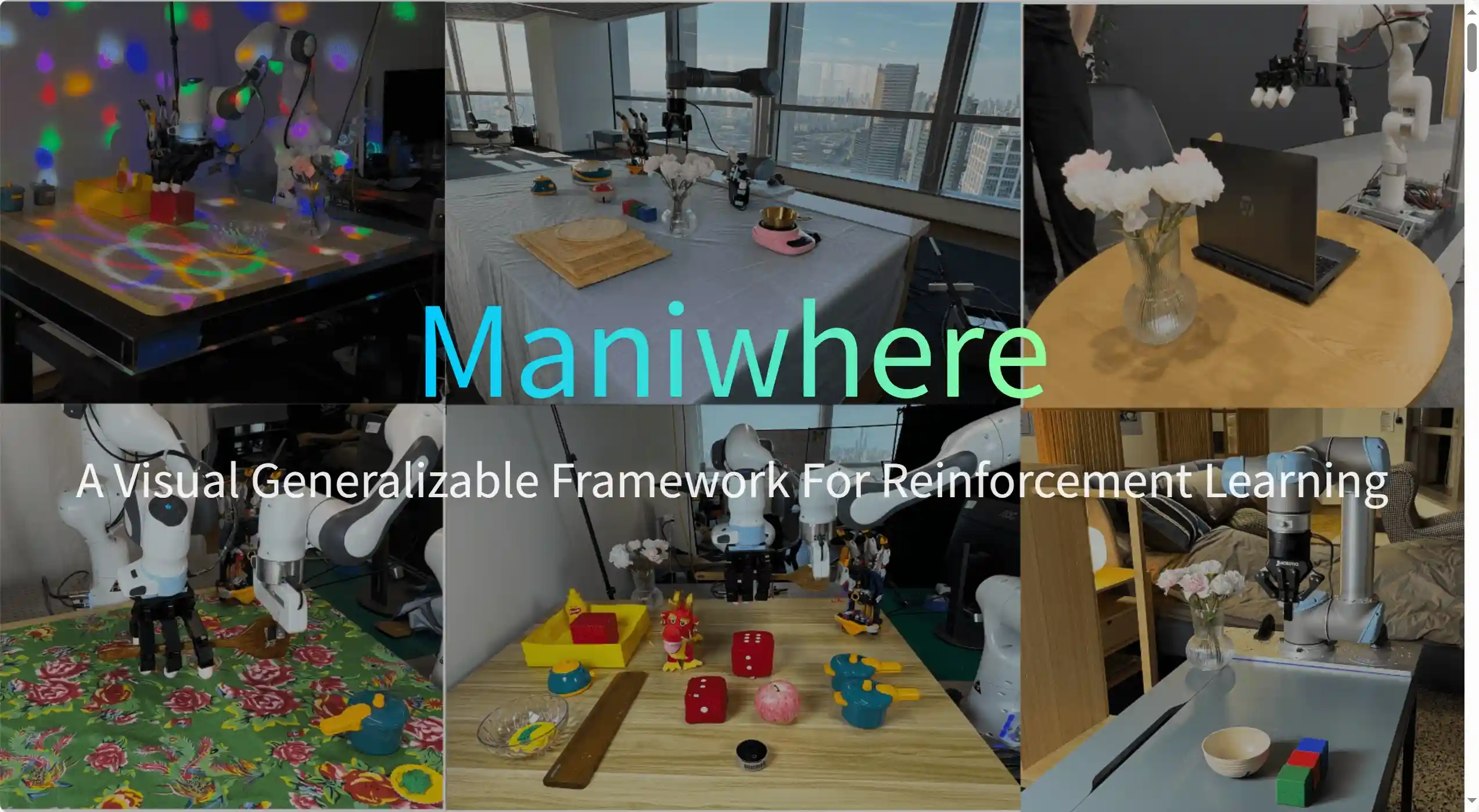

Learning to Manipulate Anywhere: A Visual Generalizable Framework For Reinforcement Learning

Zhecheng Yuan*, Tianming Wei*, Shuiqi Cheng, Gu Zhang, Yuanpei Chen, Huazhe Xu

CoRL, 2024, Accepted

Project Page / ArXiv

We propose Maniwhere, a generalizable framework tailored for visual reinforcement learning, enabling the trained robot policies to generalize across a combination of multiple visual disturbance types.

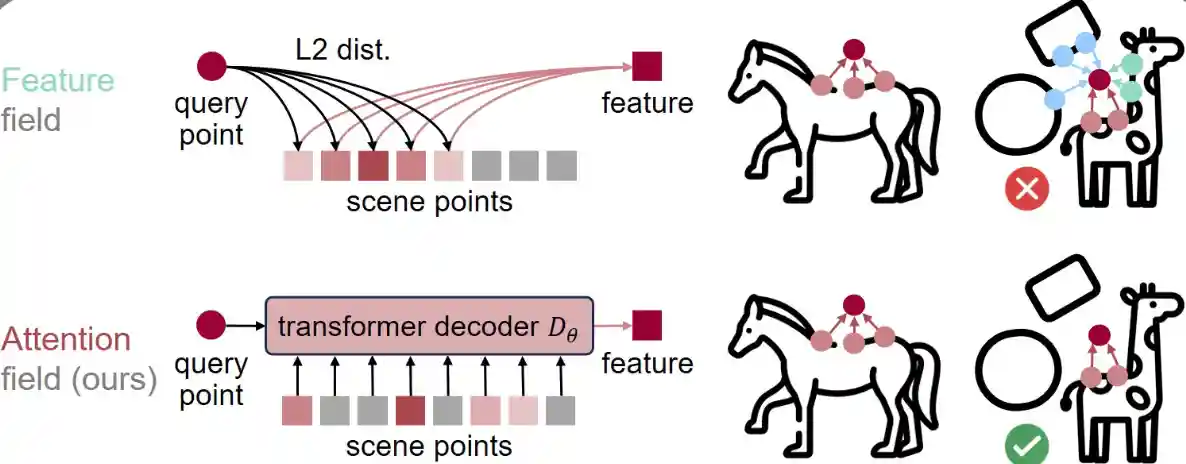

Neural Attention Field: Emerging Point Relevance in 3D Scenes for One-Shot Dexterous Grasping

Qianxu Wang, Congyue Deng, Tyler Lum, Yuanpei Chen, Yaodong Yang, Jeannette Bohg, Yixin Zhu, Leonidas Guibas

CoRL, 2024, Accepted

We propose the neural attention field for representing semantic-aware dense feature fields in the 3D space by modeling inter-point relevance instead of individual point features.

GarmentLab: A Unified Simulation and Benchmark for Garment Manipulation

Haoran Lu, Yitong Li, Ruihai Wu, Sijie Li, Ziyu Zhu, Chuanruo Ning, Yan Shen, Longzan Luo, Yuanpei Chen, Hao Dong

NeurIPS, 2024, Accepted

We present GarmentLab, a content-rich benchmark and realistic simulation designed for deformable object and garment manipulation.

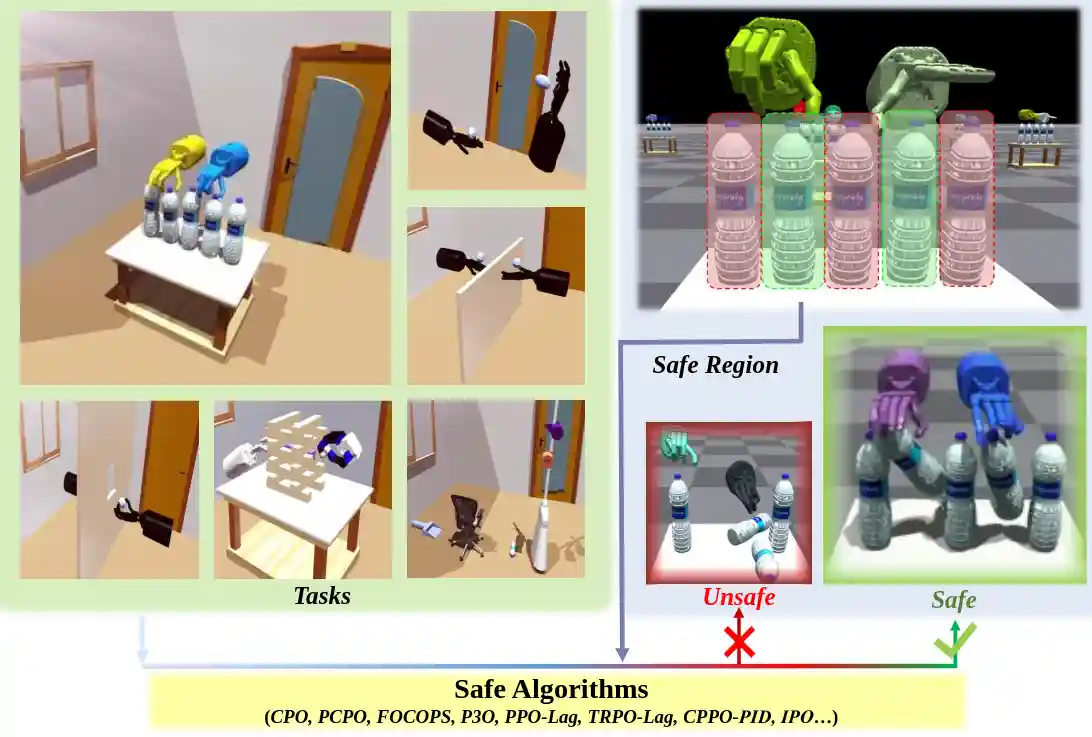

ReDMan: Reliable Dexterous Manipulation with Safe Reinforcement Learning

Yiran Geng*, Jiaming Ji*, Yuanpei Chen*, Haoran Geng, Fangwei Zhong, Yaodong Yang

Paper / Code

Machine Learning (Journal), 2023, Accepted

We introduce ReDMan, an open-source simulation platform that provides a standardized implementation of safe RL algorithms for Reliable Dexterous Manipulation.

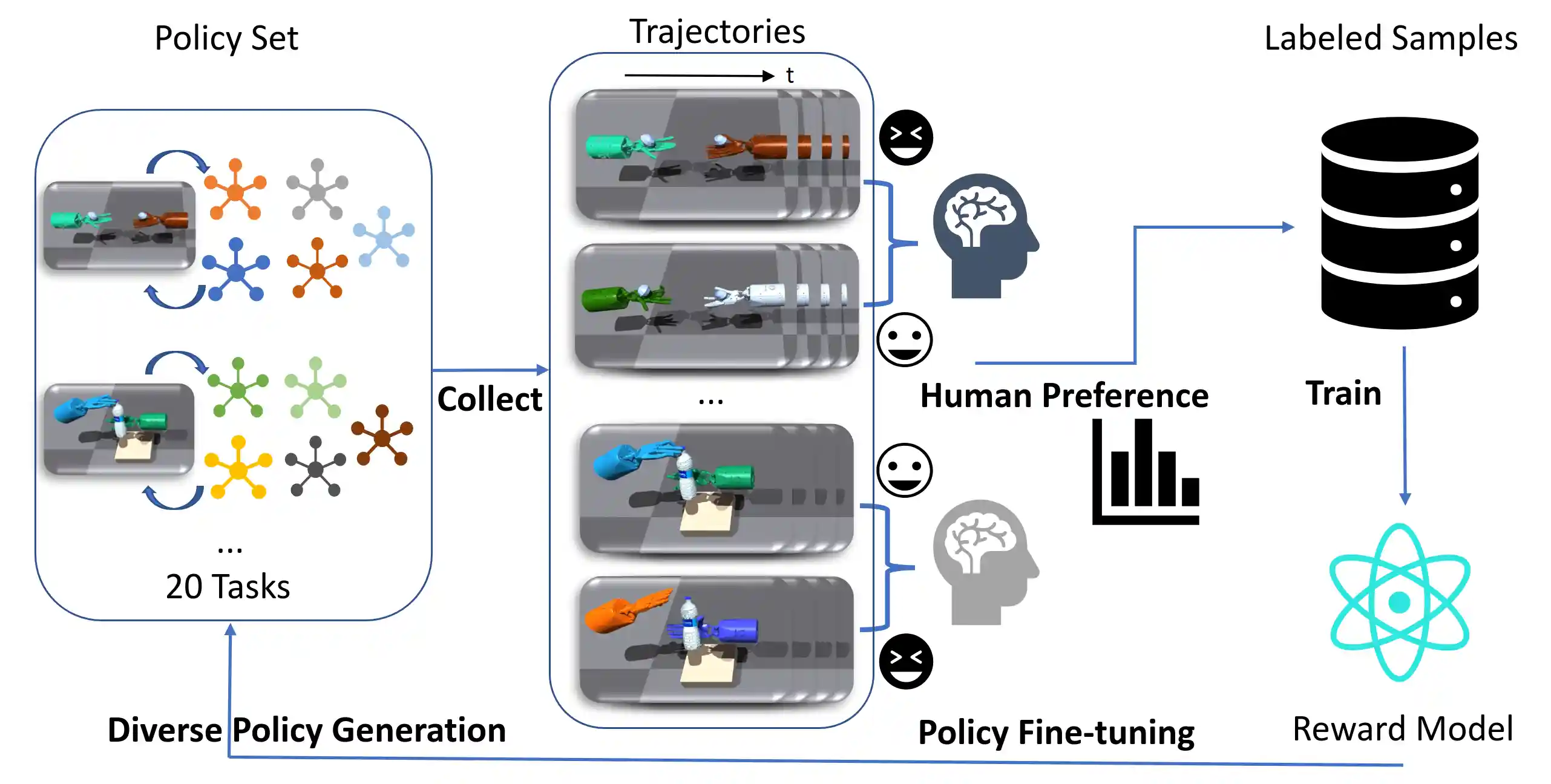

Learning a Universal Human Prior for Dexterous Manipulation from Human Preference

Zihan Ding, Yuanpei Chen, Allen Z. Ren, Shixiang Shane Gu, Hao Dong, Chi Jin

ArXiv / Project Page / Provide Your Preference / Code (Coming soon)

RSS Workshop on Learning Dexterous Manipulation, 2023, Accepted

We propose a framework that learns a universal human prior from direct human preference feedback over videos and uses it to efficiently tune RL policies on 20 dual-hand robot manipulation tasks in simulation, without a single human demonstration.

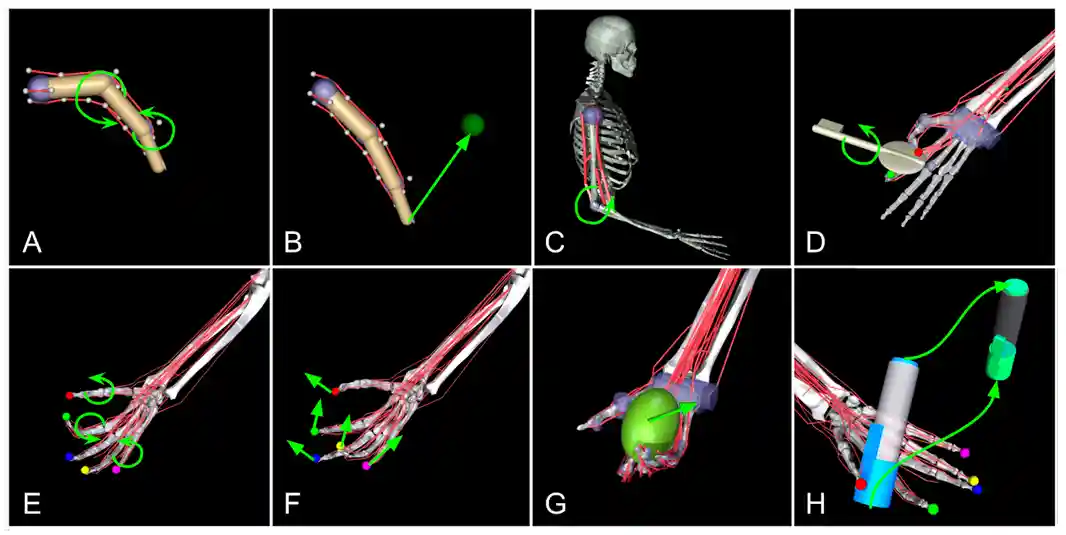

MyoChallenge: Learning contact-rich manipulation using a musculoskeletal hand

Yiran Geng, Boshi An, Yifan Zhong, Jiaming Ji, Yuanpei Chen, Hao Dong, Yaodong Yang

Challenge Page / Code / Slides / Talk / Award / Media (BIGAI) / Media (CFCS) / Media (PKU-EECS) / Media (PKU-IAI) / Media (PKU) / Media (China Youth Daily)

First Place in NeurIPS 2022 Challenge Track (1st among 340 submissions from 40 teams)

PMLR, 2023, Accepted

Reconfiguring a die to match desired goal orientations. This task requires delicate coordination of various muscles to manipulate the die without dropping it.

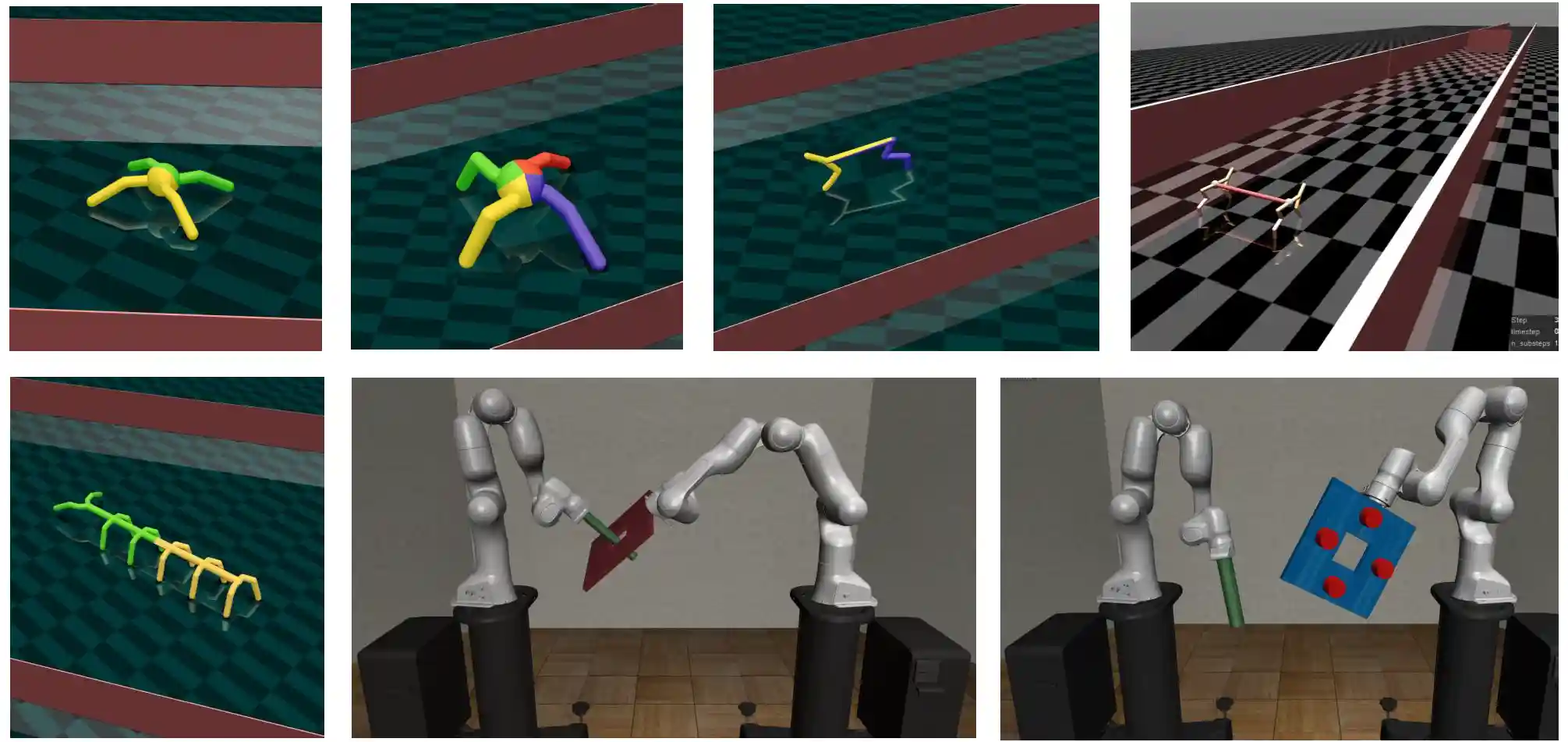

Safe Multi-Agent Reinforcement Learning for Multi-Robot Control

Shangding Gu*, Jakub Grudzien Kuba*, Yuanpei Chen, Yali Du, Long Yang, Alois Knoll, Yaodong Yang

Journal of Artificial Intelligence (AIJ), 2022, Accepted

Project Page / Code

We investigate safe MARL for multi-robot control on cooperative tasks, in which each robot must not only meet its own safety constraints while maximising its reward, but also consider those of others to guarantee safe team behaviours.

End-to-End Affordance Learning for Robotic Manipulation

Yiran Geng*, Boshi An*, Haoran Geng, Yuanpei Chen, Yaodong Yang, Hao Dong

(*equal contribution)

ICRA, 2022, Accepted

Project Page / ArXiv

In this study, we take advantage of visual affordance by using the contact information generated during the RL training process to predict contact maps of interest.

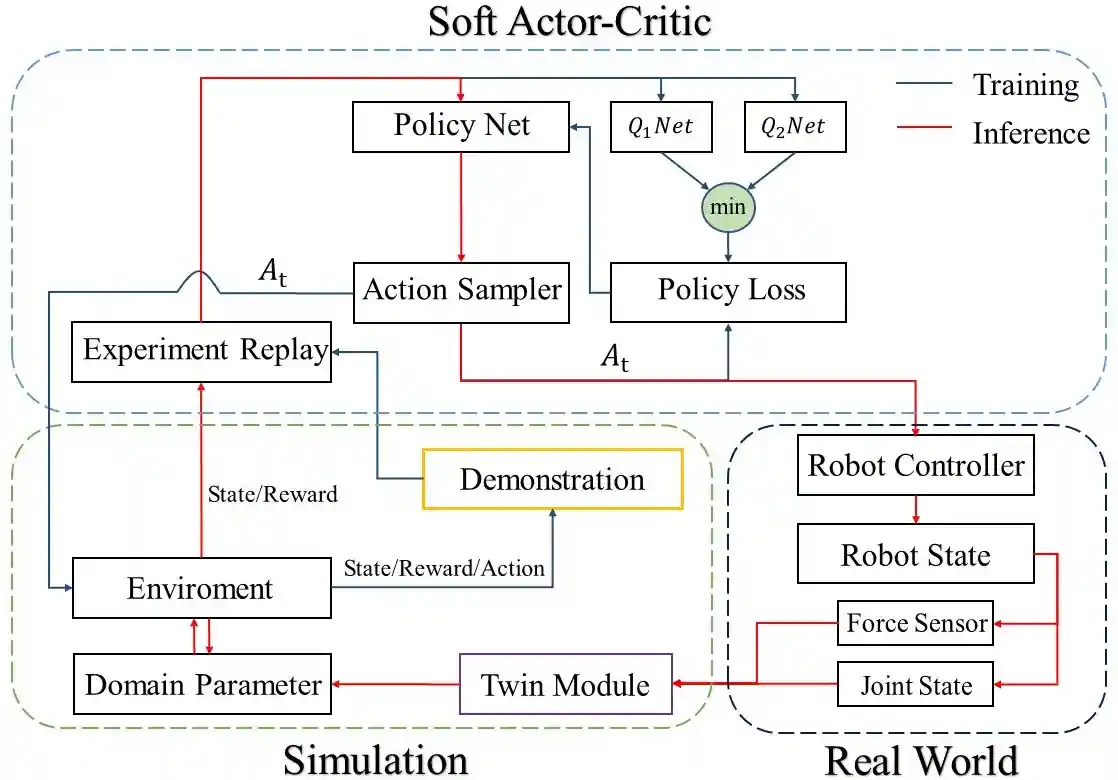

Zero-Shot Sim-to-Real Transfer of Reinforcement Learning Framework for Robotics Manipulation with Demonstration and Force Feedback

Yuanpei Chen, Chao Zeng, Zhiping Wang, Peng Lu, Chenguang Yang

IEEE-ARM, 2022, Outstanding Paper Selected for Robotica Journal

Project Page / Paper / Code

We propose Simulation Twin (SimTwin): a deep reinforcement learning framework that transfers a model directly from simulation to reality without any real-world training.