Note: CUTLASS 3 requires CUDA 11.4 or newer as the target toolkit and SM70 or newer as the target architecture.

N - Column-major matrix

T - Row-major matrix

{N,T} x {N,T} - All combinations, i.e., NN, NT, TN, TT

NHWC - 4-dimensional tensor used for convolution

NCxHWx - Interleaved 4-dimensional tensor used for convolution

f - floating point

s - signed int

b - bit

cf - complex float

bf16 - bfloat16

tf32 - tfloat32

Simt - Use SIMT CUDA Core MMA

TensorOp - Use Tensor Core MMA

SpTensorOp - Use Sparse Tensor Core MMA

WmmaTensorOp - Use WMMA abstraction to use Tensor Core MMA
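The layout abbreviations above can be made concrete with a small sketch of how each one maps a logical coordinate to a linear memory offset. This is plain C++ and not the CUTLASS layout classes: the function names are hypothetical, tensors are assumed to be densely packed, and the interleaved layout is assumed to be ordered as [N][C/x][H][W][x] with C divisible by x.

```cpp
#include <cstddef>

// "N": column-major. Elements of one column are contiguous; ld is the
// leading dimension (at least the number of rows).
constexpr std::size_t column_major_offset(std::size_t row, std::size_t col,
                                          std::size_t ld) {
  return row + col * ld;
}

// "T": row-major. Elements of one row are contiguous; ld is the leading
// dimension (at least the number of columns).
constexpr std::size_t row_major_offset(std::size_t row, std::size_t col,
                                       std::size_t ld) {
  return row * ld + col;
}

// NHWC: the channel index varies fastest (packed tensor assumed).
constexpr std::size_t nhwc_offset(std::size_t n, std::size_t h, std::size_t w,
                                  std::size_t c, std::size_t H, std::size_t W,
                                  std::size_t C) {
  return ((n * H + h) * W + w) * C + c;
}

// NCxHWx: channels are split into groups of x; within a group the channel
// index varies fastest. Assumes C is a multiple of x.
constexpr std::size_t ncxhwx_offset(std::size_t n, std::size_t c,
                                    std::size_t h, std::size_t w,
                                    std::size_t C, std::size_t H,
                                    std::size_t W, std::size_t x) {
  return (((n * (C / x) + c / x) * H + h) * W + w) * x + c % x;
}
```

For the same logical element (row 1, column 2) and leading dimension 4, the column-major offset is 9 while the row-major offset is 6, which is why a kernel's NN, NT, TN, or TT variant must match how the operands are actually stored.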

Device-level GEMM

The following tables summarize device-level GEMM kernels in CUTLASS, organized by opcode class, data type, and layout. Hyperlinks to relevant unit tests demonstrate how specific template instances may be defined.

CUTLASS 3.x Kernels

CUTLASS 2.x Kernels

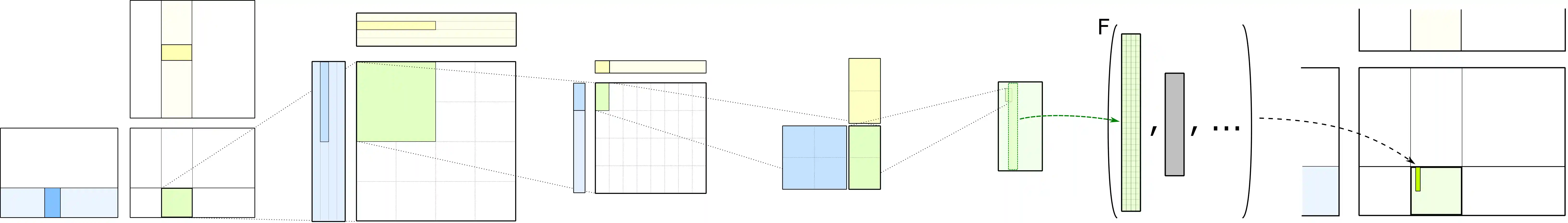

Device-level Implicit GEMM convolution

The following table summarizes device-level implicit GEMM convolution kernels in CUTLASS, organized by opcode class, data type, and layout. Hyperlinks to relevant conv2d fprop unit tests demonstrate how specific template instances may be defined. One can find and/or create equivalent dgrad and wgrad convolutional operators.
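To illustrate why a forward-propagation convolution can be served by a GEMM kernel, the sketch below computes the GEMM extents commonly associated with a conv2d fprop problem: M = N·P·Q, N = K, and K = R·S·C, where P and Q are the output height and width. The struct and function names are hypothetical and are not CUTLASS types; the output-size formula is the standard one for padded, strided, dilated convolution.

```cpp
// Hypothetical problem description; field names are illustrative only.
struct Conv2dProblem {
  int N, H, W, C;              // activation extents (NHWC)
  int K, R, S;                 // number of filters, filter height, filter width
  int pad_h, pad_w;
  int stride_h, stride_w;
  int dilation_h, dilation_w;
};

struct GemmShape {
  long long m, n, k;
};

// Output extent along one spatial dimension.
constexpr int output_extent(int in, int pad, int filter, int stride,
                            int dilation) {
  return (in + 2 * pad - dilation * (filter - 1) - 1) / stride + 1;
}

// GEMM extents of the equivalent implicit GEMM for forward propagation.
constexpr GemmShape implicit_gemm_fprop(const Conv2dProblem& p) {
  return GemmShape{
      static_cast<long long>(p.N) *
          output_extent(p.H, p.pad_h, p.R, p.stride_h, p.dilation_h) *
          output_extent(p.W, p.pad_w, p.S, p.stride_w, p.dilation_w),
      p.K,
      static_cast<long long>(p.R) * p.S * p.C};
}
```

For example, a 1x56x56x64 activation with 128 filters of size 3x3, padding 1, stride 1, and dilation 1 corresponds to a GEMM with M = 3136, N = 128, and K = 576.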

Warp-level Matrix Multiply with Tensor Cores

The following table summarizes supported warp-level shapes for each TensorOp instruction.
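As a rough illustration of how a warp-level shape relates to an instruction shape, the sketch below checks that a warp tile is an integer multiple of the instruction tile in every dimension and counts how many instruction-sized multiply-accumulates cover it. The names are hypothetical, the shapes in the usage note are illustrative rather than taken from the table, and real kernels may impose further constraints not modeled here.

```cpp
// Hypothetical M-by-N-by-K shape holder; not a CUTLASS type.
struct Shape3 {
  int m, n, k;
};

// True when the instruction shape tiles the warp shape exactly.
constexpr bool instruction_divides_warp(Shape3 warp, Shape3 inst) {
  return warp.m % inst.m == 0 && warp.n % inst.n == 0 && warp.k % inst.k == 0;
}

// Number of instruction-sized MMA operations needed to cover the warp tile.
// Only meaningful when instruction_divides_warp(warp, inst) is true.
constexpr int mma_operations_per_warp_tile(Shape3 warp, Shape3 inst) {
  return (warp.m / inst.m) * (warp.n / inst.n) * (warp.k / inst.k);
}
```

Under these assumptions, a 64-by-64-by-32 warp tile built from a 16-by-8-by-16 instruction needs 4 × 8 × 2 = 64 instruction issues, while a 40-by-64-by-32 tile is rejected because 40 is not a multiple of 16.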

TensorOp instructions depend on a permuted shared memory layout from which operands can be loaded efficiently. The following tables summarize the destination shared memory layouts that can be targeted by matrix operands. Each thread is assumed to load 128-bit vectors from global memory with the layout specified in the column “GMEM Layout.”
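The 128-bit load assumption fixes how many elements each thread fetches per access, and that count depends only on the element width. A minimal sketch, with a hypothetical helper name and element widths taken from the data type legend (tf32 is assumed to occupy 32 bits of storage):

```cpp
// Number of elements carried by one 128-bit vector access.
// Assumes bits_per_element divides 128.
constexpr int elements_per_128b_access(int bits_per_element) {
  return 128 / bits_per_element;
}

constexpr int kF64PerAccess  = elements_per_128b_access(64);  // f64
constexpr int kF32PerAccess  = elements_per_128b_access(32);  // f32, tf32
constexpr int kF16PerAccess  = elements_per_128b_access(16);  // f16, bf16
constexpr int kS8PerAccess   = elements_per_128b_access(8);   // s8
constexpr int kS4PerAccess   = elements_per_128b_access(4);   // s4
constexpr int kB1PerAccess   = elements_per_128b_access(1);   // b1
```

One practical consequence is that the contiguous dimension of an operand generally needs to be a multiple of this element count for every access to be a full 128-bit vector.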

TensorOp 8-by-8-by-4.

TensorOp 16-by-8-by-8.

TensorOp 16-by-8-by-8.

TensorOp 16-by-8-by-16.

TensorOp 8-by-8-by-4.

TensorOp 8-by-8-by-16.

TensorOp 16-by-8-by-32.

TensorOp 8-by-8-by-32.

TensorOp 16-by-8-by-64.

TensorOp 8-by-8-by-128.

SpTensorOp 16-by-8-by-16.

SpTensorOp 16-by-8-by-32.

SpTensorOp 16-by-8-by-64.

SpTensorOp 16-by-8-by-128.

Warp-level Matrix Multiply with CUDA WMMA API

The following table summarizes supported warp-level shapes for each WmmaTensorOp instruction.

CUDA exposes warp-level matrix operations through the CUDA C++ WMMA API, a set of functions and types in the nvcuda::wmma namespace that give access to Tensor Cores. These functions and types provide target-independent APIs and implement architecture-specific tensor operations using TensorOp instructions underneath. CUTLASS exposes the WMMA API through WmmaTensorOp, which supports canonical shared memory layouts. The following table summarizes the destination shared memory layouts that can be targeted by matrix operands. The WMMA API expects matrices in shared memory loaded by nvcuda::wmma::load_matrix_sync() to satisfy 128-bit alignment.

WmmaTensorOp (all matrix sizes and data types).
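The 128-bit alignment expectation stated above can be checked on the host before launching a kernel. The sketch below is plain C++ with hypothetical helper names; it assumes that a tile stays aligned row after row when its base address is 16-byte aligned and its row pitch, in bits, is a multiple of 128. It does not call the WMMA API itself.

```cpp
#include <cstddef>
#include <cstdint>

constexpr std::size_t kAlignmentBytes = 128 / 8;  // 128 bits = 16 bytes

// True when a raw address is a multiple of 16 bytes.
constexpr bool is_address_128b_aligned(std::uintptr_t address) {
  return address % kAlignmentBytes == 0;
}

// Pointer convenience wrapper around the address check.
inline bool is_pointer_128b_aligned(const void* ptr) {
  return is_address_128b_aligned(reinterpret_cast<std::uintptr_t>(ptr));
}

// True when stepping by one leading dimension preserves 128-bit alignment.
constexpr bool row_pitch_keeps_alignment(int leading_dim_elements,
                                         int bits_per_element) {
  return (leading_dim_elements * bits_per_element) % 128 == 0;
}
```

For instance, a 16-bit operand with a leading dimension of 72 elements has a 1152-bit row pitch, which is a multiple of 128, whereas a leading dimension of 36 elements gives 576 bits and would leave every other row misaligned.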

Copyright

Copyright (c) 2017 - 2026 NVIDIA CORPORATION & AFFILIATES. All rights reserved. SPDX-License-Identifier: BSD-3-Clause

Redistribution and use in source and binary forms, with or without modification, are permitted provided that the following conditions are met: 1. Redistributions of source code must retain the above copyright notice, this list of conditions and the following disclaimer. 2. Redistributions in binary form must reproduce the above copyright notice, this list of conditions and the following disclaimer in the documentation and/or other materials provided with the distribution. 3. Neither the name of the copyright holder nor the names of its contributors may be used to endorse or promote products derived from this software without specific prior written permission. THIS SOFTWARE IS PROVIDED BY THE COPYRIGHT HOLDERS AND CONTRIBUTORS "AS IS" AND ANY EXPRESS OR IMPLIED WARRANTIES, INCLUDING, BUT NOT LIMITED TO, THE IMPLIED WARRANTIES OF MERCHANTABILITY AND FITNESS FOR A PARTICULAR PURPOSE ARE DISCLAIMED. IN NO EVENT SHALL THE COPYRIGHT HOLDER OR CONTRIBUTORS BE LIABLE FOR ANY DIRECT, INDIRECT, INCIDENTAL, SPECIAL, EXEMPLARY, OR CONSEQUENTIAL DAMAGES (INCLUDING, BUT NOT LIMITED TO, PROCUREMENT OF SUBSTITUTE GOODS OR SERVICES; LOSS OF USE, DATA, OR PROFITS; OR BUSINESS INTERRUPTION) HOWEVER CAUSED AND ON ANY THEORY OF LIABILITY, WHETHER IN CONTRACT, STRICT LIABILITY, OR TORT (INCLUDING NEGLIGENCE OR OTHERWISE) ARISING IN ANY WAY OUT OF THE USE OF THIS SOFTWARE, EVEN IF ADVISED OF THE POSSIBILITY OF SUCH DAMAGE.