🍪 Overview

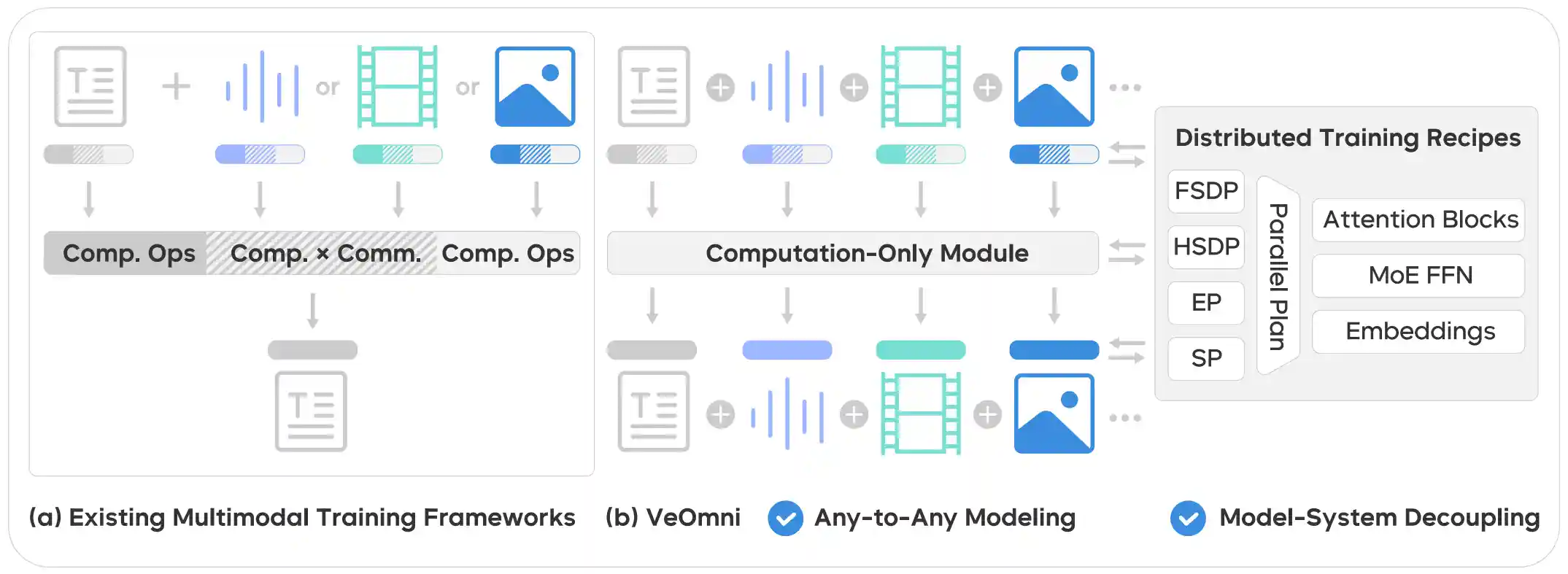

VeOmni is a versatile framework for both single- and multi-modal pre-training and post-training. It empowers users to seamlessly scale models of any modality across various accelerators, offering both flexibility and user-friendliness.

Our guiding principles when building VeOmni are:

- Flexibility and Modularity: VeOmni is built with a modular design, allowing users to decouple most components and replace them with their own implementations as needed.

- Trainer-free: VeOmni supports linear training scripts that avoid rigid, structured trainer classes (e.g., PyTorch Lightning or HuggingFace Trainer). These scripts expose the entire training logic to users for maximum transparency and control. VeOmni also provides a basic trainer for text-only and VLM/omni model training, and an RL trainer that serves as a training backend for reinforcement learning.

- Omni model native: VeOmni enables users to scale any omni-model across devices and accelerators.

- Torch native: VeOmni is designed to use PyTorch’s native functions to the fullest extent, ensuring maximum compatibility and performance.
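To make the "trainer-free" principle concrete, here is a minimal, framework-free sketch of what a linear training script looks like: data, forward pass, loss, gradients, and parameter update all sit in one flat loop with no trainer class in between. This is only an illustration of the script shape, not VeOmni code. Real VeOmni scripts are built on PyTorch, and every name below (`make_batches`, `run`, the toy model `y = w * x + b`) is a hypothetical stand-in.

```python
import random


def make_batches(n_samples, batch_size, seed=0):
    """Yield mini-batches of (x, y) pairs from the synthetic target y = 3x + 1."""
    rng = random.Random(seed)
    xs = [rng.uniform(-1.0, 1.0) for _ in range(n_samples)]
    data = [(x, 3.0 * x + 1.0) for x in xs]
    for start in range(0, n_samples, batch_size):
        yield data[start:start + batch_size]


def run(epochs=200, lr=0.1):
    """Flat training loop: every step is visible and editable in place."""
    # 1. Build the "model": two scalar parameters (hypothetical toy model).
    w, b = 0.0, 0.0
    losses = []
    for _ in range(epochs):
        # 2. Iterate over mini-batches.
        for batch in make_batches(n_samples=64, batch_size=16):
            # 3. Forward pass and mean-squared-error loss.
            errs = [(w * x + b) - y for x, y in batch]
            loss = sum(e * e for e in errs) / len(batch)
            # 4. Backward pass: analytic gradients of the MSE loss.
            grad_w = 2.0 * sum(e * x for e, (x, _) in zip(errs, batch)) / len(batch)
            grad_b = 2.0 * sum(errs) / len(batch)
            # 5. Optimizer step: plain gradient descent.
            w -= lr * grad_w
            b -= lr * grad_b
        # Record the loss of the last batch in this epoch.
        losses.append(loss)
    return w, b, losses


if __name__ == "__main__":
    w, b, losses = run()
    print(f"w={w:.4f} b={b:.4f} final_loss={losses[-1]:.3e}")
```

Because nothing is hidden behind callbacks or hooks, changing the loss, adding logging, or inserting a custom step means editing the loop directly.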

🔥 Latest News

- [2025/11] Our paper OmniScale: Scaling Any Modality Model Training with Model-Centric Distributed Recipe Zoo was accepted by AAAI 2026.

- [2025/09] We released the first official release, v0.1.0, of VeOmni.

- [2025/08] We released the VeOmni tech report and opened the WeChat group. Feel free to join us!

- [2025/04] We released VeOmni!

📚 Key Features

- FSDP and FSDP2 backends for training.

- Sequence parallelism with DeepSpeed Ulysses, in both non-async and async modes.

- Expert parallelism for training large MoE models such as Qwen3-MoE.

- Efficient kernels: GroupGemm for MoE models and Liger-Kernel.

- Compatible with HuggingFace Transformers models such as Qwen3, Qwen3-VL, and Qwen3-MoE.

- Dynamic batching strategy and Omnidata processing.

- Torch Distributed Checkpoint for checkpointing.

- Support for training on both NVIDIA GPUs and Ascend NPUs.

- Experiment tracking with wandb.
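As an illustration of the dynamic batching idea listed above, the sketch below groups variable-length samples so that each batch stays within a token budget instead of holding a fixed number of samples. This is a generic greedy strategy written for this README, not VeOmni's implementation. The function name `dynamic_batches` and the `max_tokens` parameter are assumptions made for the example.

```python
from typing import Iterable, Iterator, List


def dynamic_batches(lengths: Iterable[int], max_tokens: int) -> Iterator[List[int]]:
    """Group sample indices so each batch's total token count is <= max_tokens.

    Samples are consumed in order (greedy). A single sample longer than
    max_tokens is emitted as its own batch rather than dropped.
    """
    batch: List[int] = []
    used = 0
    for idx, n_tokens in enumerate(lengths):
        # Close the current batch if adding this sample would exceed the budget.
        if batch and used + n_tokens > max_tokens:
            yield batch
            batch, used = [], 0
        batch.append(idx)
        used += n_tokens
    # Emit whatever is left after the last sample.
    if batch:
        yield batch


if __name__ == "__main__":
    sample_lengths = [512, 128, 900, 64, 64, 2048, 300]
    for b in dynamic_batches(sample_lengths, max_tokens=1024):
        print(b, sum(sample_lengths[i] for i in b))
```

With a fixed batch size, short samples waste compute on padding and long samples risk running out of memory. Budgeting by tokens keeps the work per step roughly even, at the cost of a varying number of samples per batch.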

📝 Upcoming Features and Changes

- VeOmni v0.2 Roadmap #268, #271

- ViT balance tool #280

- Validation dataset during training #247

- RL post-training for omni-modality models with VeRL #262

🚀 Getting Started

Quick Start

✏️ Supported Models

| Model | Model size | Example config file |
|---|---|---|
| DeepSeek2.5/3/R1 | 236B/671B | deepseek.yaml |
| Llama3-3.3 | 1B/3B/8B/70B | llama3.yaml |
| Qwen2-3 | 0.5B/1.5B/3B/7B/14B/32B/72B | qwen2_5.yaml |
| Qwen2-3 VL/QVQ | 2B/3B/7B/32B/72B | qwen3_vl_dense.yaml |
| Qwen3-VL MoE | 30BA3B/235BA22B | qwen3_vl_moe.yaml |
| Qwen3-MoE | 30BA3B/235BA22B | qwen3-moe.yaml |
| Qwen2-3 Omni | 7B/30BA3B | qwen25_omni.yaml |
| Wan | Wan2.1-I2V-14B-480P | wan_sft.yaml |
| Omni Model | Any Modality Training | seed_omni.yaml |

To add support for new models in VeOmni, see Support New Models.

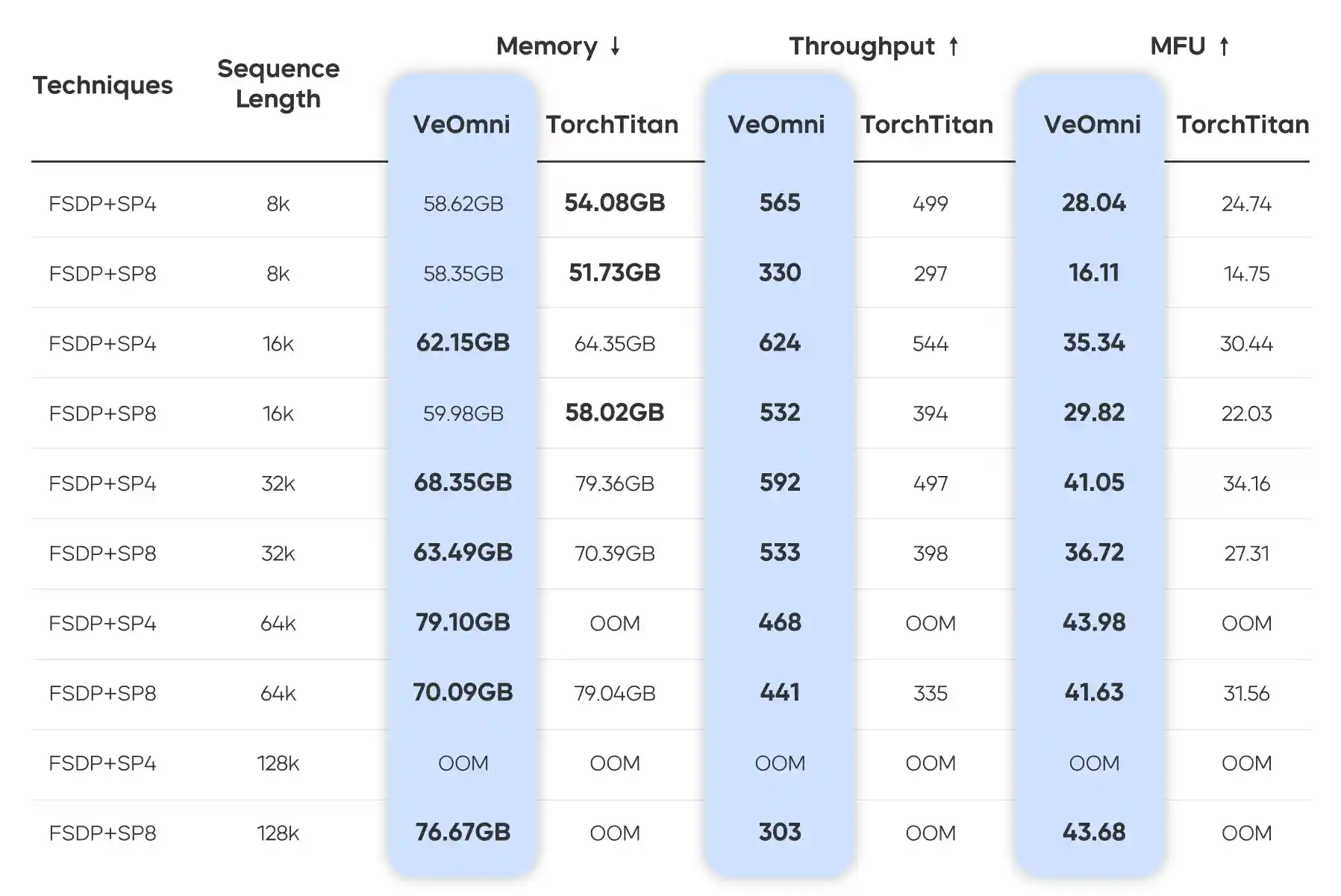

⛰️ Performance

For more details, please refer to our paper.

💡 Awesome work using VeOmni

- dFactory: Easy and Efficient dLLM Fine-Tuning

- LMMs-Engine

- UI-TARS: Pioneering Automated GUI Interaction with Native Agents

- OpenHA: A Series of Open-Source Hierarchical Agentic Models in Minecraft

- UI-TARS-2 Technical Report: Advancing GUI Agent with Multi-Turn Reinforcement Learning

- Open-dLLM: Open Diffusion Large Language Models

- LingBot-VLA: A Pragmatic VLA Foundation Model

🎨 Contributing

Contributions from the community are welcome! Please check out CONTRIBUTING.md and our project roadmap (to be updated).

📝 Citation and Acknowledgement

If you find VeOmni useful for your research and applications, feel free to give us a star ⭐ or cite us using:

@article{ma2025veomni,
  title={VeOmni: Scaling Any Modality Model Training with Model-Centric Distributed Recipe Zoo},
  author={Ma, Qianli and Zheng, Yaowei and Shi, Zhelun and Zhao, Zhongkai and Jia, Bin and Huang, Ziyue and Lin, Zhiqi and Li, Youjie and Yang, Jiacheng and Peng, Yanghua and others},
  journal={arXiv preprint arXiv:2508.02317},
  year={2025}
}

Thanks to the following projects for their excellent work:


🌱 About ByteDance Seed Team

Founded in 2023, ByteDance Seed Team is dedicated to crafting the industry's most advanced AI foundation models. The team aspires to become a world-class research team and make significant contributions to the advancement of science and society. You can get to know ByteDance Seed better through the following channels👇