Introduction

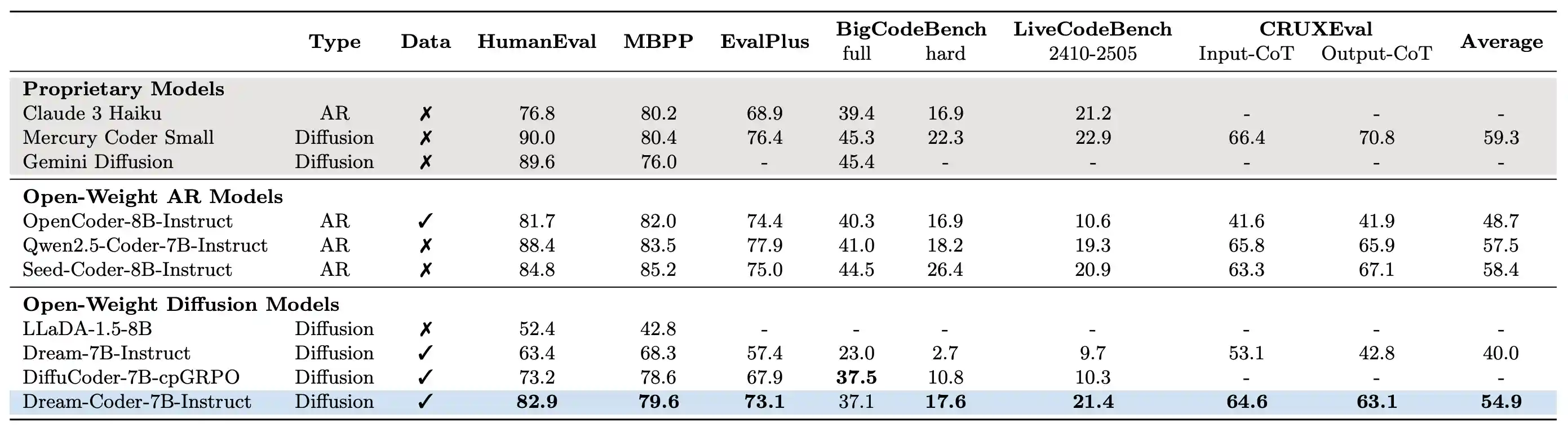

Dream-Coder 7B is a diffusion LLM for code trained exclusively on open-source data across its development stages: adaptation, supervised fine-tuning, and reinforcement learning. It achieves 21.4% pass@1 on LiveCodeBench (2410-2505), outperforming other open-source diffusion LLMs by a wide margin.

News

- Sep 25, 2025: Released data processing, training, and evaluation scripts for the instruct model. See instruct.

- Sep 21, 2025: Released data details and evaluation scripts for the base model. See base.

- Sep 1, 2025: Released our technical report.

- July 23, 2025: Try our online demo via HF space!

- July 15, 2025: Released Dream-Coder checkpoints, along with our blog post and Notion page.

Features

Flexible Code Generation

We observe that Dream-Coder 7B exhibits emergent any-order generation, adaptively choosing its decoding style based on the coding task. For example, Dream-Coder 7B Instruct displays patterns such as:

- Sketch-First Generation

- Left-to-Right Generation

- Iterative Back-and-Forth Generation

These demos were collected using consistent sampling parameters: temperature=0.1, diffusion_steps=512, max_new_tokens=512, alg="entropy", top_p=1.0, alg_temp=0.0, eos_penalty=3.0.
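The `alg="entropy"` setting suggests that the order in which positions are filled in depends on how confident the model is at each position. The toy sketch below illustrates that general idea under a stated assumption: at each step, the masked positions with the lowest predictive entropy are committed first. It is not Dream-Coder's actual decoding code; the helper names and the toy distributions are hypothetical.

```python
import math


def entropy(probs):
    """Shannon entropy (in nats) of one categorical distribution."""
    return -sum(p * math.log(p) for p in probs if p > 0.0)


def pick_positions_to_unmask(dists, masked, k):
    """Return the k masked positions with the lowest predictive entropy.

    dists:  dict mapping position -> probabilities over a toy vocabulary
    masked: positions that are still masked
    k:      number of positions to commit at this step
    Ties are broken by position so the result is deterministic.
    """
    ranked = sorted(masked, key=lambda pos: (entropy(dists[pos]), pos))
    return sorted(ranked[:k])


# Toy example: four masked positions over a three-token vocabulary.
toy_dists = {
    0: [0.34, 0.33, 0.33],  # nearly uniform, so high entropy
    1: [0.98, 0.01, 0.01],  # very confident
    2: [0.60, 0.30, 0.10],
    3: [0.90, 0.05, 0.05],  # fairly confident
}
chosen = pick_positions_to_unmask(toy_dists, masked=[0, 1, 2, 3], k=2)
print(chosen)  # [1, 3]
```

Because the selection ignores position order, a rule like this can commit later tokens before earlier ones, which is one way sketch-first or back-and-forth patterns could arise.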

Variable-Length Code Infilling

We also introduce an infilling variant, DreamOn-7B, that naturally adjusts the length of masked spans during generation for variable-length code infilling. For more details, please refer to our accompanying blog post.

Quickstart

To get started, install transformers==4.46.2 and torch==2.5.1. Here is an example of how to use Dream-Coder 7B:

```python
import torch
from transformers import AutoModel, AutoTokenizer

model_path = "Dream-org/Dream-Coder-v0-Instruct-7B"
model = AutoModel.from_pretrained(model_path, torch_dtype=torch.bfloat16, trust_remote_code=True)
tokenizer = AutoTokenizer.from_pretrained(model_path, trust_remote_code=True)
model = model.to("cuda").eval()

messages = [
    {"role": "user", "content": "Write a quick sort algorithm."}
]
inputs = tokenizer.apply_chat_template(
    messages, return_tensors="pt", return_dict=True, add_generation_prompt=True
)
input_ids = inputs.input_ids.to(device="cuda")
attention_mask = inputs.attention_mask.to(device="cuda")

output = model.diffusion_generate(
    input_ids,
    attention_mask=attention_mask,
    max_new_tokens=768,
    output_history=True,
    return_dict_in_generate=True,
    steps=768,
    temperature=0.1,
    top_p=0.95,
    alg="entropy",
    alg_temp=0.,
)
generations = [
    tokenizer.decode(g[len(p) :].tolist())
    for p, g in zip(input_ids, output.sequences)
]

print(generations[0].split(tokenizer.eos_token)[0])
```
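The example above passes `output_history=True`, which asks the model to keep intermediate states. As a rough sketch of how such a history could be inspected, the helper below records the first step at which each position stops being masked. It assumes the history can be converted into one list of token ids per step and that the mask token id is known; both the helper name and the toy data are hypothetical, and the exact layout of the returned history should be checked against the model's remote code.

```python
def first_unmask_steps(history, mask_id):
    """For each position, return the index of the first snapshot where it is unmasked.

    history: list of token-id lists, one snapshot per diffusion step (assumed layout)
    mask_id: id of the mask token (a made-up value is used in the toy data below)
    Positions that stay masked in every snapshot map to None.
    """
    if not history:
        return []
    steps = [None] * len(history[0])
    for step, snapshot in enumerate(history):
        for pos, tok in enumerate(snapshot):
            if steps[pos] is None and tok != mask_id:
                steps[pos] = step
    return steps


MASK = -1  # hypothetical mask id for the toy data
toy_history = [
    [7, MASK, MASK, MASK],
    [7, MASK, MASK, 9],
    [7, 4, MASK, 9],
    [7, 4, 5, 9],
]
order = first_unmask_steps(toy_history, MASK)
print(order)  # [0, 2, 3, 1]
```

A strictly increasing result would indicate left-to-right decoding; in the toy data the last position is filled before the middle ones, which is the kind of non-sequential order described under Flexible Code Generation.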

Acknowledgement

We gratefully acknowledge the following open-source projects, which have been instrumental to this work:

- verl: Reinforcement learning training framework

- SandboxFusion: Secure code execution environment

- Fast-dLLM: Inference acceleration for dLLMs

Citation

@article{xie2025dream,
  title={Dream-coder 7b: An open diffusion language model for code},
  author={Xie, Zhihui and Ye, Jiacheng and Zheng, Lin and Gao, Jiahui and Dong, Jingwei and Wu, Zirui and Zhao, Xueliang and Gong, Shansan and Jiang, Xin and Li, Zhenguo and others},
  journal={arXiv preprint arXiv:2509.01142},
  year={2025}
}