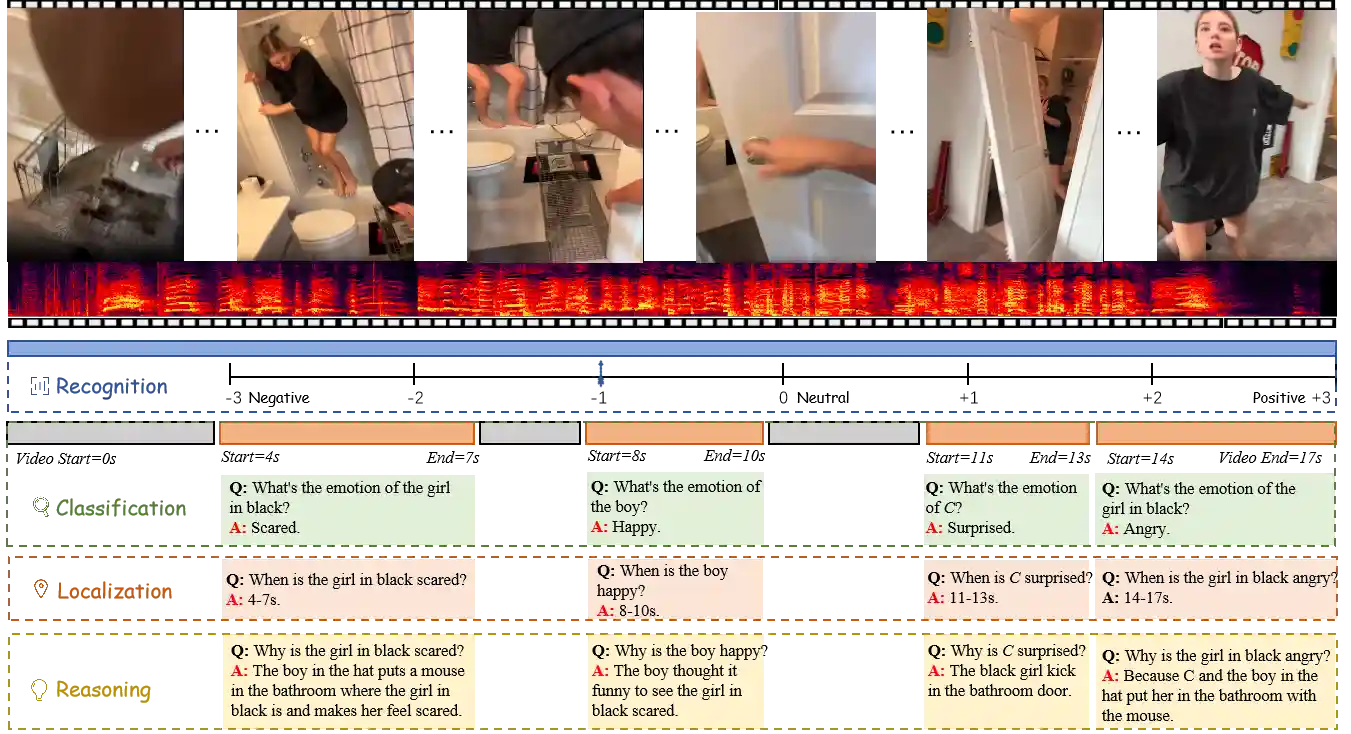

$E^3$: Exploring Embodied Emotion Through A Large-Scale Egocentric Video Dataset

This repository contains the implementation code and dataset for $E^3$.

🚀 Demo

1. Clone the repository

git clone https://github.com/Exploring-Embodied-Emotion-official/E3.git

cd E3/MiniGPT4-video

2. Set up the environment

conda env create -f environment.yml
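The command above creates the environment but does not activate it. Below is a minimal sketch of how you might activate it. It assumes environment.yml has the usual top-level name: line, since the environment name is not given in this README. The helper names env_name_from_yml and activate_e3_env are hypothetical:

```bash
#!/usr/bin/env bash
# Sketch only: activate the conda environment created from environment.yml.
# Assumption: the file has a top-level "name:" key, as conda env files usually do.

# Print the value of the top-level "name:" key of a conda environment file.
env_name_from_yml() {
  local yml="${1:-environment.yml}"
  # First unindented "name:" line; field 2 is the name; strip any quotes.
  awk '/^name:/ { print $2; exit }' "$yml" | tr -d "\"'"
}

# Hypothetical helper: activate that environment from a non-interactive bash.
activate_e3_env() {
  local yml="${1:-environment.yml}" name
  name="$(env_name_from_yml "$yml")"
  if [ -z "$name" ]; then
    echo "activate_e3_env: no top-level name: in $yml" >&2
    return 1
  fi
  # "conda activate" only works after loading conda's shell hook for bash.
  eval "$(conda shell.bash hook)"
  conda activate "$name"
}
```

Source the file rather than executing it, then call activate_e3_env from the directory that holds environment.yml. Running it as a separate process would activate the environment only inside that child shell.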

🔥 Training

Set the cfg-path in the training script to train_configs/224_v2_llama2_video_stage_3.yaml.

Set the model name in minigpt4/configs/datasets/video_chatgpt/default.yaml to llama2.
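The two edits above can also be applied with sed. Here is a minimal sketch. It assumes the training script passes --cfg-path on a single line and that default.yaml stores the model name on a plain "key: value" line. The helper names are hypothetical, and so is the key name model_name used in the example, so check the actual key in default.yaml first:

```bash
#!/usr/bin/env bash
# Sketch only: apply the two training-config edits with sed instead of by hand.
# Assumptions: "--cfg-path <file>" appears on one line of the training script,
# and the model name in default.yaml sits on a "key: value" line.

# Escape characters that are special in a sed replacement using "|" as delimiter.
escape_sed_replacement() {
  printf '%s' "$1" | sed 's/[&|\\]/\\&/g'
}

# Rewrite a file via a temp file (portable across GNU and BSD sed;
# "cat >" keeps the original file's permissions, e.g. the executable bit).
rewrite_with_sed() {
  local file="$1" sed_expr="$2" tmp status
  tmp="$(mktemp)" || return 1
  sed -E "$sed_expr" "$file" > "$tmp" && cat "$tmp" > "$file"
  status=$?
  rm -f "$tmp"
  return "$status"
}

# Point every "--cfg-path X" (or "--cfg-path=X") in a script at a new config.
set_cfg_path() {
  local script="$1" value
  value="$(escape_sed_replacement "$2")"
  if ! grep -q -- '--cfg-path' "$script"; then
    echo "set_cfg_path: no --cfg-path in $script" >&2
    return 1
  fi
  rewrite_with_sed "$script" "s|--cfg-path([ =])\"?[^\" ]*\"?|--cfg-path\\1\"${value}\"|g"
}

# Set "key: value" on every line with this key, keeping its indentation.
# The key is used as a regex, so keep it to plain identifier characters.
set_yaml_key() {
  local file="$1" key="$2" value
  value="$(escape_sed_replacement "$3")"
  if ! grep -q "^[[:space:]]*${key}:" "$file"; then
    echo "set_yaml_key: no ${key}: in $file" >&2
    return 1
  fi
  rewrite_with_sed "$file" "s|^([[:space:]]*)${key}:.*\$|\\1${key}: ${value}|"
}
```

For example, you could run set_cfg_path path/to/train_script.sh train_configs/224_v2_llama2_video_stage_3.yaml followed by set_yaml_key minigpt4/configs/datasets/video_chatgpt/default.yaml model_name llama2. Both the script path and model_name are placeholders. Writing through a temp file sidesteps the difference between GNU and BSD sed -i.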

⚡ Evaluation

Run the evaluation script.

Set each evaluation script's parameters: the path to the checkpoints, the dataset name, and whether to use subtitles/speech.

CUDA_VISIBLE_DEVICES=3 python eval_video.py \
  --dataset "ego-sentiment" \
  --batch_size 4 \
  --name "llama2_best" \
  --ckpt <path-to-weights> \
  --cfg-path "test_configs/llama2_test_config.yaml" \
  --wav_base <path-to-audio> \
  --result_path <result-json-path> \
  --ann_path <path-to-test-json> \
  --add_subtitles \
  --need_speech
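The command mixes fixed values with four path placeholders, so a small wrapper can keep those paths in variables. Here is a minimal sketch that assumes the flags exactly as shown in the command. The function name run_eval and the variables CKPT, WAV_BASE, RESULT_PATH, ANN_PATH, GPU, BATCH_SIZE, RUN_NAME, USE_SUBTITLES, USE_SPEECH and DRY_RUN are all hypothetical:

```bash
#!/usr/bin/env bash
# Sketch only: fill the placeholders of the eval_video.py command from variables.
# All variable names are hypothetical; DRY_RUN=1 prints the command instead.

run_eval() {
  # Required paths: abort with a message if any is unset or empty.
  : "${CKPT:?set CKPT to the checkpoint path}"
  : "${WAV_BASE:?set WAV_BASE to the audio directory}"
  : "${RESULT_PATH:?set RESULT_PATH to the result JSON path}"
  : "${ANN_PATH:?set ANN_PATH to the test annotation JSON}"

  # Build the argument list in the positional parameters (POSIX, no arrays).
  set -- python eval_video.py \
    --dataset "ego-sentiment" \
    --batch_size "${BATCH_SIZE:-4}" \
    --name "${RUN_NAME:-llama2_best}" \
    --ckpt "$CKPT" \
    --cfg-path "test_configs/llama2_test_config.yaml" \
    --wav_base "$WAV_BASE" \
    --result_path "$RESULT_PATH" \
    --ann_path "$ANN_PATH"
  # Subtitles and speech are on by default, matching the README command.
  if [ "${USE_SUBTITLES:-1}" = 1 ]; then set -- "$@" --add_subtitles; fi
  if [ "${USE_SPEECH:-1}" = 1 ]; then set -- "$@" --need_speech; fi

  if [ "${DRY_RUN:-0}" = 1 ]; then
    # Plain space-joined output; adequate for paths without spaces.
    printf '%s\n' "CUDA_VISIBLE_DEVICES=${GPU:-3} $*"
  else
    CUDA_VISIBLE_DEVICES="${GPU:-3}" "$@"
  fi
}
```

For example, source the file from the directory containing eval_video.py. Then run DRY_RUN=1 CKPT=<path-to-weights> WAV_BASE=<path-to-audio> RESULT_PATH=<result-json-path> ANN_PATH=<path-to-test-json> run_eval to check the command, and drop DRY_RUN=1 to launch it.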

For evaluation on all 4 criteria, you can use:

# Llama2

bash jobs_video/eval/llama2_evaluation.sh

Then use GPT-3.5 Turbo to compare the predictions with the ground truth and compute the accuracy and scores.

Set these variables in both evaluate_benchmark.sh and evaluate_zeroshot.sh:

PRED="path_to_predictions"
OUTPUT_DIR="path_to_output_dir"
API_KEY="openAI_key"
NUM_TASKS=128
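The same four variables must be edited in two scripts, which can be scripted too. Here is a minimal sketch that assumes each variable is assigned on its own NAME=value line. The helpers set_sh_var and configure_gpt_eval are hypothetical. In this sketch, API_KEY is written as a reference to an OPENAI_API_KEY environment variable, so the key itself is never stored in the scripts. That indirection is a design choice of this sketch, not something the scripts require:

```bash
#!/usr/bin/env bash
# Sketch only: set the GPT evaluation variables in both scripts with sed.
# Assumption: each variable is assigned on its own line as NAME=value.

# Replace the "NAME=..." line in a shell script with NAME="VALUE".
set_sh_var() {
  local file="$1" name="$2" value tmp status
  # Escape characters special in a sed replacement with "|" as delimiter.
  value="$(printf '%s' "$3" | sed 's/[&|\\]/\\&/g')"
  if ! grep -q "^${name}=" "$file"; then
    echo "set_sh_var: no ${name}= line in $file" >&2
    return 1
  fi
  tmp="$(mktemp)" || return 1
  sed "s|^${name}=.*\$|${name}=\"${value}\"|" "$file" > "$tmp" && cat "$tmp" > "$file"
  status=$?
  rm -f "$tmp"
  return "$status"
}

# Hypothetical helper: take PRED, OUTPUT_DIR and NUM_TASKS from the caller's
# environment, and make API_KEY read OPENAI_API_KEY when the script runs.
configure_gpt_eval() {
  local f
  for f in "$@"; do
    set_sh_var "$f" PRED "${PRED:?set PRED}" &&
      set_sh_var "$f" OUTPUT_DIR "${OUTPUT_DIR:?set OUTPUT_DIR}" &&
      set_sh_var "$f" API_KEY '${OPENAI_API_KEY}' &&
      set_sh_var "$f" NUM_TASKS "${NUM_TASKS:-128}" || return 1
  done
}
```

For example, run PRED=<path-to-predictions> OUTPUT_DIR=<output-dir> configure_gpt_eval test_benchmark/quantitative_evaluation/evaluate_benchmark.sh path/to/evaluate_zeroshot.sh. Then export OPENAI_API_KEY before running the benchmark script. The location of evaluate_zeroshot.sh is not given here, so its path is left as a placeholder.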

Then run the following script:

bash test_benchmark/quantitative_evaluation/evaluate_benchmark.sh

🎈 Citation

If you find our work valuable, we would appreciate your citation:

@article{feng20243,
  title={$E^3$: Exploring Embodied Emotion Through A Large-Scale Egocentric Video Dataset},
  author={Feng, Yueying and Han, WenKang and Jin, Tao and Zhao, Zhou and Wu, Fei and Yao, Chang and Chen, Jingyuan and others},
  journal={Advances in Neural Information Processing Systems},
  volume={37},
  pages={118182--118197},
  year={2024}
}

Acknowledgements

Much of the code is based on MiniGPT4-video.

License

This repository is licensed under the Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International license (CC BY-NC-SA 4.0).