Downloads: the UMAD dataset (Google Drive link, about 10 GB) and the UMAD-Dataset-Usage-Guide-Doc.

😊News

This project is actively maintained. Star and watch the repository to follow updates.

- 2024-09-05: We have released the UMAD-1.0 dataset, along with the robot system code.

- 2024-08-27: We will update the UMAD-homo-eva dataset and the extension experiments on UMAD-homo-eva.

- 2024-08-22: The UMAD paper is now available on arXiv.

- 2024-06-30: UMAD has been accepted to IROS 2024! Thanks to everyone who participated in this project!

- 2024-03-21: We have publicly released a supplementary video for the paper submission.

📝ToDo List

- Make the project paper publicly available.

- Open-source the UMAD dataset.

- Open-source the UMAD-homo-eval dataset.

- Open-source the code related to the datasets.

- Open-source the robot system code.

- Release the C++/Python Adaptive Warping code.

Dataset

Dataset Overview

You can refer to the UMAD-Dataset-Usage-Guide-Doc for information on how to use the UMAD dataset and details about the ground truth mask files.
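When working with the ground truth masks, a common first step is to score a predicted mask against the ground truth at the pixel level. The snippet below is a minimal sketch of that comparison, not the benchmark's official evaluation code: it assumes both masks are already loaded as equally sized 2D grids in which any non-zero value marks an anomalous (or changed) pixel, and the function name `mask_scores` is hypothetical. Check the usage guide for the actual mask encoding before relying on it.

```python
from typing import Dict, Sequence

Mask = Sequence[Sequence[int]]  # assumption: 2D grid, non-zero = anomalous pixel


def mask_scores(pred: Mask, gt: Mask) -> Dict[str, float]:
    """Pixel-level precision, recall, F1 and IoU for two binary masks."""
    if len(pred) != len(gt) or any(len(p) != len(g) for p, g in zip(pred, gt)):
        raise ValueError("masks must have the same shape")

    tp = fp = fn = 0
    for pred_row, gt_row in zip(pred, gt):
        for p, g in zip(pred_row, gt_row):
            p, g = bool(p), bool(g)
            tp += p and g          # predicted anomalous, truly anomalous
            fp += p and not g      # predicted anomalous, actually normal
            fn += g and not p      # missed anomalous pixel

    # Each score falls back to 0.0 when its denominator is zero.
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    iou = tp / (tp + fp + fn) if tp + fp + fn else 0.0
    return {"precision": precision, "recall": recall, "f1": f1, "iou": iou}


if __name__ == "__main__":
    predicted = [[1, 1, 0], [0, 0, 0]]
    ground_truth = [[1, 0, 0], [1, 0, 0]]
    print(mask_scores(predicted, ground_truth))
```

Plain nested lists keep the sketch dependency-free; with real images you would likely load the masks with an image library and vectorize the counts.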

Benchmark

Anomaly Detection Benchmark

Change Detection Benchmark

System

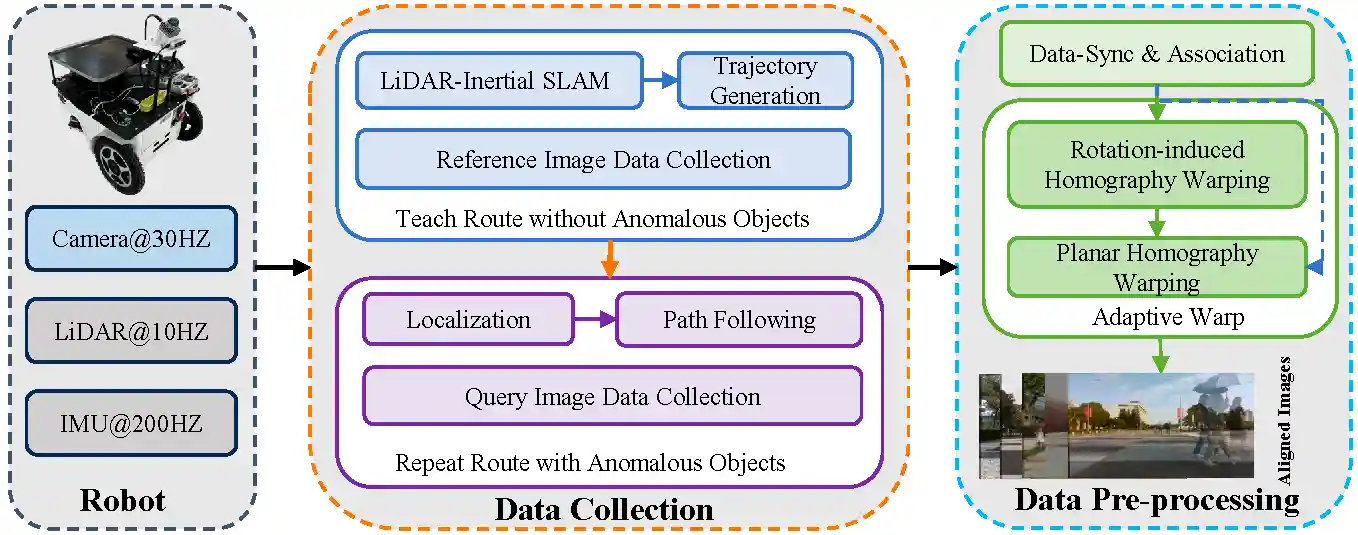

You can easily collect data or deploy a system like our UMAD robot system:

    # Prerequisites: [FAST_LIO](https://github.com/hku-mars/FAST_LIO) and [FAST_LIO_LOCALIZATION](https://github.com/HViktorTsoi/FAST_LIO_LOCALIZATION)

    # 1. Build the development environment: download UMAD's code and put src/FAST_LIO_LOCALIZATION in your ROS workspace
    git clone https://github.com/IMRL/UMAD
    # catkin_make

    # 2. Build the map and record the path: put the robot in the scene, run FAST_LIO, and record the waypoints
    roslaunch fast_lio mapping_mid360.launch
    python3 UMAD/robot_system_code/script/path_record.py  # generates a path.txt file

    # 3. Drive the robot around the environment
    # 4. Shut down FAST_LIO and the waypoint recording script
    # 5. Save the scene map output by FAST_LIO

    # 6. Collect reference data: put the robot back at the start point, then run FAST_LIO_LOCALIZATION and the path-following code
    python3 UMAD/robot_system_code/script/path_follow.py
    rosrun fast_lio_localization publish_initial_pose.py 0 0 0 0 0 0
    roslaunch fast_lio_localization localization_mid360.launch map:=/home/imrl/Desktop/3.Central-Avenue.pcd
    rosbag record /camera/color/image_raw/compressed /localization

    # Assume a long time has passed, or that you have placed some anomalous objects in the scene.

    # 7. Collect query data: put the robot back at the start point, then run FAST_LIO_LOCALIZATION and the path-following code as in step 6
    python3 UMAD/robot_system_code/script/path_follow.py
    rosrun fast_lio_localization publish_initial_pose.py 0 0 0 0 0 0
    roslaunch fast_lio_localization localization_mid360.launch map:=/home/imrl/Desktop/3.Central-Avenue.pcd
    rosbag record /camera/color/image_raw/compressed /localization
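The record-then-follow idea behind steps 2, 6, and 7 can be illustrated in plain Python without ROS. This is only a sketch under stated assumptions, not the code in `path_record.py` or `path_follow.py`: it assumes that `path.txt` holds one `x y` waypoint per line (the real file format is not documented here), and the function names, the 0.5 m spacing, and the 0.3 m reach radius are all hypothetical choices.

```python
import math
from typing import Iterable, List, Tuple

Waypoint = Tuple[float, float]  # assumption: planar (x, y) position in metres


def record_waypoints(poses: Iterable[Waypoint], min_spacing: float = 0.5) -> List[Waypoint]:
    """Keep a pose only if it is at least min_spacing from the last kept one."""
    kept: List[Waypoint] = []
    for x, y in poses:
        if not kept or math.hypot(x - kept[-1][0], y - kept[-1][1]) >= min_spacing:
            kept.append((x, y))
    return kept


def dump_path(waypoints: Iterable[Waypoint]) -> str:
    """Serialize waypoints as 'x y' lines (assumed layout of path.txt)."""
    return "".join(f"{x:.3f} {y:.3f}\n" for x, y in waypoints)


def parse_path(text: str) -> List[Waypoint]:
    """Parse 'x y' lines back into waypoints, skipping blank lines."""
    path: List[Waypoint] = []
    for line in text.splitlines():
        if line.strip():
            x, y = map(float, line.split()[:2])
            path.append((x, y))
    return path


def next_waypoint(path: List[Waypoint], position: Waypoint,
                  index: int, reach_radius: float = 0.3) -> int:
    """Skip waypoints already within reach_radius; never go past the last one."""
    while (index < len(path) - 1
           and math.hypot(path[index][0] - position[0],
                          path[index][1] - position[1]) <= reach_radius):
        index += 1
    return index


if __name__ == "__main__":
    odometry = [(0.0, 0.0), (0.1, 0.0), (0.6, 0.0), (0.7, 0.0), (1.2, 0.0)]
    path = parse_path(dump_path(record_waypoints(odometry)))
    print(path, next_waypoint(path, (0.05, 0.0), 0))
```

Following the same recorded path in both the reference and the query run is what keeps the two recordings spatially comparable; in the real system the poses would come from the localization output rather than a hard-coded list.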

Acknowledgement

The authors would like to thank the following people for their contributions to data collection and data annotation for this project: @Xiangyu QIN, @Shenbo WANG, @Kaijie YIN, @Shuhao ZHAI, @Xiaonan LI, @Beibei ZHOU, and @Hongzhi CHENG.

License

Our datasets and code are released under the MIT License (see the LICENSE file for details).

Citing

If you find our work useful, please consider citing:

    @inproceedings{li2024umad,
      title={UMAD: University of Macau Anomaly Detection Benchmark Dataset},
      author={Li, Dong and Chen, Lineng and Xu, Cheng-Zhong and Kong, Hui},
      booktitle={2024 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS)},
      pages={5836--5843},
      year={2024},
      organization={IEEE}
    }

Note

If you have any questions, you can contact Dong Li by email (lidong8421bcd@gmail.com) or open an issue on the UMAD repo directly.