Dr. MAS: Stable Reinforcement Learning for Multi-Agent LLM Systems

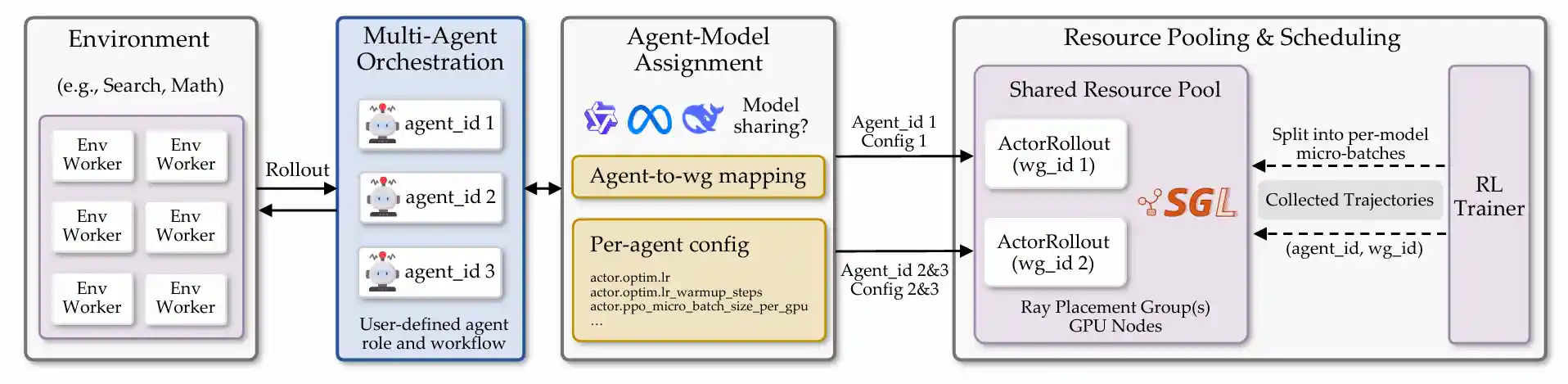

Dr.MAS is designed for end-to-end post-training of Multi-Agent LLM Systems via Reinforcement Learning (RL), enabling multiple LLM-based agents to collaborate on complex reasoning and decision-making tasks.

This framework features a flexible agent registry, customizable multi-agent orchestration, LLM sharing and non-sharing (including heterogeneous LLMs), per-agent configuration, and shared resource pooling. Together, these make it well suited for training multi-agent LLM systems with RL.

Feature Summary

| Feature Category | Supported Capabilities |
|---|---|
| Flexible Agent Registry | ✅ User-defined agent registration via @AgentRegistry.register ✅ Clear role specialization per agent |
| Multi-Agent Orchestration | ✅ User-defined multi-agent orchestration ✅ Sequential, hierarchical, and conditional workflows ✅ Built-in Search/Math Orchestra |
| Agent-Model Assignment | ✅ Logical agents (1,...,K) mapped to LLM worker groups ✅ LLM non-sharing: one LLM per agent (supports heterogeneous model families/checkpoints) ✅ LLM sharing: agents using the same model share one LLM worker group |
| Per-Agent Configuration | ✅ Per-agent training overrides for fine-grained control ✅ Per-agent learning rates, micro-batch sizes, and other hyperparameters |
| Shared Resource Pooling | ✅ Shared GPU pool across multiple LLM worker groups for efficient hardware utilization ✅ Gradient updates applied independently for each worker group during optimization |
| Environments | ✅ Math ✅ Search |
| Model Support | ✅ Qwen2.5 ✅ Qwen3 ✅ LLaMA3.2 and more |
| RL Algorithms | ✅ Dr.MAS ✅ GRPO 🧪 GiGPO (experimental) 🧪 DAPO (experimental) 🧪 RLOO (experimental) 🧪 PPO (experimental) and more |
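The Flexible Agent Registry and Agent-Model Assignment rows describe two simple mechanisms: a decorator that registers agent classes by name, and a mapping from logical agents to LLM worker groups. The sketch below illustrates only the general pattern. `AgentRegistry` here is a toy stand-in written for this example (not Dr.MAS's actual class, whose interface is described in the Multi-Agent Development Guide), and `build_worker_groups` is a hypothetical helper.

```python
from typing import Callable, Dict, List


class AgentRegistry:
    """Toy name -> class registry (hypothetical stand-in, not Dr.MAS's class)."""

    _agents: Dict[str, type] = {}

    @classmethod
    def register(cls, name: str) -> Callable[[type], type]:
        # Decorator factory: stores the decorated class under `name`
        # and returns the class unchanged.
        def decorator(agent_cls: type) -> type:
            if name in cls._agents:
                raise ValueError(f"agent {name!r} is already registered")
            cls._agents[name] = agent_cls
            return agent_cls

        return decorator

    @classmethod
    def get(cls, name: str) -> type:
        return cls._agents[name]


# Three illustrative roles, mirroring the hierarchical Search example.
@AgentRegistry.register("verifier")
class VerifierAgent:
    role = "decide whether the retrieved information is sufficient"


@AgentRegistry.register("search")
class SearchAgent:
    role = "generate search queries"


@AgentRegistry.register("answer")
class AnswerAgent:
    role = "produce the final response"


def build_worker_groups(agent_models: Dict[str, str], share: bool) -> Dict[str, List[str]]:
    """Map logical agents to LLM worker groups (hypothetical helper).

    share=True:  agents that use the same model share one worker group.
    share=False: every agent gets its own worker group, even when the
                 underlying checkpoint is identical.
    """
    groups: Dict[str, List[str]] = {}
    for agent, model in agent_models.items():
        key = model if share else f"{agent}:{model}"
        groups.setdefault(key, []).append(agent)
    return groups


if __name__ == "__main__":
    same_model = {name: "Qwen2.5-3B" for name in ("verifier", "search", "answer")}
    print(build_worker_groups(same_model, share=True))   # one shared group
    print(build_worker_groups(same_model, share=False))  # three separate groups
```

With sharing, the three agents land in one worker group and therefore train one set of weights. Without sharing, each agent gets its own group, which is also what allows different agents to use different model families.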


Installation

Install veRL

conda create -n DrMAS python==3.12 -y

conda activate DrMAS

pip3 install -r requirements_sglang.txt

pip3 install flash-attn==2.7.4.post1 --no-build-isolation --no-cache-dir

pip3 install -e .

Install Supported Environments

1. Search

conda activate DrMAS

cd ./agent_system/environments/env_package/search/third_party

pip install -e .

pip install gym==0.26.2

Prepare the dataset:

cd repo_root/

python examples/data_preprocess/drmas_search.py

Since faiss-gpu is not available via pip, we set up a separate conda environment for the local retrieval server. Running this server uses around 6GB of GPU memory per GPU, so account for this when configuring your training run.

Build the retriever environment:

conda create -n retriever python=3.10 -y

conda activate retriever

conda install numpy==1.26.4

pip install torch==2.6.0 torchvision==0.21.0 torchaudio==2.6.0 --index-url https://download.pytorch.org/whl/cu124

pip install transformers datasets pyserini huggingface_hub

conda install faiss-gpu==1.8.0 -c pytorch -c nvidia -y

pip install uvicorn fastapi

Download the index:

conda activate retriever

local_dir=~/data/searchR1

python examples/search/searchr1_download.py --local_dir $local_dir

cat $local_dir/part_* > $local_dir/e5_Flat.index

gzip -d $local_dir/wiki-18.jsonl.gz

Start the local flat e5 retrieval server. Redirect its output to a file rather than the terminal: we have observed that printing to the terminal causes spikes in server response times.

conda activate retriever

bash examples/search/retriever/retrieval_launch.sh > retrieval_server.log

2. Math

Prepare the dataset:

cd repo_root/

python examples/data_preprocess/drmas_math.py

Run Examples

Search

Search (hierarchical routing): a 3-agent hierarchy in which the Verifier decides whether the retrieved information is sufficient and routes the task either to the Search Agent (which generates queries) or to the Answer Agent (which produces the final response). See agent_system/agent/orchestra/search/README.md.
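The routing logic can be pictured as a short control loop. This is a minimal sketch, not the orchestra's actual code: each agent is modeled as a plain Python function (in practice these are LLM calls), and the `retrieve` step, the function signatures, and the `max_turns` budget are all assumptions made for illustration.

```python
from typing import Callable, List, Tuple

# Each agent is modeled as a plain function; in a real system these would
# be LLM calls. All signatures here are hypothetical.
Verify = Callable[[str, List[str]], bool]   # (question, evidence) -> sufficient?
Search = Callable[[str, List[str]], str]    # (question, evidence) -> next query
Retrieve = Callable[[str], str]             # query -> retrieved passage
Answer = Callable[[str, List[str]], str]    # (question, evidence) -> final answer


def hierarchical_search(
    question: str,
    verify: Verify,
    search: Search,
    retrieve: Retrieve,
    answer: Answer,
    max_turns: int = 4,  # hypothetical turn budget
) -> Tuple[str, List[str]]:
    """Verifier routes each turn to the Search Agent or the Answer Agent."""
    evidence: List[str] = []
    for _ in range(max_turns):
        if verify(question, evidence):
            break  # information is sufficient: route to the Answer Agent
        query = search(question, evidence)  # route to the Search Agent
        evidence.append(retrieve(query))    # e.g. the local retrieval server
    # Either the Verifier approved or the turn budget ran out.
    return answer(question, evidence), evidence
```

Keeping the Verifier as the only agent that makes routing decisions means the Search and Answer agents each see a single, narrow task, which is the role specialization the hierarchy is meant to provide.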

bash examples/drmas_trainer/run_search.sh

After training completes, evaluate the multi-agent system on the full test dataset:

bash examples/drmas_trainer/run_search.sh eval

Math

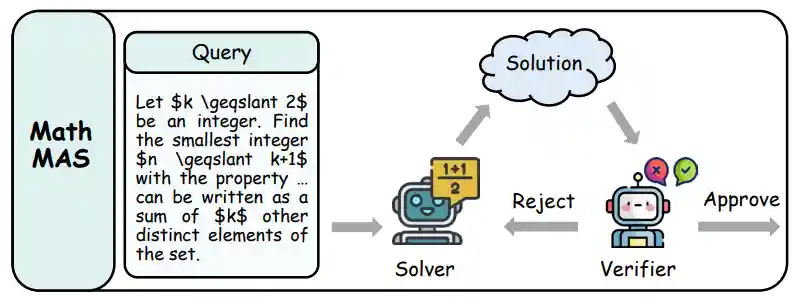

Math (iterative refinement): a 2-agent loop in which the Solver proposes step-by-step solutions and the Verifier checks them; the loop repeats until the Verifier approves a solution or the maximum number of loops is reached. See agent_system/agent/orchestra/math/README.md.
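A minimal sketch of the Solver/Verifier loop, assuming both agents are plain functions and that the Verifier's feedback is passed back to the Solver on the next attempt. The `Review` type, the function signatures, and the fall-back to the last attempt when no solution is approved are assumptions for illustration, not the orchestra's actual behavior.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple


@dataclass
class Review:
    approved: bool
    feedback: str = ""


Solver = Callable[[str, Optional[str]], str]   # (problem, feedback) -> solution
Verifier = Callable[[str, str], Review]        # (problem, solution) -> review


def solve_with_verification(
    problem: str, solver: Solver, verifier: Verifier, max_loops: int = 3
) -> Tuple[str, List[str]]:
    """Solver proposes, Verifier checks; repeat until approval or max_loops."""
    if max_loops < 1:
        raise ValueError("max_loops must be >= 1")
    feedback: Optional[str] = None
    attempts: List[str] = []
    for _ in range(max_loops):
        solution = solver(problem, feedback)
        attempts.append(solution)
        review = verifier(problem, solution)
        if review.approved:
            return solution, attempts
        feedback = review.feedback  # pass the critique into the next attempt
    # No approval within the budget: fall back to the last attempt (an assumption).
    return attempts[-1], attempts
```

Returning every attempt alongside the final solution makes the whole multi-turn trajectory available, which is the kind of record an RL trainer needs to assign rewards to both agents.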

bash examples/drmas_trainer/run_math.sh

After training completes, evaluate the multi-agent system on the full test dataset:

bash examples/drmas_trainer/run_math.sh eval

Compute Requirements

1. Search Task

| Strategy | Model Configuration | Resources | Est. Time |
|---|---|---|---|
| LLM Sharing | 1 $\times$ Qwen2.5-3B | 4 $\times$ H100 | ~12h |
| LLM Non-Sharing | 3 $\times$ Qwen2.5-3B | 4 $\times$ H100 | ~13h |
| LLM Sharing | 1 $\times$ Qwen2.5-7B | 8 $\times$ H100 | ~25h |
| LLM Non-Sharing | 3 $\times$ Qwen2.5-7B | 8 $\times$ H100 | ~26h |
| Heterogeneous | 2 $\times$ Llama-3.2-3B + 1 $\times$ Qwen2.5-7B | 8 $\times$ H100 | ~16h |

2. Math Task

| Strategy | Model Configuration | Resources | Est. Time |
|---|---|---|---|
| LLM Sharing | 1 $\times$ Qwen3-4B | 4 $\times$ H100 | ~35h |
| LLM Non-Sharing | 2 $\times$ Qwen3-4B | 4 $\times$ H100 | ~38h |
| LLM Sharing | 1 $\times$ Qwen3-8B | 8 $\times$ H100 | ~37h |
| LLM Non-Sharing | 2 $\times$ Qwen3-8B | 8 $\times$ H100 | ~42h |
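Assuming the estimated times are wall-clock hours on the listed number of GPUs, the total compute for a run is roughly GPUs × hours; for example, LLM sharing with Qwen2.5-3B on Search comes to about 4 × 12 = 48 H100-hours. The small helper below tabulates this from the two tables above for budgeting purposes. The figures inherit the approximation of the estimates, and the helper name is illustrative.

```python
from typing import List, Tuple

# (task, strategy, model configuration, number of H100s, estimated hours),
# copied from the two tables above.
RUNS: List[Tuple[str, str, str, int, float]] = [
    ("Search", "LLM Sharing", "1 x Qwen2.5-3B", 4, 12),
    ("Search", "LLM Non-Sharing", "3 x Qwen2.5-3B", 4, 13),
    ("Search", "LLM Sharing", "1 x Qwen2.5-7B", 8, 25),
    ("Search", "LLM Non-Sharing", "3 x Qwen2.5-7B", 8, 26),
    ("Search", "Heterogeneous", "2 x Llama-3.2-3B + 1 x Qwen2.5-7B", 8, 16),
    ("Math", "LLM Sharing", "1 x Qwen3-4B", 4, 35),
    ("Math", "LLM Non-Sharing", "2 x Qwen3-4B", 4, 38),
    ("Math", "LLM Sharing", "1 x Qwen3-8B", 8, 37),
    ("Math", "LLM Non-Sharing", "2 x Qwen3-8B", 8, 42),
]


def gpu_hours(num_gpus: int, hours: float) -> float:
    """Total accelerator time = number of GPUs x wall-clock hours."""
    return num_gpus * hours


if __name__ == "__main__":
    for task, strategy, models, gpus, hours in RUNS:
        print(f"{task:6} {strategy:15} {models:34} ~{gpu_hours(gpus, hours):.0f} H100-hours")
```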

Multi-Agent Development Guide

For a comprehensive guide on developing custom multi-agent LLM systems, including detailed examples and best practices, see the Multi-Agent Development Guide.

The guide covers:

- Architecture overview and core components

- Step-by-step agent creation and registration

- Orchestra development patterns

- Configuration options and per-agent parameter overrides
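One simple way to picture per-agent parameter overrides is as a recursive merge of each agent's overrides onto shared defaults, with the override winning on conflicts. The sketch below assumes that behavior. The helper names and config keys (`optim.lr`, `micro_batch_size`) are hypothetical and do not reflect Dr.MAS's actual configuration schema, which the guide documents.

```python
import copy
from typing import Any, Dict


def merge_overrides(base: Dict[str, Any], override: Dict[str, Any]) -> Dict[str, Any]:
    """Recursively merge `override` into a copy of `base`; override values win."""
    merged = copy.deepcopy(base)
    for key, value in override.items():
        if isinstance(value, dict) and isinstance(merged.get(key), dict):
            merged[key] = merge_overrides(merged[key], value)  # merge nested sections
        else:
            merged[key] = copy.deepcopy(value)  # leaf value: replace outright
    return merged


def resolve_agent_configs(
    base: Dict[str, Any], per_agent: Dict[str, Dict[str, Any]]
) -> Dict[str, Dict[str, Any]]:
    """Build one effective config per agent (hypothetical helper)."""
    return {agent: merge_overrides(base, ov) for agent, ov in per_agent.items()}


if __name__ == "__main__":
    defaults = {"optim": {"lr": 1e-6, "weight_decay": 0.01}, "micro_batch_size": 8}
    configs = resolve_agent_configs(
        defaults,
        {"solver": {"optim": {"lr": 2e-6}}, "verifier": {"micro_batch_size": 4}},
    )
    print(configs["solver"])    # lr overridden, other values inherited
    print(configs["verifier"])  # micro_batch_size overridden
```

Deep-copying keeps the shared defaults untouched, so one agent's override can never leak into another agent's effective configuration.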

Acknowledgement

This codebase is built upon verl-agent and verl. The Search environment is adapted from Search-R1 and SkyRL-Gym. The Math environment is adapted from DeepScaleR and DAPO.

We extend our gratitude to the authors and contributors of these projects for their valuable work.