dalex: Responsible Machine Learning in Python

Overview

An unverified black box model is the path to failure. Opaqueness leads to distrust. Distrust leads to ignoration. Ignoration leads to rejection.

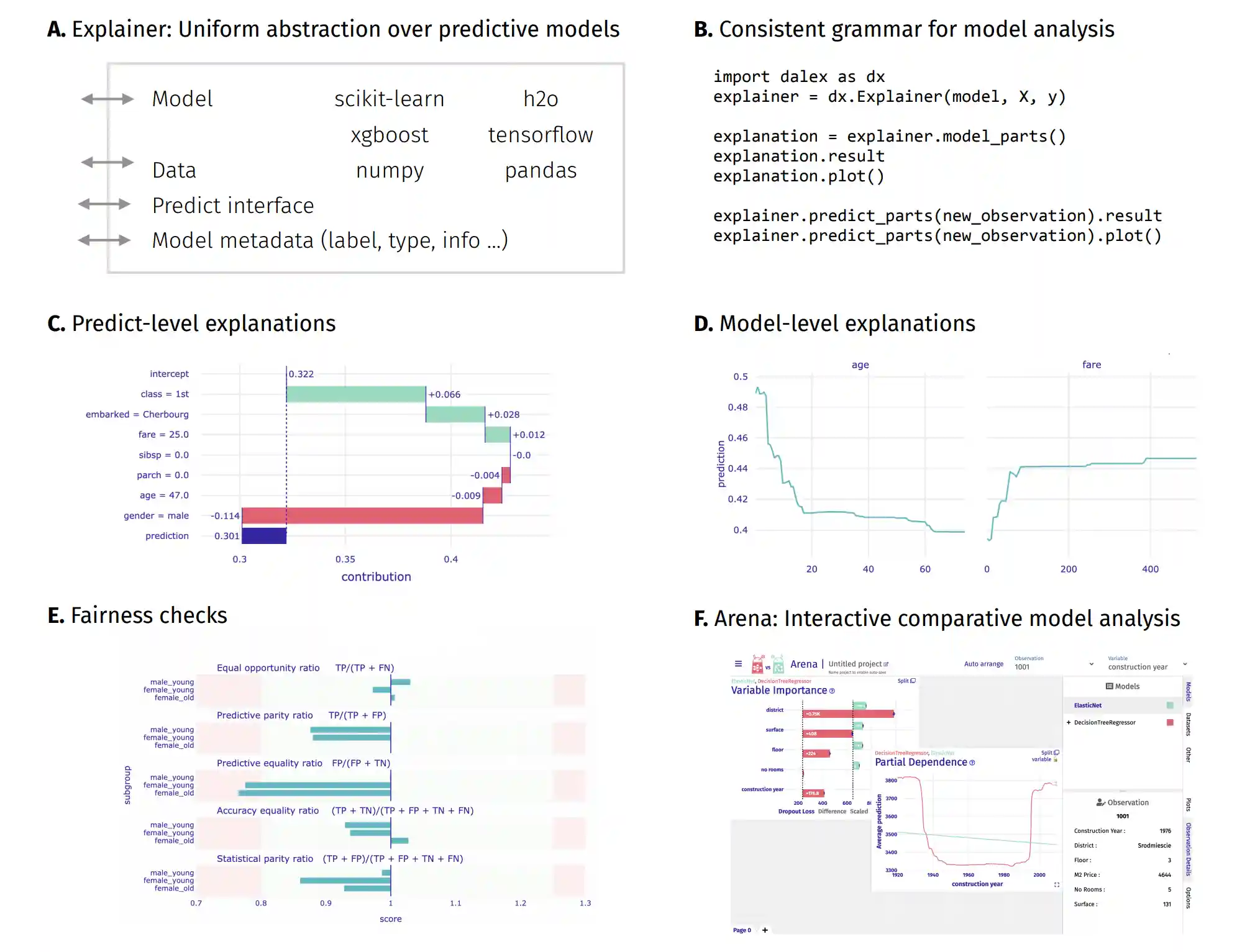

The dalex package x-rays any model, helping you explore and explain its behaviour and understand how complex models work.

The main Explainer object creates a wrapper around a predictive model. Wrapped models may then be explored and compared with a collection of model-level and predict-level explanations. Moreover, there are fairness methods and interactive exploration dashboards available to the user.
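To make the wrapper idea concrete, here is a minimal plain-Python sketch of the pattern described above. It is not dalex code and does not use the dalex API: the class `SimpleExplainer`, its methods, the toy `linear_model`, and the data are all hypothetical names invented for illustration. It assumes a model is just a function from rows to predictions, uses permutation importance as the model-level explanation, and uses a replace-by-mean difference as the predict-level explanation; dalex's own explanations are more elaborate.

```python
import random
from statistics import mean


class SimpleExplainer:
    """Hypothetical stand-in for a model wrapper; NOT the dalex Explainer."""

    def __init__(self, predict, data, y, label="model"):
        self.predict = predict                   # callable: list of rows -> list of predictions
        self.data = [list(row) for row in data]  # feature rows used for explanations
        self.y = list(y)                         # true target values
        self.label = label

    def _rmse(self, rows):
        preds = self.predict(rows)
        return mean((p - t) ** 2 for p, t in zip(preds, self.y)) ** 0.5

    def model_level_importance(self, seed=0):
        """Model-level: increase in RMSE after shuffling one column at a time."""
        rng = random.Random(seed)
        baseline = self._rmse(self.data)
        importance = {}
        for j in range(len(self.data[0])):
            column = [row[j] for row in self.data]
            rng.shuffle(column)
            permuted = [row[:j] + [v] + row[j + 1:]
                        for row, v in zip(self.data, column)]
            importance[j] = self._rmse(permuted) - baseline
        return importance

    def predict_level_contributions(self, observation):
        """Predict-level: change in one prediction when a feature is set to its mean."""
        full = self.predict([list(observation)])[0]
        contributions = {}
        for j in range(len(observation)):
            altered = list(observation)
            altered[j] = mean(row[j] for row in self.data)
            contributions[j] = full - self.predict([altered])[0]
        return contributions


# Toy model (an assumption for this sketch): depends on column 0 only.
def linear_model(rows):
    return [3.0 * row[0] for row in rows]


data = [[float(i), float((i * 7) % 5)] for i in range(20)]
y = [3.0 * row[0] for row in data]

explainer = SimpleExplainer(linear_model, data, y, label="toy linear model")
importance = explainer.model_level_importance()
contributions = explainer.predict_level_contributions([10.0, 2.0])

print(importance)     # column 1 is ignored by the model, so its importance is 0.0
print(contributions)  # {0: 1.5, 1: 0.0}
```

Because the wrapper only needs a prediction function, data, and targets, the same two questions (which variables matter overall, and which matter for this one prediction) can be asked of any model, which is what makes side-by-side comparison of wrapped models possible.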

The philosophy behind dalex explanations is described in the Explanatory Model Analysis book.

Installation

The dalex package is available on PyPI and conda-forge.

pip install dalex -U

conda install -c conda-forge dalex

One can install optional dependencies for all additional features using pip install dalex[full].

Resources: https://dalex.drwhy.ai/python

API reference: https://dalex.drwhy.ai/python/api

Authors

The authors of the dalex package are:

- Hubert Baniecki

- Wojciech Kretowicz

- Piotr Piatyszek maintains the arena module

- Jakub Wisniewski maintains the fairness module

- Mateusz Krzyzinski maintains the aspect module

- Artur Zolkowski maintains the aspect module

- Przemyslaw Biecek

We welcome contributions: start by opening an issue on GitHub.

Citation

If you use dalex, please cite our JMLR paper:

@article{JMLR:v22:20-1473,
  author  = {Hubert Baniecki and Wojciech Kretowicz and Piotr Piatyszek and Jakub Wisniewski and Przemyslaw Biecek},
  title   = {dalex: Responsible Machine Learning with Interactive Explainability and Fairness in Python},
  journal = {Journal of Machine Learning Research},
  year    = {2021},
  volume  = {22},
  number  = {214},
  pages   = {1-7},
  url     = {http://jmlr.org/papers/v22/20-1473.html}
}