GraspNeRF: Multiview-based 6-DoF Grasp Detection for Transparent and Specular Objects Using Generalizable NeRF (ICRA 2023)

This is the official repository of GraspNeRF: Multiview-based 6-DoF Grasp Detection for Transparent and Specular Objects Using Generalizable NeRF.

For more information, please visit our project page.

Introduction

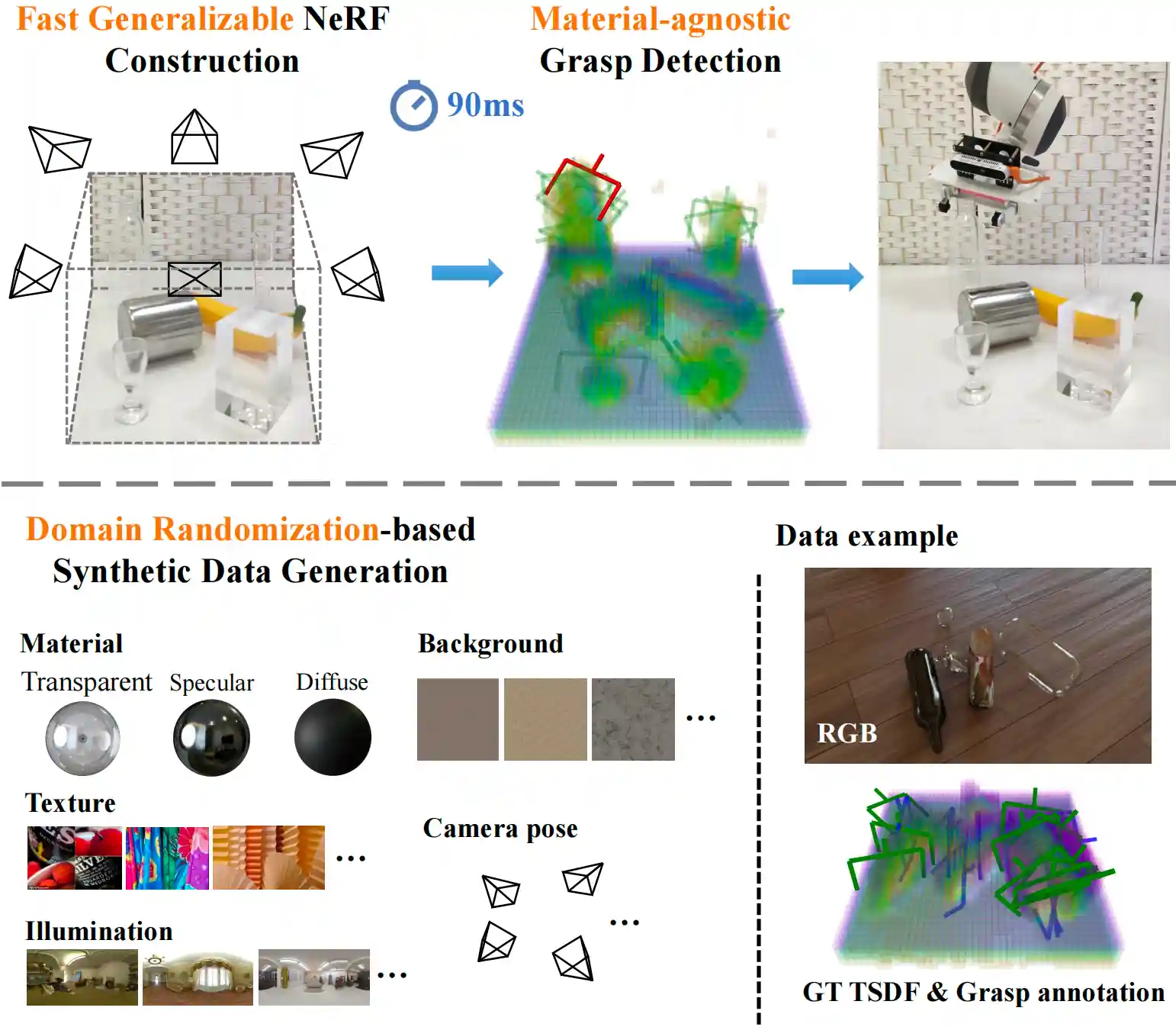

In this work, we propose a multiview RGB-based 6-DoF grasp detection network, GraspNeRF, that leverages a generalizable neural radiance field (NeRF) to achieve material-agnostic object grasping in clutter. Compared to existing NeRF-based 3-DoF grasp detection methods, which rely on densely captured input images and time-consuming per-scene optimization, our system can perform zero-shot NeRF construction from sparse RGB inputs and reliably detect 6-DoF grasps, both in real time. The proposed framework jointly learns the generalizable NeRF and grasp detection in an end-to-end manner, optimizing the scene representation construction for grasping. For training data, we generate a large-scale, photorealistic, domain-randomized synthetic dataset of grasping in cluttered tabletop scenes that enables direct transfer to the real world. Experiments in synthetic and real-world environments demonstrate that our method significantly outperforms all the baselines.

Overview

This repository provides:

- PyTorch code and weights of GraspNeRF.

- Grasp simulator based on Blender and PyBullet.

- Multiview 6-DoF Grasping Dataset Generator and Examples.

Dependency

- Please run `pip install -r requirements.txt` to install the dependencies.

- (Optional) Please install Blender 2.93.3--Ubuntu if you need simulation.

Data & Checkpoints

- Please generate or download and uncompress the example data to `data/` for training, and the rendering assets to `data/assets` for simulation. Specifically, download the ImageNet validation set to `data/assets/imagenet/images/val`, which is used for random textures in simulation.

- We provide pretrained weights for testing. Please download the checkpoint to `src/nr/ckpt/test`.
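Before testing or training, it can help to confirm that the directories named above exist and are populated. The following is a minimal sketch, not part of the repository: the helper `missing_dirs` and the constant `EXPECTED_DIRS` are hypothetical names, and the check assumes it is run with the repository root as `repo_root`.

```python
from pathlib import Path

# Paths taken from the instructions above, relative to the repository root.
EXPECTED_DIRS = (
    "data",
    "data/assets",
    "data/assets/imagenet/images/val",
    "src/nr/ckpt/test",
)


def missing_dirs(repo_root=".", expected=EXPECTED_DIRS):
    """Return the expected directories that are absent or empty (hypothetical helper)."""
    root = Path(repo_root)
    missing = []
    for rel in expected:
        path = root / rel
        # A directory counts as missing if it does not exist or has no entries.
        if not path.is_dir() or not any(path.iterdir()):
            missing.append(rel)
    return missing


if __name__ == "__main__":
    for rel in missing_dirs():
        print(f"missing or empty: {rel}")
```

The check only looks at whether each directory has any entries; it does not validate the contents of the data, assets, or checkpoint.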

Testing

Our grasp simulation pipeline depends on Blender and PyBullet. Please verify the installation before running the simulation.
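One way to verify the installation is sketched below. It is not part of the repository: the function name `check_simulation_dependencies` is hypothetical, and the sketch assumes that Blender is reachable on `PATH` as `blender`, which may not match how the simulation scripts locate it.

```python
import importlib.util
import shutil


def check_simulation_dependencies(modules=("torch", "pybullet"),
                                  executables=("blender",)):
    """Return a dict mapping each dependency name to True if it was found."""
    status = {}
    for name in modules:
        # find_spec returns None when a top-level module cannot be imported.
        status[name] = importlib.util.find_spec(name) is not None
    for exe in executables:
        # shutil.which returns None when the executable is not on PATH.
        # Assumption: Blender is installed as `blender` on PATH.
        status[exe] = shutil.which(exe) is not None
    return status


if __name__ == "__main__":
    for name, found in check_simulation_dependencies().items():
        print(f"{name}: {'found' if found else 'MISSING'}")
```

This only confirms that the packages and the executable can be located; it does not check that the installed Blender version is 2.93.3.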

After the dependencies and assets are ready, please run

Training

After the training data is ready, please run

e.g. `bash train.sh 0`.

Data Generator

in ./data_generator for pile data generation.

Citation

If you find our work useful in your research, please consider citing:

@article{Dai2023GraspNeRF,
  title={GraspNeRF: Multiview-based 6-DoF Grasp Detection for Transparent and Specular Objects Using Generalizable NeRF},
  author={Qiyu Dai and Yan Zhu and Yiran Geng and Ciyu Ruan and Jiazhao Zhang and He Wang},
  booktitle={IEEE International Conference on Robotics and Automation (ICRA)},
  year={2023}
}

License

This work and the dataset are licensed under CC BY-NC 4.0.

Contact

If you have any questions, please open a GitHub issue or contact us:

Qiyu Dai: qiyudai@pku.edu.cn, Yan Zhu: zhuyan_@stu.pku.edu.cn, He Wang: hewang@pku.edu.cn