Observability and DevTool platform for AI Agents


AgentOps helps developers build, evaluate, and monitor AI agents, from prototype to production.

Key Integrations 🔌

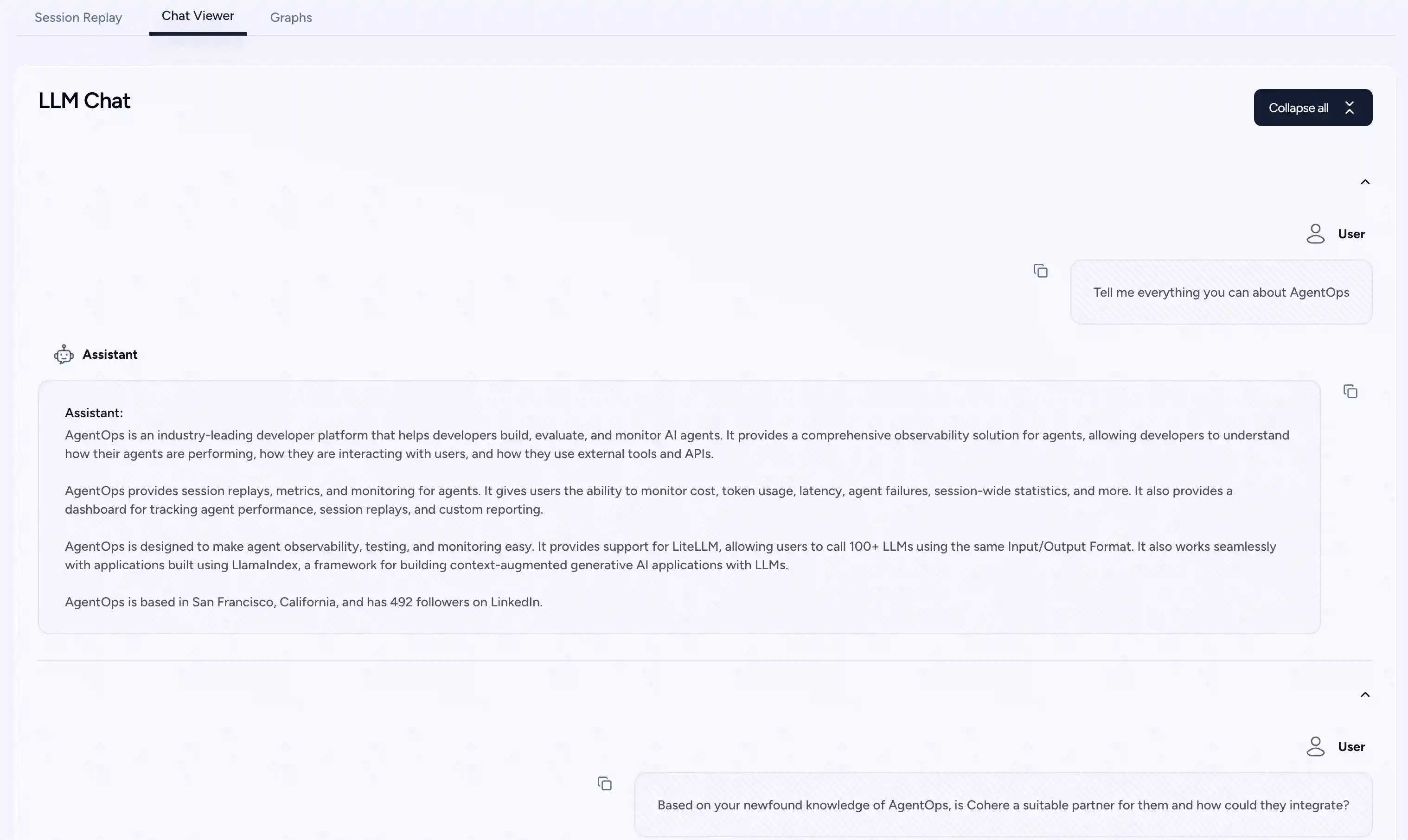

| | |
|---|---|
| 📊 Replay Analytics and Debugging | Step-by-step agent execution graphs |
| 💸 LLM Cost Management | Track spend with LLM foundation model providers |
| 🧪 Agent Benchmarking | Test your agents against 1,000+ evals |
| 🔐 Compliance and Security | Detect common prompt injection and data exfiltration exploits |
| 🤝 Framework Integrations | Native Integrations with CrewAI, AG2 (AutoGen), Camel AI, & LangChain |

Quick Start ⌨️

Session replays in 2 lines of code

Initialize the AgentOps client and automatically get analytics on all your LLM calls.

    import agentops

    # Beginning of your program (i.e. main.py, __init__.py)
    agentops.init(<INSERT YOUR API KEY HERE>)

    ...

    # End of program
    agentops.end_session('Success')

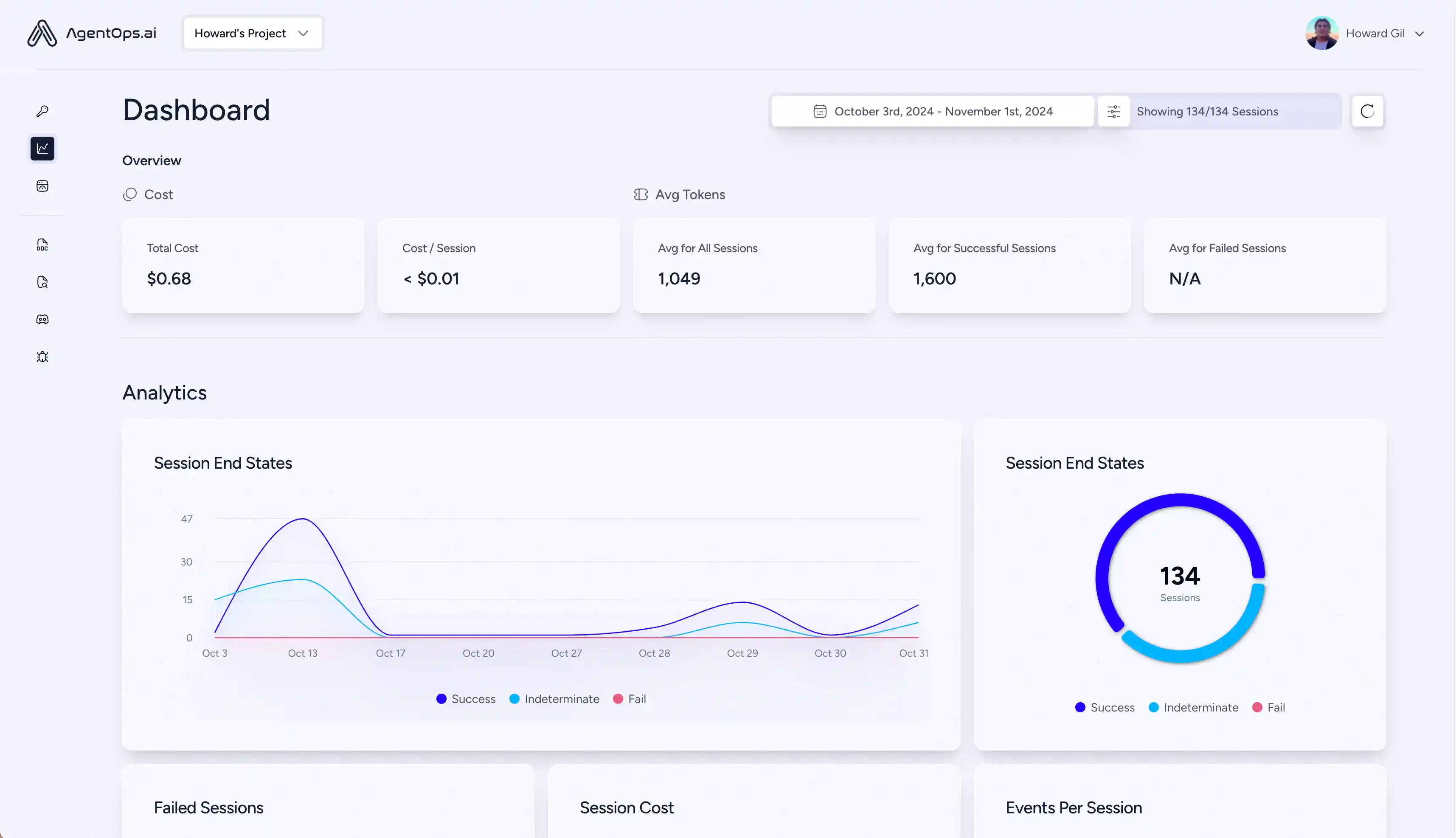

All your sessions can be viewed on the AgentOps dashboard
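If the program raises before reaching the final line, the session is never closed with an end state. A minimal sketch of one way to guard against that, assuming `end_session` accepts an end-state string as in the snippet above; the helper name `session_guard` is hypothetical and the `"Fail"` state is an assumption, not something stated in this README:

```python
from contextlib import contextmanager


@contextmanager
def session_guard(end_session):
    """Report "Success" on normal exit and "Fail" when the body raises.

    `end_session` is any callable taking an end-state string, for example
    agentops.end_session. The "Fail" value is an assumption.
    """
    try:
        yield
    except Exception:
        end_session("Fail")
        raise
    else:
        end_session("Success")


# Demonstration with a plain list standing in for the real client.
states = []
with session_guard(states.append):
    pass  # your agent code would run here
print(states)
```

In a real program the call would look like `with session_guard(agentops.end_session): ...` placed after `agentops.init(...)`.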

First class Developer Experience

Add powerful observability to your agents, tools, and functions with as little code as possible: one line at a time.

Refer to our documentation

    # Create a session span (root for all other spans)
    from agentops.sdk.decorators import session

    @session
    def my_workflow():
        # Your session code here
        return result

    # Create an agent span for tracking agent operations
    from agentops.sdk.decorators import agent

    @agent
    class MyAgent:
        def __init__(self, name):
            self.name = name

        # Agent methods here

    # Create operation/task spans for tracking specific operations
    from agentops.sdk.decorators import operation, task

    @operation  # or @task
    def process_data(data):
        # Process the data
        return result

    # Create workflow spans for tracking multi-operation workflows
    from agentops.sdk.decorators import workflow

    @workflow
    def my_workflow(data):
        # Workflow implementation
        return result

    # Nest decorators for proper span hierarchy
    from agentops.sdk.decorators import session, agent, operation

    @agent
    class MyAgent:
        @operation
        def nested_operation(self, message):
            return f"Processed: {message}"

        @operation
        def main_operation(self):
            result = self.nested_operation("test message")
            return result

    @session
    def my_session():
        agent = MyAgent()
        return agent.main_operation()

All decorators support:

- Input/Output Recording

- Exception Handling

- Async/await functions

- Generator functions

- Custom attributes and names
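To make the list above concrete, here is a standard-library sketch of how a single decorator can record inputs and outputs, capture exceptions, accept a custom name, and work for both sync and async functions. It is not the AgentOps implementation: `traced`, `RECORDED`, and the record layout are hypothetical names chosen for illustration, and generator support is left out to keep it short.

```python
import asyncio
import functools
import inspect

RECORDED = []  # in-memory stand-in for a span exporter (hypothetical)


def traced(name=None):
    """Hypothetical span decorator: records args, result, and errors."""
    def decorate(func):
        span_name = name or func.__name__

        def record(args, kwargs, output=None, error=None):
            RECORDED.append({
                "name": span_name,
                "args": args,
                "kwargs": kwargs,
                "output": output,
                "error": repr(error) if error is not None else None,
            })

        if inspect.iscoroutinefunction(func):
            @functools.wraps(func)
            async def async_wrapper(*args, **kwargs):
                try:
                    result = await func(*args, **kwargs)
                except Exception as exc:
                    record(args, kwargs, error=exc)
                    raise  # the error is recorded, never swallowed
                record(args, kwargs, output=result)
                return result
            return async_wrapper

        @functools.wraps(func)
        def sync_wrapper(*args, **kwargs):
            try:
                result = func(*args, **kwargs)
            except Exception as exc:
                record(args, kwargs, error=exc)
                raise
            record(args, kwargs, output=result)
            return result
        return sync_wrapper
    return decorate


@traced(name="double")  # custom span name
def double(x):
    return 2 * x


@traced()
async def fetch(x):
    await asyncio.sleep(0)
    return x + 1


@traced()
def fail():
    raise ValueError("boom")
```

Calling `double(3)`, `asyncio.run(fetch(1))`, and `fail()` appends one record per call to `RECORDED`, with the exception preserved for the failing call.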

Integrations 🦾

OpenAI Agents SDK 🖇️

Build multi-agent systems with tools, handoffs, and guardrails. AgentOps natively integrates with OpenAI Agents.

pip install openai-agents

CrewAI 🛶

Build Crew agents with observability in just 2 lines of code. Simply set an AGENTOPS_API_KEY in your environment, and your crews will get automatic monitoring on the AgentOps dashboard.

pip install 'crewai[agentops]'

AG2 🤖

With only two lines of code, add full observability and monitoring to AG2 (formerly AutoGen) agents. Set an AGENTOPS_API_KEY in your environment and call agentops.init()
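Since several integrations rely on `AGENTOPS_API_KEY` being present in the environment, it can help to fail early with a clear message when it is missing. A minimal sketch using only the standard library; the helper name `load_agentops_key` is hypothetical:

```python
import os


def load_agentops_key(env=None):
    """Return the AgentOps API key from the environment or raise a clear error."""
    env = os.environ if env is None else env
    key = env.get("AGENTOPS_API_KEY", "").strip()
    if not key:
        raise RuntimeError(
            "AGENTOPS_API_KEY is not set; export it before starting your agents."
        )
    return key
```

The returned value can then be passed to `agentops.init(...)`.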

Camel AI 🐪

Track and analyze CAMEL agents with full observability. Set an AGENTOPS_API_KEY in your environment and initialize AgentOps to get started.

- Camel AI - Advanced agent communication framework

- AgentOps integration example

- Official Camel AI documentation

Installation

pip install "camel-ai[all]==0.2.11"

pip install agentops

    import os
    import agentops
    from camel.agents import ChatAgent
    from camel.messages import BaseMessage
    from camel.models import ModelFactory
    from camel.types import ModelPlatformType, ModelType

    # Initialize AgentOps
    agentops.init(os.getenv("AGENTOPS_API_KEY"), tags=["CAMEL Example"])

    # Import toolkits after AgentOps init for tracking
    from camel.toolkits import SearchToolkit

    # Set up the agent with search tools
    sys_msg = BaseMessage.make_assistant_message(
        role_name='Tools calling operator',
        content='You are a helpful assistant'
    )

    # Configure tools and model
    tools = [*SearchToolkit().get_tools()]
    model = ModelFactory.create(
        model_platform=ModelPlatformType.OPENAI,
        model_type=ModelType.GPT_4O_MINI,
    )

    # Create and run the agent
    camel_agent = ChatAgent(
        system_message=sys_msg,
        model=model,
        tools=tools,
    )

    response = camel_agent.step("What is AgentOps?")
    print(response)

    agentops.end_session("Success")

Check out our Camel integration guide for more examples including multi-agent scenarios.

Langchain 🦜🔗

AgentOps works seamlessly with applications built using Langchain. To use the handler, install Langchain as an optional dependency:

Installation

pip install agentops[langchain]

To use the handler, import it and pass it as a callback:

    import os
    from langchain.chat_models import ChatOpenAI
    from langchain.agents import initialize_agent, AgentType
    from agentops.integration.callbacks.langchain import LangchainCallbackHandler

    AGENTOPS_API_KEY = os.environ['AGENTOPS_API_KEY']
    OPENAI_API_KEY = os.environ['OPENAI_API_KEY']
    handler = LangchainCallbackHandler(api_key=AGENTOPS_API_KEY, tags=['Langchain Example'])

    llm = ChatOpenAI(openai_api_key=OPENAI_API_KEY,
                     callbacks=[handler],
                     model='gpt-3.5-turbo')

    # `tools` is assumed to be a list of Langchain tools defined elsewhere
    agent = initialize_agent(tools,
                             llm,
                             agent=AgentType.CHAT_ZERO_SHOT_REACT_DESCRIPTION,
                             verbose=True,
                             callbacks=[handler],  # You must pass in a callback handler to record your agent
                             handle_parsing_errors=True)

Check out the Langchain Examples Notebook for more details including Async handlers.

Cohere ⌨️

First-class support for Cohere (>=5.4.0). This is a living integration; should you need any added functionality, please message us on Discord!

Installation

    import cohere
    import agentops

    # Beginning of program's code (i.e. main.py, __init__.py)
    agentops.init(<INSERT YOUR API KEY HERE>)
    co = cohere.Client()

    chat = co.chat(
        message="Is it pronounced ceaux-hear or co-hehray?"
    )

    print(chat)

    agentops.end_session('Success')

    import cohere
    import agentops

    # Beginning of program's code (i.e. main.py, __init__.py)
    agentops.init(<INSERT YOUR API KEY HERE>)

    co = cohere.Client()

    stream = co.chat_stream(
        message="Write me a haiku about the synergies between Cohere and AgentOps"
    )

    for event in stream:
        if event.event_type == "text-generation":
            print(event.text, end='')

    agentops.end_session('Success')

Anthropic ﹨

Track agents built with the Anthropic Python SDK (>=0.32.0).

Installation

    import os
    import anthropic
    import agentops

    # Beginning of program's code (i.e. main.py, __init__.py)
    agentops.init(<INSERT YOUR API KEY HERE>)

    client = anthropic.Anthropic(
        # This is the default and can be omitted
        api_key=os.environ.get("ANTHROPIC_API_KEY"),
    )

    message = client.messages.create(
        max_tokens=1024,
        messages=[
            {
                "role": "user",
                "content": "Tell me a cool fact about AgentOps",
            }
        ],
        model="claude-3-opus-20240229",
    )
    print(message.content)

    agentops.end_session('Success')

Streaming

    import os
    import anthropic
    import agentops

    # Beginning of program's code (i.e. main.py, __init__.py)
    agentops.init(<INSERT YOUR API KEY HERE>)

    client = anthropic.Anthropic(
        # This is the default and can be omitted
        api_key=os.environ.get("ANTHROPIC_API_KEY"),
    )

    stream = client.messages.create(
        max_tokens=1024,
        model="claude-3-opus-20240229",
        messages=[
            {
                "role": "user",
                "content": "Tell me something cool about streaming agents",
            }
        ],
        stream=True,
    )

    # Accumulate the text deltas and print the full response at the end
    response = ""
    for event in stream:
        if event.type == "content_block_delta":
            response += event.delta.text
        elif event.type == "message_stop":
            print("\n")
            print(response)
            print("\n")

Async

    import asyncio
    import os
    from anthropic import AsyncAnthropic

    client = AsyncAnthropic(
        # This is the default and can be omitted
        api_key=os.environ.get("ANTHROPIC_API_KEY"),
    )


    async def main() -> None:
        message = await client.messages.create(
            max_tokens=1024,
            messages=[
                {
                    "role": "user",
                    "content": "Tell me something interesting about async agents",
                }
            ],
            model="claude-3-opus-20240229",
        )
        print(message.content)


    # Use `await main()` instead when running inside a notebook
    asyncio.run(main())

Mistral 〽️

Track agents built with the Mistral Python SDK (>=0.32.0).

Installation

Sync

    import os
    from mistralai import Mistral
    import agentops

    # Beginning of program's code (i.e. main.py, __init__.py)
    agentops.init(<INSERT YOUR API KEY HERE>)

    client = Mistral(
        # This is the default and can be omitted
        api_key=os.environ.get("MISTRAL_API_KEY"),
    )

    message = client.chat.complete(
        messages=[
            {
                "role": "user",
                "content": "Tell me a cool fact about AgentOps",
            }
        ],
        model="open-mistral-nemo",
    )
    print(message.choices[0].message.content)

    agentops.end_session('Success')

Streaming

    import os
    from mistralai import Mistral
    import agentops

    # Beginning of program's code (i.e. main.py, __init__.py)
    agentops.init(<INSERT YOUR API KEY HERE>)

    client = Mistral(
        # This is the default and can be omitted
        api_key=os.environ.get("MISTRAL_API_KEY"),
    )

    message = client.chat.stream(
        messages=[
            {
                "role": "user",
                "content": "Tell me something cool about streaming agents",
            }
        ],
        model="open-mistral-nemo",
    )

    # Accumulate streamed chunks and print the full response at the end
    response = ""
    for event in message:
        choice = event.data.choices[0]
        if choice.finish_reason == "stop":
            print("\n")
            print(response)
            print("\n")
        else:
            # Each chunk carries its text in delta.content
            response += choice.delta.content or ""

    agentops.end_session('Success')

Async

    import asyncio
    import os
    from mistralai import Mistral

    client = Mistral(
        # This is the default and can be omitted
        api_key=os.environ.get("MISTRAL_API_KEY"),
    )


    async def main() -> None:
        message = await client.chat.complete_async(
            messages=[
                {
                    "role": "user",
                    "content": "Tell me something interesting about async agents",
                }
            ],
            model="open-mistral-nemo",
        )
        print(message.choices[0].message.content)


    # Use `await main()` instead when running inside a notebook
    asyncio.run(main())

Async Streaming

    import asyncio
    import os
    from mistralai import Mistral

    client = Mistral(
        # This is the default and can be omitted
        api_key=os.environ.get("MISTRAL_API_KEY"),
    )


    async def main() -> None:
        message = await client.chat.stream_async(
            messages=[
                {
                    "role": "user",
                    "content": "Tell me something interesting about async streaming agents",
                }
            ],
            model="open-mistral-nemo",
        )

        # Accumulate streamed chunks and print the full response at the end
        response = ""
        async for event in message:
            choice = event.data.choices[0]
            if choice.finish_reason == "stop":
                print("\n")
                print(response)
                print("\n")
            else:
                # Each chunk carries its text in delta.content
                response += choice.delta.content or ""


    # Use `await main()` instead when running inside a notebook
    asyncio.run(main())

CamelAI ﹨

Track agents built with the CamelAI Python SDK (>=0.32.0).

Installation

pip install camel-ai[all]

pip install agentops

    # Import Dependencies
    import agentops
    import os
    from getpass import getpass
    from dotenv import load_dotenv

    # Set Keys
    load_dotenv()
    openai_api_key = os.getenv("OPENAI_API_KEY") or "<your openai key here>"
    agentops_api_key = os.getenv("AGENTOPS_API_KEY") or "<your agentops key here>"

You can find usage examples here!

LiteLLM 🚅

AgentOps provides support for LiteLLM (>=1.3.1), allowing you to call 100+ LLMs using the same input/output format.

Installation

    # Do not use LiteLLM like this
    # from litellm import completion
    # ...
    # response = completion(model="claude-3", messages=messages)

    # Use LiteLLM like this
    import litellm
    ...
    response = litellm.completion(model="claude-3", messages=messages)
    # or
    response = await litellm.acompletion(model="claude-3", messages=messages)
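A likely reason for the import guidance above is that tracking tools often work by replacing a function on the module object, so a name bound earlier with `from module import func` keeps pointing at the untracked original. This README does not state the mechanism, so treat that as an assumption. The sketch below demonstrates the effect with a stand-in module; `fake_llm`, `instrument`, and `calls` are hypothetical names, and no LiteLLM or AgentOps code is involved.

```python
import types

# Stand-in for an LLM library module (hypothetical, not LiteLLM itself).
fake_llm = types.ModuleType("fake_llm")


def _original_completion(prompt):
    return f"reply to {prompt}"


fake_llm.completion = _original_completion

# Equivalent of `from fake_llm import completion`, done BEFORE instrumentation:
completion = fake_llm.completion

calls = []  # prompts seen by the tracking wrapper


def instrument(module):
    """Replace module.completion with a wrapper that records each prompt."""
    original = module.completion

    def tracked(prompt):
        calls.append(prompt)
        return original(prompt)

    module.completion = tracked


instrument(fake_llm)

completion("a")           # early-bound name: bypasses the wrapper
fake_llm.completion("b")  # attribute looked up at call time: tracked
print(calls)              # ['b']
```

Only the call made through the module attribute is recorded, which is why `litellm.completion(...)` is the safer form when instrumentation is involved.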

LlamaIndex 🦙

AgentOps works seamlessly with applications built using LlamaIndex, a framework for building context-augmented generative AI applications with LLMs.

Installation

pip install llama-index-instrumentation-agentops

To use the handler, import `set_global_handler` and set it to "agentops":

    from llama_index.core import set_global_handler

    # NOTE: Feel free to set your AgentOps environment variables (e.g., 'AGENTOPS_API_KEY')
    # as outlined in the AgentOps documentation, or pass the equivalent keyword arguments
    # anticipated by AgentOps' AOClient as **eval_params in set_global_handler.

    set_global_handler("agentops")

Check out the LlamaIndex docs for more details.

Llama Stack 🦙🥞

AgentOps provides support for Llama Stack Python Client (>=0.0.53), allowing you to monitor your agentic applications.

SwarmZero AI 🐝

Track and analyze SwarmZero agents with full observability. Set an AGENTOPS_API_KEY in your environment and initialize AgentOps to get started.

- SwarmZero - Advanced multi-agent framework

- AgentOps integration example

- SwarmZero AI integration example

- SwarmZero AI - AgentOps documentation

- Official SwarmZero Python SDK

Installation

pip install swarmzero

pip install agentops

    from dotenv import load_dotenv
    load_dotenv()

    import agentops
    agentops.init(<INSERT YOUR API KEY HERE>)

    from swarmzero import Agent, Swarm
    # ...

Evaluations Roadmap 🧭

| Platform | Dashboard | Evals |
|---|---|---|
| ✅ Python SDK | ✅ Multi-session and Cross-session metrics | ✅ Custom eval metrics |
| 🚧 Evaluation builder API | ✅ Custom event tag tracking | 🔜 Agent scorecards |
| ✅ Javascript/Typescript SDK | ✅ Session replays | 🔜 Evaluation playground + leaderboard |

Debugging Roadmap 🧭

| Performance testing | Environments | LLM Testing | Reasoning and execution testing |
|---|---|---|---|
| ✅ Event latency analysis | 🔜 Non-stationary environment testing | 🔜 LLM non-deterministic function detection | 🚧 Infinite loops and recursive thought detection |
| ✅ Agent workflow execution pricing | 🔜 Multi-modal environments | 🚧 Token limit overflow flags | 🔜 Faulty reasoning detection |
| 🚧 Success validators (external) | 🔜 Execution containers | 🔜 Context limit overflow flags | 🔜 Generative code validators |
| 🔜 Agent controllers/skill tests | ✅ Honeypot and prompt injection detection (PromptArmor) | ✅ API bill tracking | 🔜 Error breakpoint analysis |
| 🔜 Information context constraint testing | 🔜 Anti-agent roadblocks (i.e. Captchas) | 🔜 CI/CD integration checks | |
| 🔜 Regression testing | ✅ Multi-agent framework visualization | | |

Why AgentOps? 🤔

Without the right tools, AI agents are slow, expensive, and unreliable. Our mission is to bring your agent from prototype to production. Here's why AgentOps stands out:

- Comprehensive Observability: Track your AI agents' performance, user interactions, and API usage.

- Real-Time Monitoring: Get instant insights with session replays, metrics, and live monitoring tools.

- Cost Control: Monitor and manage your spend on LLM and API calls.

- Failure Detection: Quickly identify and respond to agent failures and multi-agent interaction issues.

- Tool Usage Statistics: Understand how your agents utilize external tools with detailed analytics.

- Session-Wide Metrics: Gain a holistic view of your agents' sessions with comprehensive statistics.

AgentOps is designed to make agent observability, testing, and monitoring easy.

Star History

Check out our growth in the community:

Popular projects using AgentOps

| Repository | Stars |
|---|---|
| | 42787 |
| | 34446 |
| | 18287 |
| | 5166 |
| | 5050 |
| | 4713 |
| | 2723 |
| | 2007 |
| | 272 |
| | 195 |
| | 134 |
| | 55 |
| | 47 |
| | 27 |
| | 19 |
| | 14 |
| | 13 |

Generated using github-dependents-info, by Nicolas Vuillamy