BT-Adapter: Video Conversation is Feasible Without Video Instruction Tuning

Paper | Weights | Video-text Pretraining | Downstream Evaluation | Instruction Tuning | VideoChatGPT Evaluation

Overview and Highlights

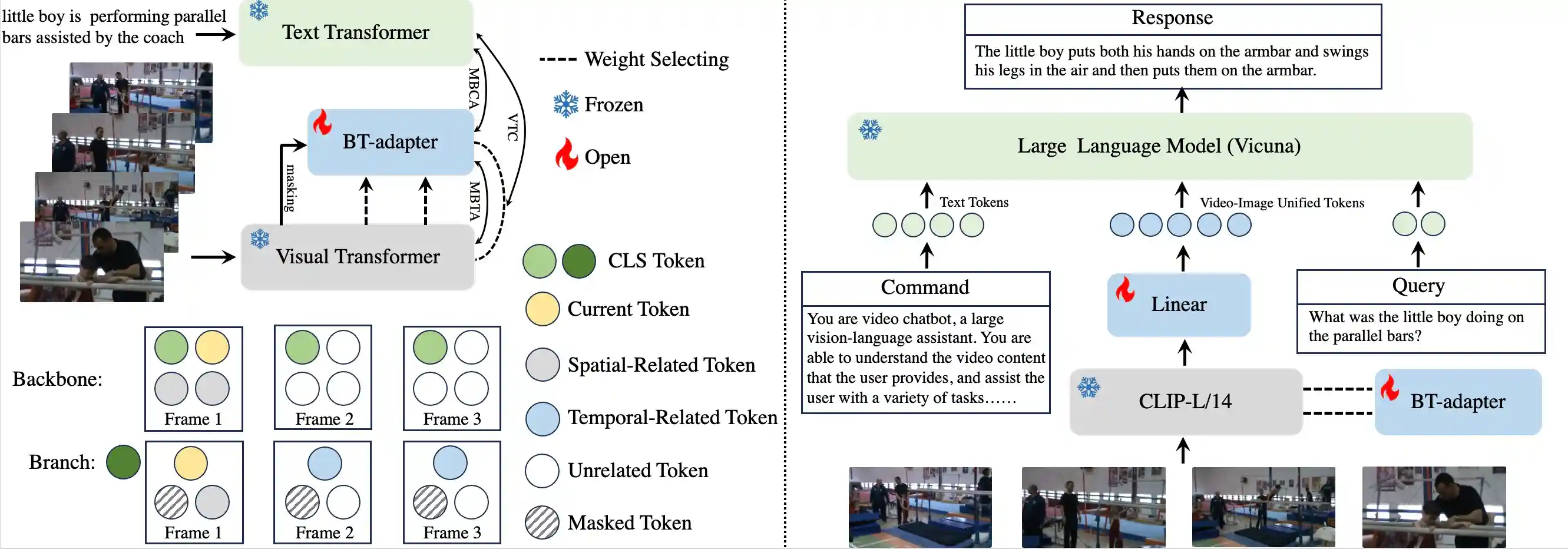

💡 Plug-and-play, parameter-efficient, multimodal-friendly, and temporally sensitive structure

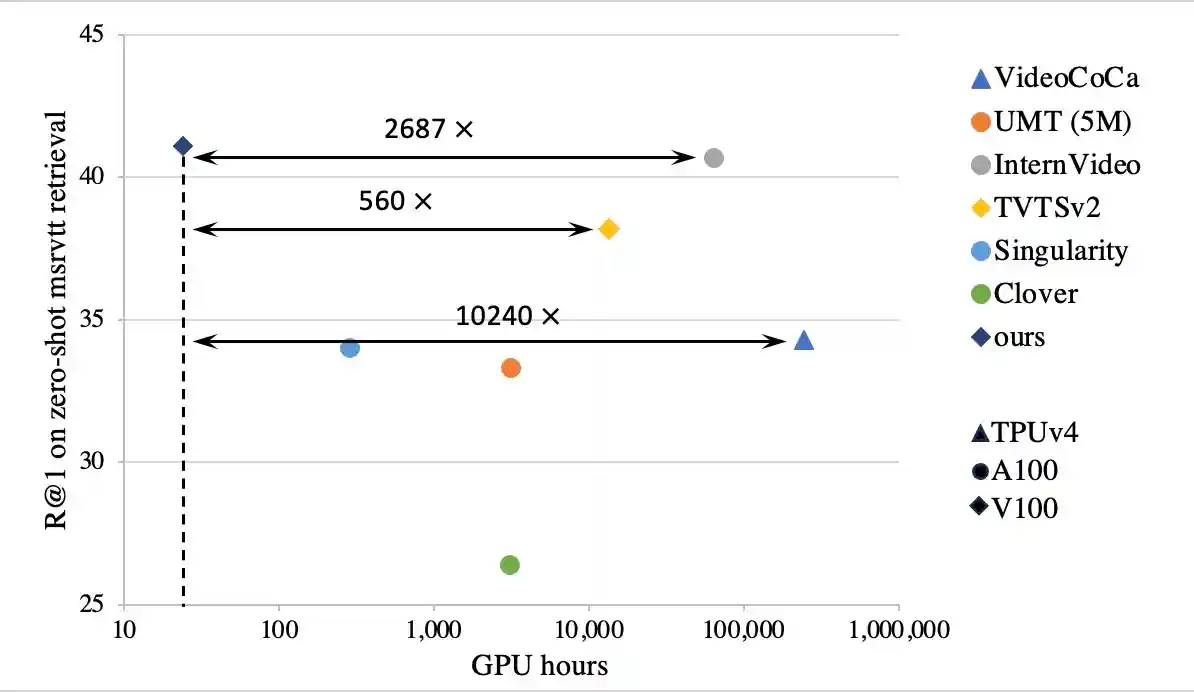

💡 State-of-the-art zero-shot results on various video tasks, using thousands fewer GPU hours

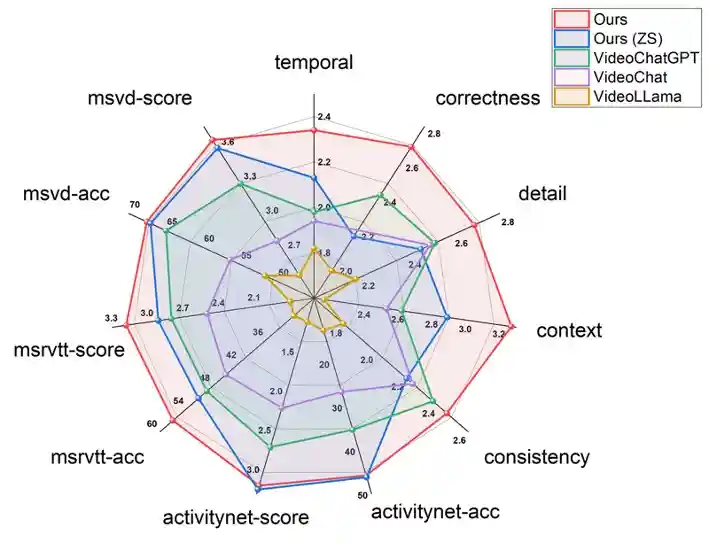

💡 State-of-the-art video conversation results with and without video instruction tuning

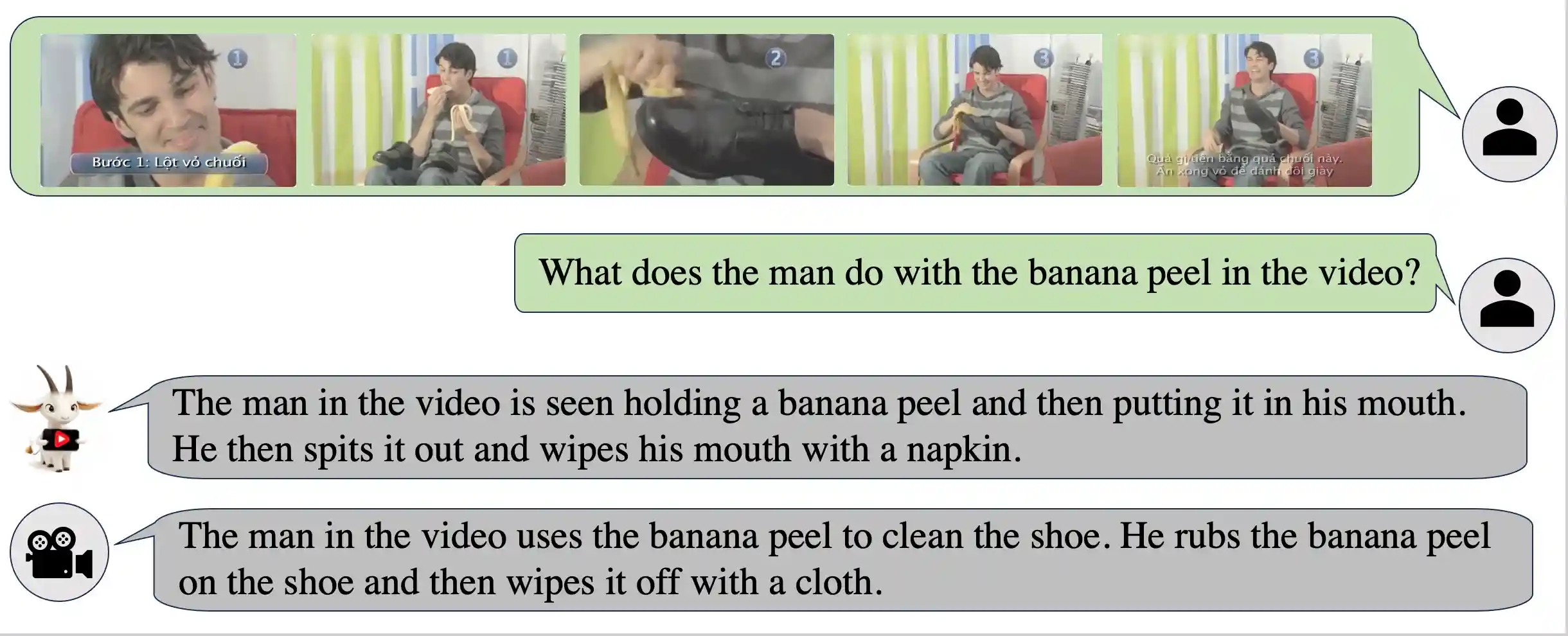

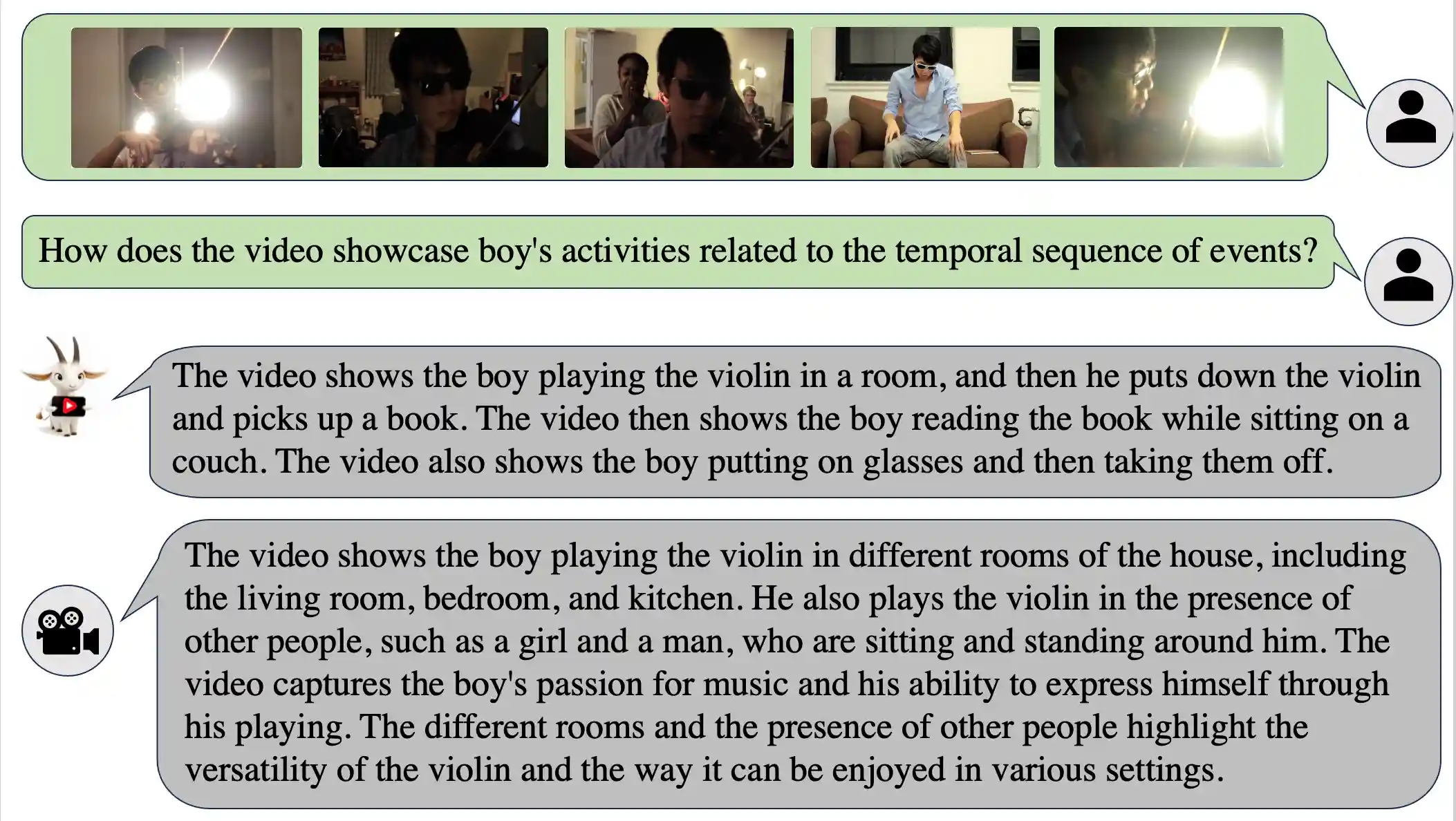

Qualitative Results

Evaluation of BT-Adapter's performance across different situations.

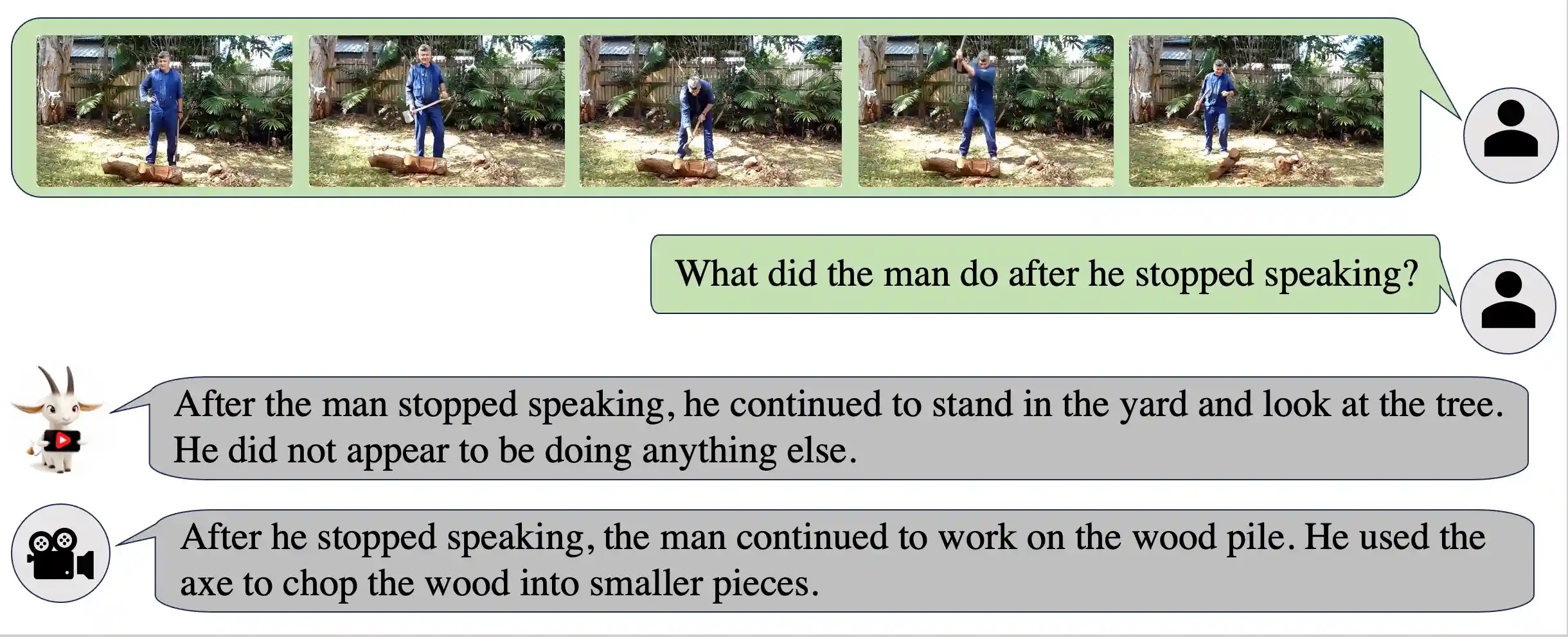

👀 The Sequence of Actions

👀 Unusual Actions

👀 Complex Actions and Scenes in a Long Video

Citation

If you find the code useful for your research, please consider citing our paper:

@article{liu2023one,
  title={One for all: Video conversation is feasible without video instruction tuning},
  author={Liu, Ruyang and Li, Chen and Ge, Yixiao and Shan, Ying and Li, Thomas H and Li, Ge},
  journal={arXiv preprint arXiv:2309.15785},
  year={2023}
}