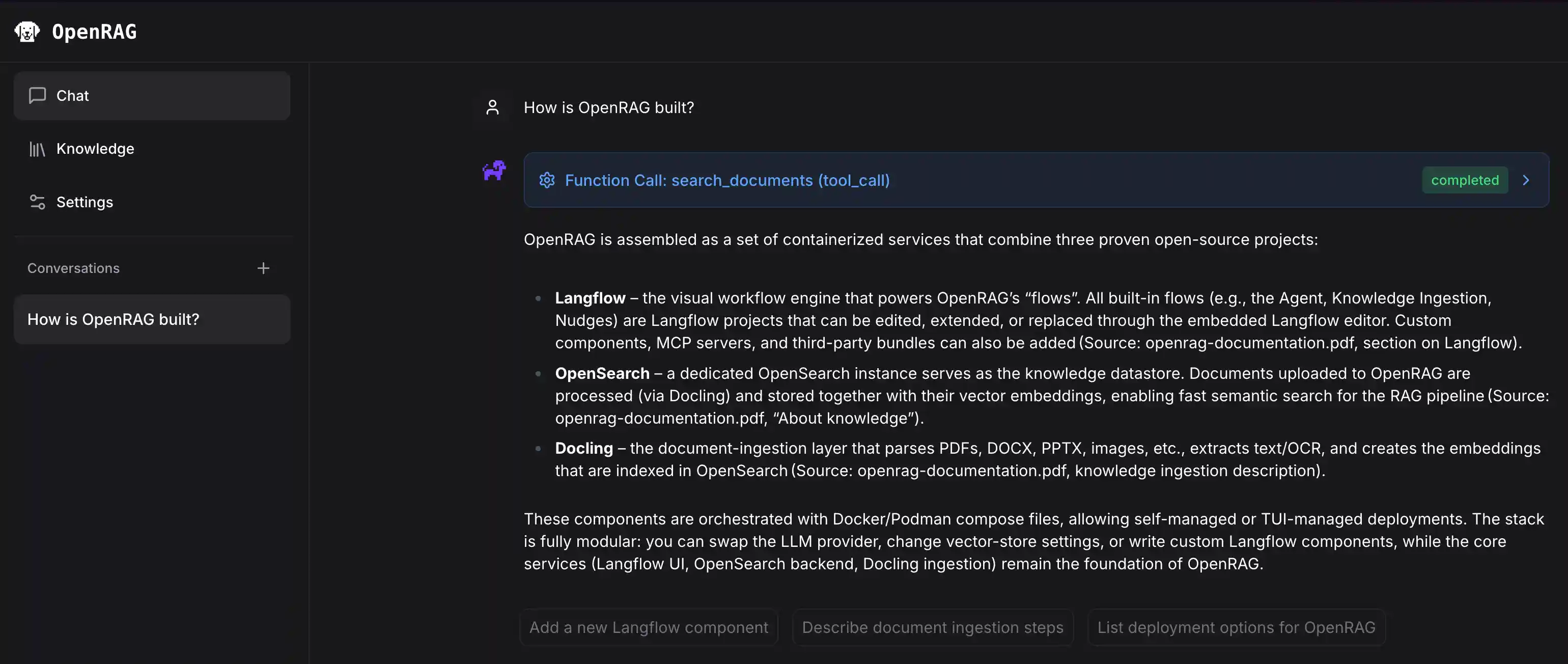

OpenRAG is a Retrieval-Augmented Generation (RAG) platform for intelligent document search and AI-powered conversations.

Users upload, process, and query documents through a chat interface backed by large language models and semantic search. OpenRAG uses Langflow for document ingestion, retrieval workflows, and intelligent nudges.

Built with Starlette and Next.js. Powered by OpenSearch, Langflow, and Docling.

✨ Highlight Features

- Pre-packaged & ready to run - All core tools are preconfigured and connected; just install and run

- Agentic RAG workflows - Advanced orchestration with re-ranking and multi-agent coordination

- Document ingestion - Handles messy, real-world data with intelligent parsing

- Drag-and-drop workflow builder - Visual interface powered by Langflow for rapid iteration

- Modular enterprise add-ons - Extend functionality when you need it

- Enterprise search at any scale - Powered by OpenSearch for production-grade performance

🔄 How OpenRAG Works

OpenRAG follows a streamlined workflow to transform your documents into intelligent, searchable knowledge:
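As a rough illustration of the retrieve-then-generate pattern behind a RAG workflow, the sketch below ranks text chunks against a question and assembles a prompt from the best match. It is a toy under stated assumptions, not OpenRAG's implementation: it uses bag-of-words cosine similarity instead of OpenSearch semantic search, and the sample chunks, function names, and prompt wording are all hypothetical.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> Counter:
    """Lowercase bag-of-words term counts."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two term-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, chunks: list[str], k: int = 2) -> list[str]:
    """Return the k chunks most similar to the query."""
    q = tokenize(query)
    ranked = sorted(chunks, key=lambda c: cosine(q, tokenize(c)), reverse=True)
    return ranked[:k]


def build_prompt(query: str, context: list[str]) -> str:
    """Combine the retrieved context and the question into one prompt."""
    joined = "\n".join(f"- {c}" for c in context)
    return f"Answer using only this context:\n{joined}\n\nQuestion: {query}"


# Hypothetical sample chunks standing in for ingested documents.
CHUNKS = [
    "The warranty covers replacement parts for two years.",
    "Returns are accepted within thirty days of purchase.",
    "Support is available by email on weekdays.",
]

if __name__ == "__main__":
    question = "How long does the warranty cover parts?"
    # In a real system this prompt would be sent to a language model.
    print(build_prompt(question, retrieve(question, CHUNKS, k=1)))
```

In a production setup, the ranking step is where a search backend would sit and the prompt would go to a language model; the sketch only shows how the two stages connect.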

🚀 Install OpenRAG

To get started with OpenRAG, see the installation guides in the OpenRAG documentation:

✨ Quick Start Workflow

📦 SDKs

Integrate OpenRAG into your applications with our official SDKs:

Python SDK

Quick Example:

```python
import asyncio

from openrag_sdk import OpenRAGClient


async def main():
    async with OpenRAGClient() as client:
        # Send a single chat message and print the reply text.
        response = await client.chat.create(message="What is RAG?")
        print(response.response)


if __name__ == "__main__":
    asyncio.run(main())
```
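To ask several questions in one session, a small helper can loop over the same call. The sketch below relies only on `client.chat.create(message=...)` and `response.response` from the example above. `ask_all`, `FakeChat`, and `FakeClient` are hypothetical names, not part of the SDK; the fake client exists so the snippet runs without a server.

```python
import asyncio
from types import SimpleNamespace


async def ask_all(client, questions):
    """Send each question in order and collect the reply texts."""
    answers = []
    for question in questions:
        response = await client.chat.create(message=question)
        answers.append(response.response)
    return answers


class FakeChat:
    """Stand-in for client.chat; echoes the message instead of calling a server."""

    async def create(self, message):
        return SimpleNamespace(response=f"(stub reply to: {message})")


class FakeClient:
    """Hypothetical test double, not part of the SDK."""

    def __init__(self):
        self.chat = FakeChat()


if __name__ == "__main__":
    replies = asyncio.run(ask_all(FakeClient(), ["What is RAG?", "What is OpenSearch?"]))
    for reply in replies:
        print(reply)
```

To use it against a running instance, pass the real client from inside the `async with OpenRAGClient() as client:` block in place of `FakeClient()`.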

📖 Full Python SDK Documentation

TypeScript/JavaScript SDK

Quick Example:

```typescript
import { OpenRAGClient } from "openrag-sdk";

const client = new OpenRAGClient();

// Send a single chat message and print the reply text.
const response = await client.chat.create({ message: "What is RAG?" });
console.log(response.response);
```

📖 Full TypeScript/JavaScript SDK Documentation

🔌 Model Context Protocol (MCP)

Connect AI assistants like Cursor and Claude Desktop to your OpenRAG knowledge base:

Quick Example (Cursor/Claude Desktop config):

```json
{
  "mcpServers": {
    "openrag": {
      "command": "uvx",
      "args": ["openrag-mcp"],
      "env": {
        "OPENRAG_URL": "http://localhost:3000",
        "OPENRAG_API_KEY": "your_api_key_here"
      }
    }
  }
}
```

The MCP server provides tools for RAG-enhanced chat, semantic search, and settings management.
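If you would rather generate that config than edit it by hand, a short script can build the same structure and print it as JSON. This is a minimal sketch: `build_mcp_config` is a hypothetical helper, and reading the URL and key from environment variables of the same names is a convenience assumed here, not documented behavior. Where the output should be saved depends on your assistant, so the script only prints it.

```python
import json
import os


def build_mcp_config(url: str, api_key: str) -> dict:
    """Return the OpenRAG MCP server entry as a Python dict."""
    return {
        "mcpServers": {
            "openrag": {
                "command": "uvx",
                "args": ["openrag-mcp"],
                "env": {"OPENRAG_URL": url, "OPENRAG_API_KEY": api_key},
            }
        }
    }


if __name__ == "__main__":
    # Fall back to the placeholder values from the example config.
    config = build_mcp_config(
        url=os.environ.get("OPENRAG_URL", "http://localhost:3000"),
        api_key=os.environ.get("OPENRAG_API_KEY", "your_api_key_here"),
    )
    print(json.dumps(config, indent=2))
```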

🛠️ Development

For developers who want to contribute to OpenRAG or set up a development environment, see CONTRIBUTING.md.

🆘 Troubleshooting

For assistance with OpenRAG, see Troubleshoot OpenRAG and visit the Discussions page.

To report a bug or submit a feature request, visit the Issues page.