INTERN-2.5: Multimodal Multitask General Large Model

This repository is the official implementation of InternImage: Exploring Large-Scale Vision Foundation Models with Deformable Convolutions.

Paper | Blog in Chinese | Documents

Introduction

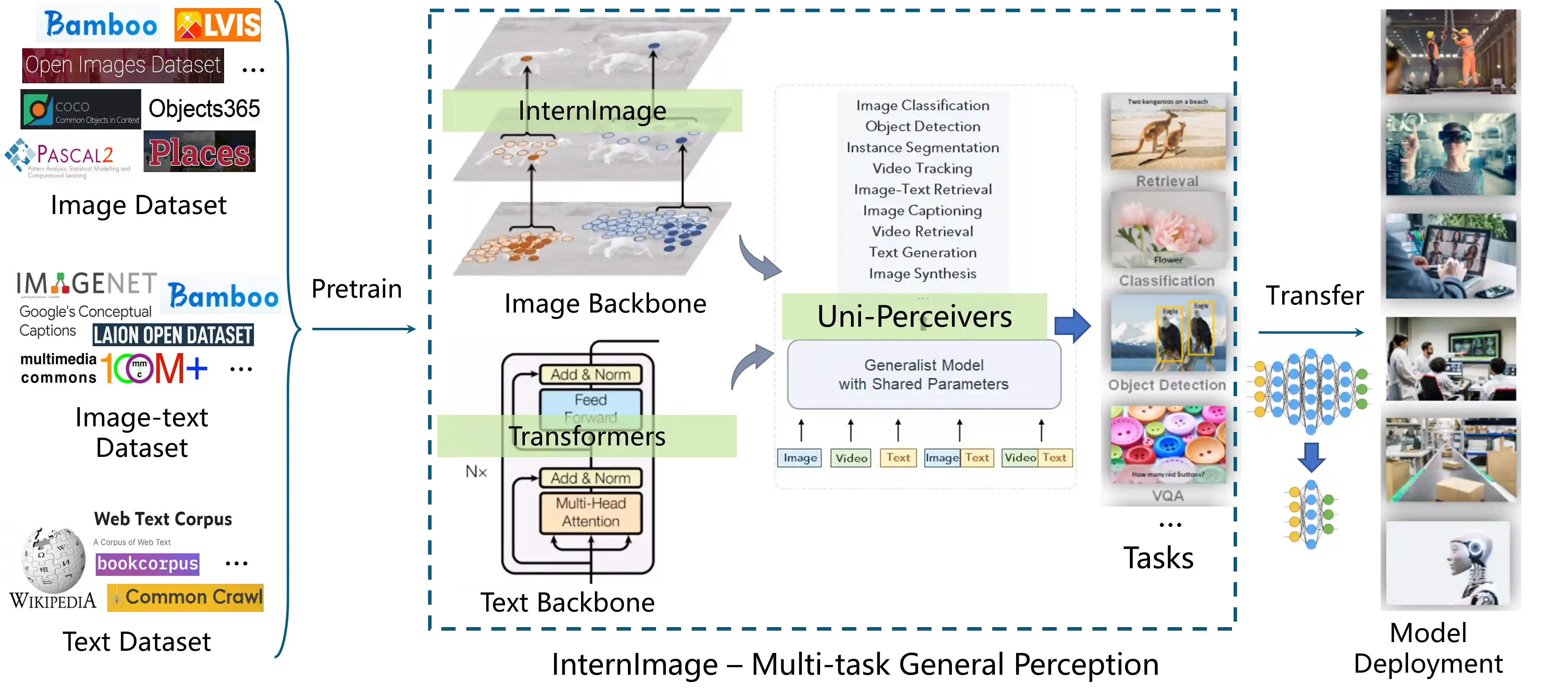

SenseTime and Shanghai AI Laboratory jointly released the multimodal multitask general model "INTERN-2.5" on March 14, 2023. "INTERN-2.5" achieved multiple breakthroughs in multimodal multitask processing, and its excellent cross-modal task processing ability in text and image can provide efficient and accurate perception and understanding capabilities for general scenarios such as autonomous driving.

Overview

Highlights

- 👍 The strongest visual universal backbone model with up to 3 billion parameters

- 🏆 Achieved 90.1% Top-1 accuracy on ImageNet, the most accurate among open-source models

- 🏆 Achieved 65.5 mAP on the COCO benchmark dataset for object detection, the only model that exceeded 65.0 mAP

News

- Mar 14, 2023: 🚀 "INTERN-2.5" is released!

- Feb 28, 2023: 🚀 InternImage is accepted to CVPR 2023!

- Nov 18, 2022: 🚀 InternImage-XL merged into BEVFormer v2 achieves state-of-the-art performance of 63.4 NDS on nuScenes Camera Only.

- Nov 10, 2022: 🚀 InternImage-H achieves a new record 65.4 mAP on COCO detection test-dev and 62.9 mIoU on ADE20K, outperforming previous models by a large margin.

Applications

1. Performance on Image Modality Tasks

- On the ImageNet benchmark dataset, "INTERN-2.5" achieved a Top-1 accuracy of 90.1% for image classification using only publicly available data. Apart from two undisclosed models from Google and Microsoft that were trained with additional datasets, it is the only model to achieve a Top-1 accuracy of over 90.0%. It is also the most accurate open-source model on ImageNet and the largest model in scale in the world.

- On the COCO object detection benchmark dataset, "INTERN-2.5" achieved a mAP of 65.5, making it the only model in the world to surpass 65 mAP.

- "INTERN-2.5" achieved the world's best performance on 16 other important visual benchmark datasets, covering classification, detection, and segmentation tasks.

Classification Task

Tasks covered: image classification, scene classification, and long-tail classification.

| ImageNet | Places365 | Places 205 | iNaturalist 2018 |
|---|---|---|---|
| 90.1 | 61.2 | 71.7 | 92.3 |

Detection Task

Tasks covered: conventional object detection, long-tail object detection, autonomous driving object detection, and dense object detection.

| COCO | VOC 2007 | VOC 2012 | OpenImage | LVIS minival | LVIS val | BDD100K | nuScenes | CrowdHuman |
|---|---|---|---|---|---|---|---|---|
| 65.5 | 94.0 | 97.2 | 74.1 | 65.8 | 63.2 | 38.8 | 64.8 | 97.2 |

Segmentation Task

Tasks covered: semantic segmentation, street segmentation, and RGBD segmentation.

| ADE20K | COCO Stuff-10K | Pascal Context | CityScapes | NYU Depth V2 |
|---|---|---|---|---|
| 62.9 | 59.6 | 70.3 | 86.1 | 69.7 |

2. Cross-Modal Performance for Image and Text Tasks

- Image-Text Retrieval

"INTERN-2.5" can quickly locate and retrieve the most semantically relevant images based on textual content requirements. This capability can be applied to both videos and image collections and can be further combined with object detection boxes to enable a variety of applications, helping users quickly and easily find the required image resources. For example, it can return the relevant images specified by the text in the album.

- Image-To-Text

"INTERN-2.5" has a strong understanding capability in various aspects of visual-to-text tasks such as image captioning, visual question answering, visual reasoning, and optical character recognition. For example, in the context of autonomous driving, it can enhance the scene perception and understanding capabilities, assist the vehicle in judging traffic signal status, road signs, and other information, and provide effective perception information support for vehicle decision-making and planning.

Multimodal Tasks

Tasks covered: image captioning, fine-tuned image-text retrieval, and zero-shot image-text retrieval.

| COCO Caption (captioning) | COCO Caption (fine-tuned retrieval) | Flickr30k (fine-tuned retrieval) | Flickr30k (zero-shot retrieval) |
|---|---|---|---|
| 148.2 | 76.4 | 94.8 | 89.1 |
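The image-text retrieval described above is commonly implemented by embedding the text and the images into a shared space and ranking images by similarity to the query. The following is a minimal sketch of that ranking step only, under stated assumptions: the 3-dimensional embeddings, the file names, and the `retrieve` helper are hypothetical and are not part of the "INTERN-2.5" code base.

```python
import math

def cosine_similarity(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def retrieve(text_embedding, image_embeddings, top_k=2):
    """Return the names of the top_k images most similar to the text query."""
    scored = [
        (cosine_similarity(text_embedding, emb), name)
        for name, emb in image_embeddings.items()
    ]
    scored.sort(reverse=True)  # highest similarity first
    return [name for _, name in scored[:top_k]]

# Hypothetical embeddings; a real system would obtain them from the model.
album = {
    "beach.jpg": [0.9, 0.1, 0.0],
    "city.jpg": [0.1, 0.9, 0.2],
    "forest.jpg": [0.0, 0.2, 0.9],
}
query = [0.8, 0.2, 0.1]  # hypothetical embedding of the text "a sunny beach"

results = retrieve(query, album, top_k=2)
print(results)
```

In this toy album the query is closest to `beach.jpg`, so it is ranked first.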

Core Technologies

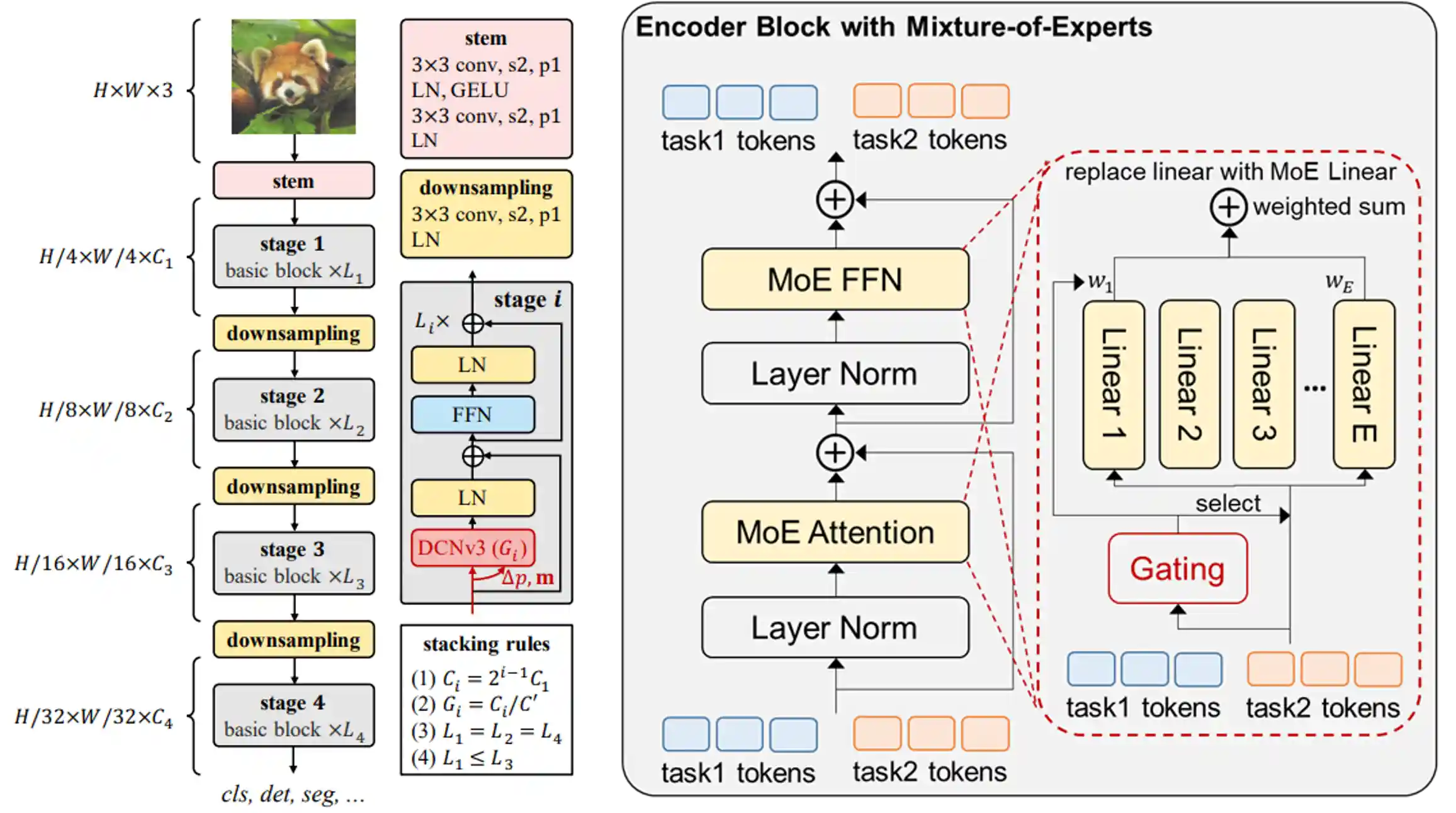

The outstanding performance of "INTERN-2.5" in cross-modal learning stems from several innovations in the core technology of the multimodal multitask general model: InternImage as the backbone network for visual perception, an LLM as the large-scale pre-trained network for text processing, and Uni-Perceiver as the compatible decoder for multitask learning.

InternImage, the visual backbone network of "INTERN-2.5", has a parameter size of up to 3 billion and can adaptively adjust the position and combination of convolutions based on dynamic sparse convolution operators, providing powerful representations for multi-functional visual perception. Uni-Perceiver, a versatile task decoding model, encodes data from different modalities into a unified representation space and unifies different tasks into the same task paradigm, enabling simultaneous processing of various modalities and tasks with the same task architecture and shared model parameters.
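To make the idea of adjusting the "position and combination" of convolution samples concrete, here is a deliberately simplified 1-D sketch of deformable sampling. It is not the DCNv3 operator used by InternImage: the function names are hypothetical, and the offsets and modulation scalars are fixed by hand, whereas in a real model they would be predicted from the input.

```python
import math

def linear_sample(signal, pos):
    """Linearly interpolate a 1-D signal at a fractional position (zero outside)."""
    lo = math.floor(pos)
    frac = pos - lo

    def at(i):
        return signal[i] if 0 <= i < len(signal) else 0.0

    return (1.0 - frac) * at(lo) + frac * at(lo + 1)

def deformable_conv1d_at(signal, center, weights, offsets, modulation):
    """Compute one output value of a simplified 1-D deformable convolution.

    Each kernel tap samples at its regular grid position plus an offset
    ("position") and is scaled by a modulation scalar ("combination").
    """
    half = len(weights) // 2
    out = 0.0
    for i, w in enumerate(weights):
        pos = center + (i - half) + offsets[i]
        out += w * modulation[i] * linear_sample(signal, pos)
    return out

signal = [0.0, 1.0, 2.0, 3.0, 4.0]
weights = [1.0, 1.0, 1.0]

# Zero offsets and unit modulation reduce to a regular convolution.
regular = deformable_conv1d_at(signal, 2, weights, [0.0, 0.0, 0.0], [1.0, 1.0, 1.0])

# Hand-picked offsets and modulation move and reweight the sampling points.
deformed = deformable_conv1d_at(signal, 2, weights, [0.5, 0.0, 0.5], [1.0, 1.0, 0.5])

print(regular, deformed)
```

With zero offsets the taps read positions 1, 2, and 3; with the hand-picked offsets they read positions 1.5, 2, and 3.5, and the last tap contributes at half weight.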

Project Release

- Model for other downstream tasks

- InternImage-H(1B)/G(3B)

- TensorRT inference

- Classification code of the InternImage series

- InternImage-T/S/B/L/XL ImageNet-1K pretrained model

- InternImage-L/XL ImageNet-22K pretrained model

- InternImage-T/S/B/L/XL detection and instance segmentation model

- InternImage-T/S/B/L/XL semantic segmentation model

Related Projects

- Object Detection and Instance Segmentation: COCO

- Semantic Segmentation: ADE20K, Cityscapes

- Image-Text Retrieval, Image Captioning, and Visual Question Answering: Uni-Perceiver

- 3D Perception: BEVFormer

Open-source Visual Pretrained Models

| name | pretrain | pre-training resolution | #param | download |
|---|---|---|---|---|
| InternImage-L | ImageNet-22K | 384x384 | 223M | ckpt |
| InternImage-XL | ImageNet-22K | 384x384 | 335M | ckpt |
| InternImage-H | Joint 427M | 384x384 | 1.08B | ckpt |
| InternImage-G | - | 384x384 | 3B | ckpt |

ImageNet-1K Image Classification

| name | pretrain | resolution | acc@1 | #param | FLOPs | download |
|---|---|---|---|---|---|---|
| InternImage-T | ImageNet-1K | 224x224 | 83.5 | 30M | 5G | ckpt \| cfg |
| InternImage-S | ImageNet-1K | 224x224 | 84.2 | 50M | 8G | ckpt \| cfg |
| InternImage-B | ImageNet-1K | 224x224 | 84.9 | 97M | 16G | ckpt \| cfg |
| InternImage-L | ImageNet-22K | 384x384 | 87.7 | 223M | 108G | ckpt \| cfg |
| InternImage-XL | ImageNet-22K | 384x384 | 88.0 | 335M | 163G | ckpt \| cfg |
| InternImage-H | Joint 427M | 640x640 | 89.6 | 1.08B | 1478G | ckpt \| cfg |
| InternImage-G | - | 512x512 | 90.1 | 3B | 2700G | ckpt \| cfg |

COCO Object Detection and Instance Segmentation

| backbone | method | schd | box mAP | mask mAP | #param | FLOPs | download |
|---|---|---|---|---|---|---|---|
| InternImage-T | Mask R-CNN | 1x | 47.2 | 42.5 | 49M | 270G | ckpt \| cfg |
| InternImage-T | Mask R-CNN | 3x | 49.1 | 43.7 | 49M | 270G | ckpt \| cfg |
| InternImage-S | Mask R-CNN | 1x | 47.8 | 43.3 | 69M | 340G | ckpt \| cfg |
| InternImage-S | Mask R-CNN | 3x | 49.7 | 44.5 | 69M | 340G | ckpt \| cfg |
| InternImage-B | Mask R-CNN | 1x | 48.8 | 44.0 | 115M | 501G | ckpt \| cfg |
| InternImage-B | Mask R-CNN | 3x | 50.3 | 44.8 | 115M | 501G | ckpt \| cfg |
| InternImage-L | Cascade | 1x | 54.9 | 47.7 | 277M | 1399G | ckpt \| cfg |
| InternImage-L | Cascade | 3x | 56.1 | 48.5 | 277M | 1399G | ckpt \| cfg |
| InternImage-XL | Cascade | 1x | 55.3 | 48.1 | 387M | 1782G | ckpt \| cfg |
| InternImage-XL | Cascade | 3x | 56.2 | 48.8 | 387M | 1782G | ckpt \| cfg |

| backbone | method | box mAP (val/test) | #param | FLOPs | download |
|---|---|---|---|---|---|
| InternImage-H | DINO (TTA) | 65.0 / 65.4 | 2.18B | TODO | TODO |
| InternImage-G | DINO (TTA) | 65.3 / 65.5 | 3B | TODO | TODO |

ADE20K Semantic Segmentation

| backbone | method | resolution | mIoU (ss/ms) | #param | FLOPs | download |
|---|---|---|---|---|---|---|
| InternImage-T | UperNet | 512x512 | 47.9 / 48.1 | 59M | 944G | ckpt \| cfg |
| InternImage-S | UperNet | 512x512 | 50.1 / 50.9 | 80M | 1017G | ckpt \| cfg |
| InternImage-B | UperNet | 512x512 | 50.8 / 51.3 | 128M | 1185G | ckpt \| cfg |
| InternImage-L | UperNet | 640x640 | 53.9 / 54.1 | 256M | 2526G | ckpt \| cfg |
| InternImage-XL | UperNet | 640x640 | 55.0 / 55.3 | 368M | 3142G | ckpt \| cfg |
| InternImage-H | UperNet | 896x896 | 59.9 / 60.3 | 1.12B | 3566G | ckpt \| cfg |
| InternImage-H | Mask2Former | 896x896 | 62.5 / 62.9 | 1.31B | 4635G | TODO |
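For readers unfamiliar with the mIoU metric reported in the table above, the sketch below shows the usual way it is computed from a per-class confusion matrix. It is an illustration only: the `mean_iou` helper and the 2-class confusion matrix are hypothetical, and the numbers in the table come from the project's own evaluation pipeline, not from this code.

```python
def mean_iou(confusion):
    """Mean intersection-over-union from a square confusion matrix.

    confusion[i][j] is the number of pixels whose ground-truth class is i
    and whose predicted class is j.
    """
    n = len(confusion)
    ious = []
    for c in range(n):
        tp = confusion[c][c]
        fn = sum(confusion[c]) - tp                        # class c pixels predicted as another class
        fp = sum(confusion[r][c] for r in range(n)) - tp   # other-class pixels predicted as c
        denom = tp + fp + fn
        if denom > 0:  # skip classes absent from both ground truth and prediction
            ious.append(tp / denom)
    return sum(ious) / len(ious)

# Hypothetical 2-class confusion matrix (pixel counts).
confusion = [
    [8, 2],
    [1, 9],
]

score = mean_iou(confusion)
print(round(100 * score, 1))
```

Here the per-class IoU values are 8/11 and 9/12, and mIoU is their mean.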

Main Results of FPS

- Export classification model from PyTorch to TensorRT

- Export detection model from PyTorch to TensorRT

- Export segmentation model from PyTorch to TensorRT

| name | resolution | #param | FLOPs | batch 1 FPS (TensorRT) |
|---|---|---|---|---|
| InternImage-T | 224x224 | 30M | 5G | 156 |
| InternImage-S | 224x224 | 50M | 8G | 129 |
| InternImage-B | 224x224 | 97M | 16G | 116 |
| InternImage-L | 384x384 | 223M | 108G | 56 |
| InternImage-XL | 384x384 | 335M | 163G | 47 |
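Because the FPS figures above are measured at batch size 1, each one converts directly to an average per-image latency of 1000 / FPS milliseconds. The short sketch below does that arithmetic for the table values; the variable and function names are illustrative only.

```python
# Batch-1 TensorRT throughput copied from the table above (frames per second).
fps_batch1 = {
    "InternImage-T": 156,
    "InternImage-S": 129,
    "InternImage-B": 116,
    "InternImage-L": 56,
    "InternImage-XL": 47,
}

def latency_ms(fps):
    """Average per-image latency in milliseconds at batch size 1."""
    return 1000.0 / fps

latencies = {name: round(latency_ms(fps), 2) for name, fps in fps_batch1.items()}

for name, ms in latencies.items():
    print(f"{name}: {ms} ms per image")
```

For example, InternImage-T at 156 FPS corresponds to roughly 6.4 ms per image, and InternImage-XL at 47 FPS to roughly 21.3 ms.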

Before using mmdeploy to convert our PyTorch models to TensorRT, please make sure the DCNv3 custom operator has been built correctly. You can build it with the following commands:

    export MMDEPLOY_DIR=/the/root/path/of/MMDeploy

    # prepare our custom ops, you can find it at InternImage/tensorrt/modulated_deform_conv_v3
    cp -r modulated_deform_conv_v3 ${MMDEPLOY_DIR}/csrc/mmdeploy/backend_ops/tensorrt

    # build custom ops
    cd ${MMDEPLOY_DIR}
    mkdir -p build && cd build
    cmake -DCMAKE_CXX_COMPILER=g++-7 -DMMDEPLOY_TARGET_BACKENDS=trt -DTENSORRT_DIR=${TENSORRT_DIR} -DCUDNN_DIR=${CUDNN_DIR} ..
    make -j$(nproc) && make install

    # install the mmdeploy after building custom ops
    cd ${MMDEPLOY_DIR}
    pip install -e .

For more details on building custom ops, please refer to this document.
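The build commands above rely on the environment variables `MMDEPLOY_DIR`, `TENSORRT_DIR`, and `CUDNN_DIR`. As an optional convenience, a small preflight check such as the sketch below can report which of them are unset before `cmake` is run. The helper name `missing_build_vars` is hypothetical and is not part of MMDeploy or this repository.

```python
import os

# Variable names taken from the build commands above.
REQUIRED_VARS = ("MMDEPLOY_DIR", "TENSORRT_DIR", "CUDNN_DIR")

def missing_build_vars(env=None):
    """Return the required variables that are unset or empty in env."""
    env = os.environ if env is None else env
    return [name for name in REQUIRED_VARS if not env.get(name)]

# Example with an explicit mapping instead of the real environment.
example_env = {
    "MMDEPLOY_DIR": "/the/root/path/of/MMDeploy",
    "TENSORRT_DIR": "",  # set but empty, so it counts as missing
}

missing = missing_build_vars(example_env)
print("missing variables:", missing)
```

Calling `missing_build_vars()` with no argument checks the current process environment instead of the example mapping.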

Citation

If this work is helpful for your research, please consider citing the following BibTeX entry.

@article{wang2022internimage,

title={InternImage: Exploring Large-Scale Vision Foundation Models with Deformable Convolutions},

author={Wang, Wenhai and Dai, Jifeng and Chen, Zhe and Huang, Zhenhang and Li, Zhiqi and Zhu, Xizhou and Hu, Xiaowei and Lu, Tong and Lu, Lewei and Li, Hongsheng and others},

journal={arXiv preprint arXiv:2211.05778},

year={2022}

}

@inproceedings{zhu2022uni,

title={Uni-perceiver: Pre-training unified architecture for generic perception for zero-shot and few-shot tasks},

author={Zhu, Xizhou and Zhu, Jinguo and Li, Hao and Wu, Xiaoshi and Li, Hongsheng and Wang, Xiaohua and Dai, Jifeng},

booktitle={CVPR},

pages={16804--16815},

year={2022}

}

@article{zhu2022unimoe,

title={Uni-perceiver-moe: Learning sparse generalist models with conditional moes},

author={Zhu, Jinguo and Zhu, Xizhou and Wang, Wenhai and Wang, Xiaohua and Li, Hongsheng and Wang, Xiaogang and Dai, Jifeng},

journal={arXiv preprint arXiv:2206.04674},

year={2022}

}

@article{li2022uni,

title={Uni-Perceiver v2: A Generalist Model for Large-Scale Vision and Vision-Language Tasks},

author={Li, Hao and Zhu, Jinguo and Jiang, Xiaohu and Zhu, Xizhou and Li, Hongsheng and Yuan, Chun and Wang, Xiaohua and Qiao, Yu and Wang, Xiaogang and Wang, Wenhai and others},

journal={arXiv preprint arXiv:2211.09808},

year={2022}

}

@article{yang2022bevformer,

title={BEVFormer v2: Adapting Modern Image Backbones to Bird's-Eye-View Recognition via Perspective Supervision},

author={Yang, Chenyu and Chen, Yuntao and Tian, Hao and Tao, Chenxin and Zhu, Xizhou and Zhang, Zhaoxiang and Huang, Gao and Li, Hongyang and Qiao, Yu and Lu, Lewei and others},

journal={arXiv preprint arXiv:2211.10439},

year={2022}

}

@article{su2022towards,

title={Towards All-in-one Pre-training via Maximizing Multi-modal Mutual Information},

author={Su, Weijie and Zhu, Xizhou and Tao, Chenxin and Lu, Lewei and Li, Bin and Huang, Gao and Qiao, Yu and Wang, Xiaogang and Zhou, Jie and Dai, Jifeng},

journal={arXiv preprint arXiv:2211.09807},

year={2022}

}

@inproceedings{li2022bevformer,

title={Bevformer: Learning bird’s-eye-view representation from multi-camera images via spatiotemporal transformers},

author={Li, Zhiqi and Wang, Wenhai and Li, Hongyang and Xie, Enze and Sima, Chonghao and Lu, Tong and Qiao, Yu and Dai, Jifeng},

booktitle={ECCV},

pages={1--18},

year={2022},

}