🎨 DDColor

Official PyTorch implementation of ICCV 2023 Paper "DDColor: Towards Photo-Realistic Image Colorization via Dual Decoders".

Xiaoyang Kang, Tao Yang, Wenqi Ouyang, Peiran Ren, Lingzhi Li, Xuansong Xie

DAMO Academy, Alibaba Group

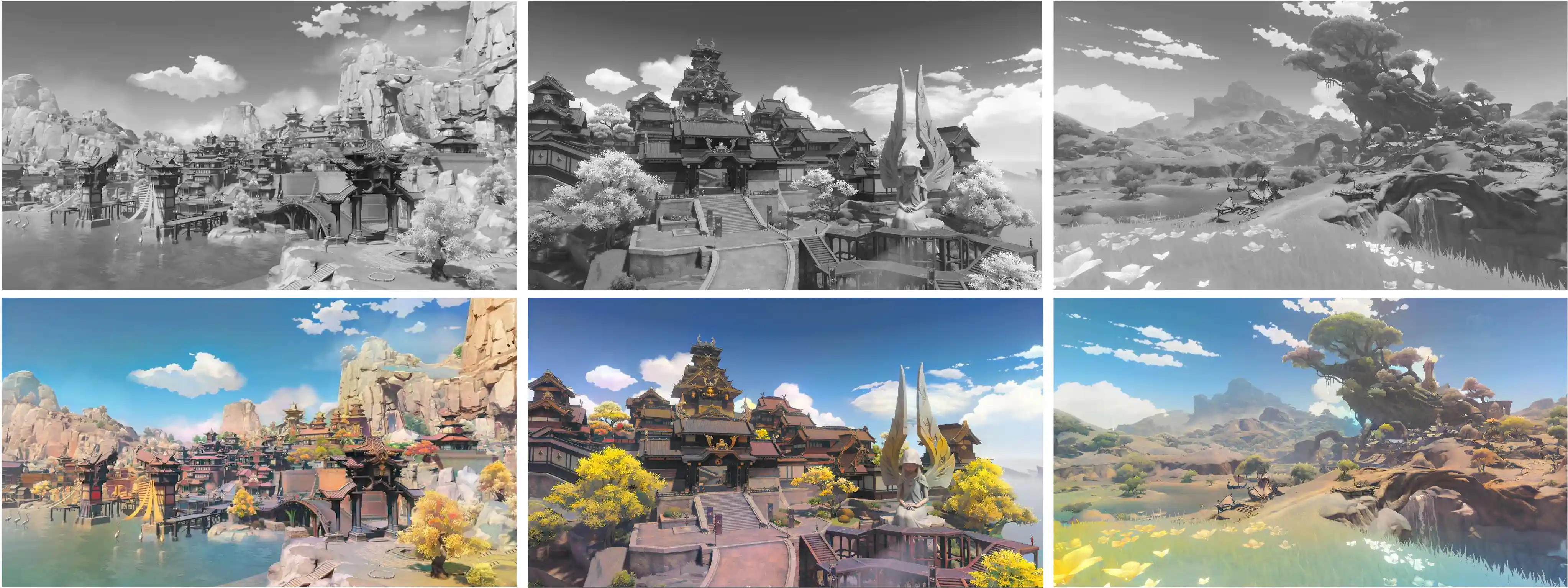

🪄 DDColor can provide vivid and natural colorization for historical black-and-white photos.

🎲 It can even colorize/recolor landscapes from anime games, transforming your animated scenery into a realistic real-life style! (Image source: Genshin Impact)

News

- [2024-01-28] Support inference via 🤗 Hugging Face! Thanks @Niels for the suggestion and example code and @Skwara for fixing a bug.

- [2024-01-18] Add Replicate demo and API! Thanks @Chenxi.

- [2023-12-13] Release the DDColor-tiny pre-trained model!

- [2023-09-07] Add the Model Zoo and release three pretrained models!

- [2023-05-15] Code release for training and inference!

- [2023-05-05] The online demo is available!

Online Demo

Try our online demos at ModelScope and Replicate.

Methods

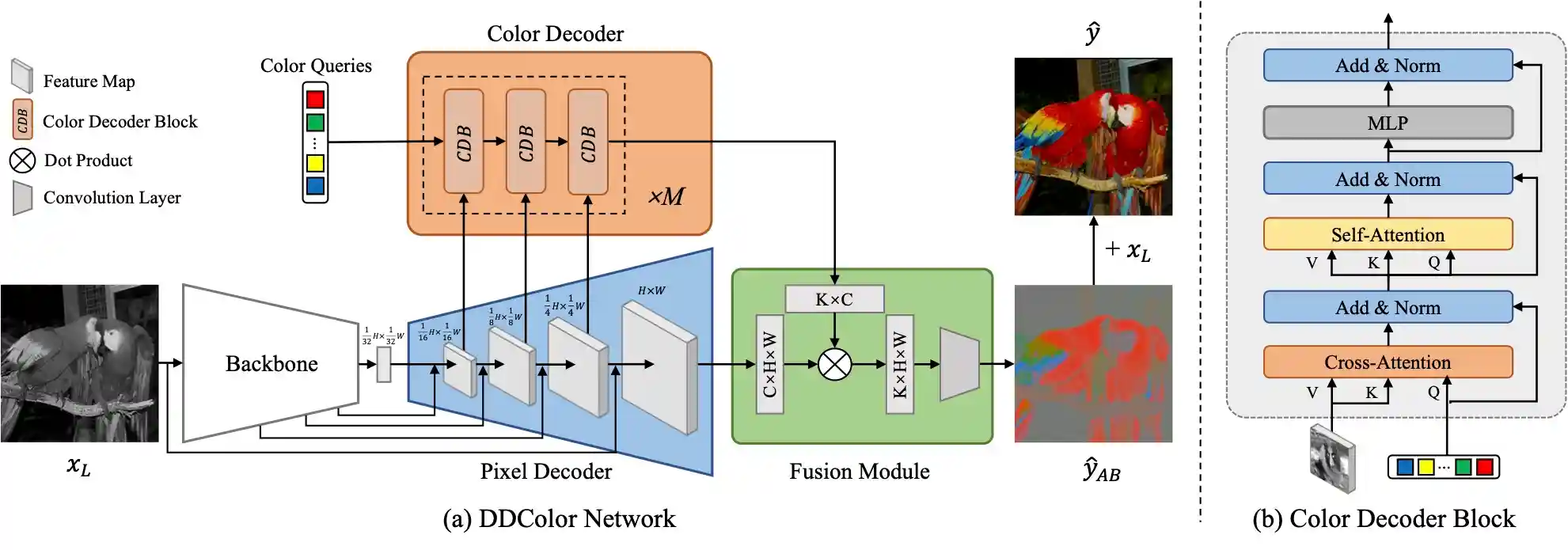

In short: DDColor uses multi-scale visual features to optimize learnable color tokens (i.e. color queries) and achieves state-of-the-art performance on automatic image colorization.
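To make the idea of color queries more concrete, here is a toy, dependency-free sketch of a cross-attention step in which a few query vectors are updated from image features, repeated over feature maps of different sizes. It is an illustration only, not the DDColor implementation: the function names, the dimensions, the random inputs, and the use of plain scaled dot-product attention with a residual update are all assumptions made for this example.

```python
import math
import random


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention_weights(query, features):
    """Scaled dot-product attention weights of one query over all feature vectors."""
    scale = math.sqrt(len(query))
    scores = [sum(q * f for q, f in zip(query, feat)) / scale for feat in features]
    return softmax(scores)


def refine_queries(queries, features):
    """One cross-attention step: each query adds a weighted mix of the features to itself."""
    refined = []
    for query in queries:
        weights = attention_weights(query, features)
        mixed = [
            sum(w * feat[d] for w, feat in zip(weights, features))
            for d in range(len(query))
        ]
        refined.append([q + m for q, m in zip(query, mixed)])
    return refined


def random_vectors(count, dim, rng):
    """Helper that stands in for learned parameters or extracted features."""
    return [[rng.uniform(-1.0, 1.0) for _ in range(dim)] for _ in range(count)]


rng = random.Random(0)
dim = 8
color_queries = random_vectors(4, dim, rng)  # hypothetical: 4 color tokens
multi_scale_features = [                     # hypothetical: flattened features at 3 scales
    random_vectors(16, dim, rng),
    random_vectors(64, dim, rng),
    random_vectors(256, dim, rng),
]

# Refine the same queries against each scale in turn.
for features in multi_scale_features:
    color_queries = refine_queries(color_queries, features)

print(len(color_queries), len(color_queries[0]))
```

The number of queries and their dimension stay fixed while the feature maps vary in size, which is what lets the same set of tokens gather information from every scale.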

Installation

Requirements

- Python >= 3.7

- PyTorch >= 1.7
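Before installing anything else, it can help to confirm that the interpreter and any existing PyTorch build meet these minimums. The following is a minimal sketch that uses only the standard library; the helper names (`parse_version`, `meets_minimum`, `check_environment`) are made up for this example and are not part of the repository.

```python
import importlib.util
import re
import sys


def parse_version(text):
    """Extract the leading numeric components, e.g. '2.2.0+cu118' -> (2, 2, 0)."""
    match = re.match(r"(\d+(?:\.\d+)*)", text)
    if match is None:
        raise ValueError(f"unrecognized version string: {text!r}")
    return tuple(int(part) for part in match.group(1).split("."))


def meets_minimum(version_text, minimum):
    """True if the parsed version is at least `minimum`, a tuple such as (1, 7)."""
    return parse_version(version_text) >= minimum


def check_environment():
    """Return a list of problems; an empty list means both checks passed."""
    problems = []
    if sys.version_info < (3, 7):
        problems.append("Python >= 3.7 is required")
    if importlib.util.find_spec("torch") is None:
        problems.append("PyTorch is not installed")
    else:
        import torch  # imported lazily so this script also runs without PyTorch

        if not meets_minimum(torch.__version__, (1, 7)):
            problems.append(f"PyTorch >= 1.7 is required, found {torch.__version__}")
    return problems


if __name__ == "__main__":
    for problem in check_environment():
        print("WARNING:", problem)
```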

Installation with conda (recommended)

conda create -n ddcolor python=3.9

conda activate ddcolor

pip install torch==2.2.0 torchvision==0.17.0 --index-url https://download.pytorch.org/whl/cu118

pip install -r requirements.txt

# For training, install the following additional dependencies and basicsr

pip install -r requirements.train.txt

python3 setup.py develop

Quick Start

Inference Using Local Script (No basicsr Required)

- Download the pretrained model:

from modelscope.hub.snapshot_download import snapshot_download

model_dir = snapshot_download('damo/cv_ddcolor_image-colorization', cache_dir='./modelscope')
print('model assets saved to %s' % model_dir)

- Run inference with

python scripts/infer.py --model_path ./modelscope/damo/cv_ddcolor_image-colorization/pytorch_model.pt --input ./assets/test_images

or

Inference Using Hugging Face

Load the model via Hugging Face Hub:

from huggingface_hub import PyTorchModelHubMixin
from ddcolor import DDColor

class DDColorHF(DDColor, PyTorchModelHubMixin):
    def __init__(self, config=None, **kwargs):
        if isinstance(config, dict):
            kwargs = {**config, **kwargs}
        super().__init__(**kwargs)

ddcolor_paper_tiny = DDColorHF.from_pretrained("piddnad/ddcolor_paper_tiny")
ddcolor_paper = DDColorHF.from_pretrained("piddnad/ddcolor_paper")
ddcolor_modelscope = DDColorHF.from_pretrained("piddnad/ddcolor_modelscope")
ddcolor_artistic = DDColorHF.from_pretrained("piddnad/ddcolor_artistic")
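The four repository ids used above share the pattern `piddnad/<model_name>`. As a small convenience, a helper can validate a model name and build the id before anything is downloaded. This is a sketch only: `KNOWN_MODELS` and `hf_repo_id` are hypothetical names introduced for this example, and the pattern is inferred from the four ids shown above rather than documented elsewhere.

```python
# Model names taken from the loading snippet above; the "piddnad/<name>"
# pattern is an inference from those four repository ids.
KNOWN_MODELS = (
    "ddcolor_paper",
    "ddcolor_modelscope",
    "ddcolor_artistic",
    "ddcolor_paper_tiny",
)


def hf_repo_id(model_name):
    """Map a model name to its Hugging Face repo id, rejecting unknown names early."""
    if model_name not in KNOWN_MODELS:
        choices = ", ".join(KNOWN_MODELS)
        raise ValueError(f"unknown model {model_name!r}; expected one of: {choices}")
    return f"piddnad/{model_name}"


print(hf_repo_id("ddcolor_paper_tiny"))
```

The returned string can be passed to `DDColorHF.from_pretrained(...)` in place of a hard-coded id.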

Or directly perform model inference by running:

python scripts/infer.py --model_name ddcolor_modelscope --input ./assets/test_images

# model_name: [ddcolor_paper | ddcolor_modelscope | ddcolor_artistic | ddcolor_paper_tiny]

Inference Using ModelScope

- Install modelscope:

pip install modelscope

- Run inference:

import cv2
from modelscope.outputs import OutputKeys
from modelscope.pipelines import pipeline
from modelscope.utils.constant import Tasks

img_colorization = pipeline(Tasks.image_colorization, model='damo/cv_ddcolor_image-colorization')
result = img_colorization('https://modelscope.oss-cn-beijing.aliyuncs.com/test/images/audrey_hepburn.jpg')
cv2.imwrite('result.png', result[OutputKeys.OUTPUT_IMG])

This code automatically downloads the ddcolor_modelscope model (see the Model Zoo) and performs inference. The model file pytorch_model.pt can be found under the local path ~/.cache/modelscope/hub/damo.
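To reuse the downloaded weights with the local inference script, it may be handy to locate the file programmatically. The sketch below searches the cache directory mentioned above for `pytorch_model.pt`; the function name `find_modelscope_weights` is hypothetical, and the exact subdirectory layout below `damo` is not assumed, which is why the search is recursive.

```python
from pathlib import Path


def find_modelscope_weights(hub_dir=None, filename="pytorch_model.pt"):
    """Return all files named `filename` below the ModelScope cache directory, sorted by path."""
    if hub_dir is None:
        # Default location taken from the text above.
        hub_dir = Path.home() / ".cache" / "modelscope" / "hub" / "damo"
    hub_dir = Path(hub_dir)
    if not hub_dir.is_dir():
        return []  # nothing downloaded yet, or a different cache location is in use
    return sorted(hub_dir.rglob(filename))


for path in find_modelscope_weights():
    print(path)
```

A path printed here can be passed to `scripts/infer.py` through `--model_path`.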

Gradio Demo

Install gradio and the other required libraries:

pip install gradio gradio_imageslider

Then, you can run the demo with the following command:

python demo/gradio_app.py

Model Zoo

We provide several versions of pretrained models; please check out the Model Zoo.

Train

- Dataset Preparation: Download the ImageNet dataset or create a custom dataset. Use this script to obtain the dataset list file:

python scripts/get_meta_file.py

- Download the pretrained weights for ConvNeXt and InceptionV3 and place them in the pretrain folder.

- Specify 'meta_info_file' and other options in options/train/train_ddcolor.yml.

- Start training:

ONNX export

Support for ONNX model exports is available.

- Install dependencies:

pip install onnx==1.16.1 onnxruntime==1.19.2 onnxsim==0.4.36

- Usage example:

python scripts/export_onnx.py --model_path pretrain/ddcolor_paper_tiny.pth --export_path weights/ddcolor-tiny.onnx

A demo of ONNX export using the ddcolor_paper_tiny model is available here.
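After exporting, a quick sanity check can confirm that the file is a structurally valid ONNX model and show which inputs it expects. The following is a minimal sketch, assuming the export path from the usage example above; `check_onnx_model` is a hypothetical helper, and the input names and shapes it prints depend on the export script, so none are hard-coded here.

```python
from pathlib import Path


def check_onnx_model(onnx_path):
    """Validate an exported ONNX file and return its input names and shapes."""
    onnx_path = Path(onnx_path)
    if not onnx_path.is_file():
        raise FileNotFoundError(f"ONNX file not found: {onnx_path}")

    # Imported lazily so the path check above works without the ONNX packages.
    import onnx
    import onnxruntime as ort

    # Structural validation of the serialized graph.
    onnx.checker.check_model(onnx.load(str(onnx_path)))

    # Create a CPU session and read back the declared inputs.
    session = ort.InferenceSession(str(onnx_path), providers=["CPUExecutionProvider"])
    return [(inp.name, inp.shape) for inp in session.get_inputs()]


if __name__ == "__main__":
    try:
        for name, shape in check_onnx_model("weights/ddcolor-tiny.onnx"):
            print(name, shape)
    except FileNotFoundError as err:
        print(err)
```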

Citation

If our work is helpful for your research, please consider citing:

@inproceedings{kang2023ddcolor,

title={DDColor: Towards Photo-Realistic Image Colorization via Dual Decoders},

author={Kang, Xiaoyang and Yang, Tao and Ouyang, Wenqi and Ren, Peiran and Li, Lingzhi and Xie, Xuansong},

booktitle={Proceedings of the IEEE/CVF International Conference on Computer Vision},

pages={328--338},

year={2023}

}

Acknowledgments

We thank the authors of BasicSR for the awesome training pipeline.

Xintao Wang, Ke Yu, Kelvin C.K. Chan, Chao Dong and Chen Change Loy. BasicSR: Open Source Image and Video Restoration Toolbox. https://github.com/xinntao/BasicSR, 2020.

Some code is adapted from ColorFormer, BigColor, ConvNeXt, Mask2Former, and DETR. Thanks for their excellent work!