🦐 PicoClaw is an ultra-lightweight personal AI Assistant inspired by nanobot, refactored from the ground up in Go through a self-bootstrapping process, where the AI agent itself drove the entire architectural migration and code optimization.

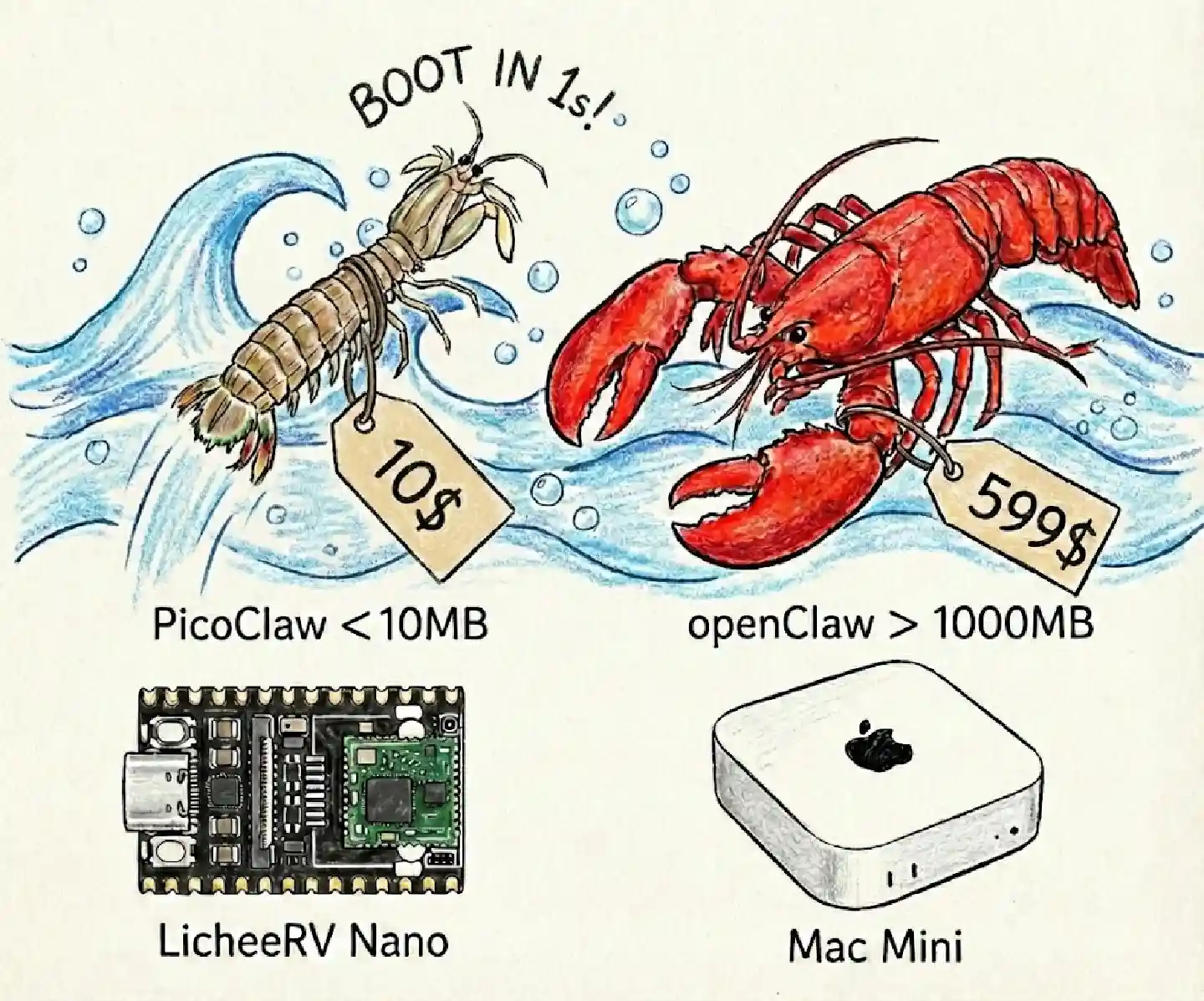

⚡️ Runs on $10 hardware with <10MB RAM: That's 99% less memory than OpenClaw and 98% cheaper than a Mac mini!

Caution

🚨 SECURITY & OFFICIAL CHANNELS

- NO CRYPTO: PicoClaw has NO official token/coin. All claims on pump.fun or other trading platforms are SCAMS.
- OFFICIAL DOMAIN: The ONLY official website is picoclaw.io, and the company website is sipeed.com.
- Warning: Many .ai/.org/.com/.net/... domains are registered by third parties.
- Warning: PicoClaw is in early development and may have unresolved network security issues. Do not deploy it to production environments before the v1.0 release.
- Note: PicoClaw has recently merged many PRs, which may result in a larger memory footprint (10–20MB) in the latest versions. We plan to prioritize resource optimization as soon as the current feature set stabilizes.

📢 News

2026-02-16 🎉 PicoClaw hit 12K stars in one week! Thank you all for your support! PicoClaw is growing faster than we ever imagined. Given the high volume of PRs, we urgently need community maintainers. Our volunteer roles and roadmap are officially posted here. We can't wait to have you on board!

2026-02-13 🎉 PicoClaw hit 5000 stars in 4 days! Thank you to the community! So many PRs and issues are coming in (during the Chinese New Year holidays) that we are finalizing the Project Roadmap and setting up the Developer Group to accelerate PicoClaw's development.

🚀 Call to Action: Please submit your feature requests in GitHub Discussions. We will review and prioritize them during our upcoming weekly meeting.

2026-02-09 🎉 PicoClaw launched! Built in 1 day to bring AI agents to $10 hardware with <10MB RAM. 🦐 PicoClaw, Let's Go!

✨ Features

🪶 Ultra-Lightweight: <10MB memory footprint (core functionality), 99% smaller than Clawdbot.

💰 Minimal Cost: Efficient enough to run on $10 hardware, 98% cheaper than a Mac mini.

⚡️ Lightning Fast: 400X faster startup; boots in 1 second even on a 0.6GHz single-core CPU.

🌍 True Portability: A single self-contained binary across RISC-V, ARM, MIPS, and x86. One click to Go!

🤖 AI-Bootstrapped: Autonomous Go-native implementation, with a 95% agent-generated core and human-in-the-loop refinement.

| | OpenClaw | NanoBot | PicoClaw |
|---|---|---|---|
| Language | TypeScript | Python | Go |
| RAM | >1GB | >100MB | <10MB |
| Startup (0.8GHz core) | >500s | >30s | <1s |
| Cost | Mac Mini $599 | Most Linux SBCs ~$50 | Any Linux board, as low as $10 |

🦾 Demonstration

🛠️ Standard Assistant Workflows

| 🧩 Full-Stack Engineer | 🗂️ Logging & Planning Management | 🔎 Web Search & Learning |
|---|---|---|
| Develop • Deploy • Scale | Schedule • Automate • Memory | Discovery • Insights • Trends |

📱 Run on old Android Phones

Give your decade-old phone a second life! Turn it into a smart AI Assistant with PicoClaw. Quick Start:

- Install Termux (available on F-Droid or Google Play).
- Run the following commands:

```bash
# Note: Replace v0.1.1 with the latest version from the Releases page
wget https://github.com/sipeed/picoclaw/releases/download/v0.1.1/picoclaw-linux-arm64
chmod +x picoclaw-linux-arm64
pkg install proot
termux-chroot ./picoclaw-linux-arm64 onboard
```

Then follow the instructions in the "Quick Start" section to complete the configuration!

🐜 Innovative Low-Footprint Deploy

PicoClaw can be deployed on almost any Linux device!

- $9.9 LicheeRV-Nano E (Ethernet) or W (WiFi 6) version, for a minimal home assistant
- $30–50 NanoKVM, or $100 NanoKVM-Pro, for automated server maintenance
- $50 MaixCAM or $100 MaixCAM2 for smart monitoring

picoclaw_detect_person.mp4

🌟 More Deployment Cases Await!

📦 Install

Install with precompiled binary

Download the binary for your platform from the Releases page.

Install from source (latest features, recommended for development)

```bash
git clone https://github.com/sipeed/picoclaw.git
cd picoclaw
make deps

# Build, no need to install
make build

# Build for multiple platforms
make build-all

# Build for Raspberry Pi Zero 2 W (32-bit: make build-linux-arm; 64-bit: make build-linux-arm64)
make build-pi-zero

# Build and install
make install
```

Raspberry Pi Zero 2 W: Use the binary that matches your OS: 32-bit Raspberry Pi OS → make build-linux-arm (output: build/picoclaw-linux-arm); 64-bit → make build-linux-arm64 (output: build/picoclaw-linux-arm64). Or run make build-pi-zero to build both.

🐳 Docker Compose

You can also run PicoClaw using Docker Compose without installing anything locally.

```bash
# 1. Clone this repo
git clone https://github.com/sipeed/picoclaw.git
cd picoclaw

# 2. First run: auto-generates docker/data/config.json, then exits
docker compose -f docker/docker-compose.yml --profile gateway up
# The container prints "First-run setup complete." and stops.

# 3. Set your API keys
vim docker/data/config.json   # Set provider API keys, bot tokens, etc.

# 4. Start
docker compose -f docker/docker-compose.yml --profile gateway up -d
```

Tip

Docker users: By default, the Gateway listens on 127.0.0.1, which is not accessible from the host. If you need to access the health endpoints or expose ports, set PICOCLAW_GATEWAY_HOST=0.0.0.0 in your environment or update config.json.

```bash
# 5. Check logs
docker compose -f docker/docker-compose.yml logs -f picoclaw-gateway

# 6. Stop
docker compose -f docker/docker-compose.yml --profile gateway down
```

Agent Mode (One-shot)

```bash
# Ask a question
docker compose -f docker/docker-compose.yml run --rm picoclaw-agent -m "What is 2+2?"

# Interactive mode
docker compose -f docker/docker-compose.yml run --rm picoclaw-agent
```

Update

```bash
docker compose -f docker/docker-compose.yml pull
docker compose -f docker/docker-compose.yml --profile gateway up -d
```

🚀 Quick Start

Tip

Set your API key in ~/.picoclaw/config.json.

Get API keys: OpenRouter (LLM) · Zhipu (LLM)

Web search is optional: get a free Tavily API key (1000 free queries/month), self-host SearXNG (free), use the Brave Search API (paid, $5/1000 queries), or rely on the built-in DuckDuckGo fallback.

1. Initialize: run `picoclaw onboard`

2. Configure (~/.picoclaw/config.json)

```json
{
  "agents": {
    "defaults": {
      "workspace": "~/.picoclaw/workspace",
      "model_name": "gpt4",
      "max_tokens": 8192,
      "temperature": 0.7,
      "max_tool_iterations": 20
    }
  },
  "model_list": [
    {
      "model_name": "gpt4",
      "model": "openai/gpt-5.2",
      "api_key": "your-api-key",
      "request_timeout": 300
    },
    {
      "model_name": "claude-sonnet-4.6",
      "model": "anthropic/claude-sonnet-4.6",
      "api_key": "your-anthropic-key"
    }
  ],
  "tools": {
    "web": {
      "brave": {
        "enabled": false,
        "api_key": "YOUR_BRAVE_API_KEY",
        "max_results": 5
      },
      "tavily": {
        "enabled": false,
        "api_key": "YOUR_TAVILY_API_KEY",
        "max_results": 5
      },
      "duckduckgo": {
        "enabled": true,
        "max_results": 5
      },
      "perplexity": {
        "enabled": false,
        "api_key": "YOUR_PERPLEXITY_API_KEY",
        "max_results": 5
      },
      "searxng": {
        "enabled": false,
        "base_url": "http://your-searxng-instance:8888",
        "max_results": 5
      }
    }
  }
}
```

New: The model_list configuration format lets you add providers with zero code changes. See Model Configuration for details. request_timeout is optional and is measured in seconds. If it is omitted or set to <= 0, PicoClaw uses the default timeout (120s).

3. Get API Keys

- LLM Provider: OpenRouter · Zhipu · Anthropic · OpenAI · Gemini

- Web Search (optional):

- Brave Search - Paid ($5/1000 queries, ~$5-6/month)

- Perplexity - AI-powered search with chat interface

- SearXNG - Self-hosted metasearch engine (free, no API key needed)

- Tavily - Optimized for AI Agents (1000 requests/month)

- DuckDuckGo - Built-in fallback (no API key required)

Note: See config.example.json for a complete configuration template.

4. Chat

```bash
picoclaw agent -m "What is 2+2?"
```

That's it! You have a working AI assistant in 2 minutes.

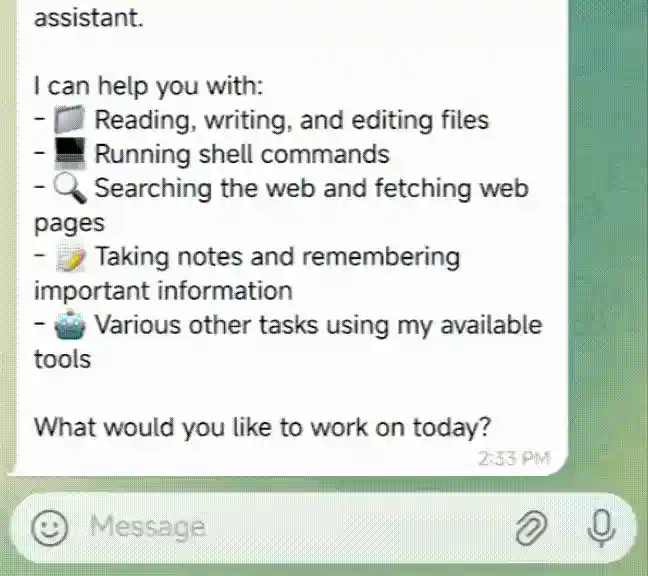

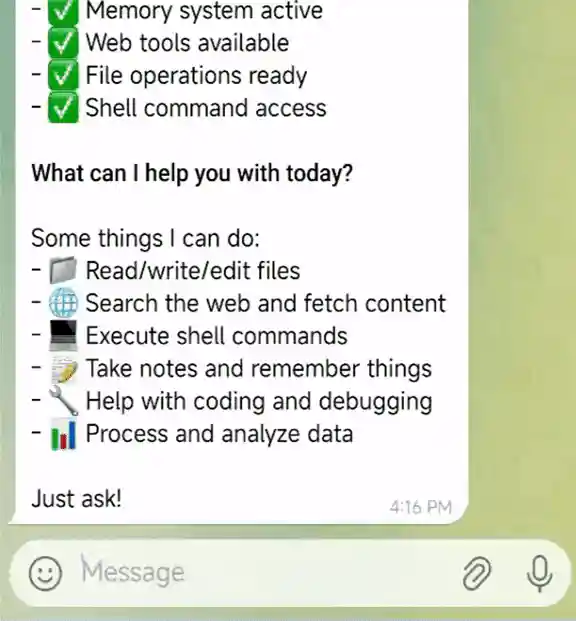

💬 Chat Apps

Talk to your PicoClaw through Telegram, Discord, WhatsApp, QQ, DingTalk, LINE, or WeCom.

Note: All webhook-based channels (LINE, WeCom, etc.) are served on a single shared Gateway HTTP server (gateway.host:gateway.port, default 127.0.0.1:18790). There are no per-channel ports to configure. Feishu uses WebSocket/SDK mode and does not use the shared HTTP webhook server.

| Channel | Setup |
|---|---|
| Telegram | Easy (just a token) |
| Discord | Easy (bot token + intents) |
| WhatsApp | Easy (native: QR scan; or bridge URL) |
| QQ | Easy (AppID + AppSecret) |
| DingTalk | Medium (app credentials) |
| LINE | Medium (credentials + webhook URL) |
| WeCom AI Bot | Medium (Token + AES key) |

Telegram (Recommended)

1. Create a bot

- Open Telegram and search for @BotFather
- Send /newbot and follow the prompts
- Copy the token

2. Configure

```json
{
  "channels": {
    "telegram": {
      "enabled": true,
      "token": "YOUR_BOT_TOKEN",
      "allow_from": ["YOUR_USER_ID"]
    }
  }
}
```

Get your user ID from @userinfobot on Telegram.

3. Run: `picoclaw gateway`

4. Telegram command menu (auto-registered at startup)

PicoClaw now keeps command definitions in one shared registry. On startup, Telegram automatically registers the supported bot commands (for example /start, /help, /show, /list), so the command menu and runtime behavior stay in sync.

Registering the Telegram command menu remains a channel-local discovery feature; generic command execution is handled centrally in the agent loop by the commands executor.

If command registration fails (due to transient network or API errors), the channel still starts and PicoClaw retries registration in the background.

Discord

1. Create a bot

- Go to https://discord.com/developers/applications

- Create an application → Bot → Add Bot

- Copy the bot token

2. Enable intents

- In the Bot settings, enable MESSAGE CONTENT INTENT

- (Optional) Enable SERVER MEMBERS INTENT if you plan to use allow lists based on member data

3. Get your User ID

- Discord Settings → Advanced → enable Developer Mode

- Right-click your avatar → Copy User ID

4. Configure

```json
{
  "channels": {
    "discord": {
      "enabled": true,
      "token": "YOUR_BOT_TOKEN",
      "allow_from": ["YOUR_USER_ID"]
    }
  }
}
```

5. Invite the bot

- OAuth2 → URL Generator
- Scopes: bot
- Bot Permissions: Send Messages, Read Message History
- Open the generated invite URL and add the bot to your server

Optional: Group trigger mode

By default the bot responds to all messages in a server channel. To restrict responses to @-mentions only, add:

```json
{
  "channels": {
    "discord": {
      "group_trigger": { "mention_only": true }
    }
  }
}
```

You can also trigger by keyword prefixes (e.g. !bot):

```json
{
  "channels": {
    "discord": {
      "group_trigger": { "prefixes": ["!bot"] }
    }
  }
}
```

6. Run: `picoclaw gateway`
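The group trigger options above boil down to a small decision function. This is a hypothetical sketch of that logic, not PicoClaw's code: groupTrigger and shouldRespond are illustrative names, and treating an @-mention as always sufficient when prefixes are configured is an assumption.

```go
package main

import (
	"fmt"
	"strings"
)

// groupTrigger mirrors the two documented group_trigger options (hypothetical Go type).
type groupTrigger struct {
	MentionOnly bool
	Prefixes    []string
}

// shouldRespond decides whether a server-channel message reaches the agent.
// With neither option set, every message is answered (the documented default).
func shouldRespond(t groupTrigger, text string, mentioned bool) bool {
	if mentioned {
		return true // assumption: a mention always triggers
	}
	for _, p := range t.Prefixes {
		if strings.HasPrefix(text, p) {
			return true
		}
	}
	// No mention and no matching prefix: answer only if no trigger is configured.
	return !t.MentionOnly && len(t.Prefixes) == 0
}

func main() {
	fmt.Println(shouldRespond(groupTrigger{}, "hello", false))                             // true
	fmt.Println(shouldRespond(groupTrigger{MentionOnly: true}, "hello", false))            // false
	fmt.Println(shouldRespond(groupTrigger{MentionOnly: true}, "hello", true))             // true
	fmt.Println(shouldRespond(groupTrigger{Prefixes: []string{"!bot"}}, "!bot hi", false)) // true
}
```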

WhatsApp (native via whatsmeow)

PicoClaw can connect to WhatsApp in two ways:

- Native (recommended): In-process using whatsmeow, with no separate bridge. Set "use_native": true and leave bridge_url empty. On first run, scan the QR code with WhatsApp (Linked Devices). The session is stored under your workspace (e.g. workspace/whatsapp/). The native channel is optional to keep the default binary small; build with -tags whatsapp_native (e.g. make build-whatsapp-native or go build -tags whatsapp_native ./cmd/...).
- Bridge: Connect to an external WebSocket bridge. Set bridge_url (e.g. ws://localhost:3001) and keep use_native false.

Configure (native)

```json
{
  "channels": {
    "whatsapp": {
      "enabled": true,
      "use_native": true,
      "session_store_path": "",
      "allow_from": []
    }
  }
}
```

If session_store_path is empty, the session is stored in <workspace>/whatsapp/. Run picoclaw gateway; on first run, scan the QR code printed in the terminal with WhatsApp → Linked Devices.

QQ

1. Create a bot

- Go to QQ Open Platform

- Create an application → Get AppID and AppSecret

2. Configure

```json
{
  "channels": {
    "qq": {
      "enabled": true,
      "app_id": "YOUR_APP_ID",
      "app_secret": "YOUR_APP_SECRET",
      "allow_from": []
    }
  }
}
```

Set allow_from to empty to allow all users, or specify QQ numbers to restrict access.

3. Run: `picoclaw gateway`

DingTalk

1. Create a bot

- Go to the DingTalk Open Platform

- Create an internal app

- Copy Client ID and Client Secret

2. Configure

```json
{
  "channels": {
    "dingtalk": {
      "enabled": true,
      "client_id": "YOUR_CLIENT_ID",
      "client_secret": "YOUR_CLIENT_SECRET",
      "allow_from": []
    }
  }
}
```

Set allow_from to empty to allow all users, or specify DingTalk user IDs to restrict access.

3. Run: `picoclaw gateway`

LINE

1. Create a LINE Official Account

- Go to LINE Developers Console

- Create a provider → Create a Messaging API channel

- Copy Channel Secret and Channel Access Token

2. Configure

```json
{
  "channels": {
    "line": {
      "enabled": true,
      "channel_secret": "YOUR_CHANNEL_SECRET",
      "channel_access_token": "YOUR_CHANNEL_ACCESS_TOKEN",
      "webhook_path": "/webhook/line",
      "allow_from": []
    }
  }
}
```

The LINE webhook is served on the shared Gateway server (gateway.host:gateway.port, default 127.0.0.1:18790).

3. Set up Webhook URL

LINE requires HTTPS for webhooks. Use a reverse proxy or tunnel:

```bash
# Example with ngrok (gateway default port is 18790)
ngrok http 18790
```

Then set the Webhook URL in the LINE Developers Console to https://your-domain/webhook/line and enable Use webhook.

4. Run: `picoclaw gateway`

In group chats, the bot responds only when @mentioned. Replies quote the original message.

WeCom (企业微信)

PicoClaw supports three types of WeCom integration:

- Option 1: WeCom Bot (Bot): easier setup, supports group chats
- Option 2: WeCom App (Custom App): more features, proactive messaging, private chat only
- Option 3: WeCom AI Bot (AI Bot): official AI Bot, streaming replies, supports group and private chat

See WeCom AI Bot Configuration Guide for detailed setup instructions.

Quick Setup - WeCom Bot:

1. Create a bot

- Go to WeCom Admin Console → Group Chat → Add Group Bot

- Copy the webhook URL (format: https://qyapi.weixin.qq.com/cgi-bin/webhook/send?key=xxx)

2. Configure

```json
{
  "channels": {
    "wecom": {
      "enabled": true,
      "token": "YOUR_TOKEN",
      "encoding_aes_key": "YOUR_ENCODING_AES_KEY",
      "webhook_url": "https://qyapi.weixin.qq.com/cgi-bin/webhook/send?key=YOUR_KEY",
      "webhook_path": "/webhook/wecom",
      "allow_from": []
    }
  }
}
```

The WeCom webhook is served on the shared Gateway server (gateway.host:gateway.port, default 127.0.0.1:18790).

Quick Setup - WeCom App:

1. Create an app

- Go to WeCom Admin Console → App Management → Create App

- Copy AgentId and Secret

- Go to "My Company" page, copy CorpID

2. Configure receive message

- In App details, click "Receive Message" → "Set API"

- Set the URL to http://your-server:18790/webhook/wecom-app
- Generate the Token and EncodingAESKey

3. Configure

```json
{
  "channels": {
    "wecom_app": {
      "enabled": true,
      "corp_id": "wwxxxxxxxxxxxxxxxx",
      "corp_secret": "YOUR_CORP_SECRET",
      "agent_id": 1000002,
      "token": "YOUR_TOKEN",
      "encoding_aes_key": "YOUR_ENCODING_AES_KEY",
      "webhook_path": "/webhook/wecom-app",
      "allow_from": []
    }
  }
}
```

4. Run: `picoclaw gateway`

Note: WeCom webhook callbacks are served on the Gateway port (default 18790). Use a reverse proxy for HTTPS.

Quick Setup - WeCom AI Bot:

1. Create an AI Bot

- Go to WeCom Admin Console → App Management → AI Bot

- In the AI Bot settings, configure the callback URL: http://your-server:18790/webhook/wecom-aibot
- Copy the Token and click "Random Generate" for EncodingAESKey

2. Configure

```json
{
  "channels": {
    "wecom_aibot": {
      "enabled": true,
      "token": "YOUR_TOKEN",
      "encoding_aes_key": "YOUR_43_CHAR_ENCODING_AES_KEY",
      "webhook_path": "/webhook/wecom-aibot",
      "allow_from": [],
      "welcome_message": "Hello! How can I help you?"
    }
  }
}
```

3. Run: `picoclaw gateway`

Note: WeCom AI Bot uses a streaming pull protocol, so there are no reply timeout concerns. Long tasks (>30 seconds) automatically switch to response_url push delivery.

Connect PicoClaw to the Agent Social Network by sending a single message via the CLI or any integrated chat app:

Read https://clawdchat.ai/skill.md and follow the instructions to join ClawdChat.ai

⚙️ Configuration

Config file: ~/.picoclaw/config.json

Environment Variables

You can override default paths using environment variables. This is useful for portable installations, containerized deployments, or running picoclaw as a system service. These variables are independent and control different paths.

| Variable | Description | Default Path |
|---|---|---|
| PICOCLAW_CONFIG | Overrides the path to the configuration file. This directly tells picoclaw which config.json to load, ignoring all other locations. | ~/.picoclaw/config.json |
| PICOCLAW_HOME | Overrides the root directory for picoclaw data. This changes the default location of the workspace and other data directories. | ~/.picoclaw |

Examples:

```bash
# Run picoclaw using a specific config file.
# The workspace path will be read from within that config file.
PICOCLAW_CONFIG=/etc/picoclaw/production.json picoclaw gateway

# Run picoclaw with all its data stored in /opt/picoclaw.
# Config will be loaded from the default ~/.picoclaw/config.json.
# Workspace will be created at /opt/picoclaw/workspace.
PICOCLAW_HOME=/opt/picoclaw picoclaw agent

# Use both for a fully customized setup
PICOCLAW_HOME=/srv/picoclaw PICOCLAW_CONFIG=/srv/picoclaw/main.json picoclaw gateway
```
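The path rules in these examples can be sketched as a small resolver. This is an illustration under stated assumptions, not PicoClaw's loader: resolvePaths is a hypothetical name, getenv is injected so the logic is testable (a real program would pass os.Getenv and os.UserHomeDir's result), and a workspace set inside the config file, which the first example notes takes effect, is ignored here.

```go
package main

import (
	"fmt"
	"path/filepath"
)

// resolvePaths applies the documented rules: PICOCLAW_CONFIG picks the config file,
// PICOCLAW_HOME moves the data root (and thus the default workspace), and the two
// are independent of each other.
func resolvePaths(getenv func(string) string, userHome string) (configPath, workspace string) {
	configPath = filepath.Join(userHome, ".picoclaw", "config.json")
	if v := getenv("PICOCLAW_CONFIG"); v != "" {
		configPath = v
	}
	dataRoot := filepath.Join(userHome, ".picoclaw")
	if v := getenv("PICOCLAW_HOME"); v != "" {
		dataRoot = v // does not move the config file
	}
	return configPath, filepath.Join(dataRoot, "workspace")
}

func main() {
	env := map[string]string{"PICOCLAW_HOME": "/opt/picoclaw"}
	cfg, ws := resolvePaths(func(k string) string { return env[k] }, "/home/pi")
	fmt.Println(cfg) // /home/pi/.picoclaw/config.json
	fmt.Println(ws)  // /opt/picoclaw/workspace
}
```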

Workspace Layout

PicoClaw stores data in your configured workspace (default: ~/.picoclaw/workspace):

```
~/.picoclaw/workspace/
├── sessions/          # Conversation sessions and history
├── memory/            # Long-term memory (MEMORY.md)
├── state/             # Persistent state (last channel, etc.)
├── cron/              # Scheduled jobs database
├── skills/            # Custom skills
├── AGENTS.md          # Agent behavior guide
├── HEARTBEAT.md       # Periodic task prompts (checked every 30 min)
├── IDENTITY.md        # Agent identity
├── SOUL.md            # Agent soul
├── TOOLS.md           # Tool descriptions
└── USER.md            # User preferences
```

Skill Sources

By default, skills are loaded from:

- ~/.picoclaw/workspace/skills (workspace)
- ~/.picoclaw/skills (global)
- <current-working-directory>/skills (builtin)
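A lookup over these three directories might look like the sketch below. The README does not say how conflicts are resolved, so the rule that earlier directories shadow later ones is an assumption; skillRoots and resolveSkill are hypothetical names, builtinOverride stands in for the PICOCLAW_BUILTIN_SKILLS variable described next, and the workspace entry assumes the default workspace location.

```go
package main

import (
	"fmt"
	"path/filepath"
)

// skillRoots lists the three documented skill directories in the order given above.
// builtinOverride stands in for $PICOCLAW_BUILTIN_SKILLS (empty means unset).
func skillRoots(home, cwd, builtinOverride string) []string {
	builtin := filepath.Join(cwd, "skills")
	if builtinOverride != "" {
		builtin = builtinOverride
	}
	return []string{
		filepath.Join(home, ".picoclaw", "workspace", "skills"), // workspace
		filepath.Join(home, ".picoclaw", "skills"),              // global
		builtin,                                                 // builtin
	}
}

// resolveSkill returns the first root containing the named skill (assumed precedence).
// exists abstracts the filesystem check; a real program might wrap os.Stat.
func resolveSkill(roots []string, name string, exists func(string) bool) (string, bool) {
	for _, root := range roots {
		if p := filepath.Join(root, name); exists(p) {
			return p, true
		}
	}
	return "", false
}

func main() {
	roots := skillRoots("/home/pi", "/srv/app", "")
	present := map[string]bool{"/home/pi/.picoclaw/skills/weather": true}
	p, ok := resolveSkill(roots, "weather", func(path string) bool { return present[path] })
	fmt.Println(p, ok) // /home/pi/.picoclaw/skills/weather true
}
```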

For advanced/test setups, you can override the builtin skills root with:

```bash
export PICOCLAW_BUILTIN_SKILLS=/path/to/skills
```

Unified Command Execution Policy

- Generic slash commands are executed through a single path in pkg/agent/loop.go via commands.Executor.
- Channel adapters no longer consume generic commands locally; they forward inbound text to the bus/agent path. Telegram still auto-registers supported commands at startup.
- An unknown slash command (for example /foo) passes through to normal LLM processing.
- A registered command that is unsupported on the current channel (for example /show on WhatsApp) returns an explicit user-facing error and stops further processing.
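The three outcomes of this policy can be sketched as one dispatch function. The registry layout here (command name mapped to the set of channels that support it) and the names dispatch and route are hypothetical stand-ins; the real commands.Executor API is not shown in this README.

```go
package main

import (
	"fmt"
	"strings"
)

// route labels what happens to one inbound message under the policy above.
type route string

const (
	toLLM    route = "llm"      // plain text or unknown slash command
	execute  route = "execute"  // registered command supported on this channel
	rejected route = "rejected" // registered command unsupported on this channel
)

// dispatch applies the documented decision for one message on one channel.
func dispatch(text, channel string, registry map[string]map[string]bool) route {
	if !strings.HasPrefix(text, "/") {
		return toLLM
	}
	name := strings.TrimPrefix(strings.Fields(text)[0], "/")
	channels, known := registry[name]
	switch {
	case !known:
		return toLLM // unknown command: let the LLM handle it
	case !channels[channel]:
		return rejected // known but unsupported here: explicit error, stop
	default:
		return execute
	}
}

func main() {
	registry := map[string]map[string]bool{
		"help": {"telegram": true, "whatsapp": true},
		"show": {"telegram": true},
	}
	fmt.Println(dispatch("/foo bar", "telegram", registry)) // llm
	fmt.Println(dispatch("/show", "whatsapp", registry))    // rejected
	fmt.Println(dispatch("/show", "telegram", registry))    // execute
	fmt.Println(dispatch("hello", "whatsapp", registry))    // llm
}
```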

🔒 Security Sandbox

PicoClaw runs in a sandboxed environment by default. The agent can only access files and execute commands within the configured workspace.

Default Configuration

```json
{
  "agents": {
    "defaults": {
      "workspace": "~/.picoclaw/workspace",
      "restrict_to_workspace": true
    }
  }
}
```

| Option | Default | Description |
|---|---|---|
| workspace | ~/.picoclaw/workspace | Working directory for the agent |
| restrict_to_workspace | true | Restrict file/command access to workspace |

Protected Tools

When restrict_to_workspace: true, the following tools are sandboxed:

| Tool | Function | Restriction |
|---|---|---|
| read_file | Read files | Only files within workspace |
| write_file | Write files | Only files within workspace |
| list_dir | List directories | Only directories within workspace |
| edit_file | Edit files | Only files within workspace |
| append_file | Append to files | Only files within workspace |
| exec | Execute commands | Command paths must be within workspace |
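A workspace boundary check like the one these tools rely on can be sketched with path/filepath. This is a lexical check under stated assumptions: withinWorkspace is a hypothetical name, relative paths are resolved against the workspace, and symlinks are not followed (a stricter sandbox would also need filepath.EvalSymlinks). PicoClaw's actual check may differ.

```go
package main

import (
	"fmt"
	"path/filepath"
	"strings"
)

// withinWorkspace reports whether target resolves to a path inside workspace.
// Relative targets are joined onto the workspace; ".." segments are cleaned first,
// so "../secret" escapes and is rejected.
func withinWorkspace(workspace, target string) bool {
	ws := filepath.Clean(workspace)
	if !filepath.IsAbs(target) {
		target = filepath.Join(ws, target)
	}
	rel, err := filepath.Rel(ws, filepath.Clean(target))
	if err != nil {
		return false
	}
	// Inside means: the workspace itself, or a relative path that does not climb out.
	return rel == "." || (rel != ".." && !strings.HasPrefix(rel, ".."+string(filepath.Separator)))
}

func main() {
	ws := "/home/pi/.picoclaw/workspace"
	for _, p := range []string{"notes/todo.md", "/home/pi/.picoclaw/workspace/memory/MEMORY.md", "../config.json", "/etc/passwd"} {
		fmt.Printf("%-48s %v\n", p, withinWorkspace(ws, p))
	}
}
```

Using filepath.Rel rather than a plain string-prefix test avoids treating a sibling such as /home/pi/.picoclaw/workspace-old as inside the workspace.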

Additional Exec Protection

Even with restrict_to_workspace: false, the exec tool blocks these dangerous commands:

- rm -rf, del /f, rmdir /s: bulk deletion
- format, mkfs, diskpart: disk formatting
- dd if=: disk imaging
- Writing to /dev/sd[a-z]: direct disk writes
- shutdown, reboot, poweroff: system shutdown
- Fork bomb: :(){ :|:& };:
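A deny-list like this is commonly implemented with regular expressions. The patterns below are illustrative approximations of the list above, written for Go's regexp (RE2) package; they are assumptions, not PicoClaw's actual rules, and isBlocked is a hypothetical name.

```go
package main

import (
	"fmt"
	"regexp"
)

// denyPatterns approximates the documented blocklist (illustrative only).
var denyPatterns = []*regexp.Regexp{
	regexp.MustCompile(`\brm\s+-(rf|fr)\b`),                     // rm -rf / rm -fr
	regexp.MustCompile(`(?i)\bdel\s+/f\b|\brmdir\s+/s\b`),       // Windows bulk deletion
	regexp.MustCompile(`(?i)\b(format|mkfs(\.\w+)?|diskpart)\b`), // disk formatting
	regexp.MustCompile(`\bdd\s+if=`),                            // disk imaging
	regexp.MustCompile(`>\s*/dev/sd[a-z]`),                      // redirecting output onto a disk
	regexp.MustCompile(`\b(shutdown|reboot|poweroff)\b`),        // system shutdown
	regexp.MustCompile(`:\(\)\s*\{\s*:\|:&\s*\};:`),             // classic bash fork bomb
}

// isBlocked reports whether any deny pattern matches the command line.
func isBlocked(cmd string) bool {
	for _, re := range denyPatterns {
		if re.MatchString(cmd) {
			return true
		}
	}
	return false
}

func main() {
	for _, c := range []string{"rm -rf /tmp/x", "ls -la", "sudo reboot"} {
		fmt.Printf("%-16s blocked=%v\n", c, isBlocked(c))
	}
}
```

Naive patterns like these both over-block (e.g. `git log --format=...` matches the `format` rule) and can be evaded by obfuscation, which is why the workspace restriction remains the primary boundary.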

Error Examples

```
[ERROR] tool: Tool execution failed
{tool=exec, error=Command blocked by safety guard (path outside working dir)}

[ERROR] tool: Tool execution failed
{tool=exec, error=Command blocked by safety guard (dangerous pattern detected)}
```

Disabling Restrictions (Security Risk)

If you need the agent to access paths outside the workspace:

Method 1: Config file

```json
{
  "agents": {
    "defaults": {
      "restrict_to_workspace": false
    }
  }
}
```

Method 2: Environment variable

```bash
export PICOCLAW_AGENTS_DEFAULTS_RESTRICT_TO_WORKSPACE=false
```

⚠️ Warning: Disabling this restriction allows the agent to access any path on your system. Use with caution, and only in controlled environments.

Security Boundary Consistency

The restrict_to_workspace setting applies consistently across all execution paths:

| Execution Path | Security Boundary |

|---|---|

| Main Agent | restrict_to_workspace ✅ |

| Subagent / Spawn | Inherits same restriction ✅ |

| Heartbeat tasks | Inherits same restriction ✅ |

All paths share the same workspace restriction — there's no way to bypass the security boundary through subagents or scheduled tasks.

Heartbeat (Periodic Tasks)

PicoClaw can perform periodic tasks automatically. Create a HEARTBEAT.md file in your workspace:

```markdown
# Periodic Tasks

- Check my email for important messages
- Review my calendar for upcoming events
- Check the weather forecast
```

The agent will read this file every 30 minutes (configurable) and execute any tasks using available tools.

Async Tasks with Spawn

For long-running tasks (web search, API calls), use the spawn tool to create a subagent:

```markdown
# Periodic Tasks

## Quick Tasks (respond directly)
- Report current time

## Long Tasks (use spawn for async)
- Search the web for AI news and summarize
- Check email and report important messages
```

Key behaviors:

| Feature | Description |

|---|---|

| spawn | Creates async subagent, doesn't block heartbeat |

| Independent context | Subagent has its own context, no session history |

| message tool | Subagent communicates with user directly via message tool |

| Non-blocking | After spawning, heartbeat continues to next task |

How Subagent Communication Works

```
Heartbeat triggers
    ↓
Agent reads HEARTBEAT.md
    ↓
For long task: spawn subagent
    ↓                              ↓
Continue to next task          Subagent works independently
    ↓                              ↓
All tasks done                 Subagent uses "message" tool
    ↓                              ↓
Respond HEARTBEAT_OK           User receives result directly
```

The subagent has access to tools (message, web_search, etc.) and can communicate with the user independently without going through the main agent.

Configuration:

```json
{
  "heartbeat": {
    "enabled": true,
    "interval": 30
  }
}
```

| Option | Default | Description |
|---|---|---|
| enabled | true | Enable/disable heartbeat |
| interval | 30 | Check interval in minutes (min: 5) |

Environment variables:

- PICOCLAW_HEARTBEAT_ENABLED=false to disable
- PICOCLAW_HEARTBEAT_INTERVAL=60 to change the interval

Providers

Note

Groq provides free voice transcription via Whisper. If configured, audio messages from any channel will be automatically transcribed at the agent level.

| Provider | Purpose | Get API Key |
|---|---|---|
| gemini | LLM (Gemini direct) | aistudio.google.com |
| zhipu | LLM (Zhipu direct) | bigmodel.cn |
| openrouter (to be tested) | LLM (recommended, access to all models) | openrouter.ai |
| anthropic (to be tested) | LLM (Claude direct) | console.anthropic.com |
| openai (to be tested) | LLM (GPT direct) | platform.openai.com |
| deepseek (to be tested) | LLM (DeepSeek direct) | platform.deepseek.com |
| qwen | LLM (Qwen direct) | dashscope.console.aliyun.com |
| groq | LLM + Voice transcription (Whisper) | console.groq.com |
| cerebras | LLM (Cerebras direct) | cerebras.ai |

Model Configuration (model_list)

What's New? PicoClaw now uses a model-centric configuration approach. Simply specify a model in vendor/model format (e.g. zhipu/glm-4.7) to add new providers, with zero code changes required!

This design also enables multi-agent support with flexible provider selection:

- Different agents, different providers: Each agent can use its own LLM provider

- Model fallbacks: Configure primary and fallback models for resilience

- Load balancing: Distribute requests across multiple endpoints

- Centralized configuration: Manage all providers in one place
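The load-balancing item above amounts to rotating through several model_list entries that share a model_name. A minimal round-robin sketch, assuming each entry differs only in api_base and api_key; endpoint, roundRobin, and pick are hypothetical names rather than PicoClaw's types.

```go
package main

import (
	"fmt"
	"sync/atomic"
)

// endpoint is one model_list entry reduced to the fields that differ.
type endpoint struct {
	APIBase string
	APIKey  string
}

// roundRobin hands out endpoints for one model_name in rotation.
// The atomic counter keeps it safe under concurrent requests; use it via a pointer.
type roundRobin struct {
	endpoints []endpoint
	next      atomic.Uint64
}

// pick returns the next endpoint in order, wrapping around at the end.
func (r *roundRobin) pick() endpoint {
	n := r.next.Add(1) - 1
	return r.endpoints[n%uint64(len(r.endpoints))]
}

func main() {
	rr := &roundRobin{endpoints: []endpoint{
		{APIBase: "https://api1.example.com/v1", APIKey: "sk-key1"},
		{APIBase: "https://api2.example.com/v1", APIKey: "sk-key2"},
	}}
	for i := 0; i < 3; i++ {
		fmt.Println(rr.pick().APIBase) // api1, api2, api1
	}
}
```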

📋 All Supported Vendors

| Vendor | model Prefix | Default API Base | Protocol | API Key |
|---|---|---|---|---|
| OpenAI | openai/ | https://api.openai.com/v1 | OpenAI | Get Key |
| Anthropic | anthropic/ | https://api.anthropic.com/v1 | Anthropic | Get Key |
| Zhipu AI (GLM) | zhipu/ | https://open.bigmodel.cn/api/paas/v4 | OpenAI | Get Key |
| DeepSeek | deepseek/ | https://api.deepseek.com/v1 | OpenAI | Get Key |
| Google Gemini | gemini/ | https://generativelanguage.googleapis.com/v1beta | OpenAI | Get Key |
| Groq | groq/ | https://api.groq.com/openai/v1 | OpenAI | Get Key |
| Moonshot | moonshot/ | https://api.moonshot.cn/v1 | OpenAI | Get Key |
| Qwen (Tongyi Qianwen) | qwen/ | https://dashscope.aliyuncs.com/compatible-mode/v1 | OpenAI | Get Key |
| NVIDIA | nvidia/ | https://integrate.api.nvidia.com/v1 | OpenAI | Get Key |
| Ollama | ollama/ | http://localhost:11434/v1 | OpenAI | Local (no key needed) |
| OpenRouter | openrouter/ | https://openrouter.ai/api/v1 | OpenAI | Get Key |
| LiteLLM Proxy | litellm/ | http://localhost:4000/v1 | OpenAI | Your LiteLLM proxy key |
| VLLM | vllm/ | http://localhost:8000/v1 | OpenAI | Local |
| Cerebras | cerebras/ | https://api.cerebras.ai/v1 | OpenAI | Get Key |
| Volcengine | volcengine/ | https://ark.cn-beijing.volces.com/api/v3 | OpenAI | Get Key |
| Shengsuanyun | shengsuanyun/ | https://router.shengsuanyun.com/api/v1 | OpenAI | - |
| Antigravity | antigravity/ | Google Cloud | Custom | OAuth only |
| GitHub Copilot | github-copilot/ | localhost:4321 | gRPC | - |

Basic Configuration

```json
{
  "model_list": [
    {
      "model_name": "gpt-5.2",
      "model": "openai/gpt-5.2",
      "api_key": "sk-your-openai-key"
    },
    {
      "model_name": "claude-sonnet-4.6",
      "model": "anthropic/claude-sonnet-4.6",
      "api_key": "sk-ant-your-key"
    },
    {
      "model_name": "glm-4.7",
      "model": "zhipu/glm-4.7",
      "api_key": "your-zhipu-key"
    }
  ],
  "agents": {
    "defaults": {
      "model": "gpt-5.2"
    }
  }
}
```

Vendor-Specific Examples

OpenAI

```json
{
  "model_name": "gpt-5.2",
  "model": "openai/gpt-5.2",
  "api_key": "sk-..."
}
```

Zhipu AI (GLM)

```json
{
  "model_name": "glm-4.7",
  "model": "zhipu/glm-4.7",
  "api_key": "your-key"
}
```

DeepSeek

```json
{
  "model_name": "deepseek-chat",
  "model": "deepseek/deepseek-chat",
  "api_key": "sk-..."
}
```

Anthropic (with API key)

```json
{
  "model_name": "claude-sonnet-4.6",
  "model": "anthropic/claude-sonnet-4.6",
  "api_key": "sk-ant-your-key"
}
```

Run picoclaw auth login --provider anthropic to paste your API token.

Ollama (local)

```json
{
  "model_name": "llama3",
  "model": "ollama/llama3"
}
```

Custom Proxy/API

```json
{
  "model_name": "my-custom-model",
  "model": "openai/custom-model",
  "api_base": "https://my-proxy.com/v1",
  "api_key": "sk-...",
  "request_timeout": 300
}
```

LiteLLM Proxy

```json
{
  "model_name": "lite-gpt4",
  "model": "litellm/lite-gpt4",
  "api_base": "http://localhost:4000/v1",
  "api_key": "sk-..."
}
```

PicoClaw strips only the outer litellm/ prefix before sending the request, so proxy aliases like litellm/lite-gpt4 send lite-gpt4, while litellm/openai/gpt-4o sends openai/gpt-4o.
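Stripping only the outer prefix means splitting the model string at its first slash. The sketch below illustrates that rule with strings.Cut; splitModel is a hypothetical name, and returning an empty vendor for a model string with no slash is an assumption, since the README does not say how bare names are handled.

```go
package main

import (
	"fmt"
	"strings"
)

// splitModel separates the vendor prefix from the model ID sent upstream.
// Only the first "/" is used, so nested IDs keep their inner prefix.
func splitModel(model string) (vendor, id string) {
	v, rest, found := strings.Cut(model, "/")
	if !found {
		return "", model // no vendor prefix (assumed behavior)
	}
	return v, rest
}

func main() {
	for _, m := range []string{"zhipu/glm-4.7", "litellm/lite-gpt4", "litellm/openai/gpt-4o"} {
		vendor, id := splitModel(m)
		fmt.Printf("%s -> vendor=%s, sent as %q\n", m, vendor, id)
	}
}
```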

Load Balancing

Configure multiple endpoints for the same model name, and PicoClaw will automatically round-robin between them:

```json
{
  "model_list": [
    {
      "model_name": "gpt-5.2",
      "model": "openai/gpt-5.2",
      "api_base": "https://api1.example.com/v1",
      "api_key": "sk-key1"
    },
    {
      "model_name": "gpt-5.2",
      "model": "openai/gpt-5.2",
      "api_base": "https://api2.example.com/v1",
      "api_key": "sk-key2"
    }
  ]
}
```

Migration from Legacy providers Config

The old providers configuration is deprecated but still supported for backward compatibility.

Old Config (deprecated):

```json
{
  "providers": {
    "zhipu": {
      "api_key": "your-key",
      "api_base": "https://open.bigmodel.cn/api/paas/v4"
    }
  },
  "agents": {
    "defaults": {
      "provider": "zhipu",
      "model": "glm-4.7"
    }
  }
}
```

New Config (recommended):

```json
{
  "model_list": [
    {
      "model_name": "glm-4.7",
      "model": "zhipu/glm-4.7",
      "api_key": "your-key"
    }
  ],
  "agents": {
    "defaults": {
      "model": "glm-4.7"
    }
  }
}
```

For a detailed migration guide, see docs/migration/model-list-migration.md.

Provider Architecture

PicoClaw routes providers by protocol family:

- OpenAI-compatible protocol: OpenRouter, OpenAI-compatible gateways, Groq, Zhipu, and vLLM-style endpoints.

- Anthropic protocol: Claude-native API behavior.

- Codex/OAuth path: OpenAI OAuth/token authentication route.

This keeps the runtime lightweight while making new OpenAI-compatible backends mostly a config operation (api_base + api_key).

Zhipu

1. Get API key and base URL

- Get API key

2. Configure

```json
{
  "agents": {
    "defaults": {
      "workspace": "~/.picoclaw/workspace",
      "model": "glm-4.7",
      "max_tokens": 8192,
      "temperature": 0.7,
      "max_tool_iterations": 20
    }
  },
  "providers": {
    "zhipu": {
      "api_key": "Your API Key",
      "api_base": "https://open.bigmodel.cn/api/paas/v4"
    }
  }
}
```

3. Run

```bash
picoclaw agent -m "Hello"
```

Full config example

```json
{
  "agents": {
    "defaults": {
      "model": "anthropic/claude-opus-4-5"
    }
  },
  "session": {
    "dm_scope": "per-channel-peer",
    "backlog_limit": 20
  },
  "providers": {
    "openrouter": {
      "api_key": "sk-or-v1-xxx"
    },
    "groq": {
      "api_key": "gsk_xxx"
    }
  },
  "channels": {
    "telegram": {
      "enabled": true,
      "token": "123456:ABC...",
      "allow_from": ["123456789"]
    },
    "discord": {
      "enabled": true,
      "token": "",
      "allow_from": [""]
    },
    "whatsapp": {
      "enabled": false,
      "bridge_url": "ws://localhost:3001",
      "use_native": false,
      "session_store_path": "",
      "allow_from": []
    },
    "feishu": {
      "enabled": false,
      "app_id": "cli_xxx",
      "app_secret": "xxx",
      "encrypt_key": "",
      "verification_token": "",
      "allow_from": []
    },
    "qq": {
      "enabled": false,
      "app_id": "",
      "app_secret": "",
      "allow_from": []
    }
  },
  "tools": {
    "web": {
      "brave": {
        "enabled": false,
        "api_key": "BSA...",
        "max_results": 5
      },
      "duckduckgo": {
        "enabled": true,
        "max_results": 5
      },
      "perplexity": {
        "enabled": false,
        "api_key": "",
        "max_results": 5
      },
      "searxng": {
        "enabled": false,
        "base_url": "http://localhost:8888",
        "max_results": 5
      }
    },
    "cron": {
      "exec_timeout_minutes": 5
    }
  },
  "heartbeat": {
    "enabled": true,
    "interval": 30
  }
}
```

CLI Reference

| Command | Description |
|---|---|
| picoclaw onboard | Initialize config & workspace |
| picoclaw agent -m "..." | Chat with the agent |
| picoclaw agent | Interactive chat mode |
| picoclaw gateway | Start the gateway |
| picoclaw status | Show status |
| picoclaw cron list | List all scheduled jobs |
| picoclaw cron add ... | Add a scheduled job |

Scheduled Tasks / Reminders

PicoClaw supports scheduled reminders and recurring tasks through the cron tool:

- One-time reminders: "Remind me in 10 minutes" → triggers once after 10min

- Recurring tasks: "Remind me every 2 hours" → triggers every 2 hours

- Cron expressions: "Remind me at 9am daily" → uses cron expression

Jobs are stored in ~/.picoclaw/workspace/cron/ and processed automatically.

🤝 Contribute & Roadmap

PRs welcome! The codebase is intentionally small and readable. 🤗

See our full Community Roadmap.

We are building a developer group; join after your first merged PR!

User Groups:

Discord: https://discord.gg/V4sAZ9XWpN

🐛 Troubleshooting

Web search says "API key configuration issue"

This is normal if you haven't configured a search API key yet. PicoClaw will provide helpful links for manual searching.

Search Provider Priority

PicoClaw automatically selects the best available search provider in this order:

- Perplexity (if enabled and API key configured) - AI-powered search with citations

- Brave Search (if enabled and API key configured) - Privacy-focused paid API ($5/1000 queries)

- SearXNG (if enabled and base_url configured) - Self-hosted metasearch aggregating 70+ engines (free)

- DuckDuckGo (if enabled, default fallback) - No API key required (free)
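The priority order above is a first-match scan. This sketch encodes it directly; searchConfig and pickSearchProvider are hypothetical names. Tavily appears in the Quick Start config but not in this priority list, so it is left out rather than guessed at.

```go
package main

import "fmt"

// searchConfig holds the fields that matter for provider selection.
type searchConfig struct {
	PerplexityEnabled bool
	PerplexityKey     string
	BraveEnabled      bool
	BraveKey          string
	SearXNGEnabled    bool
	SearXNGBaseURL    string
	DuckDuckGoEnabled bool
}

// pickSearchProvider returns the first usable provider in the documented order.
func pickSearchProvider(c searchConfig) (string, bool) {
	switch {
	case c.PerplexityEnabled && c.PerplexityKey != "":
		return "perplexity", true
	case c.BraveEnabled && c.BraveKey != "":
		return "brave", true
	case c.SearXNGEnabled && c.SearXNGBaseURL != "":
		return "searxng", true
	case c.DuckDuckGoEnabled:
		return "duckduckgo", true
	}
	return "", false
}

func main() {
	// Brave is enabled but has no key, so selection falls through to DuckDuckGo.
	name, ok := pickSearchProvider(searchConfig{BraveEnabled: true, DuckDuckGoEnabled: true})
	fmt.Println(name, ok) // duckduckgo true
}
```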

Web Search Configuration Options

Option 1 (Best Results): Perplexity AI Search

```json
{
  "tools": {
    "web": {
      "perplexity": {
        "enabled": true,
        "api_key": "YOUR_PERPLEXITY_API_KEY",
        "max_results": 5
      }
    }
  }
}
```

Option 2 (Paid API): Get an API key at https://brave.com/search/api ($5/1000 queries, ~$5-6/month)

```json
{
  "tools": {
    "web": {
      "brave": {
        "enabled": true,
        "api_key": "YOUR_BRAVE_API_KEY",
        "max_results": 5
      }
    }
  }
}
```

Option 3 (Self-Hosted): Deploy your own SearXNG instance

```json
{
  "tools": {
    "web": {
      "searxng": {
        "enabled": true,
        "base_url": "http://your-server:8888",
        "max_results": 5
      }
    }
  }
}
```

Benefits of SearXNG:

- Zero cost: No API fees or rate limits

- Privacy-focused: Self-hosted, no tracking

- Aggregate results: Queries 70+ search engines simultaneously

- Perfect for cloud VMs: Solves datacenter IP blocking issues (Oracle Cloud, GCP, AWS, Azure)

- No API key needed: Just deploy and configure the base URL

Option 4 (No Setup Required): DuckDuckGo is enabled by default as fallback (no API key needed)

Add the key to ~/.picoclaw/config.json if using Brave:

```json
{
  "tools": {
    "web": {
      "brave": {
        "enabled": true,
        "api_key": "YOUR_BRAVE_API_KEY",
        "max_results": 5
      },
      "duckduckgo": {
        "enabled": true,
        "max_results": 5
      },
      "perplexity": {
        "enabled": false,
        "api_key": "YOUR_PERPLEXITY_API_KEY",
        "max_results": 5
      },
      "searxng": {
        "enabled": false,
        "base_url": "http://your-searxng-instance:8888",
        "max_results": 5
      }
    }
  }
}
```

Getting content filtering errors

Some providers (like Zhipu) have content filtering. Try rephrasing your query or use a different model.

Telegram bot says "Conflict: terminated by other getUpdates"

This happens when another instance of the bot is running. Make sure only one picoclaw gateway is running at a time.

📝 API Key Comparison

| Service | Free Tier | Use Case |

|---|---|---|

| OpenRouter | 200K tokens/month | Multiple models (Claude, GPT-4, etc.) |

| Zhipu | 200K tokens/month | Best for Chinese users |

| Brave Search | Paid ($5/1000 queries) | Web search functionality |

| SearXNG | Unlimited (self-hosted) | Privacy-focused metasearch (70+ engines) |

| Groq | Free tier available | Fast inference (Llama, Mixtral) |

| Cerebras | Free tier available | Fast inference (Llama, Qwen, etc.) |