Dexterous Functional Pre-Grasp Manipulation with Diffusion Policy

This repo is the official implementation of Dexterous Functional Pre-Grasp Manipulation with Diffusion Policy. Please note that this is an early release; we will refine it and publish a new version in the future.

TODOs (Under Development):

- Point Cloud Pretraining

- Pose Clustering

- Expert Demo Collection

- Refactor

Overview

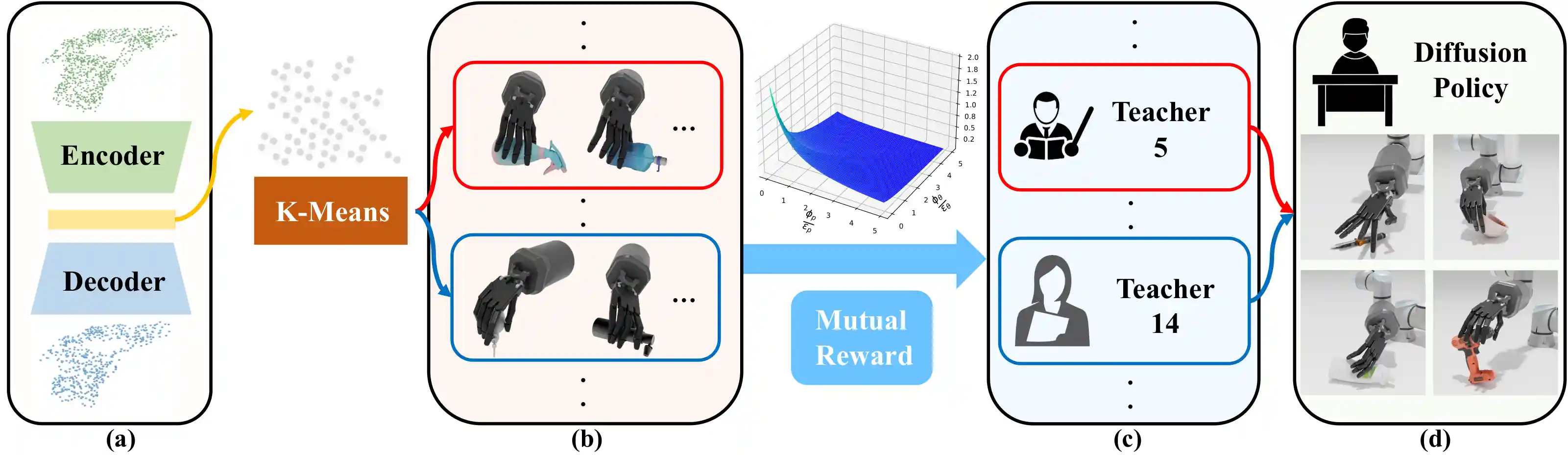

In this paper, we focus on a task called Dexterous Functional Pre-Grasp Manipulation that aims to train a policy for controlling a robotic arm and dexterous hand to reorient and reposition the object to achieve the functional goal pose.

We propose a teacher-student learning approach that utilizes a novel mutual reward. Additionally, we introduce a pipeline that employs a mixture-of-experts strategy to learn diverse manipulation policies, followed by a diffusion policy to capture complex action distributions from these experts.

Contents of this repo are as follows:

Installation

Requirements

The code has been tested on Ubuntu 20.04 with Python 3.8 and PyTorch 1.10.0.

Install

IsaacGym:

Refer to the IsaacGym installation instructions here. We currently support the Preview Release 4 version of IsaacGym.

IsaacGymEnvs:

Refer to the IsaacGymEnvs installation instructions here.

Other Dependencies

Python packages:

- rich

- pre-commit

- python-dotenv

- skrl==0.10.2

- ipdb

- transforms3d

- open3d

- pytorch3d

- diffusers

- einops

- zarr

- numpy==1.21.6

- trimesh

- pycollada
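As a quick sanity check after installation, the following minimal sketch reports which of the packages listed above are missing or installed at a different version than the pinned one. It assumes that each name in the list is also the installed distribution name, which may not hold for every install method (for example, a source build of pytorch3d). The helper `check_requirements` is illustrative and not part of this repo.

```python
# Sketch: report listed packages that are missing or at an unexpected version.
# ASSUMPTION: the names below are the installed distribution names.
# Only skrl and numpy are pinned in the list above.
from importlib import metadata

REQUIRED = {
    "rich": None,
    "pre-commit": None,
    "python-dotenv": None,
    "skrl": "0.10.2",
    "ipdb": None,
    "transforms3d": None,
    "open3d": None,
    "pytorch3d": None,
    "diffusers": None,
    "einops": None,
    "zarr": None,
    "numpy": "1.21.6",
    "trimesh": None,
    "pycollada": None,
}


def check_requirements(required, get_version=metadata.version):
    """Return a list of human-readable problems; an empty list means all good."""
    problems = []
    for name, pinned in required.items():
        try:
            installed = get_version(name)
        except metadata.PackageNotFoundError:
            problems.append(f"{name}: not installed")
            continue
        # Unpinned packages (None) only need to be present.
        if pinned is not None and installed != pinned:
            problems.append(f"{name}: found {installed}, expected {pinned}")
    return problems


if __name__ == "__main__":
    for line in check_requirements(REQUIRED) or ["all listed packages found"]:
        print(line)
```

Passing the version lookup as a parameter keeps the check easy to exercise without touching the real environment.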

Dataset

Asset

You can download the object meshes from DexFunPreGrasp/assets, place the archive in the following directory, and extract it.

Functional Grasp Pose Dataset

You can download the retargeted functional grasping dataset from DexFunPreGrasp/pose_datasets, place the archive in the following directory, and extract it.

Training

Teacher

Train Multiple Experts

We currently assume that you use the clusters we generated previously; there are 20 clusters in total. You can train an expert for each cluster by running the following shell command and changing the "cluster" argument. We provide a pretrained checkpoint for each cluster in "ckpt".
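To train all experts in one pass, the per-cluster command can be generated in a loop. The sketch below only builds and prints the commands as a dry run. The script name `./train_expert.sh`, the `cluster=<id>` argument syntax, and zero-based cluster IDs are placeholders assumed for illustration, not taken from this repo; substitute the actual training command and ID range.

```python
# Sketch: build one training command per cluster (dry run only).
# ASSUMPTIONS (hypothetical, not from the repo): the script name
# "./train_expert.sh", the "cluster=<id>" argument syntax, and
# zero-based cluster IDs. Replace them with the real training command.
import shlex

NUM_CLUSTERS = 20  # total number of clusters stated above


def build_commands(script="./train_expert.sh", num_clusters=NUM_CLUSTERS):
    """Return a list of argv lists, one per cluster ID."""
    return [["sh", script, f"cluster={i}"] for i in range(num_clusters)]


if __name__ == "__main__":
    # Print the commands instead of launching 20 training jobs.
    for argv in build_commands():
        print(" ".join(shlex.quote(arg) for arg in argv))
```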

Expert Demonstration Collection

After all experts have finished training, collect demonstrations by running the following shell command. Please note that there is a known issue with data collection, which we will fix later.

sh ./collect_expert_demonstration.sh

Create Expert Dataset

We then create the expert dataset from the generated data by running the following shell command. We provide the generated dataset at DexFunPreGrasp/expert_datasets.

sh ./create_expert_dataset.sh
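Because dataset creation depends on the collected demonstrations, the two scripts need to run in order, and the second should not start if the first fails. A minimal sketch of that sequencing follows; the script names are the ones given in this README, while running them from the repository root and the helper `run_steps` are assumptions made for illustration.

```python
# Sketch: run demonstration collection, then dataset creation, stopping at
# the first failure. The script names come from this README.
# ASSUMPTION: the commands are launched from the repository root.
import subprocess

STEPS = [
    ["sh", "./collect_expert_demonstration.sh"],
    ["sh", "./create_expert_dataset.sh"],
]


def run_steps(steps, run=subprocess.run):
    """Run each command in order and return the commands that completed.

    check=True makes subprocess.run raise CalledProcessError on a non-zero
    exit code, so later steps are skipped after a failure.
    """
    completed = []
    for argv in steps:
        run(argv, check=True)
        completed.append(argv)
    return completed
```

Calling `run_steps(STEPS)` executes both scripts; an exception from the first step means the dataset script was never started.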

Student

Training

You can run the following shell command to train the diffusion policy. Please note that we currently support only DDPM. To switch to point cloud training, set "PCL=True" on line 20 of "src/algorithms/DDIM/policy/DDIM.py".

Evaluation

You can download the pretrained checkpoint from DexFunPreGrasp/pretrained_ckpt and place it in the "ckpt" directory.

Acknowledgement

The code and dataset used in this project are built on the following repositories:

Environment:

Dataset:

Diffusion:

Clustering:

Sim-Web-Visualizer:

Contact

If you have any suggestions or questions, please feel free to contact us:

Yunchong Gan: yunchong@pku.edu.cn

Tianhao Wu: thwu@stu.pku.edu.cn

License

This project is released under the MIT license. See LICENSE for additional details.