Niv Haim


Reconstructing Training Data From Real-World Models Trained with Transfer Learning

Yakir Oz, Gilad Yehudai, Gal Vardi, Itai Antebi, Michal Irani, Niv Haim

SaTML 2026

BibTeX / ArXiv / Code


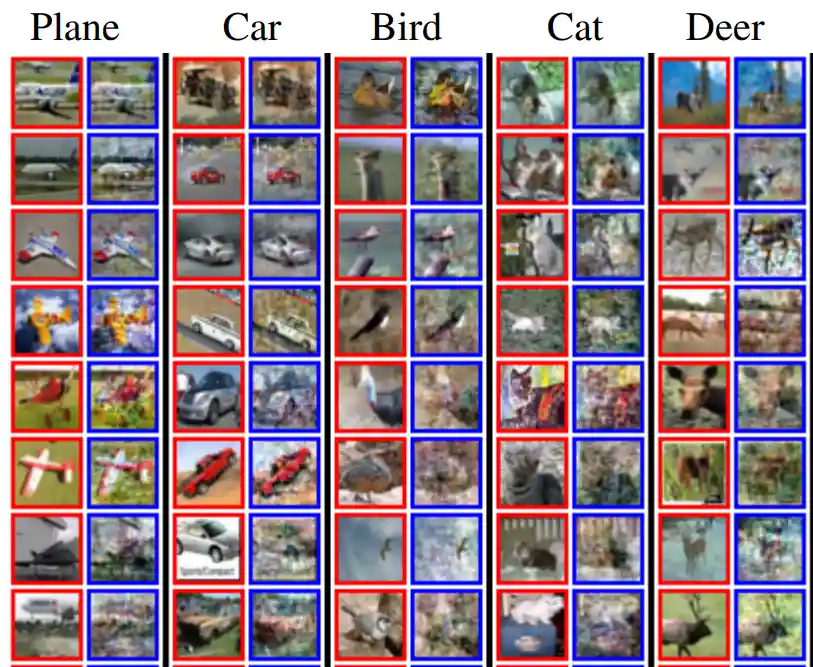

Deconstructing Data Reconstruction: Multiclass, Weight Decay and General Losses

Gon Buzaglo*, Niv Haim*, Gilad Yehudai, Gal Vardi, Yakir Oz, Yaniv Nikankin, Michal Irani

NeurIPS 2023

BibTeX / ArXiv / Code / Video


SinFusion: Training Diffusion Models on a Single Image or Video

Yaniv Nikankin*, Niv Haim*, Michal Irani

ICML 2023

BibTeX / ArXiv / Code / Project Page


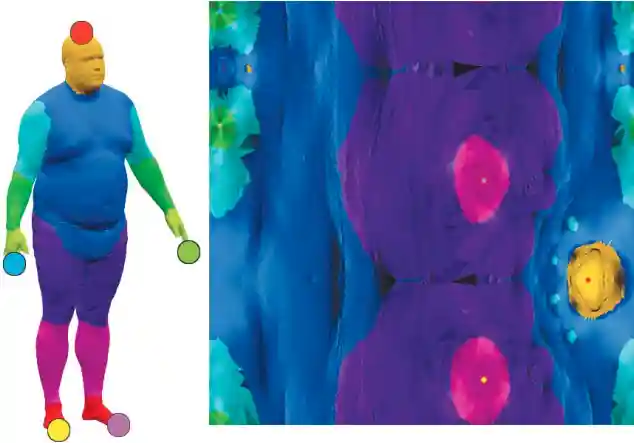

Reconstructing Training Data from Trained Neural Networks

Niv Haim*, Gal Vardi*, Gilad Yehudai*, Ohad Shamir, Michal Irani

NeurIPS 2022 (Oral)

BibTeX / ArXiv / Code / Project Page / Video


Diverse Generation from a Single Video Made Possible

Niv Haim*, Ben Feinstein*, Niv Granot, Assaf Shocher, Shai Bagon, Tali Dekel, Michal Irani

ECCV 2022

BibTeX / ArXiv / Code / Video / Project Page


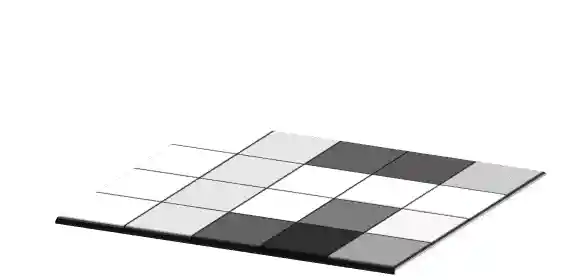

From Discrete to Continuous Convolution Layers

Assaf Shocher*, Ben Feinstein*, Niv Haim*, Michal Irani

Preprint, 2020

BibTeX / ArXiv


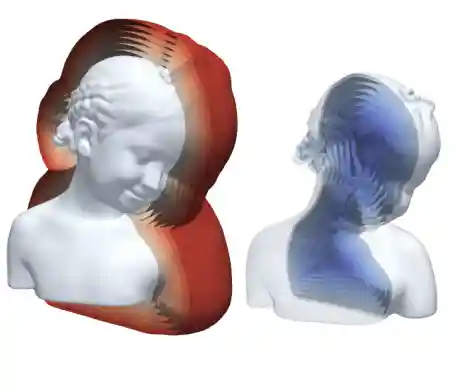

Implicit Geometric Regularization for Learning Shapes

Amos Gropp, Lior Yariv, Niv Haim, Matan Atzmon, Yaron Lipman

ICML 2020

BibTeX / ArXiv / Code / Video


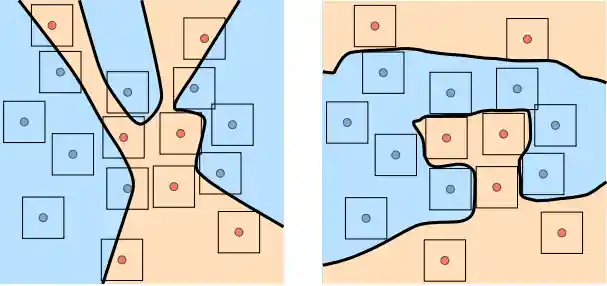

Controlling Neural Level Sets

Matan Atzmon, Niv Haim, Lior Yariv, Ofer Israelov, Haggai Maron, Yaron Lipman

NeurIPS 2019

BibTeX / ArXiv / Code / Poster


Surface Networks via General Covers

Niv Haim*, Nimrod Segol*, Heli Ben-Hamu, Haggai Maron, Yaron Lipman

ICCV 2019

BibTeX / ArXiv / Code


Extreme close approaches in hierarchical triple systems with comparable masses

Niv Haim, Boaz Katz

MNRAS 2018

BibTeX / ArXiv / Code
