Trending Papers - Hugging Face

by AK and the research community

Flavors of Moonshine: Tiny Specialized ASR Models for Edge Devices

Monolingual ASR models trained on a balanced mix of high-quality, pseudo-labeled, and synthetic data outperform multilingual models for small model sizes, achieving superior error rates and enabling on-device ASR for underrepresented languages.

Published on Sep 2, 2025

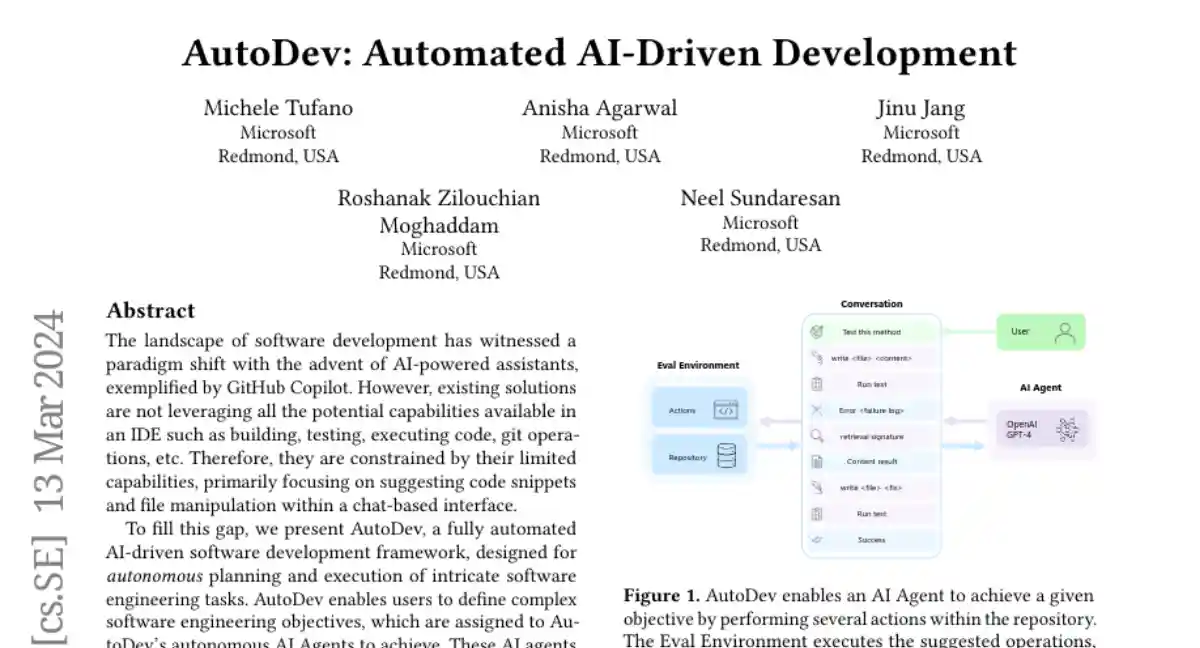

AutoDev: Automated AI-Driven Development

AutoDev is an AI-driven software development framework that automates complex engineering tasks within a secure Docker environment, achieving high performance in code and test generation.

5 authors · Published on Mar 13, 2024

Mobile-Agent-v3: Foundamental Agents for GUI Automation

GUI-Owl and Mobile-Agent-v3 are open-source GUI agent models and frameworks that achieve state-of-the-art performance across various benchmarks using innovations in environment infrastructure, agent capabilities, and scalable reinforcement learning.

Published on Aug 21, 2025

Mem0: Building Production-Ready AI Agents with Scalable Long-Term Memory

Mem0, a memory-centric architecture with graph-based memory, enhances long-term conversational coherence in LLMs by efficiently extracting, consolidating, and retrieving information, outperforming existing memory systems in terms of accuracy and computational efficiency.

Published on Apr 28, 2025

Qwen3-TTS Technical Report

The Qwen3-TTS series presents advanced multilingual text-to-speech models with voice cloning and controllable speech generation capabilities, utilizing dual-track LM architecture and specialized speech tokenizers for efficient streaming synthesis.

Qwen · Published on Jan 22, 2026

Agent READMEs: An Empirical Study of Context Files for Agentic Coding

Agentic coding tools receive goals written in natural language as input, break them down into specific tasks, and write or execute the actual code with minimal human intervention. Central to this process are agent context files ("READMEs for agents") that provide persistent, project-level instructions. In this paper, we conduct the first large-scale empirical study of 2,303 agent context files from 1,925 repositories to characterize their structure, maintenance, and content. We find that these files are not static documentation but complex, difficult-to-read artifacts that evolve like configuration code, maintained through frequent, small additions. Our content analysis of 16 instruction types shows that developers prioritize functional context, such as build and run commands (62.3%), implementation details (69.9%), and architecture (67.7%). We also identify a significant gap: non-functional requirements like security (14.5%) and performance (14.5%) are rarely specified. These findings indicate that while developers use context files to make agents functional, they provide few guardrails to ensure that agent-written code is secure or performant, highlighting the need for improved tooling and practices.

11 authors · Published on Nov 17, 2025

Arch-Router: Aligning LLM Routing with Human Preferences

A preference-aligned routing framework using a compact 1.5B model effectively matches queries to user-defined domains and action types, outperforming proprietary models in subjective evaluation criteria.

Published on Jun 19, 2025

Very Large-Scale Multi-Agent Simulation in AgentScope

Enhancements to the AgentScope platform improve scalability, efficiency, and ease of use for large-scale multi-agent simulations through distributed mechanisms, flexible environments, and user-friendly tools.

Published on Jul 25, 2024

LightRAG: Simple and Fast Retrieval-Augmented Generation

LightRAG improves Retrieval-Augmented Generation by integrating graph structures for enhanced contextual awareness and efficient information retrieval, achieving better accuracy and response times.

5 authors · Published on Oct 8, 2024

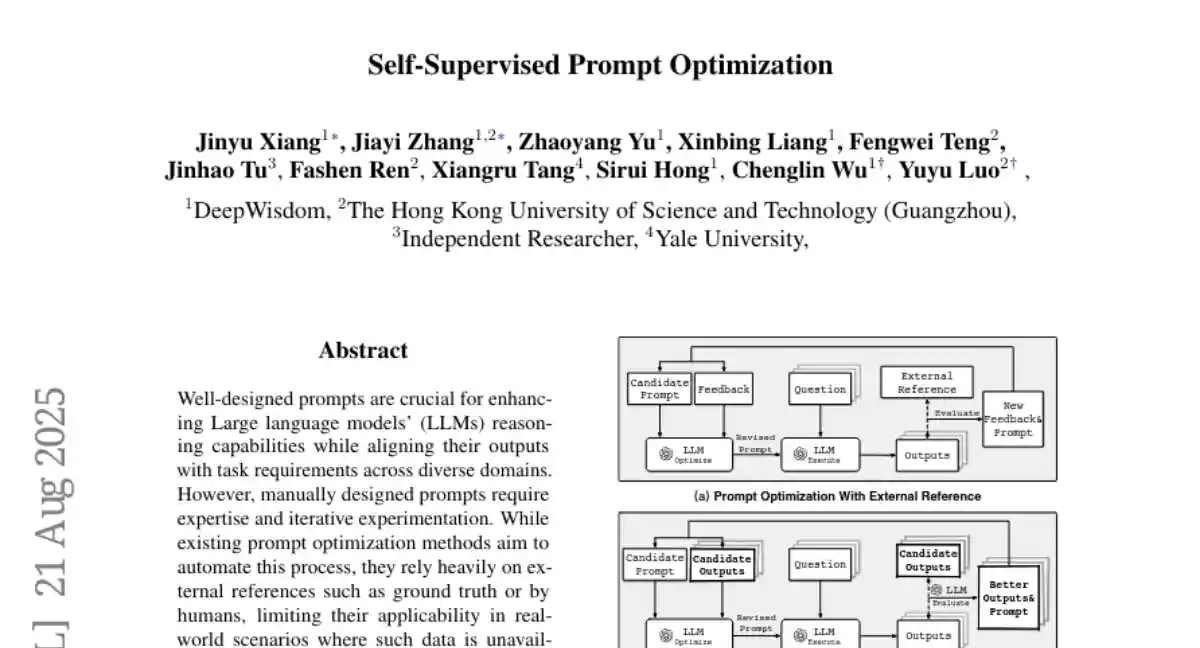

Self-Supervised Prompt Optimization

A self-supervised framework optimizes prompts for both closed and open-ended tasks by evaluating LLM outputs without external references, reducing costs and required data.

Published on Feb 7, 2025

Self-Supervised Prompt Optimization

A self-supervised framework optimizes prompts for both closed and open-ended tasks by evaluating LLM outputs without external references, reducing costs and required data.

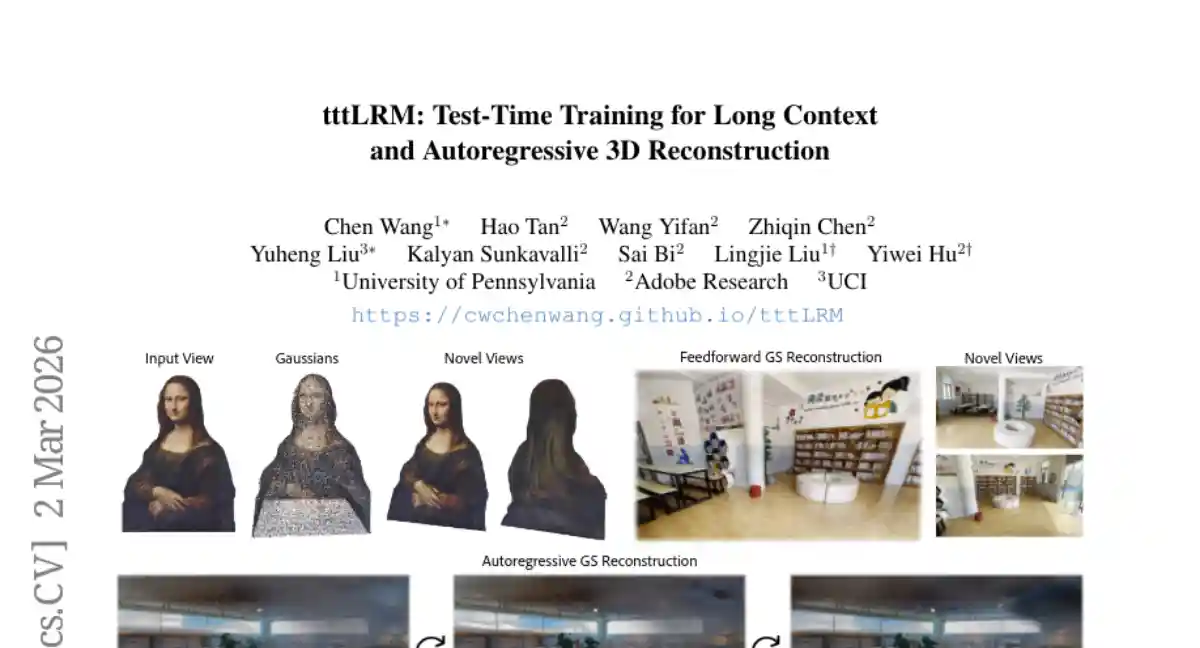

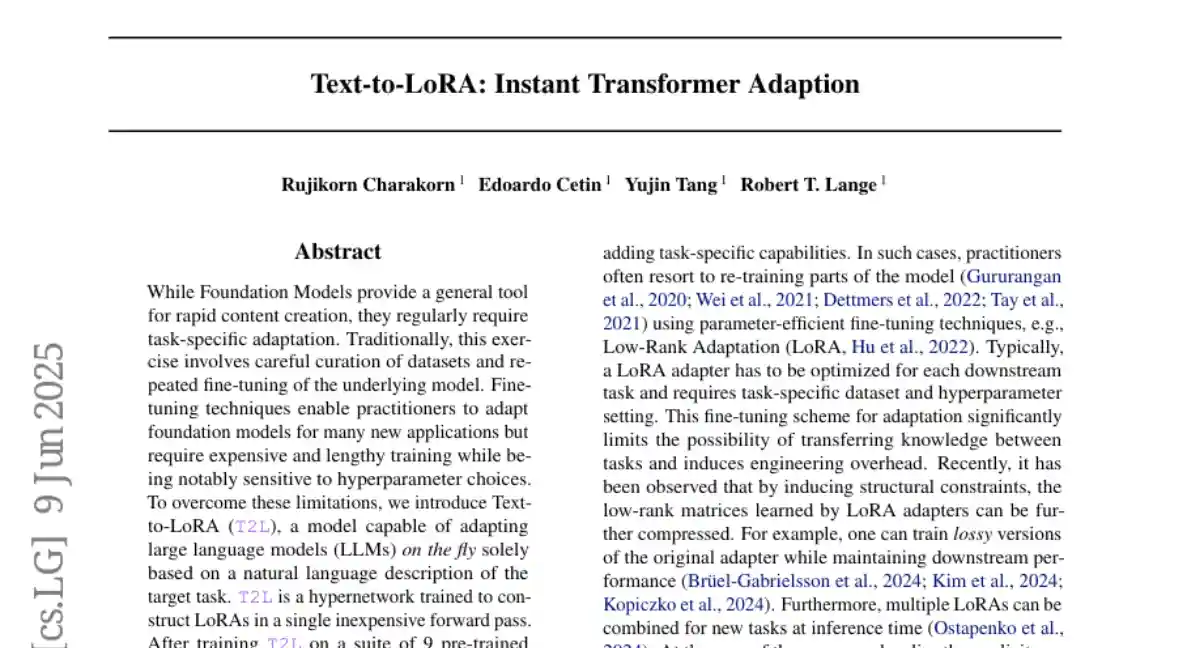

Text-to-LoRA: Instant Transformer Adaption

Text-to-LoRA (T2L) is a hypernetwork that dynamically adapts large language models using natural language descriptions, enabling efficient and zero-shot task-specific fine-tuning with minimal computational resources.

4 authors · Published on Jun 6, 2025

MemOS: A Memory OS for AI System

MemOS, a memory operating system for Large Language Models, addresses memory management challenges by unifying plaintext, activation-based, and parameter-level memories, enabling efficient storage, retrieval, and continual learning.

Published on Jul 4, 2025

GLM-5: from Vibe Coding to Agentic Engineering

GLM-5 advances foundation models with DSA for cost reduction, asynchronous reinforcement learning for improved alignment, and enhanced coding capabilities for real-world software engineering.

Published on Feb 17, 2026

RAG-Anything: All-in-One RAG Framework

RAG-Anything is a unified framework that enhances multimodal knowledge retrieval by integrating cross-modal relationships and semantic matching, outperforming existing methods on complex benchmarks.

OmniGAIA: Towards Native Omni-Modal AI Agents

The OmniGAIA benchmark evaluates multi-modal agents on complex reasoning tasks across video, audio, and image modalities, while the OmniAtlas agent improves tool-use capabilities through hindsight-guided tree exploration and OmniDPO fine-tuning.

Published on Feb 26, 2026

OmniGAIA: Towards Native Omni-Modal AI Agents

OmniGAIA benchmark evaluates multi-modal agents on complex reasoning tasks across video, audio, and image modalities, while OmniAtlas agent improves tool-use capabilities through hindsight-guided tree exploration and OmniDPO fine-tuning.

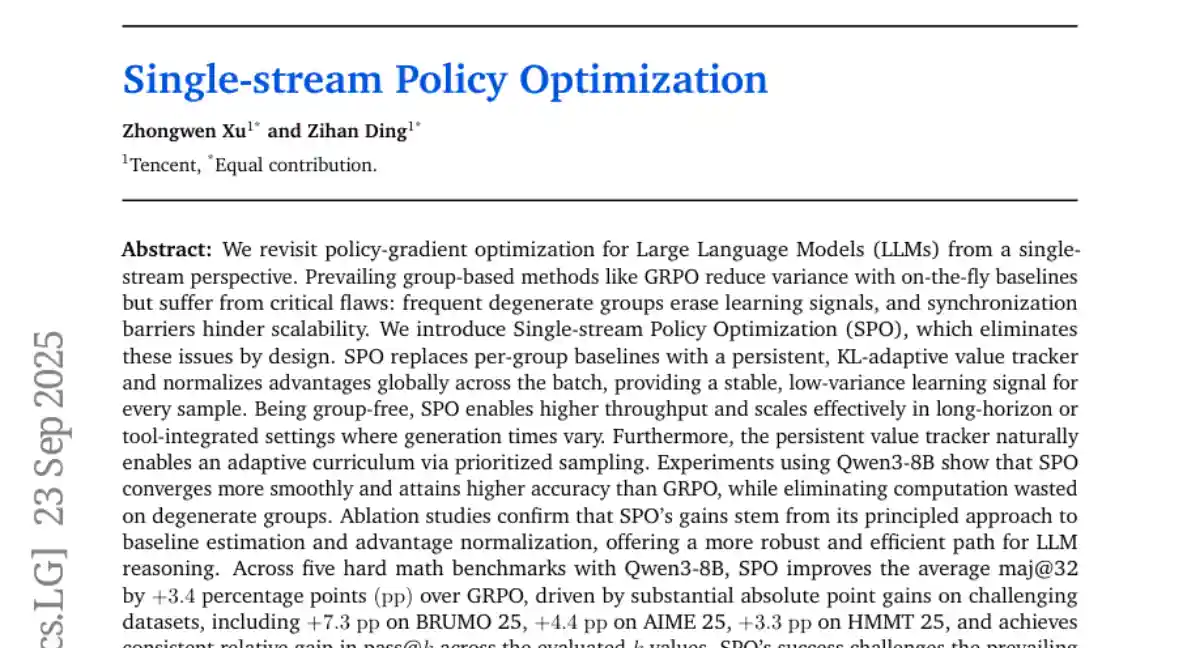

Single-stream Policy Optimization

Single-stream Policy Optimization (SPO) improves policy-gradient training for Large Language Models by eliminating group-based issues and providing a stable, low-variance learning signal, leading to better performance and efficiency.

Tencent · Published on Sep 16, 2025

Tencent · Published on Sep 16, 2025

Single-stream Policy Optimization

Single-stream Policy Optimization (SPO) improves policy-gradient training for Large Language Models by eliminating group-based issues and providing a stable, low-variance learning signal, leading to better performance and efficiency.

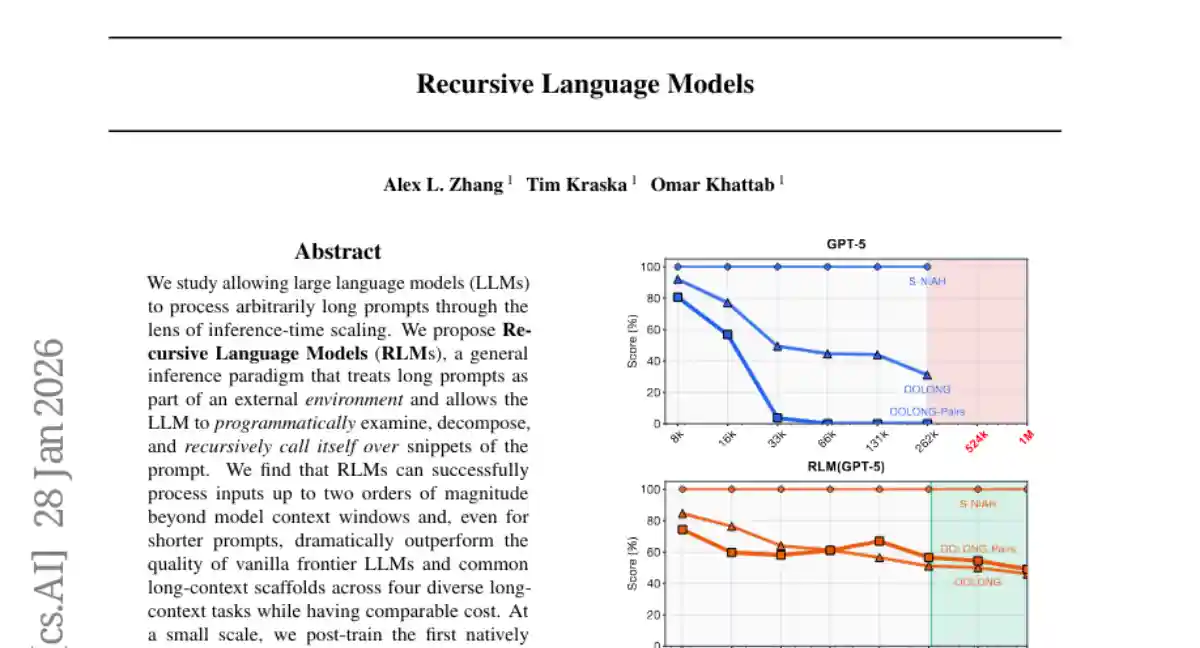

Recursive Language Models

We study allowing large language models (LLMs) to process arbitrarily long prompts through the lens of inference-time scaling. We propose Recursive Language Models (RLMs), a general inference strategy that treats long prompts as part of an external environment and allows the LLM to programmatically examine, decompose, and recursively call itself over snippets of the prompt. We find that RLMs successfully handle inputs up to two orders of magnitude beyond model context windows and, even for shorter prompts, dramatically outperform the quality of base LLMs and common long-context scaffolds across four diverse long-context tasks, while having comparable (or cheaper) cost per query.

Recursive Language Models

We study allowing large language models (LLMs) to process arbitrarily long prompts through the lens of inference-time scaling. We propose Recursive Language Models (RLMs), a general inference strategy that treats long prompts as part of an external environment and allows the LLM to programmatically examine, decompose, and recursively call itself over snippets of the prompt. We find that RLMs successfully handle inputs up to two orders of magnitude beyond model context windows and, even for shorter prompts, dramatically outperform the quality of base LLMs and common long-context scaffolds across four diverse long-context tasks, while having comparable (or cheaper) cost per query.

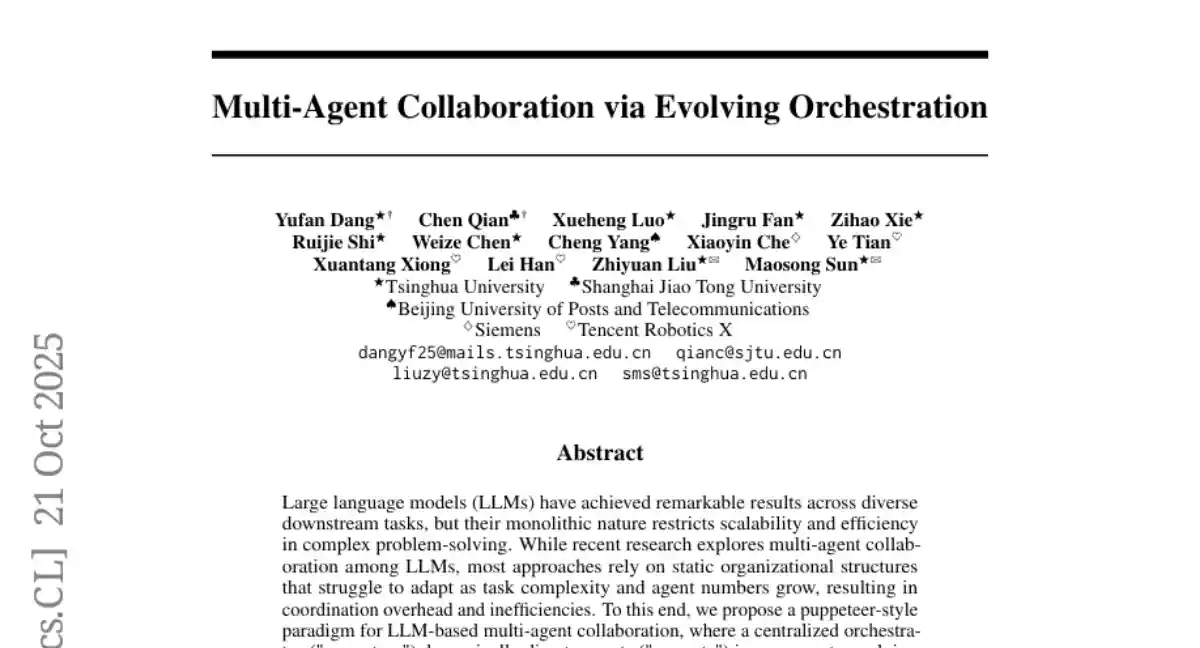

Multi-Agent Collaboration via Evolving Orchestration

A centralized orchestrator dynamically directs LLM agents via reinforcement learning, achieving superior multi-agent collaboration in varying tasks with reduced computational costs.

14 authors · Published on May 26, 2025

Multi-Agent Collaboration via Evolving Orchestration

A centralized orchestrator dynamically directs LLM agents via reinforcement learning, achieving superior multi-agent collaboration in varying tasks with reduced computational costs.

- 14 authors

· May 26, 2025

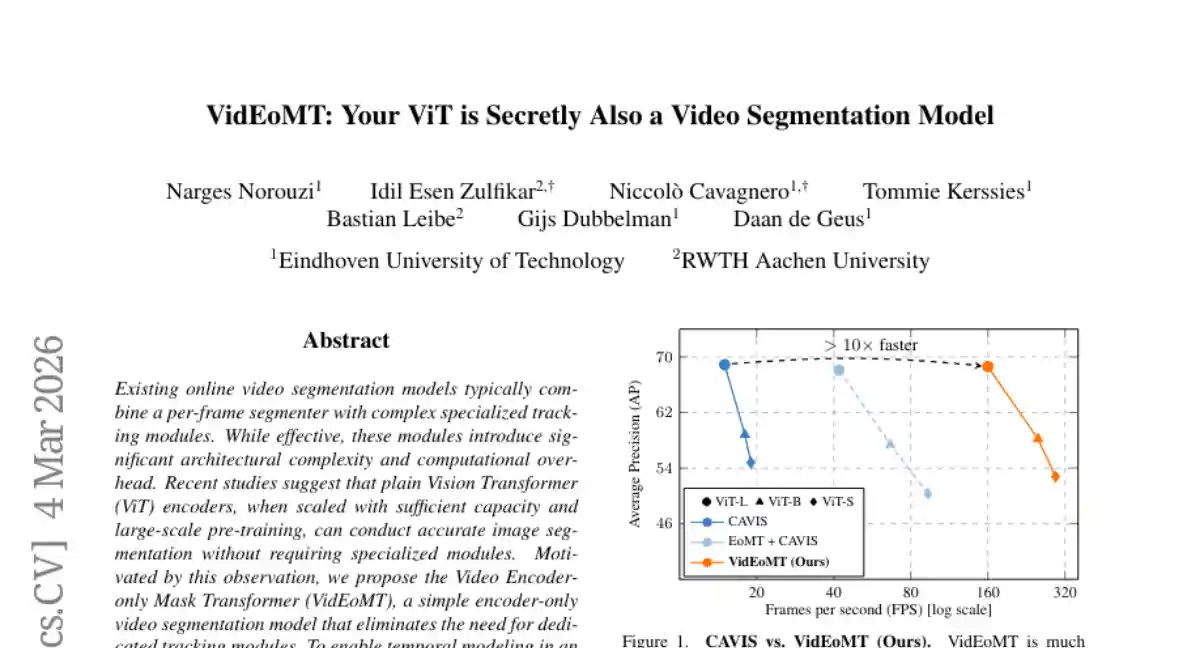

DeepSeek-V3 Technical Report

DeepSeek-V3 is a parameter-efficient Mixture-of-Experts language model using MLA and DeepSeekMoE architectures, achieving high performance with efficient training and minimal computational cost.

DeepSeek · Published on Dec 27, 2024

LTX-2: Efficient Joint Audio-Visual Foundation Model

LTX-2 is an open-source audiovisual diffusion model that generates synchronized video and audio content using a dual-stream transformer architecture with cross-modal attention and classifier-free guidance.

Published on Jan 6, 2026

HeartMuLa: A Family of Open Sourced Music Foundation Models

A suite of open-source music foundation models is introduced, featuring components for audio-text alignment, lyric recognition, music coding, and large language model-based song generation with controllable attributes and scalable parameterization.

Published on Jan 15, 2026

World Action Models are Zero-shot Policies

DreamZero is a World Action Model that leverages video diffusion to enable better generalization of physical motions across novel environments and embodiments compared to vision-language-action models.

VibeVoice Technical Report

VibeVoice synthesizes long-form multi-speaker speech using next-token diffusion and a highly efficient continuous speech tokenizer, achieving superior performance and fidelity.

VibeVoice Technical Report

VibeVoice synthesizes long-form multi-speaker speech using next-token diffusion and a highly efficient continuous speech tokenizer, achieving superior performance and fidelity.

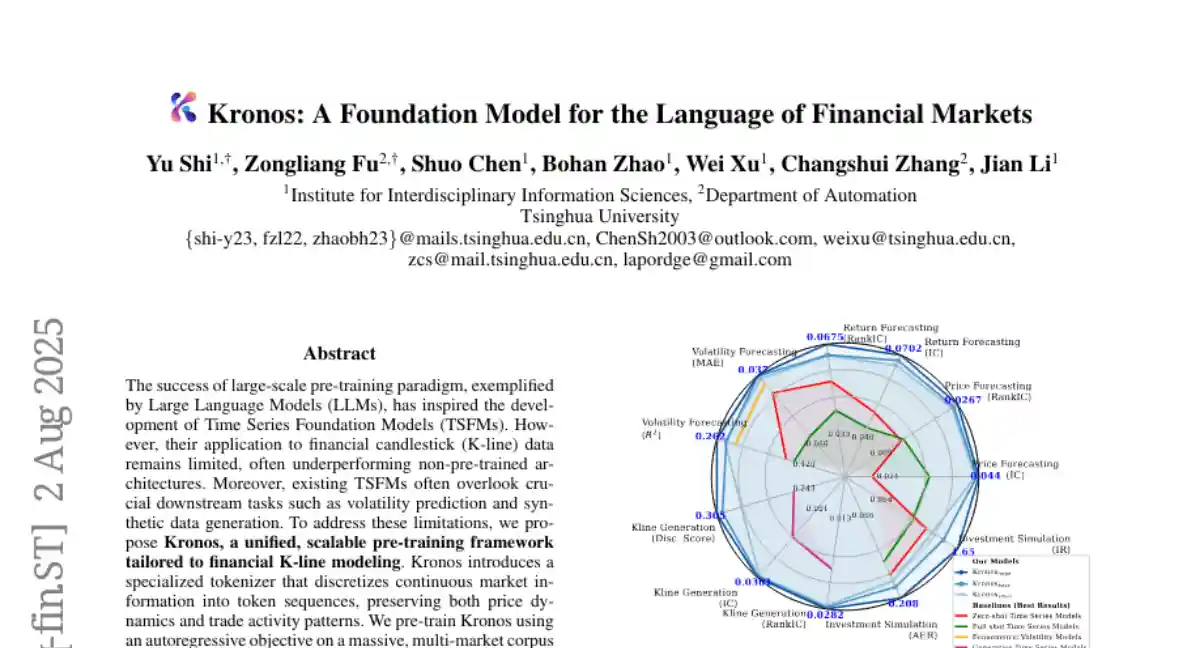

Kronos: A Foundation Model for the Language of Financial Markets

Kronos, a specialized pre-training framework for financial K-line data, outperforms existing models in forecasting and synthetic data generation through a unique tokenizer and autoregressive pre-training on a large dataset.

7 authors · Published on Aug 2, 2025