World Generation for Robot Learning and Autonomous Systems

Full-day Workshop on World Understanding and Generation for Robotics

Hangzhou, China, Hangzhou International Expo Center, Grand Ballroom A

October 24th, 2025 • Hosted at #IROS2025

Overview

Workshop Details

Welcome to the IROS 2025 RoboGen Workshop on World Understanding and Generation!

RoboGen focuses on two aspects, multimodal world understanding and generation, because we believe the two problems are tightly coupled: generation is only reasonable when understanding is done right. Recent advances in 3D vision (NeRF, Gaussian Splatting) and multimodal foundation models (LLMs, VLMs, diffusion and flow-based models) are transforming robotics by enabling the creation of high-quality data for training, testing, and validation. Despite the impressive capabilities of learning-based robotics in embodied AI, autonomous driving, and unmanned aerial navigation, progress toward generalizable systems remains constrained by the fundamental challenge of data acquisition.

Building towards AGI, RoboGen brings together researchers and industry experts to address this data bottleneck, which limits scaling. The goal is to develop innovative approaches that leverage recent advances in world generation and understanding methods. Our workshop emphasizes practical applications and solutions to long-tail data problems in challenging robotics scenarios, with the goal of empowering deep learning systems for real-world deployment.

The RoboGen workshop aims to advance world generation for robotics with four key objectives:

- (1) Understanding: advance multimodal world understanding and spatial-temporal reasoning through vision-language models.

- (2) Representation: explore video diffusion and flow-based models conditioned on sensor geometry cues for physical world representation.

- (3) Action: democratize access to high-quality synthetic data generation to train robust vision-language-action models.

- (4) Scaling: enable practical deployment of generalizable robot learning systems by overcoming the data scaling law bottleneck.

Workshop Features

The workshop will feature the following events and activities to encourage discussion and participation. We are looking for industry partners to showcase their products and services. If you are interested in sponsoring the workshop, please contact us at robogen-iros@googlegroups.com.

Industry-Academia Keynote Sessions

Leading researchers and practitioners from both academia and industry will present cutting-edge advancements in multimodal foundation models and world generation methods for robotics applications.

Oral Presentations

Selected paper presentations highlighting impactful approaches to data generation, artifact mitigation, and domain-specific applications of neural rendering and generative models for robotics.

Interactive Poster & Demo Session

A dedicated session where student researchers can demonstrate their methods and techniques, fostering discussions and collaborations. This will provide an opportunity for junior researchers to directly interact with senior researchers.

Expert Panel Discussion

A focused discussion on bridging the gap between theoretical advancements in world generation and practical robotics applications.

Award Ceremony

Awards will be presented in multiple categories including Best Paper, Best Poster, Most Impactful Open-Source Project, and Most Engaging Speaker to encourage participation and recognize outstanding contributions.

Keynote Speaker: Siyuan Huang

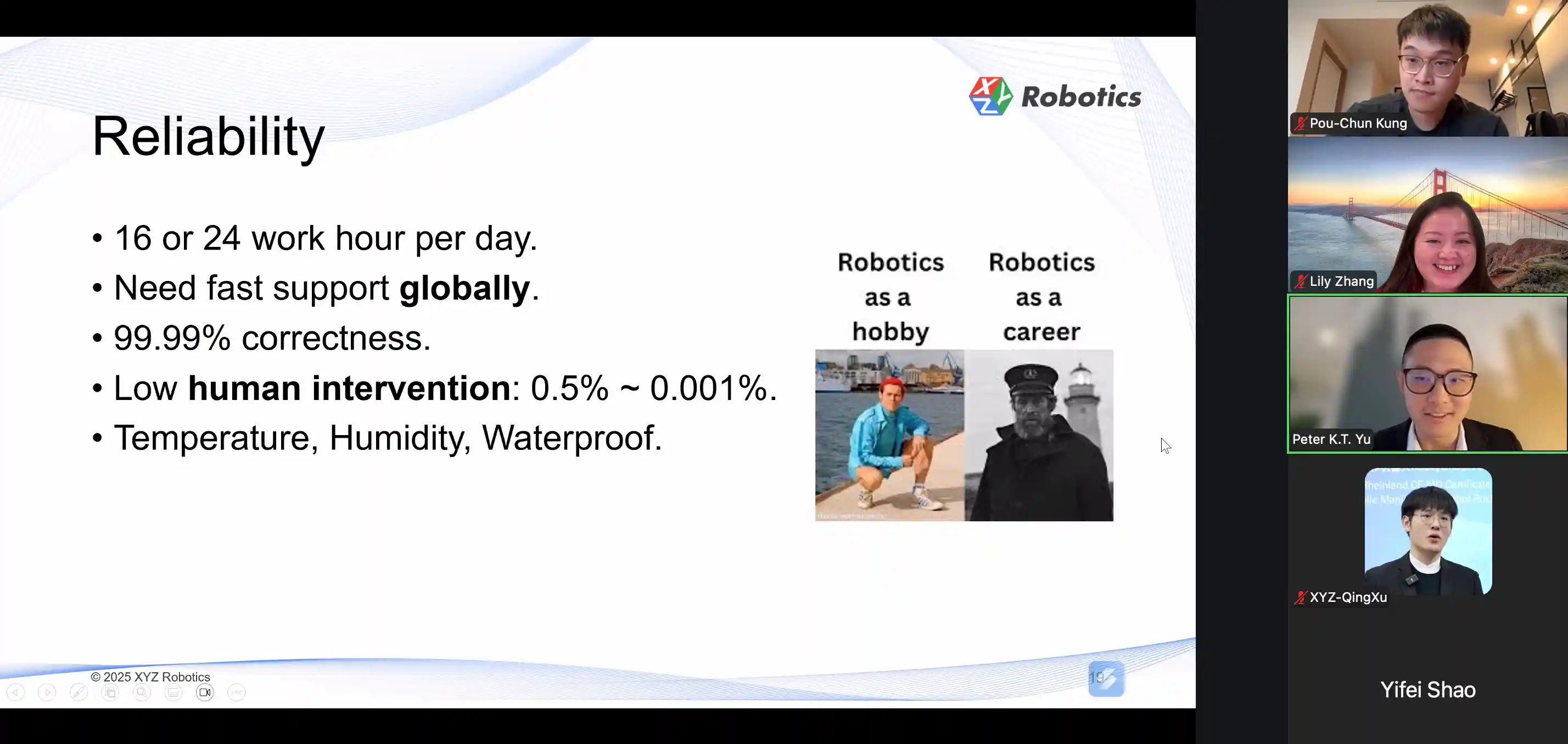

Rising Star Speaker: Yifei Shao

Rising Star Speaker: Pou-Chun Kung

Keynote Speaker: Yue Wang

🏆 Best Keynote

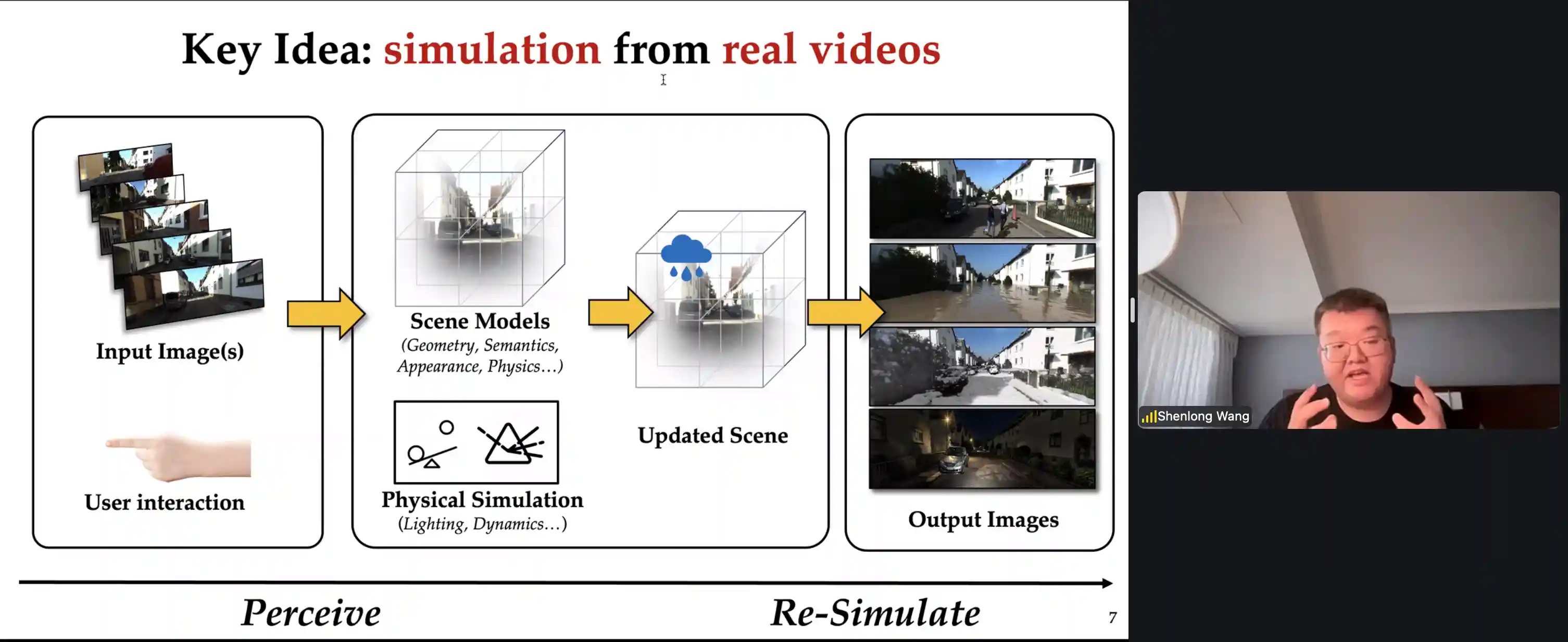

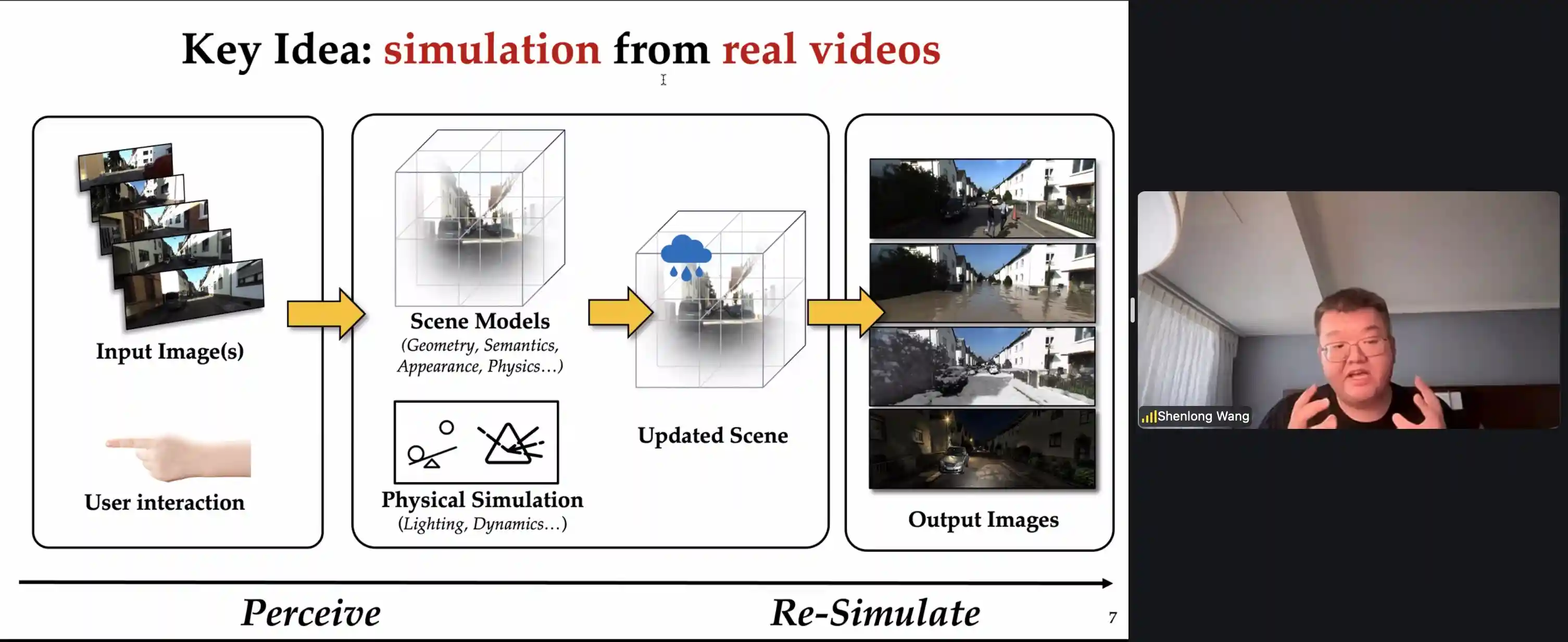

Best Keynote Winner: Shenlong Wang

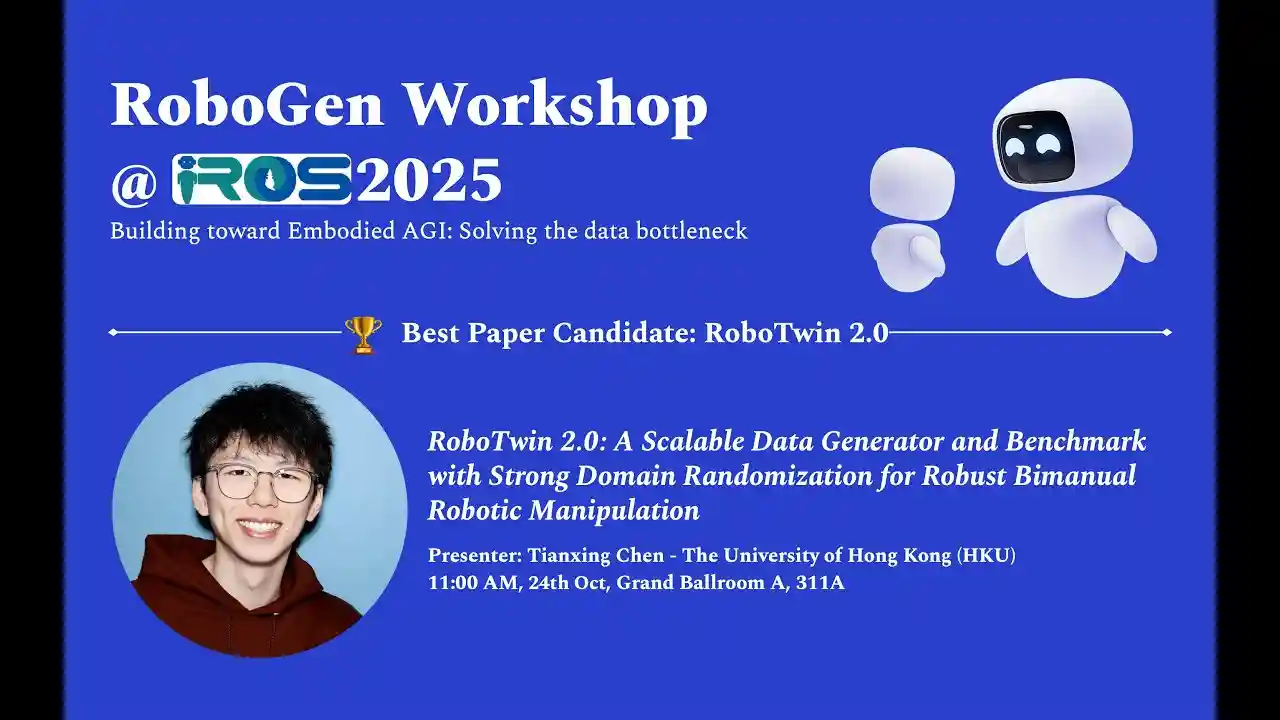

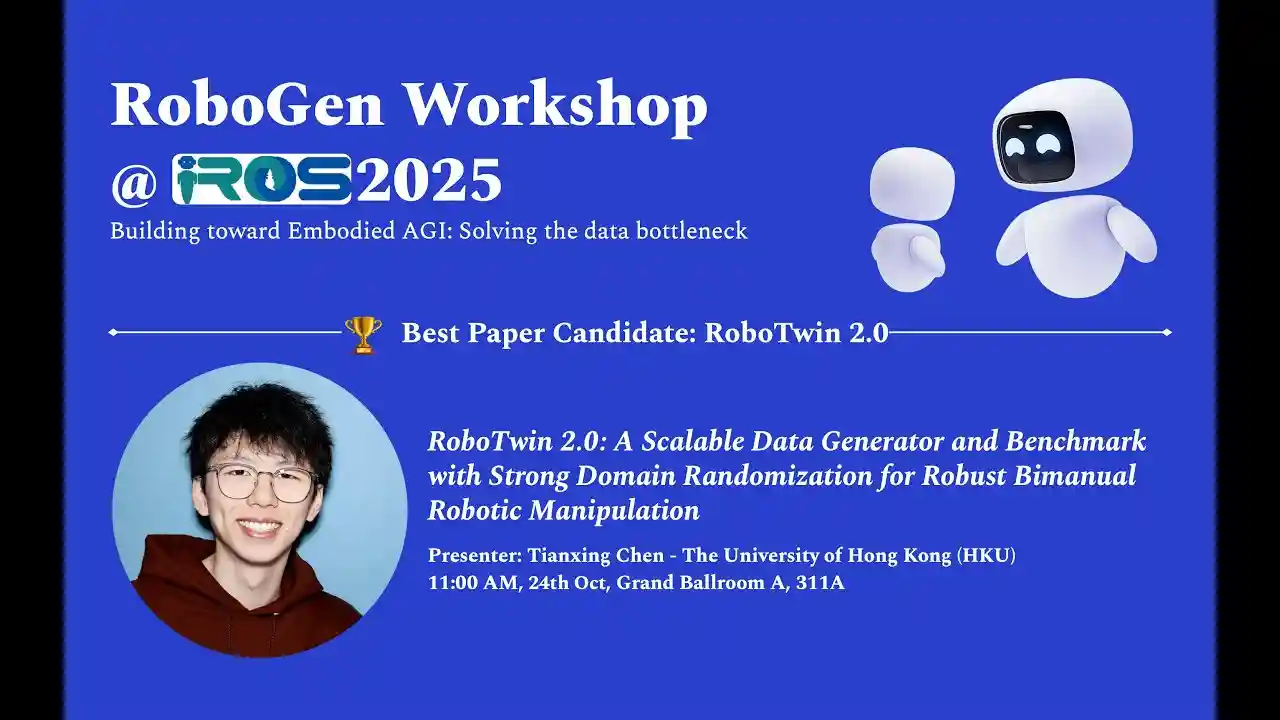

Best Paper Candidate: RoboTwin 2.0

Oral Speaker: Tianxing Chen

🏆 Best Paper

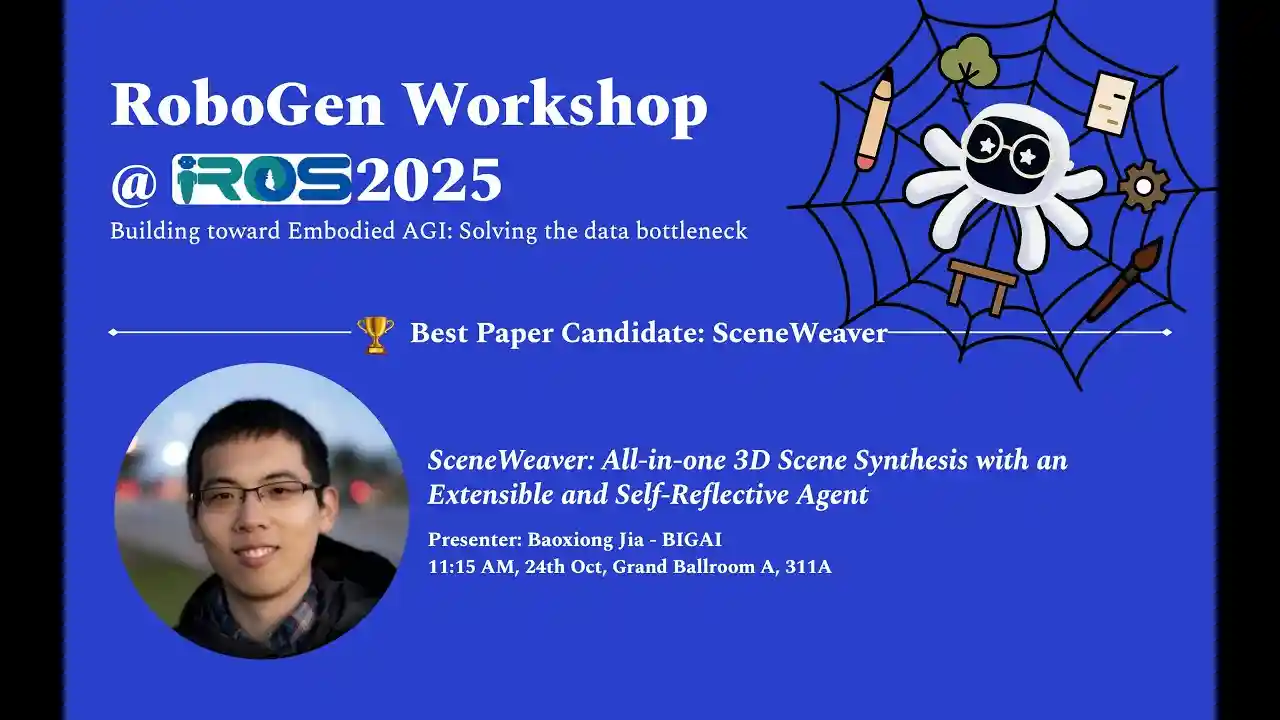

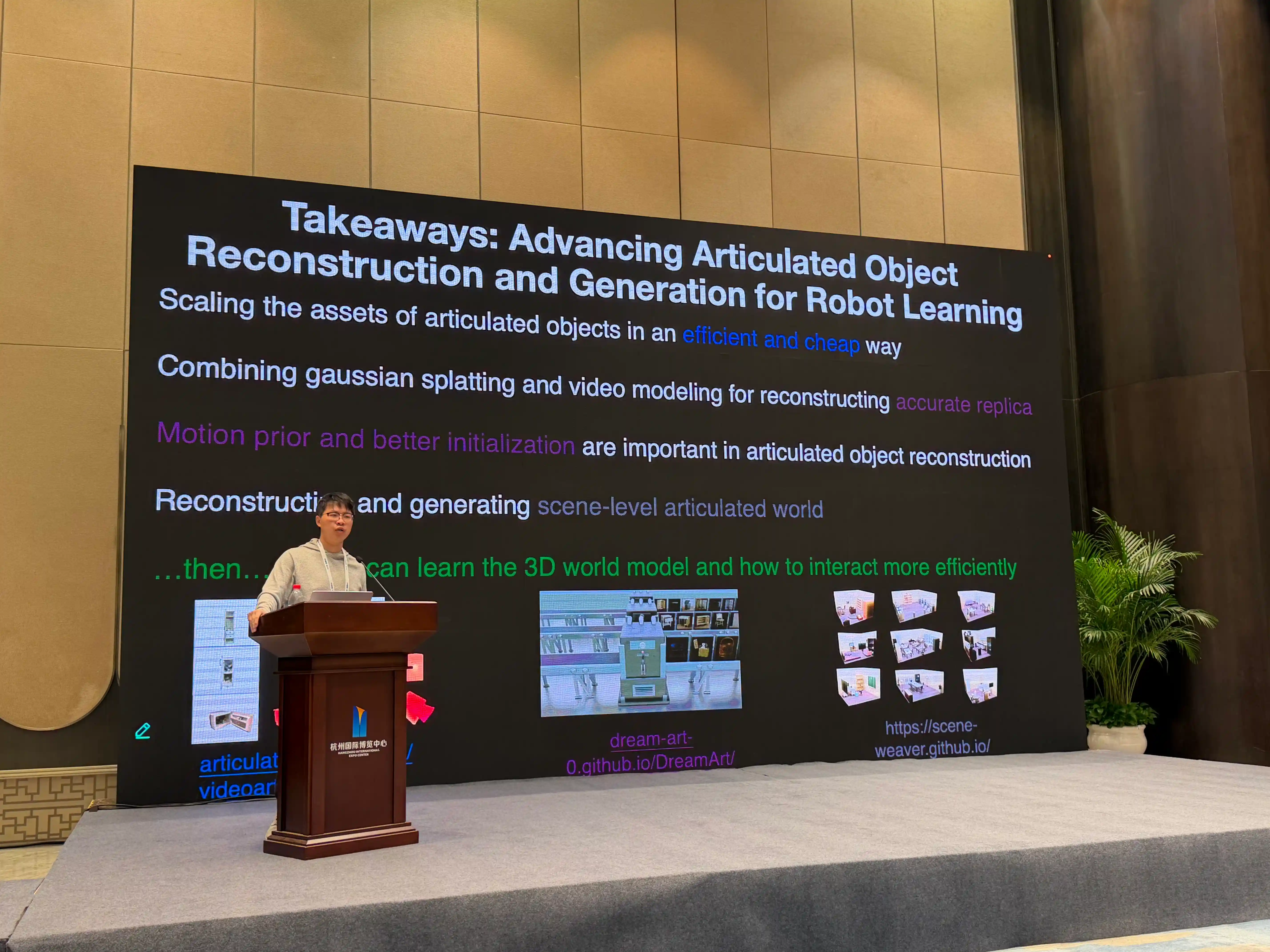

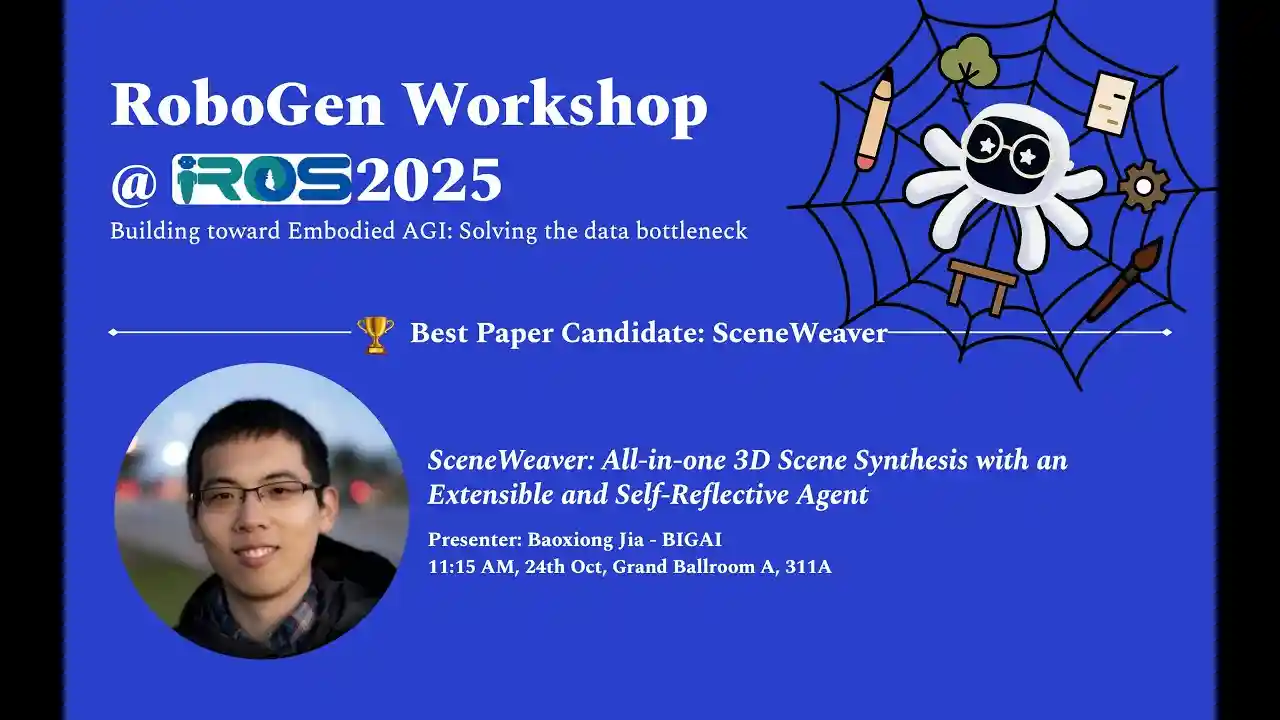

Best Paper Winner: SceneWeaver

Oral Speaker: Baoxiong Jia

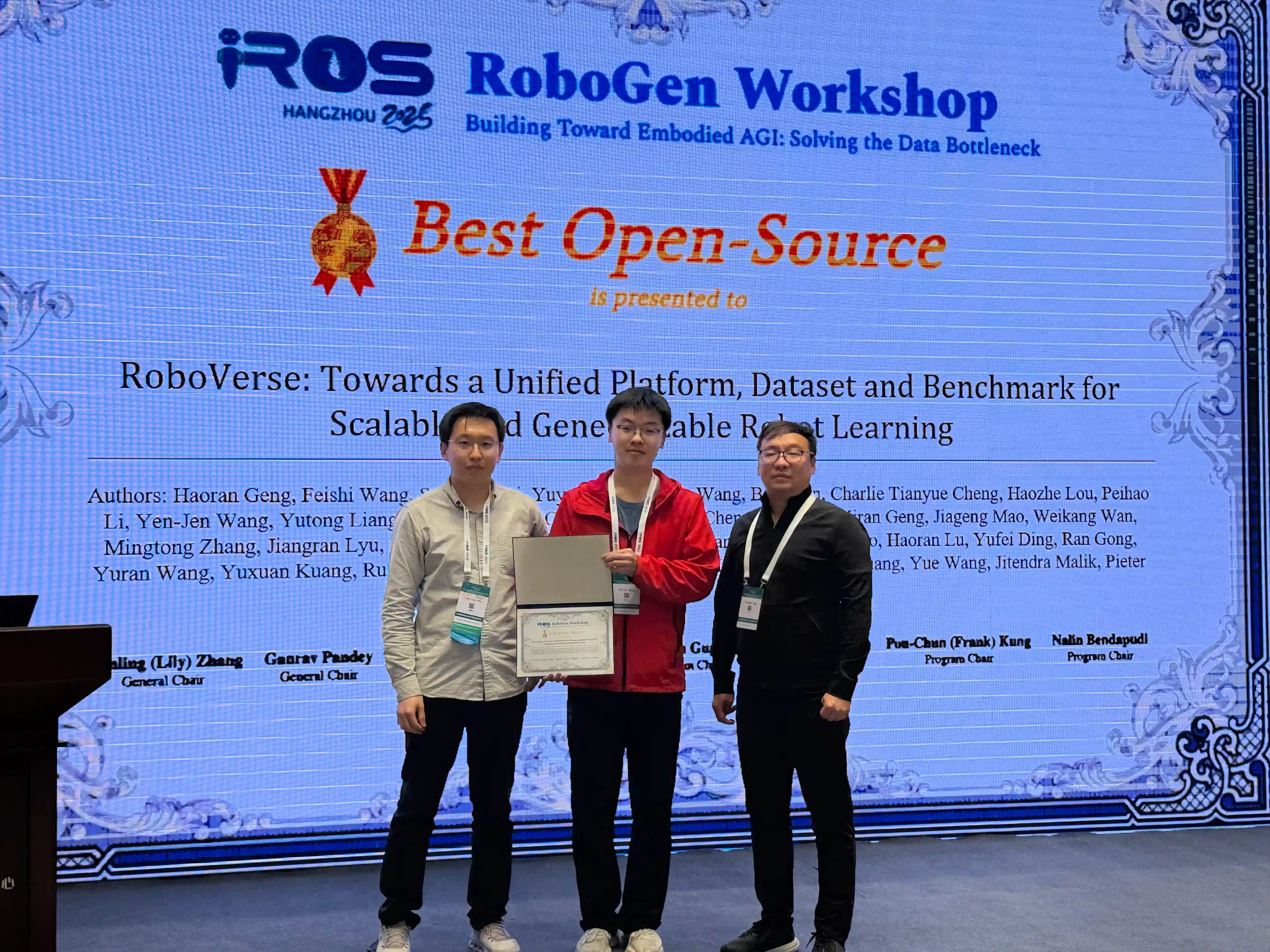

🏆 Best OpenSource

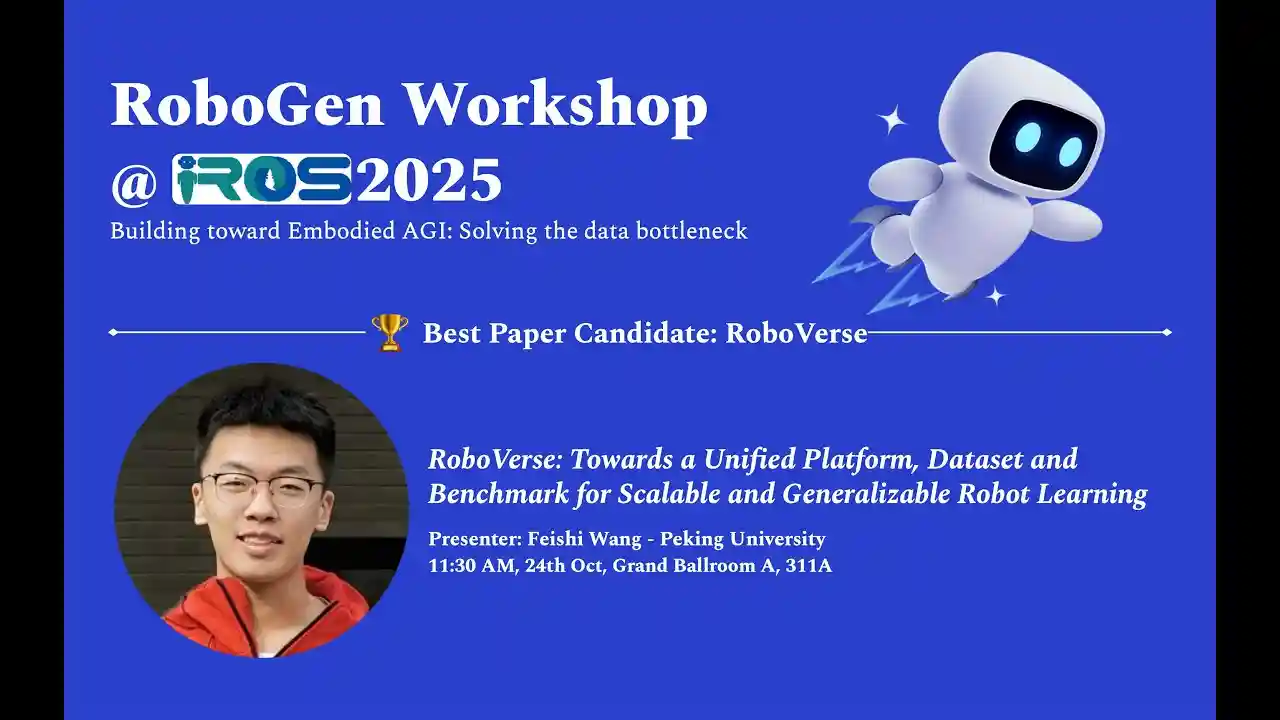

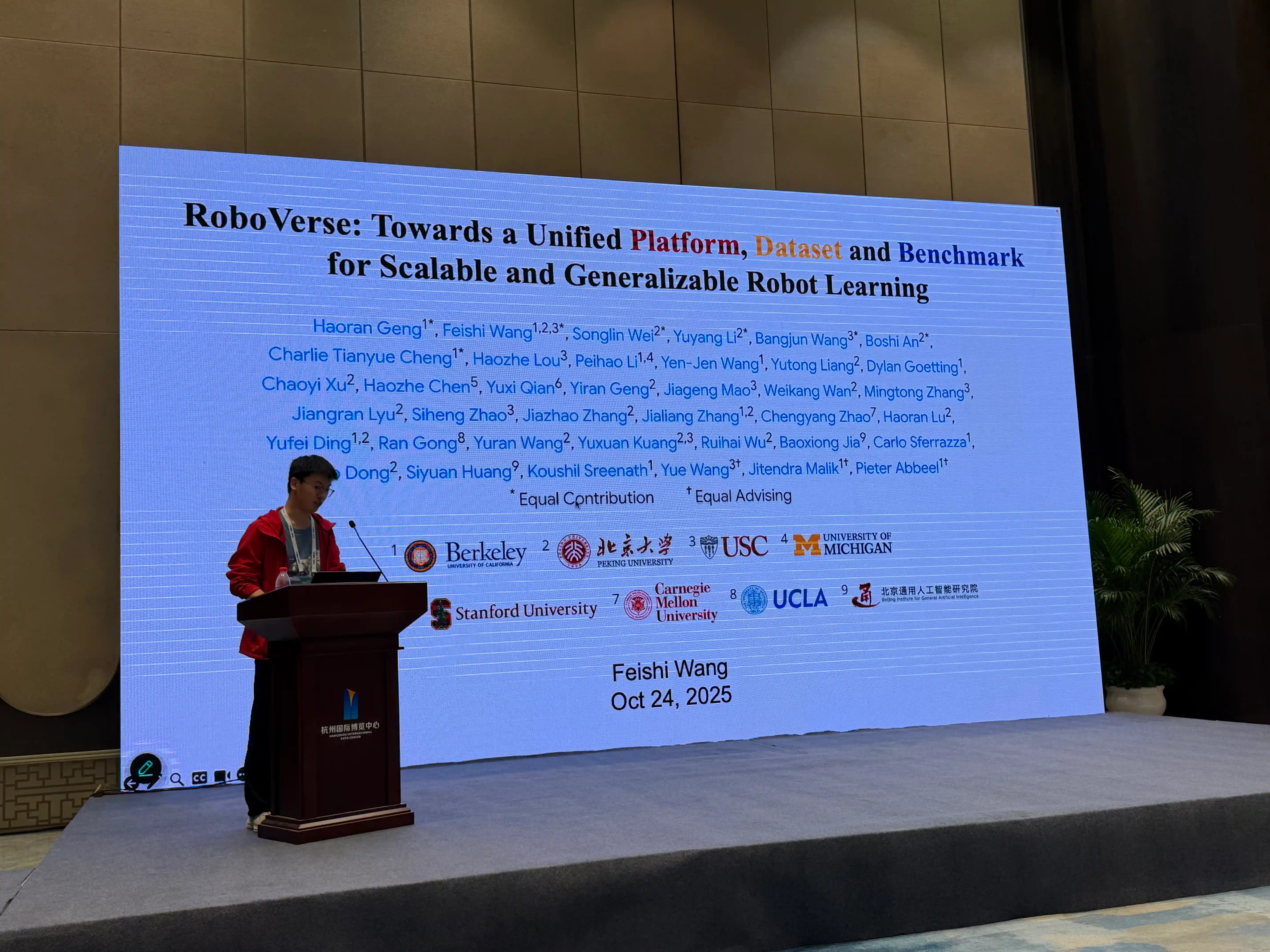

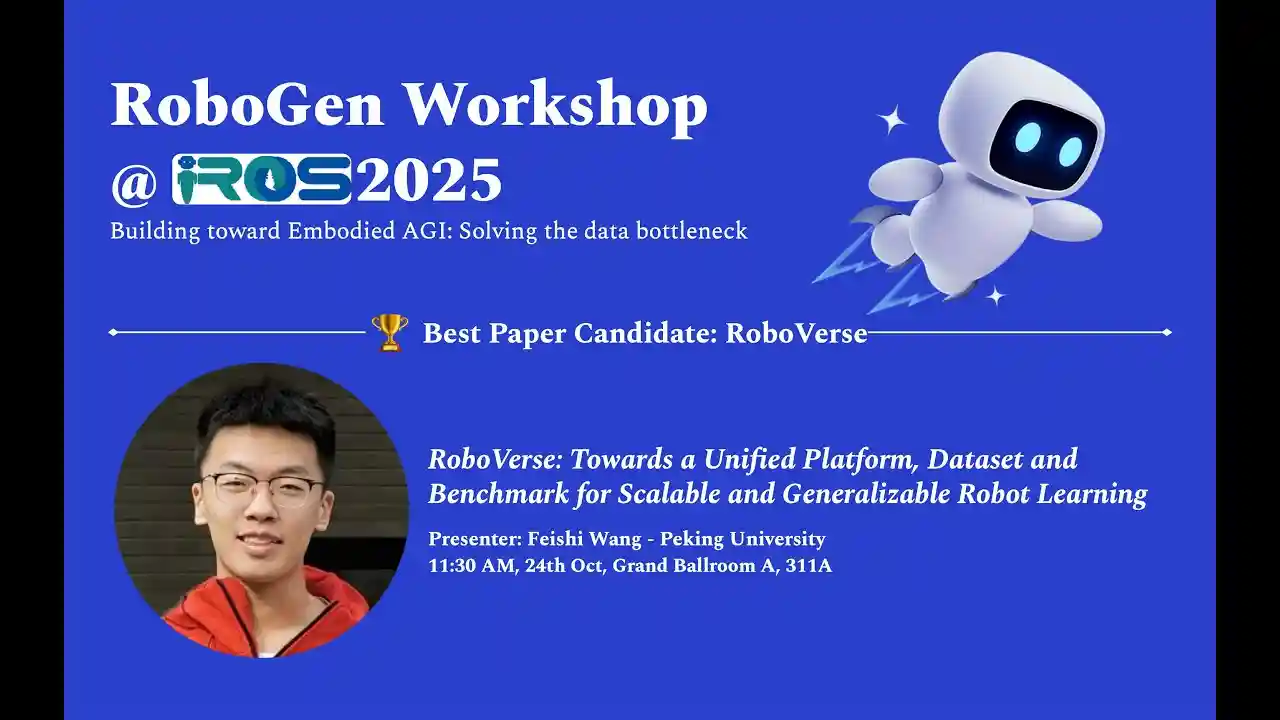

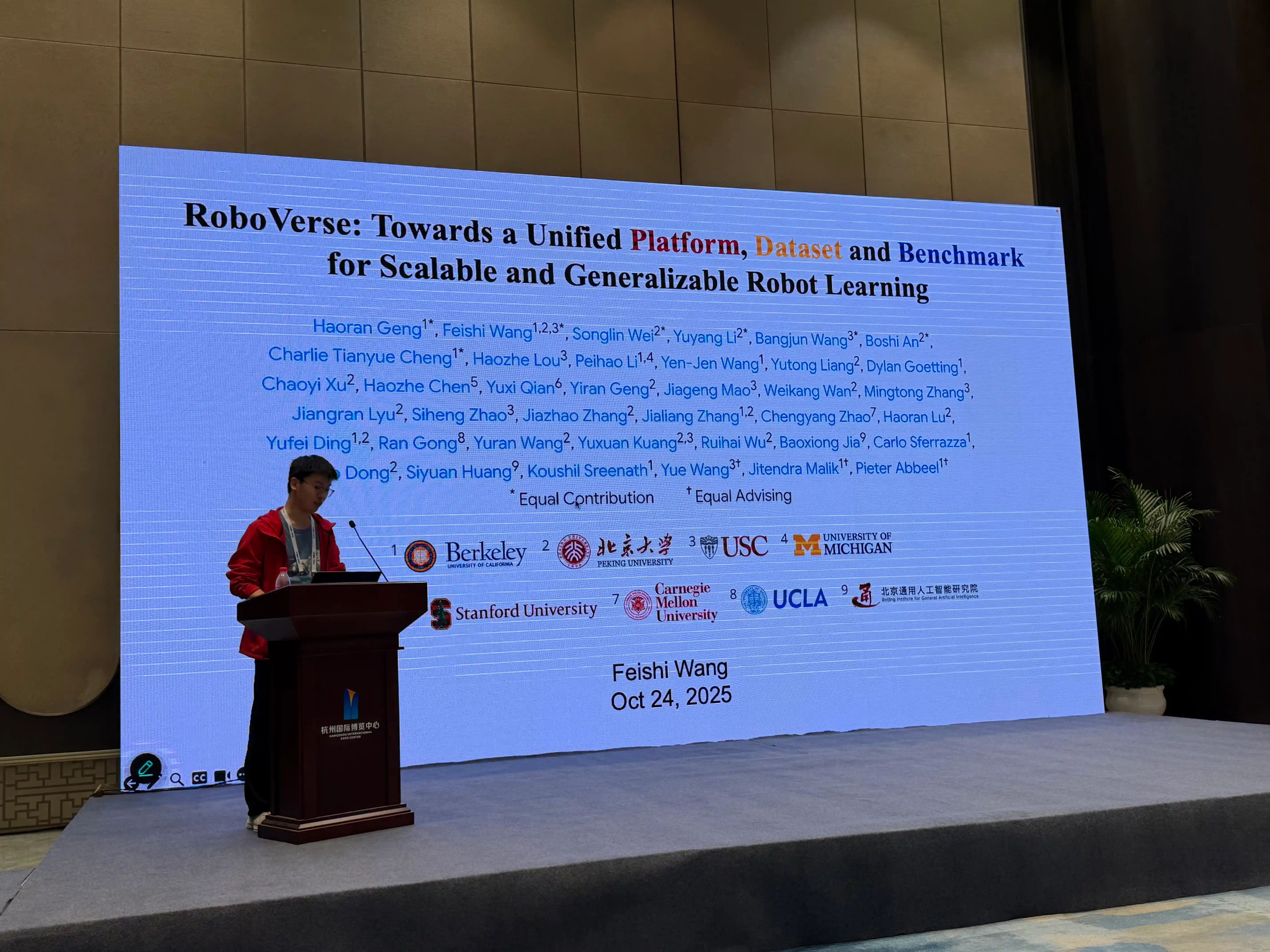

Best OpenSource Winner: RoboVerse

Oral Speaker: Feishi Wang

🏆 Best Poster

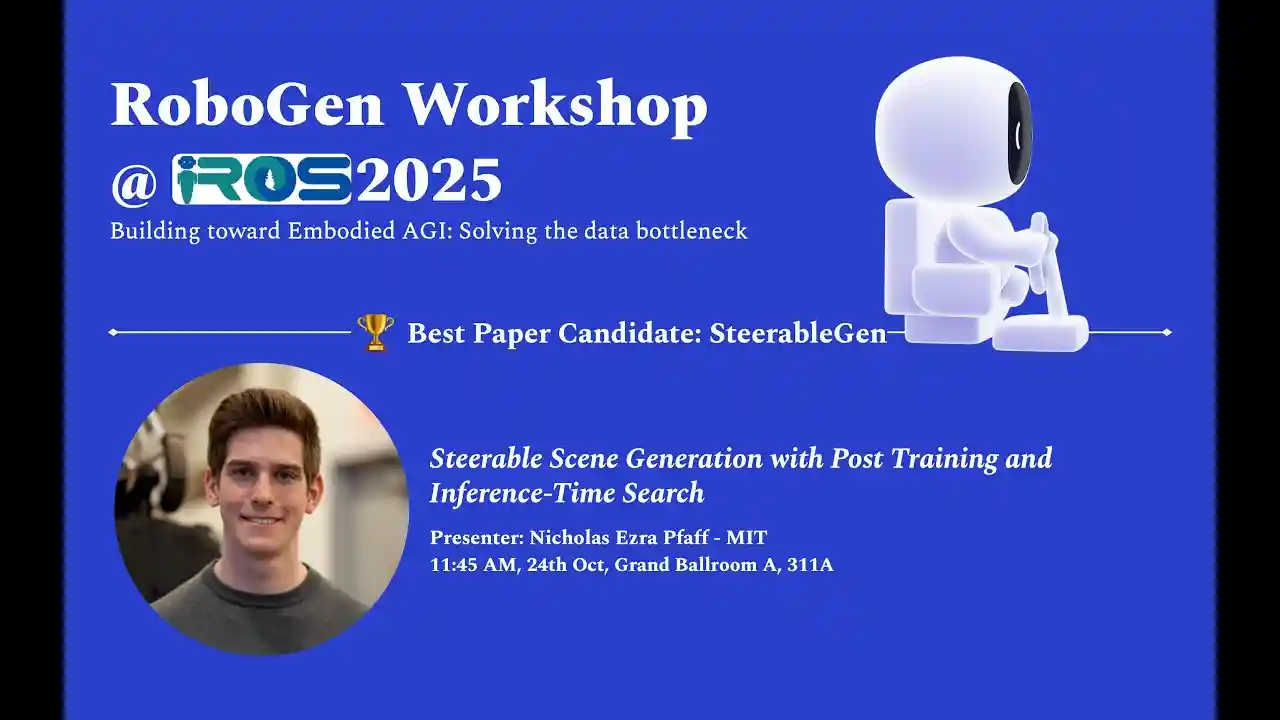

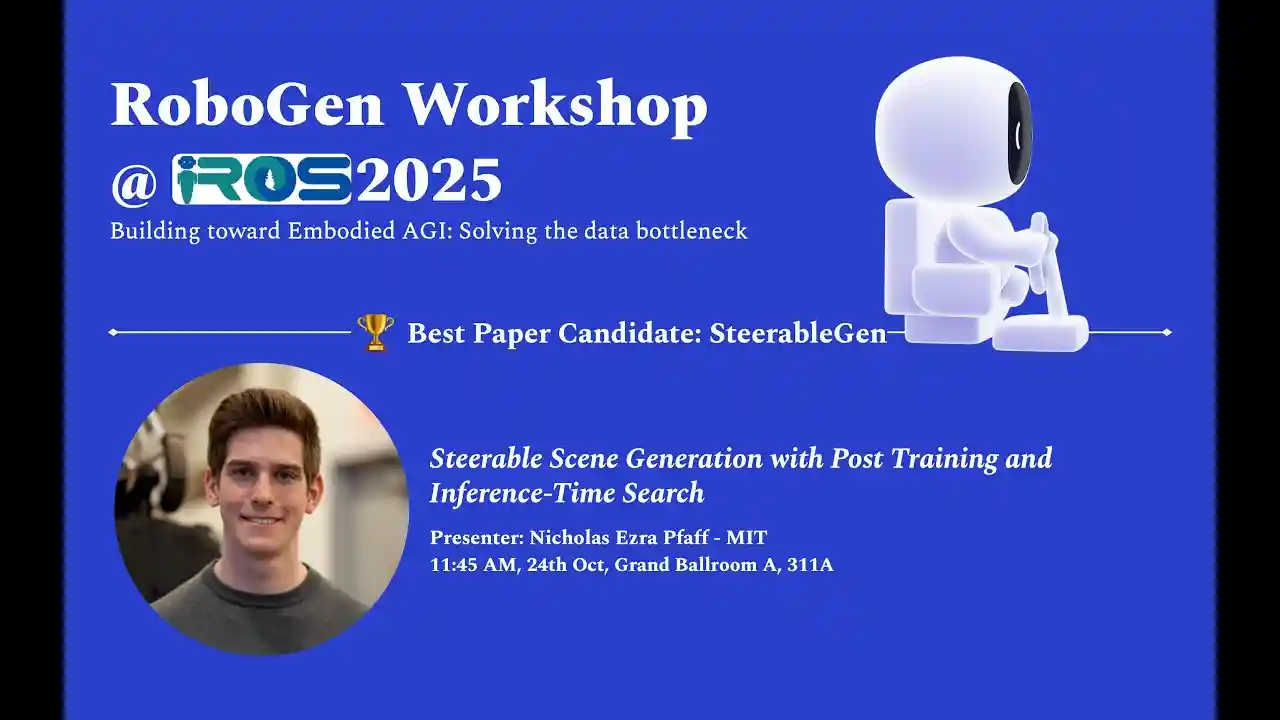

Best Poster Winner: SteerableGen

Oral Speaker: Nicholas Ezra Pfaff

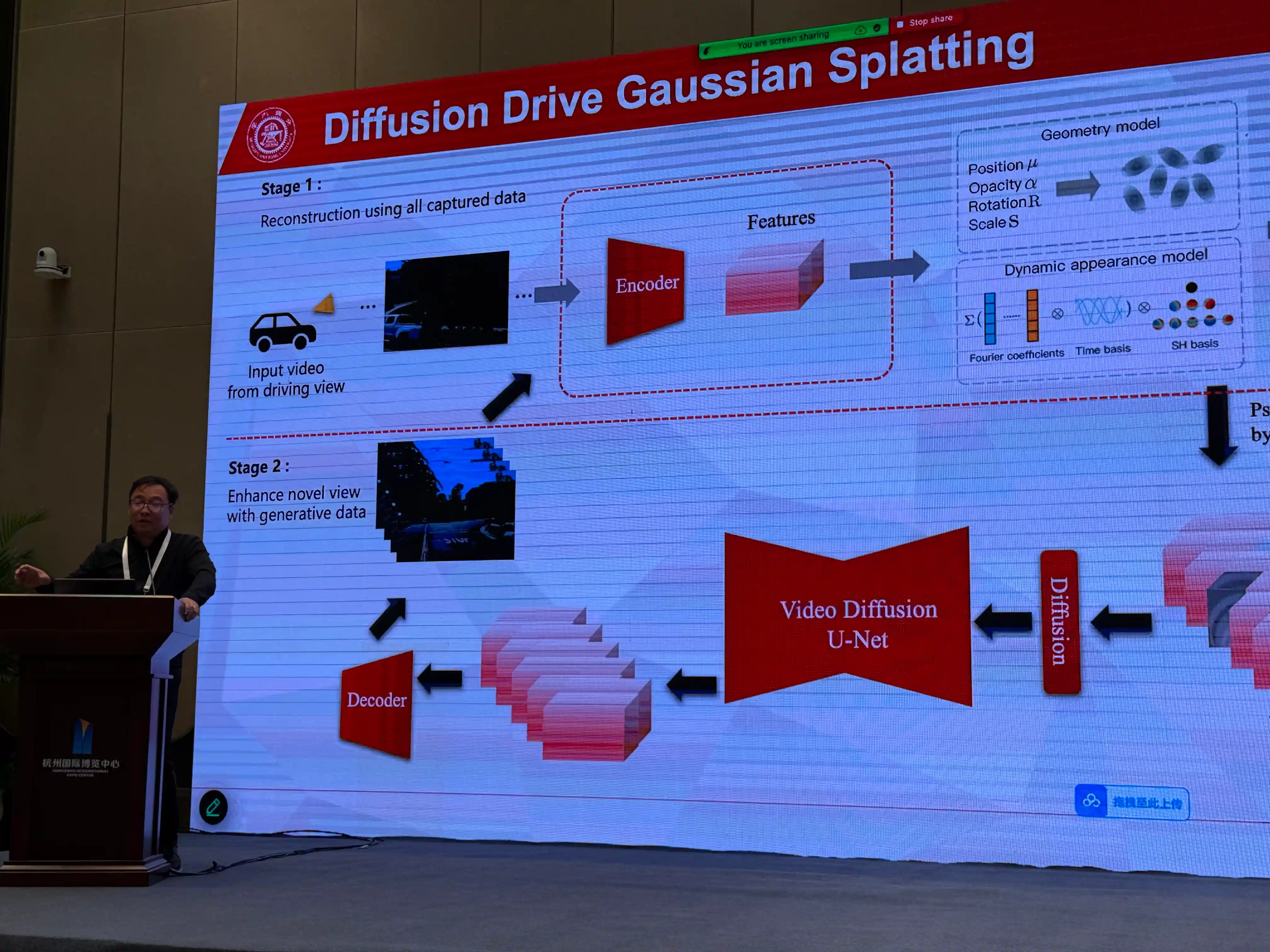

Keynote Speaker: Chenfei Wu

Recording Opted Out

Keynote Speaker: Zhijian Liu

Keynote Speaker: Ruigang Yang

Keynote Speaker: Peter KT Yu

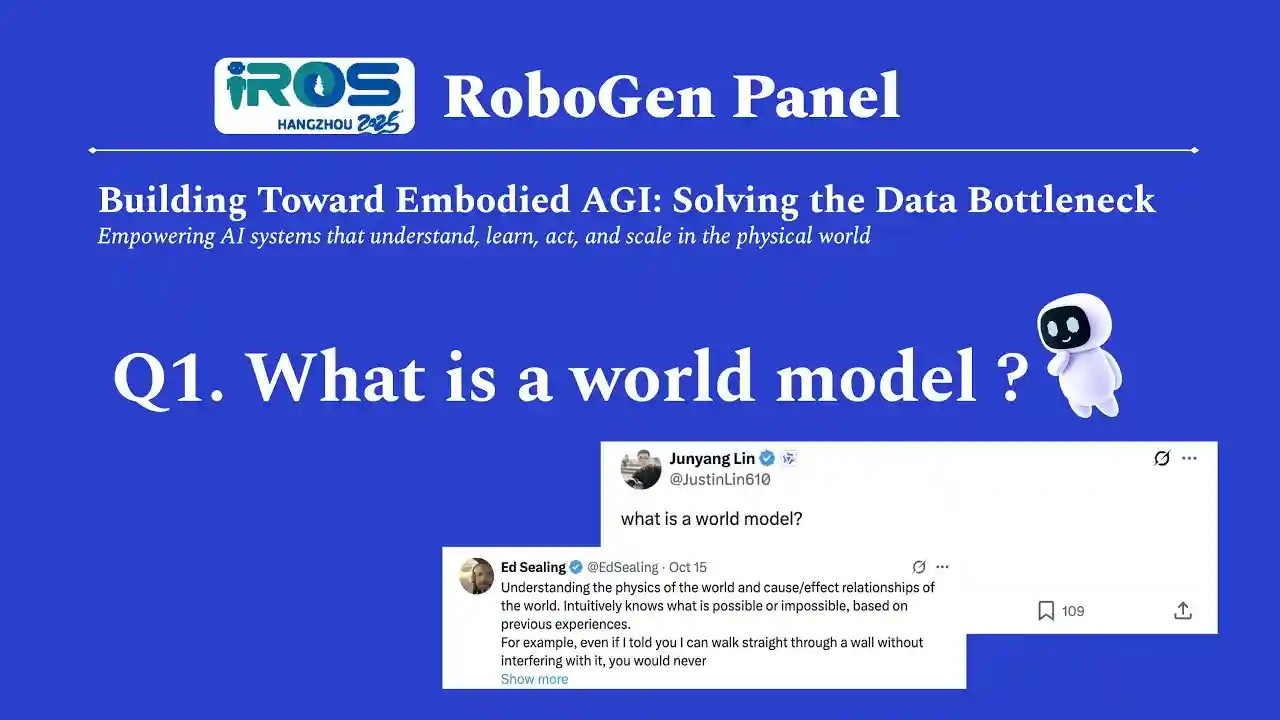

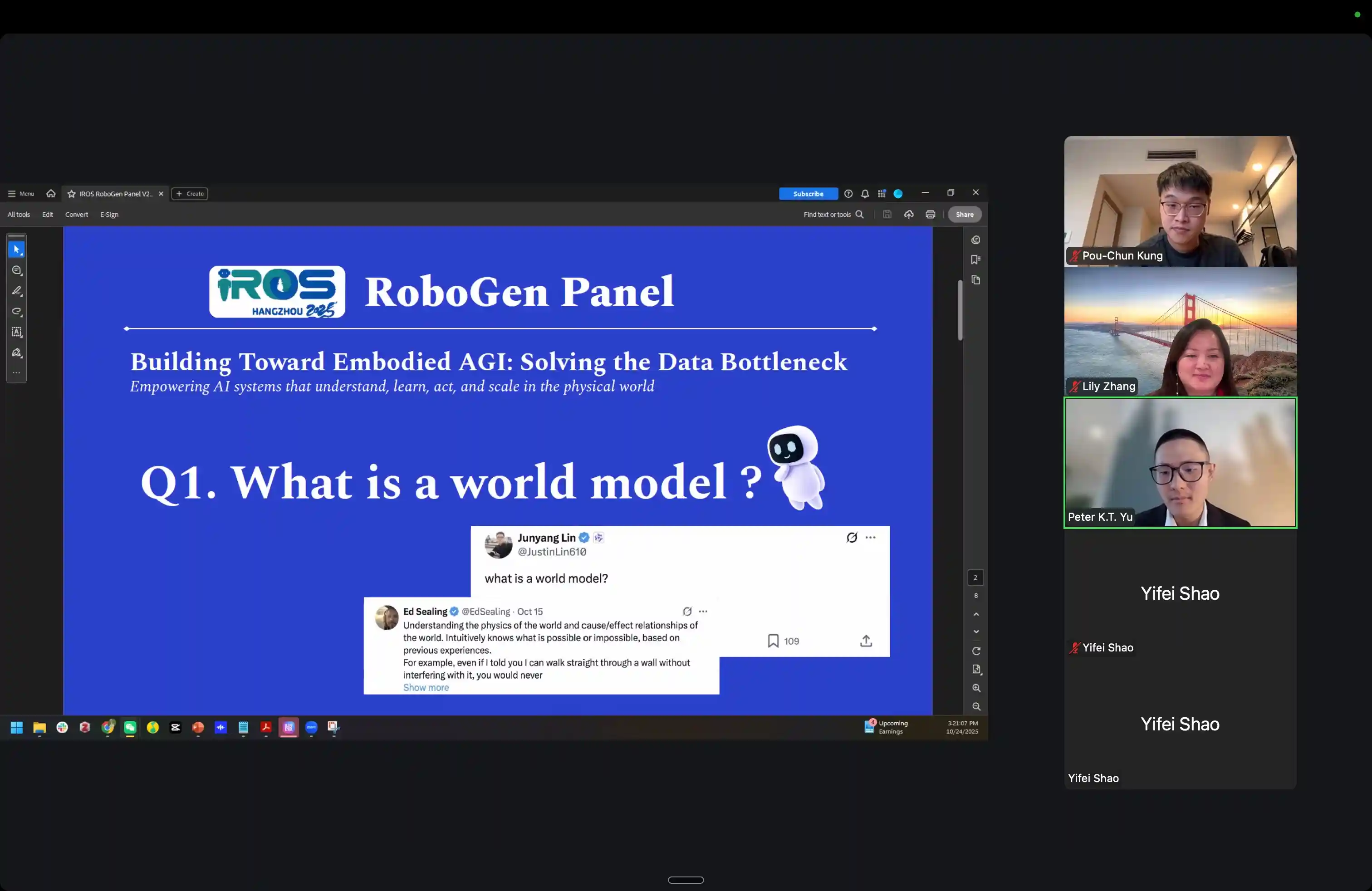

Panel: Building Toward Embodied AGI - Solving the Data Bottleneck

Workshop Photo Highlights

RoboGen Workshop

Siyuan Keynote

Siyuan Presentation

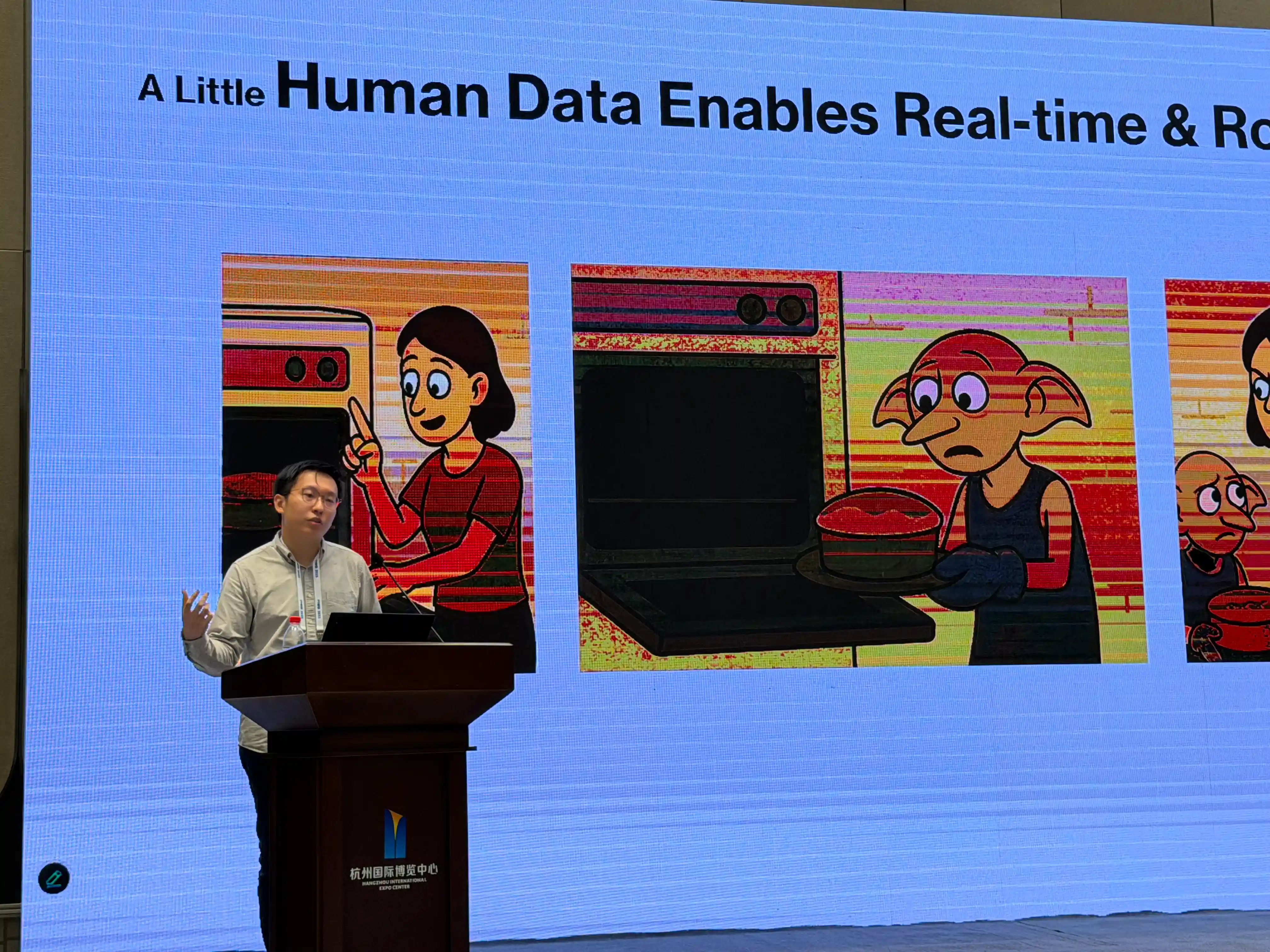

Yifei Presentation

Yifei Talk

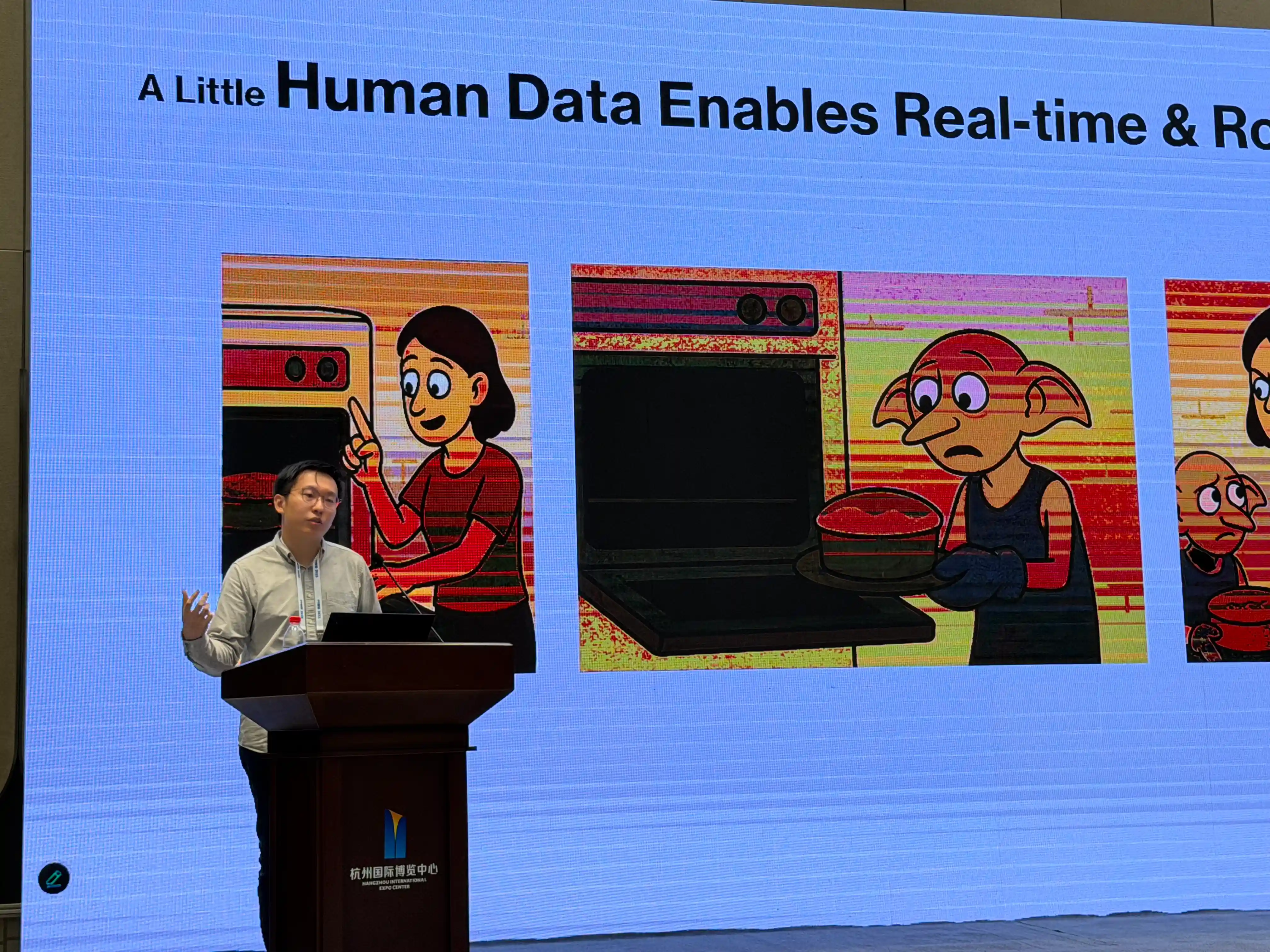

Frank Presentation

Yue Talk

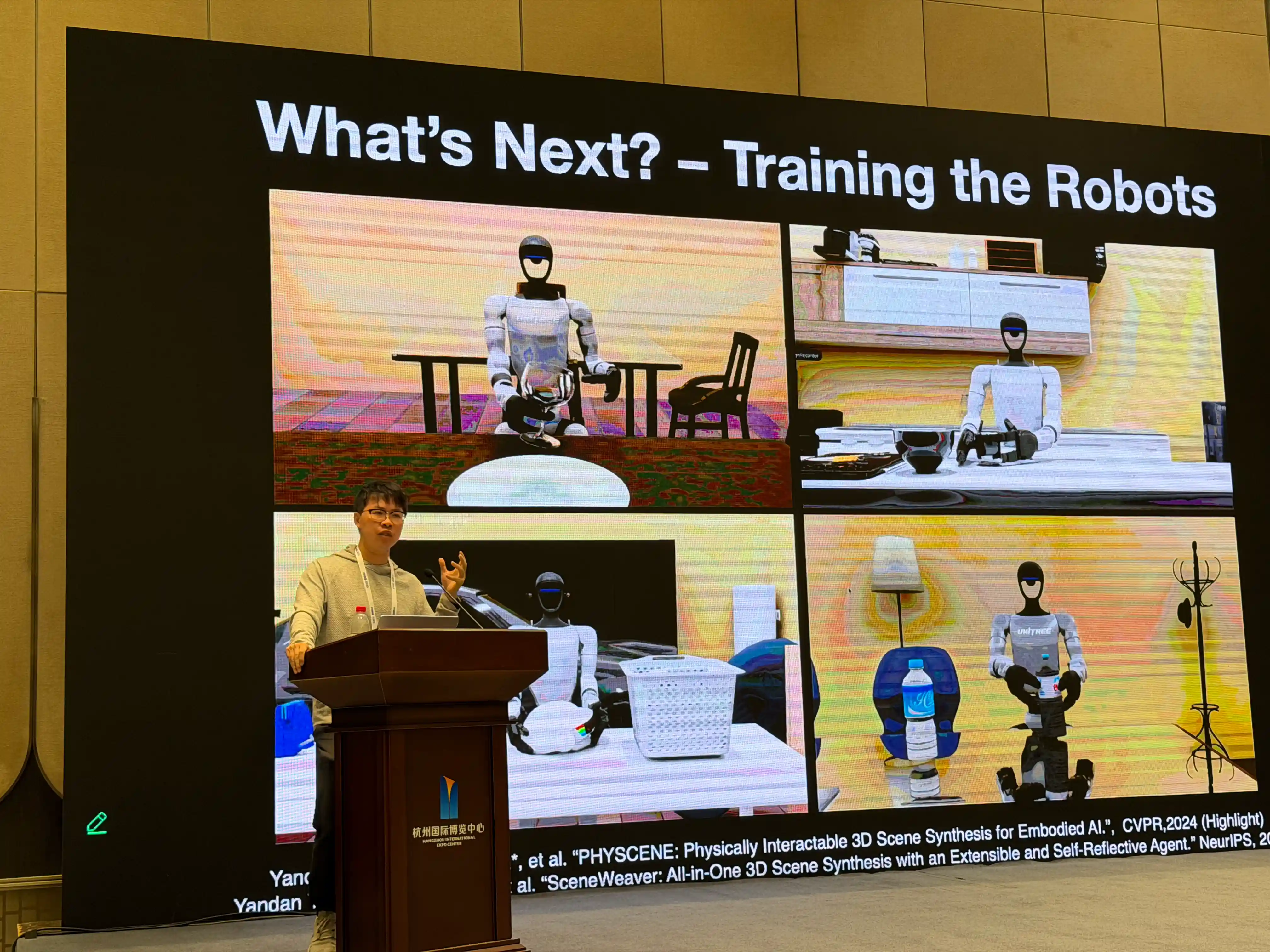

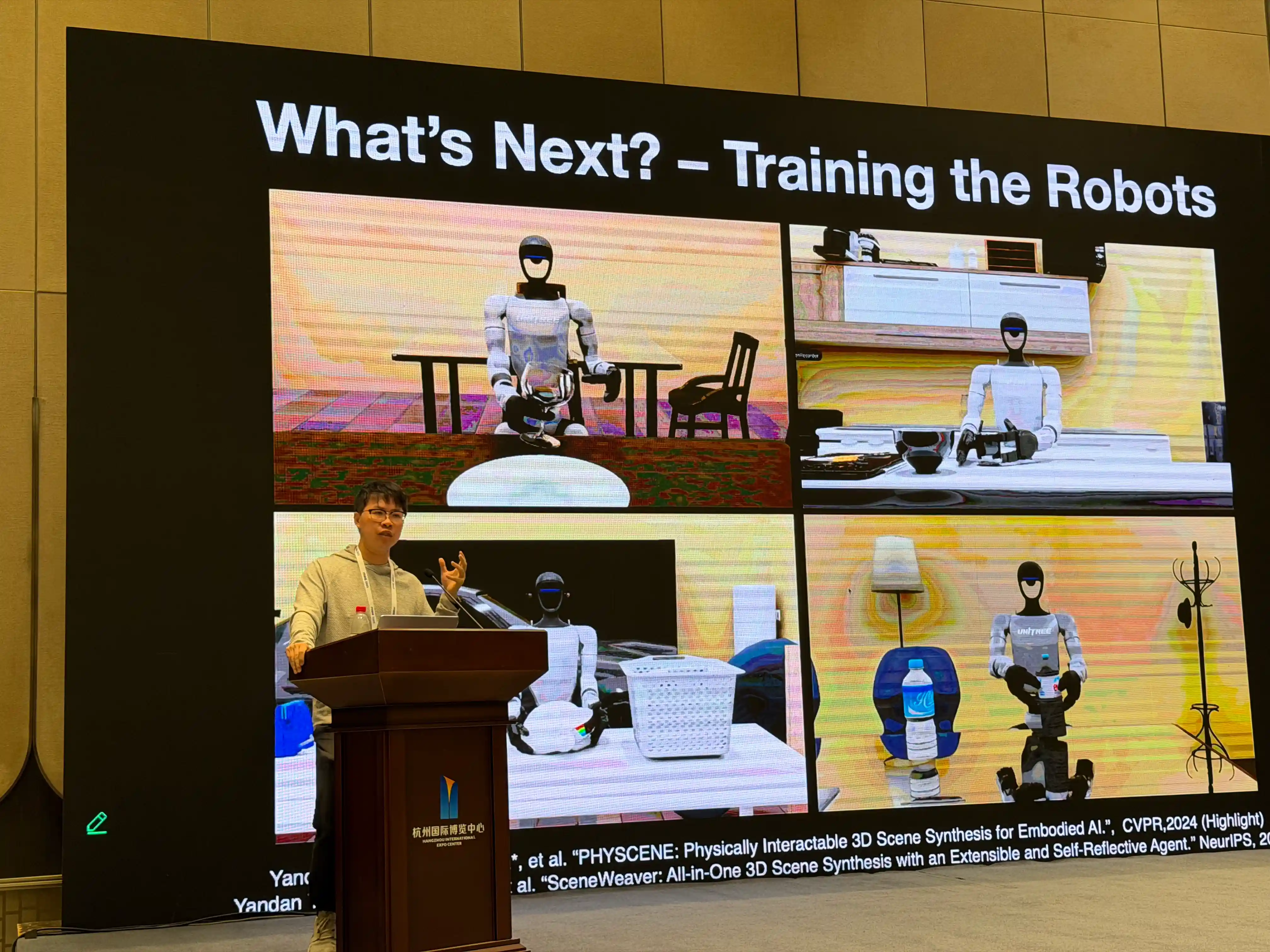

Shenlong Wang

Shenlong Presentation

Tianxing Talk

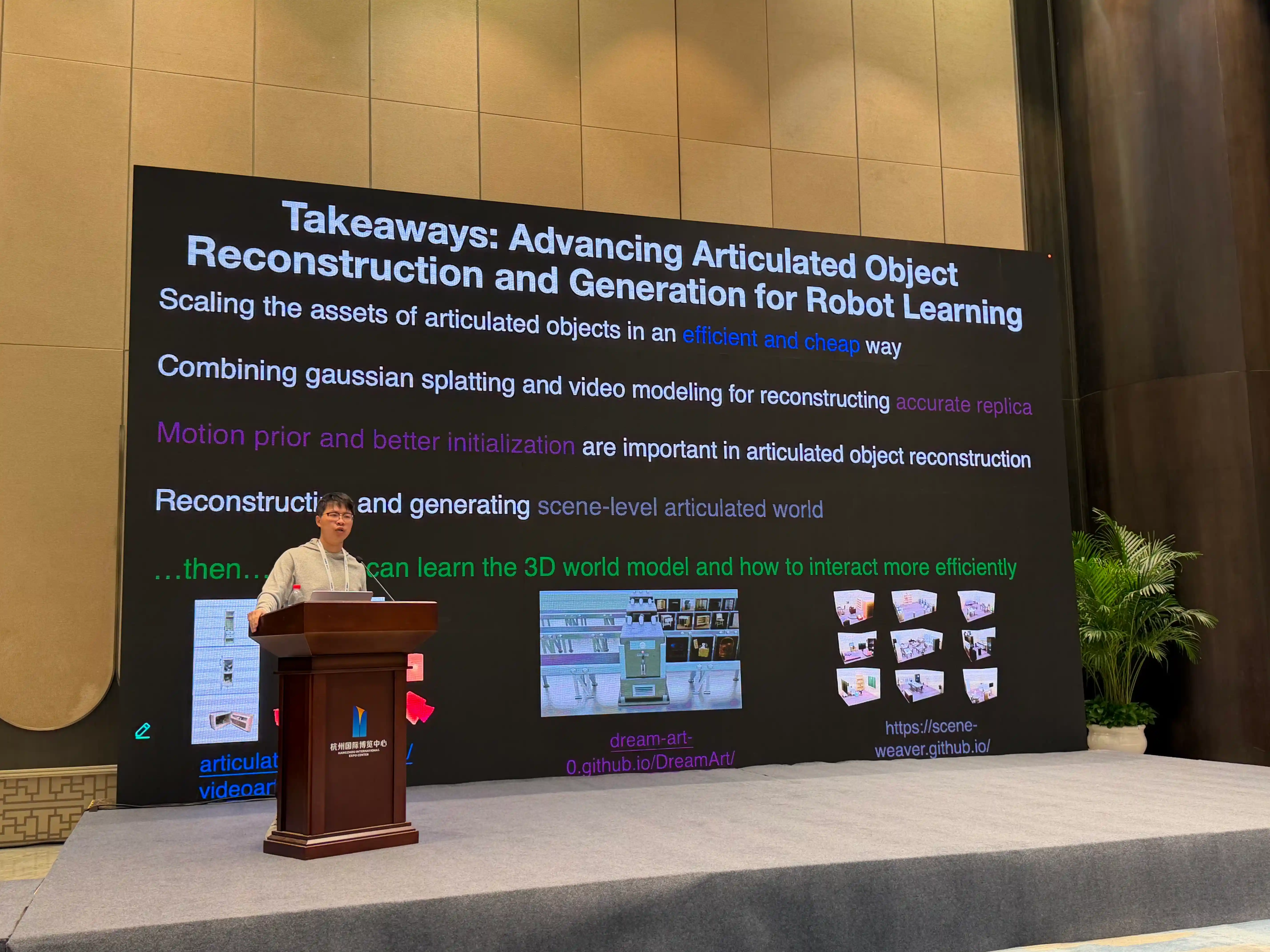

Baoxiong

Baoxiong Presentation

Feishi

Nicholas

Nicholas Presentation

Chenfei

Zhijian

Zhijian and Lily

Peter

Peter Presentation

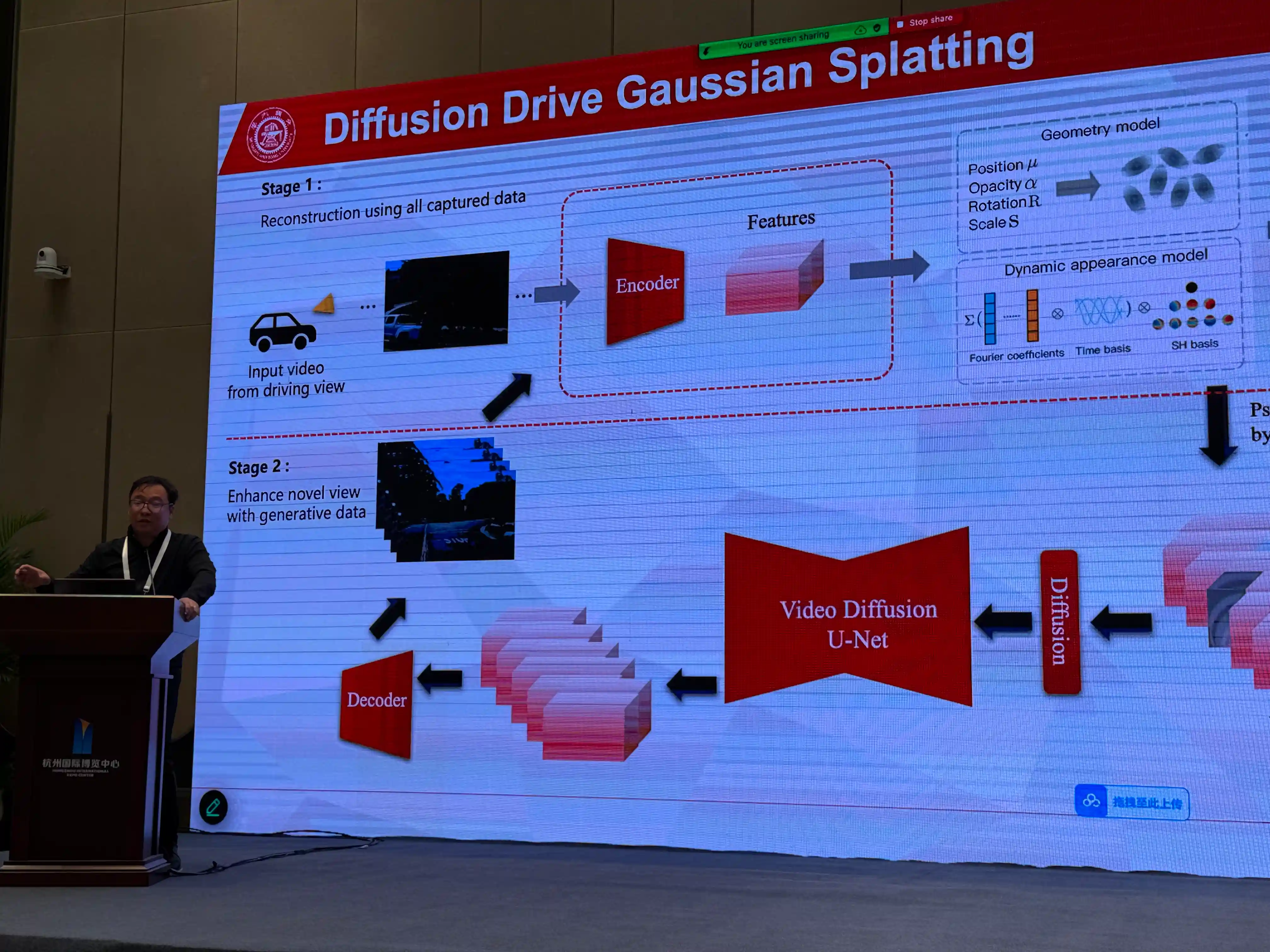

Ruigang

Ruigang Talk

Panel Discussion

Award Ceremony

Award Winners

Award Presentation

Best Paper Award

Award Recipients


Paper Submission

Call for Papers

Important Note

All submissions are non-archival, which allows authors to submit to other conferences and journals in the future. In addition, we welcome papers that have been submitted to or accepted by other venues, as well as highly impactful open-source projects.

📚 Topics

Topics of this workshop include but are not limited to:

- Vision-language conditioned image/video generation

- Embodied robot training through exploration in interactive synthetic environments

- Multimodal world understanding with spatial-temporal reasoning

- Long-horizon AV/robotics video reasoning using chain-of-thought and reinforcement learning

- Post-training multimodal LLMs to reason about the physical world

- Inference-time scaling of general-purpose world models for cost-effective robot deployment

- Simultaneous sensor and traffic simulation for novel environment adaptation in robotics

- Multi-modal sensing integration (radar, lidar) with diffusion-based generative world models

- 4D dynamic scene composition with NeRF and 3D Gaussian Splatting using cost-effective multi-modal sensors

- Robust perception in adverse environments (low-light, long-range, sparse view) using neural rendering

- Robot simultaneous localization and mapping (SLAM) with neural or Gaussian scene representation

📅 Important Dates

| Event | Date |
|---|---|
| Submission open | June 1st, 2025, 11:59 PM AOE |
| Submission due | September 20th, 2025, 11:59 PM AOE (extended from September 1st, 2025, 11:59 PM AOE) |
| Notification to authors | September 27th, 2025, 11:59 PM AOE |
| Camera Ready | October 1st, 2025, 11:59 PM AOE |
| Workshop Date | October 24th, 2025 |

📝 Submission Format

Authors may submit either (1) extended abstracts (2–4 pages), suitable for preliminary work in progress or already-published results, or (2) full papers (6–8 pages) that present original, detailed research contributions (methodology, experiments, and analysis).

Formatting Requirements:

All submissions must follow the IROS/IEEE double-column format, with page limits that include references, appendices, and any additional material. Papers should be submitted as a single PDF file (up to 6 MB). Official formatting templates are available here.

Submission Portal:

RoboGen @ OpenReview

To recognize and encourage outstanding contributions, RoboGen will present awards in the following categories. All awards will be selected by the organizing committee:

- Best Paper: Three best paper candidates will be invited for oral spotlight presentations, with one selected as the winner

- Best Poster: Selected from all accepted papers presented in the poster session

- Best Open-Source: Open to novel datasets, projects or already published open-source works of great impact in the field

- Best Speaker: Awarded to the most engaging speaker across all presentations

Additional Information:

- All submissions will be peer-reviewed

- Optional supplementary materials (videos, images, code) may be submitted as a separate ZIP file

- Accepted papers and abstracts will be made publicly available on this website

- Authors will present their work in either oral or poster sessions

Papers

Accepted Papers

We are excited to present the following accepted papers for the RoboGen workshop. The papers marked with ⭐ will be presented in the oral sessions. All accepted papers will be presented in the poster sessions.

RoboTwin 2.0: A Scalable Data Generator and Benchmark with Strong Domain Randomization for Robust Bimanual Robotic Manipulation

Steerable Scene Generation with Post Training and Inference-Time Search

SceneWeaver: All-in-one 3D Scene Synthesis with an Extensible and Self-Reflective Agent

RoboVerse: Towards a Unified Platform, Dataset and Benchmark for Scalable and Generalizable Robot Learning

Human-level learning for autonomous driving: Learn to drive with Large Multimodal Foundation Models (LMFM)

Reliable 3D Reconstruction from Long-sequence In-the-wild Videos

MASt3R-GS: Bridging 3D Reconstruction Priors with Gaussian Splatting for Real-Time Dense SLAM

Physics-Aware Gaussian Radar Enhancement for All-weather Perception

Speakers

Invited Speakers

Siyuan Huang

Research Scientist, Beijing Institute for General Artificial Intelligence (BIGAI), Director of Center for Embodied AI and Robotics

Yue Wang

Assistant Professor, University of Southern California (USC)

Shenlong Wang

Assistant Professor, University of Illinois Urbana-Champaign (UIUC)

Chenfei Wu

Senior Expert, Tongyi Lab (Qwen), Alibaba

Zhijian Liu

Assistant Professor, University of California San Diego (UCSD); Research Scientist, NVIDIA

Oral Presentations

Best Paper Oral Speakers

Program

Workshop Schedule (Tentative)

All invited talks, oral presentations, and the panel discussion will take place in person at IROS in Hangzhou, China, with support for remote participation.

| Time | Session |
|---|---|
| 08:45 - 09:00 | Opening |
| 09:00 - 09:30 | Keynote |
| 09:30 - 09:45 | Rising Star Talk |
| 09:45 - 10:00 | Rising Star Talk |
| 10:00 - 10:30 | Keynote |
| 10:30 - 11:00 | Keynote |
| 11:00 - 12:00 | Oral Presentations |
| 13:00 - 13:30 | Keynote |
| 13:30 - 14:00 | Keynote |
| 14:00 - 14:30 | Keynote |
| 14:30 - 15:00 | Keynote |
| 15:00 - 16:00 | Panel Discussion |
| 16:00 - 17:00 | Poster Session |
| 17:00 - 17:30 | Award Ceremony |

Award Ceremony

🥇 Best Paper: SceneWeaver

🏅 Best Poster: SteerableGen

💻 Best Open-Source: RoboVerse

🎤 Best Speaker: Shenlong Wang (Best Keynote)

Organizers

Workshop Organizers

Affiliations
