Puhao Li | 李浦豪

I am currently a Ph.D. student in the Department of Automation, Tsinghua University, advised by Prof. Song-Chun Zhu. I am also a research intern in the General Vision Lab at the Beijing Institute for General Artificial Intelligence (BIGAI), where I am grateful to be advised by Dr. Tengyu Liu and Dr. Siyuan Huang. Previously, I obtained my B.Eng. degree from Tsinghua University in 2023.

My research interests lie at the intersection of robotic manipulation and 3D computer vision. My long-term goal is to develop embodied intelligent systems that can interpret human intent, interact naturally with people in diverse environments, and continually learn reusable low-level skills and high-level common sense. Currently, I am working on 3D scene understanding and robotic manipulation learning, pushing the boundaries of how robots operate in complex settings.

Email / CV / Google Scholar / GitHub / Twitter

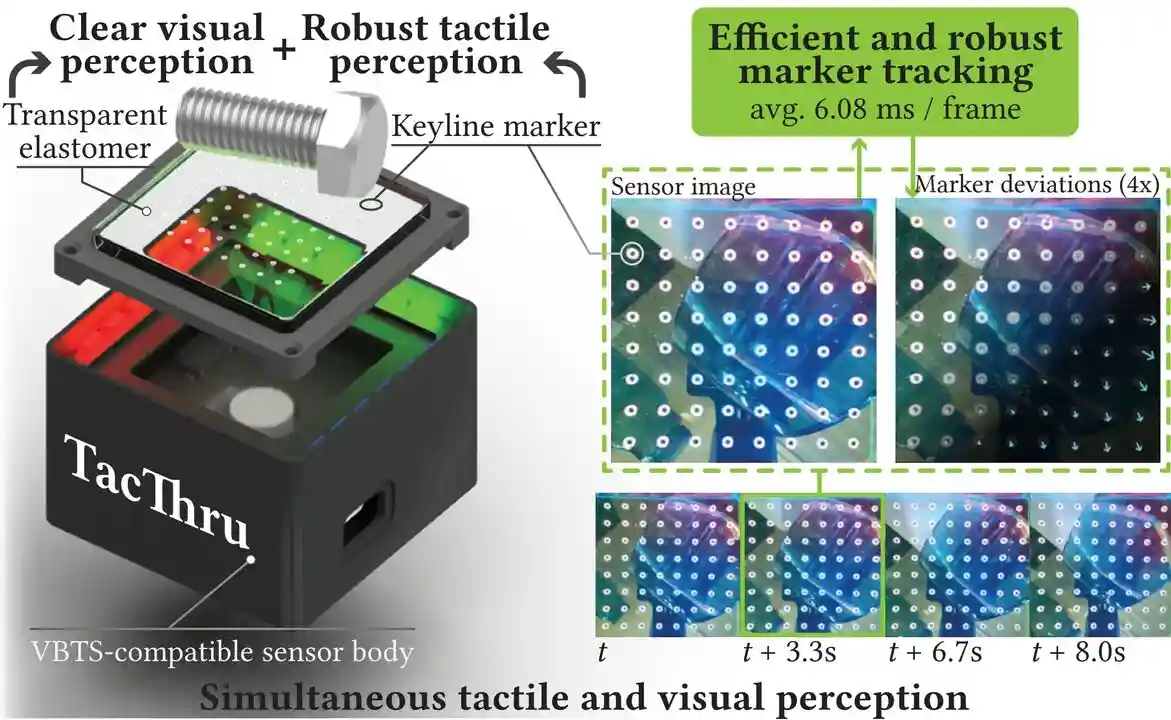

Simultaneous Tactile-Visual Perception for Learning Multimodal Robot Manipulation

Yuyang Li*, Yinghan Chen*, Zihang Zhao, Puhao Li, Tengyu Liu, Siyuan Huang, Yixin Zhu

arXiv 2025 [Paper] [Code] [Data] [Hardware] [Project Page]

We introduce TacThru, an STS sensor that enables simultaneous visual perception and robust tactile signal extraction, and TacThru-UMI, an imitation learning framework that leverages these multimodal signals for robotic manipulation.

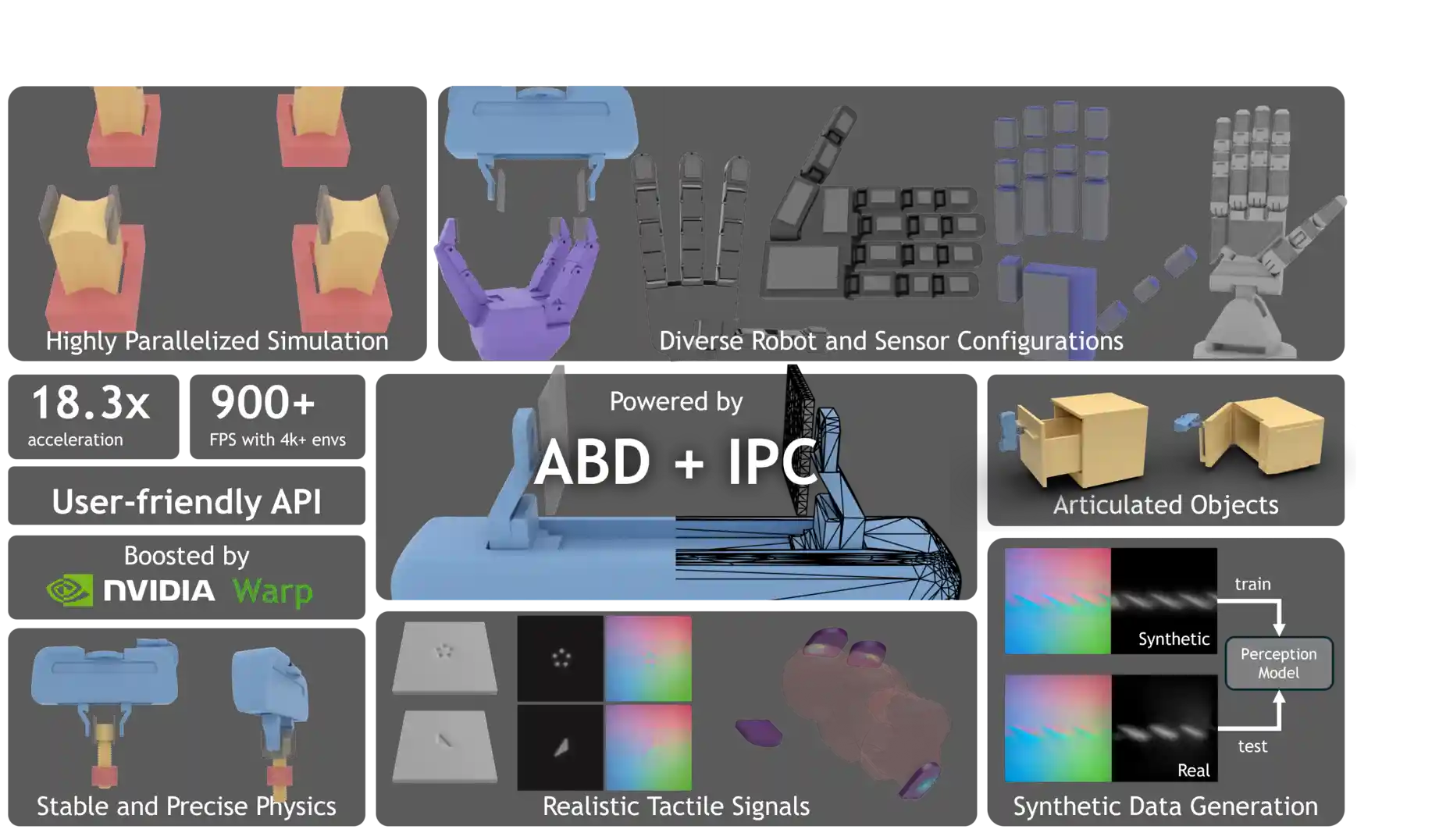

Taccel: Scaling Up Vision-based Tactile Robotics via High-performance GPU Simulation

Yuyang Li*, Wenxin Du*, Chang Yu*, Puhao Li, Zihang Zhao, Tengyu Liu, Chenfanfu Jiang, Yixin Zhu, Siyuan Huang

NeurIPS 2025 (Spotlight) [Paper] [Code] [Docs] [NVIDIA Tech Blog]

We develop Taccel, a high-performance GPU-based simulator that combines ABD (affine body dynamics) and IPC (incremental potential contact) to simulate robots equipped with vision-based tactile sensors.

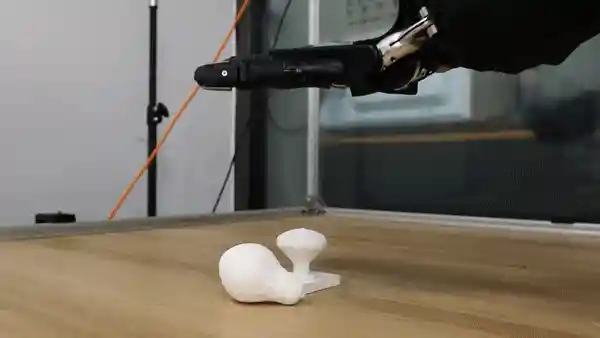

ControlVLA: Few-shot Object-centric Adaptation for Pre-trained VLA models

Puhao Li, Yingying Wu, Ziheng Xi, Wanlin Li, Yuzhe Huang, Zhiyuan Zhang, Yinghan Chen, Jianan Wang, Song-Chun Zhu, Tengyu Liu, Siyuan Huang

CoRL 2025 [Paper] [Code] [Project Page]

We introduce ControlVLA, a few-shot object-centric adaptation method for pre-trained vision-language-action (VLA) models. By reducing demonstration requirements, ControlVLA lowers the barrier to deploying robots in diverse scenarios.

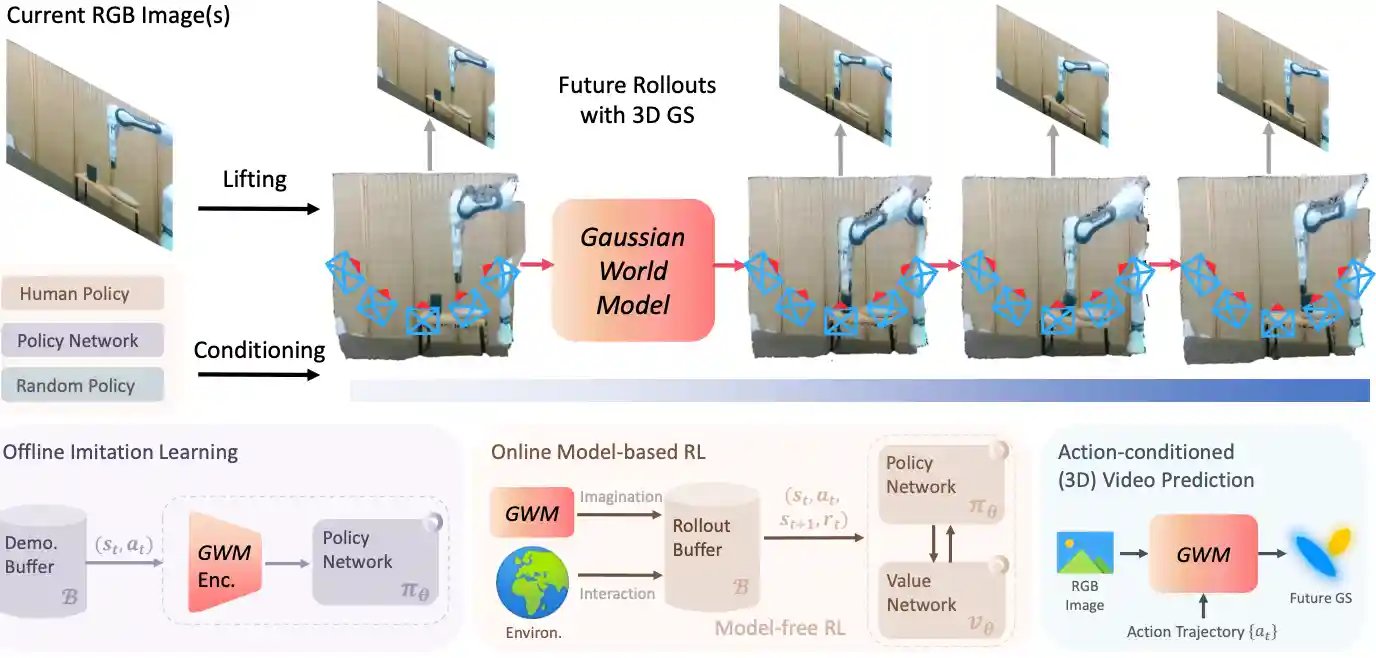

GWM: Towards Scalable Gaussian World Models for Robotic Manipulation

Guanxing Lu*, Baoxiong Jia*, Puhao Li*, Yixin Chen, Ziwei Wang, Yansong Tang, Siyuan Huang

ICCV 2025 [Paper] [Code] [Project Page]

We present the Gaussian World Model (GWM), a world model that uses 3D Gaussian Splatting to predict future dynamics and enable robotic manipulation.

Ag2x2: Robust Agent-Agnostic Visual Representations for Zero-Shot Bimanual Manipulation

Ziyin Xiong*, Yinghan Chen*, Puhao Li, Yixin Zhu, Tengyu Liu, Siyuan Huang

IROS 2025 [Paper] [Code] [Project Page]

We propose Ag2x2, a learning framework for bimanual manipulation built on coordination-aware visual representations that jointly encode object states and hand motion patterns while remaining agent-agnostic.

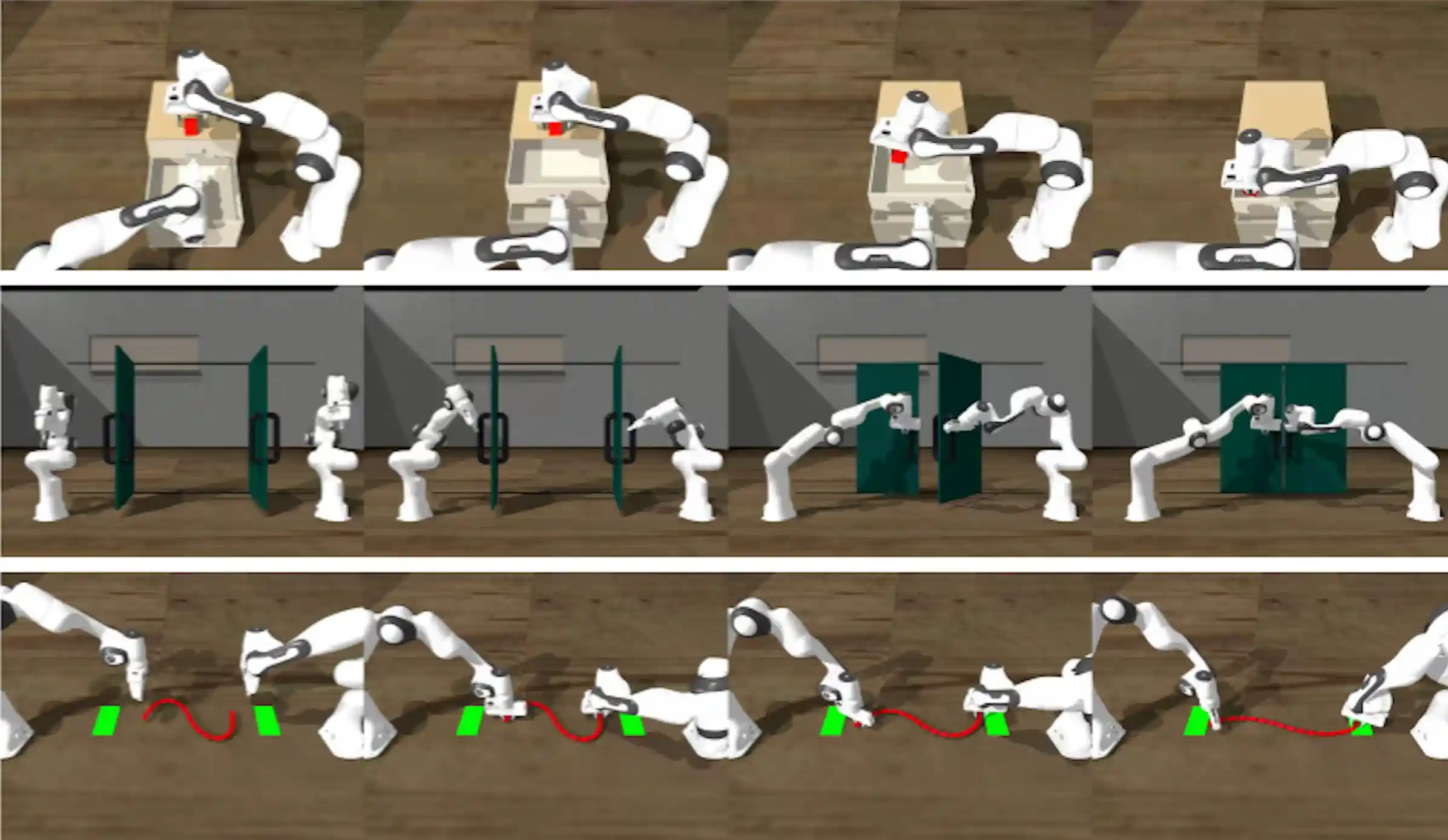

ManipTrans: Efficient Dexterous Bimanual Manipulation Transfer via Residual Learning

Kailin Li, Puhao Li, Tengyu Liu, Yuyang Li, Siyuan Huang

CVPR 2025 [Paper] [Code] [Data] [Project Page]

We introduce ManipTrans, a novel method for efficiently transferring human skills to dexterous robotic hands in simulation. Using ManipTrans, we contribute DexManipNet, a large-scale dexterous manipulation dataset covering diverse tasks.

MetaScenes: Towards Automated Replica Creation for Real-world 3D Scans

Huangyue Yu*, Baoxiong Jia*, Yixin Chen*, Yandan Yang, Puhao Li, Rongpeng Su, Jiaxin Li, Qing Li, Wei Liang, Song-Chun Zhu, Tengyu Liu, Siyuan Huang

CVPR 2025 [Paper] [Code] [Data] [Project Page]

We present MetaScenes, a large-scale 3D scene dataset constructed from real-world scans. It features 706 scenes with 15,366 objects spanning a wide range of types, with realistic layouts, visually accurate appearances, and physical plausibility.

PhysPart: Physically Plausible Part Completion for Interactable Objects

Rundong Luo*, Haoran Geng*, Congyue Deng, Puhao Li, Zan Wang, Baoxiong Jia, Leonidas Guibas, Siyuan Huang

ICRA 2025 [Paper] [Project Page]

We propose a diffusion-based part generation model that utilizes geometric conditioning through classifier-free guidance and formulates physical constraints as a set of stability and mobility losses to guide the sampling process.

PhyRecon: Physically Plausible Neural Scene Reconstruction

Junfeng Ni*, Yixin Chen*, Bohan Jing, Nan Jiang, Bing Wang, Bo Dai, Puhao Li, Yixin Zhu, Song-Chun Zhu, Siyuan Huang

NeurIPS 2024 [Paper] [Code] [Project Page]

We introduce PhyRecon, which enables physically plausible 3D scene reconstruction. PhyRecon features a joint optimization framework incorporating both differentiable rendering and physics-based objectives.

Ag2Manip: Learning Novel Manipulation Skills with Agent-Agnostic Visual and Action Representations

Puhao Li*, Tengyu Liu*, Yuyang Li, Muzhi Han, Haoran Geng, Shu Wang, Yixin Zhu, Song-Chun Zhu, Siyuan Huang

IROS 2024 (Oral Pitch) [Paper] [Code] [Project Page]

We introduce Ag2Manip, which enables various robotic manipulation tasks without any domain-specific demonstrations. Ag2Manip also supports robust imitation learning of manipulation skills in the real world.

Grasp Multiple Objects with One Hand

Yuyang Li, Bo Liu, Yiran Geng, Puhao Li, Yaodong Yang, Yixin Zhu, Tengyu Liu, Siyuan Huang

RA-L, presented at IROS 2024 (Oral Presentation) [Paper] [Code] [Data] [Project Page]

We introduce MultiGrasp, a two-stage framework for simultaneous multi-object grasping with multi-finger dexterous hands. In addition, we contribute Grasp'Em, a large-scale synthetic multi-object grasping dataset.

Move as You Say, Interact as You Can: Language-guided Human Motion Generation with Scene Affordance

Zan Wang, Yixin Chen, Baoxiong Jia, Puhao Li, Jinlu Zhang, Jingze Zhang, Tengyu Liu, Yixin Zhu, Wei Liang, Siyuan Huang

CVPR 2024 (Highlight) [Paper] [Code] [Project Page]

We introduce a novel two-stage framework that employs scene affordance as an intermediate representation, effectively linking 3D scene grounding and conditional motion generation.

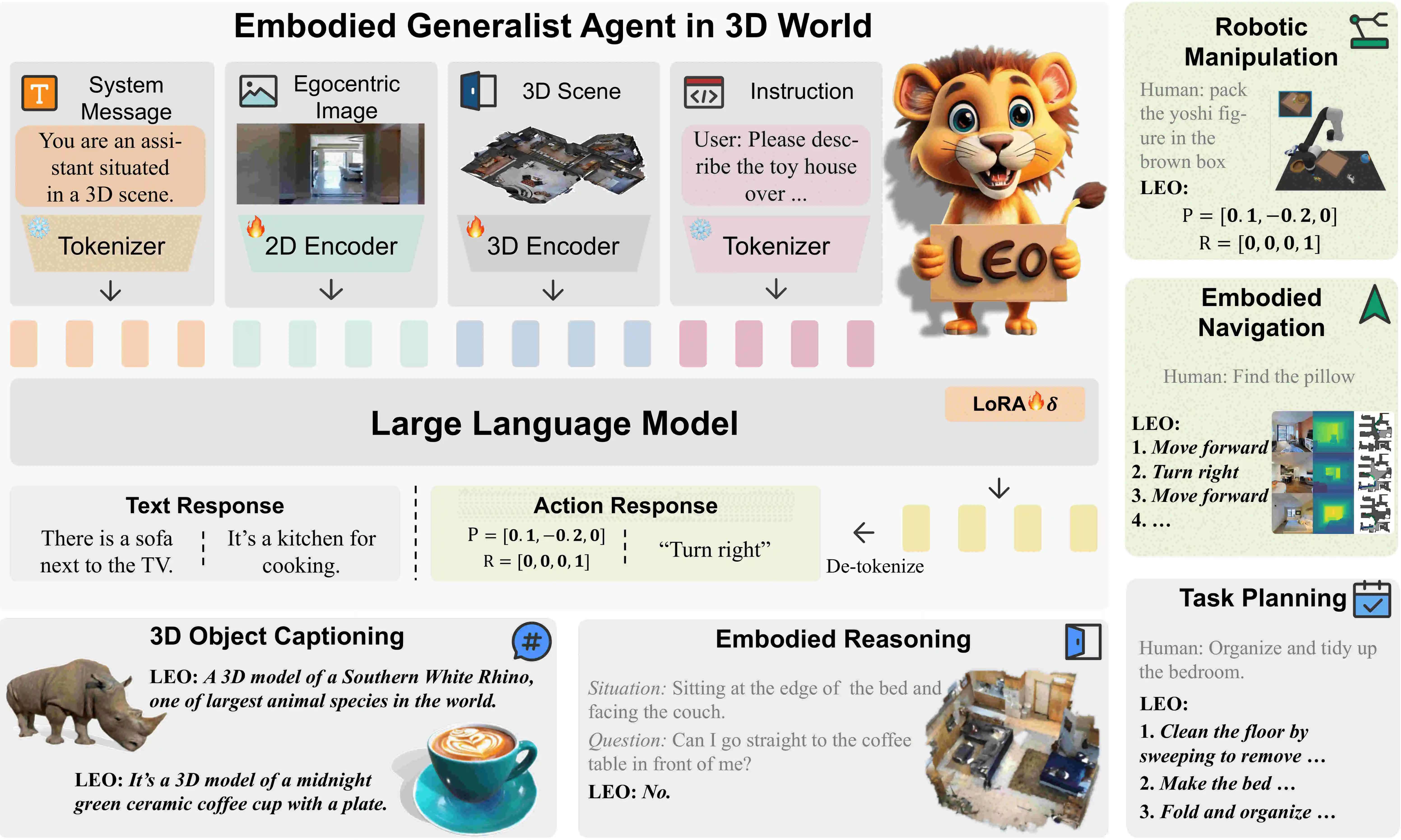

An Embodied Generalist Agent in 3D World

Jiangyong Huang*, Silong Yong*, Xiaojian Ma*, Xiongkun Linghu*, Puhao Li, Yan Wang, Qing Li, Song-Chun Zhu, Baoxiong Jia, Siyuan Huang

ICML 2024; ICLR 2024 LLMAgents Workshop [Paper] [Code] [Data] [Project Page]

We introduce LEO, an embodied multimodal, multi-task generalist agent that excels at perceiving, grounding, reasoning, planning, and acting in the 3D world.

Diffusion-based Generation, Optimization, and Planning in 3D Scenes

Siyuan Huang*, Zan Wang*, Puhao Li, Baoxiong Jia, Tengyu Liu, Yixin Zhu, Wei Liang, Song-Chun Zhu

CVPR 2023 [Paper] [Code] [Project Page] [Hugging Face]

We introduce SceneDiffuser, a unified conditional generative model for 3D scene understanding. In contrast to prior work, SceneDiffuser is intrinsically scene-aware, physics-based, and goal-oriented.

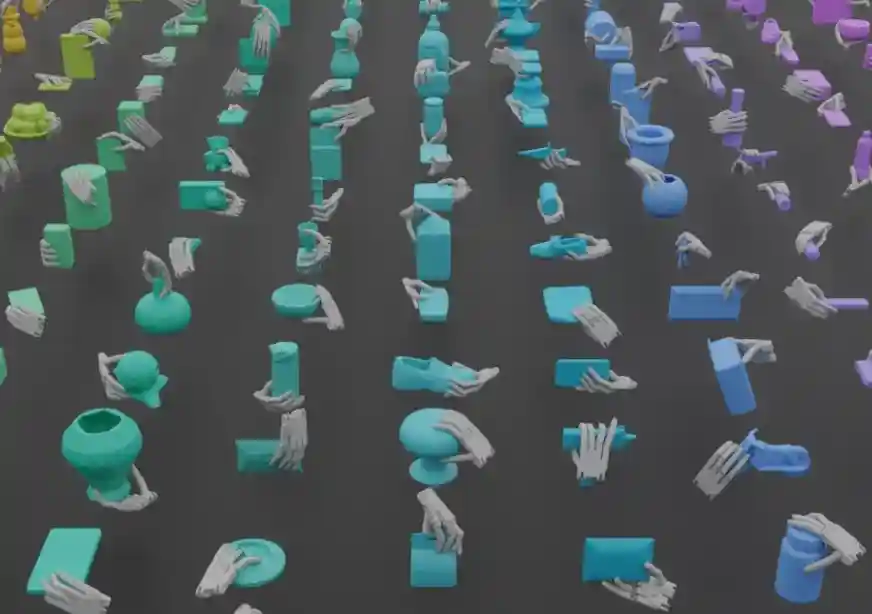

GenDexGrasp: Generalizable Dexterous Grasping

Puhao Li*, Tengyu Liu*, Yuyang Li, Yiran Geng, Yixin Zhu, Yaodong Yang, Siyuan Huang

ICRA 2023 [Paper] [Code] [Data] [Project Page]

We introduce GenDexGrasp, a versatile dexterous grasping method that can generalize to out-of-domain robotic hands. In addition, we contribute MultiDex, a large-scale synthetic dexterous grasping dataset.

DexGraspNet: A Large-Scale Robotic Dexterous Grasp Dataset for General Objects Based on Simulation

Ruicheng Wang*, Jialiang Zhang*, Jiayi Chen, Yinzhen Xu, Puhao Li, Tengyu Liu, He Wang

ICRA 2023 (Oral Presentation, Outstanding Manipulation Paper Finalist) [Paper] [Code] [Data] [Project Page]

We introduce DexGraspNet, a large-scale, simulation-based dexterous grasping dataset. DexGraspNet offers greater physical stability and higher diversity than previous grasping datasets.

Tsinghua University, China

2023.09 - present

Ph.D. Student

Beijing Institute for General Artificial Intelligence (BIGAI), China

2021.09 - present

Research Intern

Tsinghua University, China

2019.08 - 2023.06

Undergraduate Student

Feel free to contact me if you have any questions. Thanks for visiting 😊

This page is based on the design of Jon Barron's website and is deployed on GitHub Pages.