Zhengqi Li

I am a research scientist at Google DeepMind. Previously, I was a research scientist at Google Research and Adobe Research. I earned my Ph.D. in Computer Science from Cornell University, where I was advised by Prof. Noah Snavely. My work has been recognized with several honors, including the 2020 Google Ph.D. Fellowship, the 2020 Adobe Research Fellowship, the 2021 Baidu Global Top 100 Rising Stars in AI, Best Paper Honorable Mention Awards at CVPR 2019, CVPR 2023, and CVPR 2025, the ICCV 2023 Best Student Paper Award, and the CVPR 2024 Best Paper Award.

Publications/Technical Reports

RELIC: Interactive Video World Model with Long-Horizon Memory

[www]

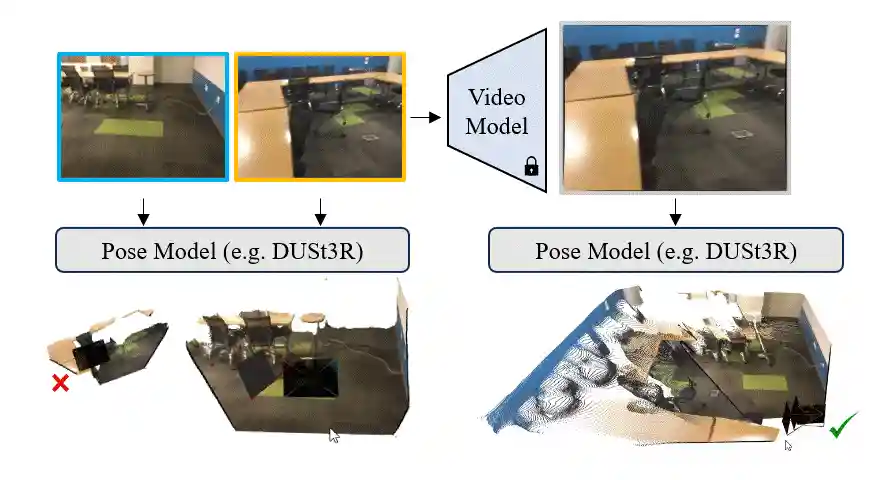

MegaSaM: Accurate, Fast and Robust Structure and Motion from Casual Dynamic Videos

Zhengqi Li, Richard Tucker, Forrester Cole, Qianqian Wang, Linyi Jin, Vickie Ye, Angjoo Kanazawa, Aleksander Holynski, Noah Snavely

In CVPR, 2025 (Best Paper Honorable Mention)

[www]

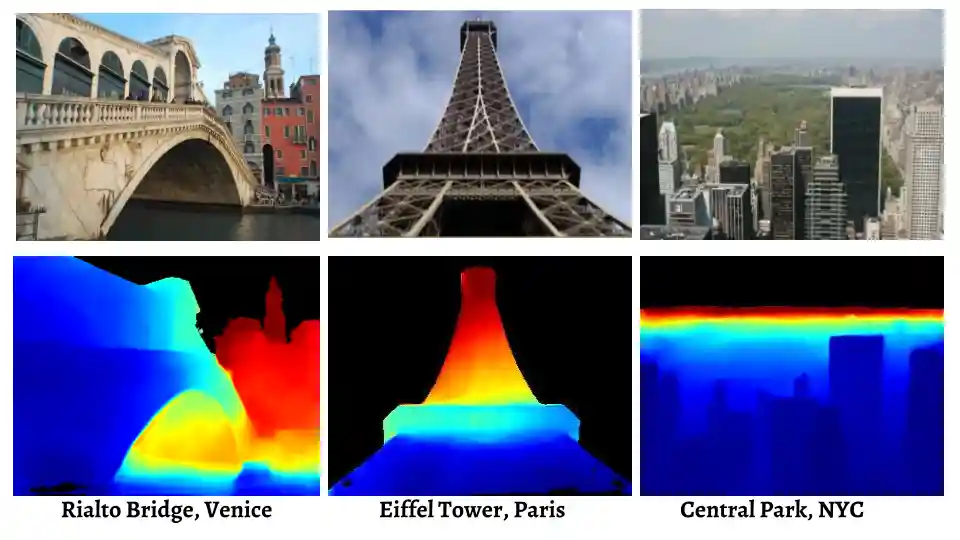

MegaDepth: Learning Single-View Depth Prediction from Internet Photos

In CVPR, 2018 (also invited for presentation at the Bridges to 3D Workshop, CVPR 2018)