Egocentric 4D Perception (EGO4D)

A massive-scale, egocentric dataset and benchmark suite collected across 74 worldwide locations and 9 countries, with over 3,670 hours of daily-life activity video.


MASSIVE SCALE

Ego4D is a massive-scale egocentric dataset of unprecedented diversity. It consists of 3,670 hours of video collected by 923 unique participants from 74 worldwide locations in 9 different countries.

The project brings together 88 researchers in an international consortium to increase the scale of publicly available egocentric data by an order of magnitude, making it more than 20x greater than any other dataset in terms of hours of footage.

Ego4D aims to catalyse the next era of research in first-person visual perception.

DIVERSE

The dataset is diverse in its geographic coverage, scenarios, participants and captured modalities. We consulted a survey from the U.S. Bureau of Labor Statistics that captures how people spend the bulk of their time.

Data was captured using seven different off-the-shelf head-mounted cameras: GoPro, Vuzix Blade, Pupil Labs, ZShades, ORDRO EP6, iVue Rincon 1080, and Weeview.

In addition to video, portions of Ego4D offer other data modalities: 3D scans, audio, gaze, stereo, multiple synchronized wearable cameras, and textual narrations.

Check our ArXiv paper for details.

PRIVACY/ETHICS

From the outset, privacy and ethics standards were critical to this data collection effort. Each partner was responsible for developing a policy. While specifics vary per site, common guidelines have been followed.

Please refer to our statement by members of the consortium on issues of privacy and ethics for further details.

Should any researcher, participant or data user encounter clips that have been insufficiently redacted, or have any other privacy concerns, please contact us immediately at privacy@ego4d-data.org.

Challenges

EGO4D Consortium

This initiative is led by an international consortium of 13 universities, in partnership with Facebook AI, that collaborate to advance egocentric perception.

QUESTIONS / ANSWERS

What to cite referencing this effort?

If you use the dataset or annotations, or draw inspiration from this work, cite the ArXiv paper:

K. Grauman et al. Ego4D: Around the World in 3,000 Hours of Egocentric Video. arXiv preprint arXiv:2110.07058, 2021. BibTeX (.bib)

How can I download the dataset?

Ego4D is now publicly available. To obtain the dataset or any annotations, you must first review our license agreement and accept the terms. Go here to review and execute this agreement; once your license agreement is approved, which will take 48 hours, you will be emailed a set of AWS access credentials. You can review a draft of the licenses before signing here. In the meantime, you can check out the data overview and sample notebooks here to get familiar with the dataset, and download the CLI and dataloaders to get set up in advance.

What metadata is available?

For each video, we provide information about the collecting partner/university, date of recording, and recording equipment, as well as the video parts when the video is made up of smaller chunks. Information about the availability of IMU and audio, and whether videos have been redacted, is also included. Overviews of the metadata and annotations can be found in our docs.
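As a rough illustration of how the per-video metadata described above might be used, the sketch below models one record and filters a collection by IMU, audio and redaction status. The class name, field names and sample records are hypothetical and chosen only to mirror the description; the released metadata may use a different layout and different keys, so check the docs before relying on any of them.

```python
from dataclasses import dataclass, field
from typing import Iterable, List


@dataclass
class VideoRecord:
    # Hypothetical field names mirroring the metadata described above;
    # the released metadata files may use different keys.
    video_id: str
    collecting_partner: str
    recording_date: str
    device: str
    parts: List[str] = field(default_factory=list)  # chunks, if the video is split
    has_imu: bool = False
    has_audio: bool = False
    is_redacted: bool = False


def select_videos(
    records: Iterable[VideoRecord],
    require_imu: bool = False,
    require_audio: bool = False,
    exclude_redacted: bool = False,
) -> List[VideoRecord]:
    """Return the records that satisfy every requested availability filter."""
    selected = []
    for record in records:
        if require_imu and not record.has_imu:
            continue
        if require_audio and not record.has_audio:
            continue
        if exclude_redacted and record.is_redacted:
            continue
        selected.append(record)
    return selected


# Made-up example records, for illustration only.
records = [
    VideoRecord("vid-001", "partner-a", "2021-03-01", "GoPro",
                has_imu=True, has_audio=True),
    VideoRecord("vid-002", "partner-b", "2021-04-15", "Vuzix Blade",
                has_audio=True, is_redacted=True),
    VideoRecord("vid-003", "partner-a", "2021-05-20", "GoPro",
                parts=["part-1", "part-2"], has_imu=True),
]

with_imu_and_audio = select_videos(records, require_imu=True, require_audio=True)
print([r.video_id for r in with_imu_and_audio])
```

Keeping the filters as independent boolean flags makes it easy to combine them, for example to select only unredacted videos that also carry IMU data.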

Who collected this data?

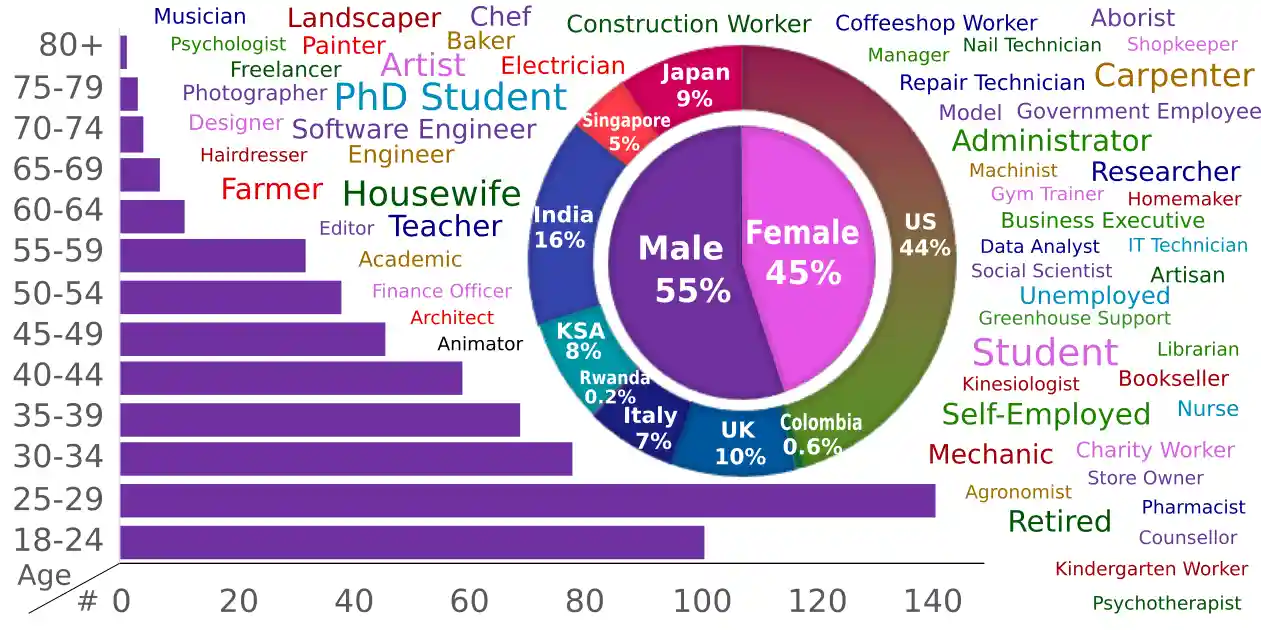

The data was collected from 923 participants. We show the distribution of age, gender and occupation for the roughly 70% of participants who volunteered to self-identify their demographics: age, gender, country of residence, and occupation.

Does the data contain identifying information of individuals?

The collecting partner holds consent forms and/or release forms for all videos. The data contains faces and other identifying information only when consent has been collected from participants. For the majority of videos, data has been de-identified pre-release. Refer to our privacy statement and ArXiv paper (Sec 3.4 and Appendix C) for details of our privacy and de-identification pipeline.

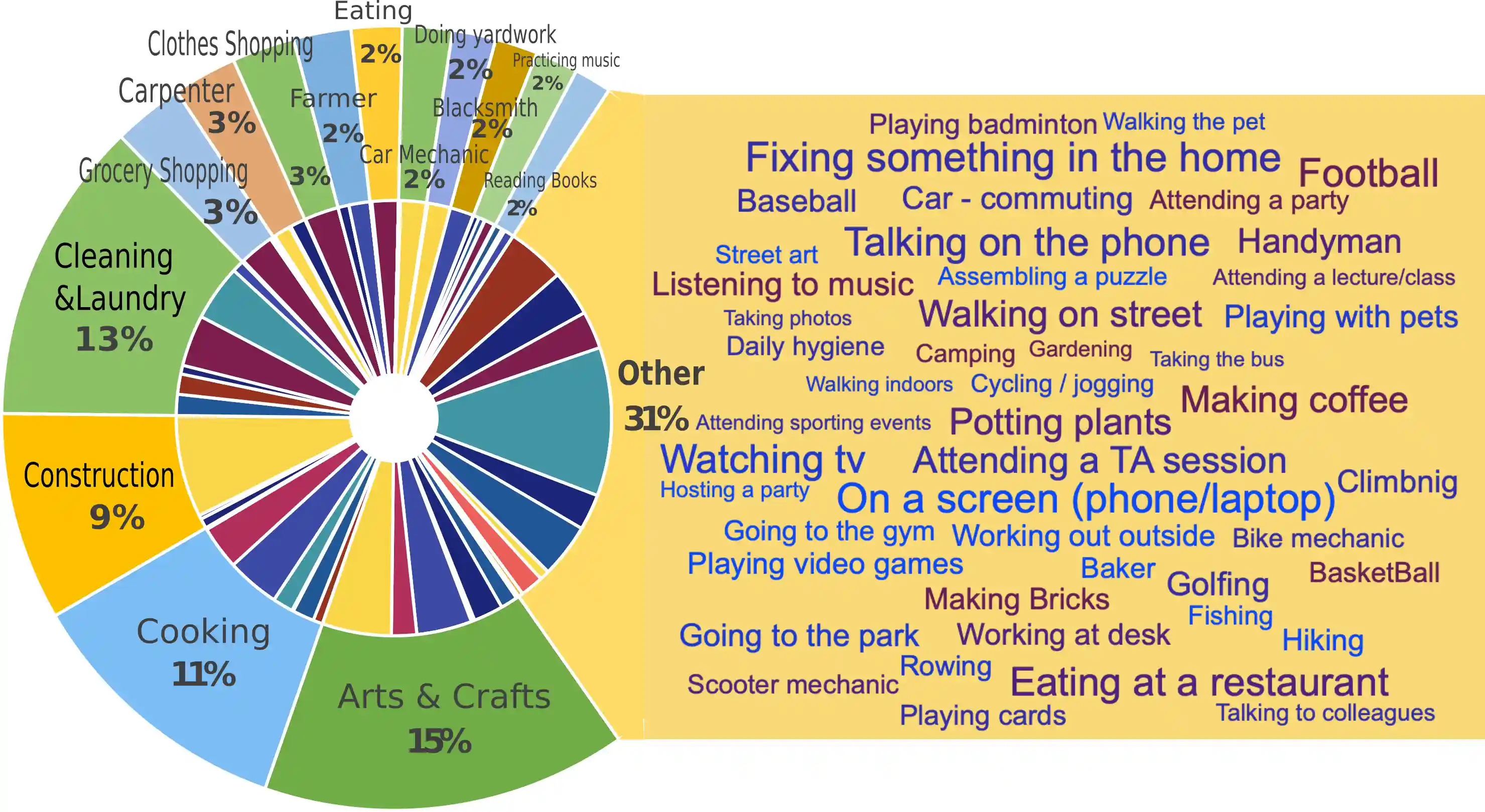

What coverage of scenarios do you have?

A sample visualisation of our scenarios is below. The outer circle shows the 14 most common scenarios (70% of the data). The word cloud shows scenarios in the remaining 30%. The inner circle is color coded by contributing partner (see map markers above).

What equipment, resolution and frame rate are available?

This depends on equipment. To avoid models overfitting to a single capture device, seven different head-mounted cameras were deployed across the dataset: GoPro, Vuzix Blade, Pupil Labs, ZShades, ORDRO EP6, iVue Rincon 1080, and Weeview. We release all footage using the native resolution, but also offer a standardised frame-rate version of 30fps for ease of use. All benchmark results use the standardised version.
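When working with both a native-rate video and the standardised 30fps version, frame indices need to be converted through timestamps. The sketch below shows that arithmetic under stated assumptions: the function name is hypothetical, the native frame rates in the example (60 and 25 fps) are illustrative values rather than figures from the dataset, and both videos are assumed to start at the same time.

```python
def native_to_standard_frame(
    native_index: int,
    native_fps: float,
    standard_fps: float = 30.0,
) -> int:
    """Map a frame index at the native frame rate to the nearest frame
    index at the standardised frame rate, via the shared timestamp."""
    if native_index < 0:
        raise ValueError("native_index must be non-negative")
    if native_fps <= 0 or standard_fps <= 0:
        raise ValueError("frame rates must be positive")
    timestamp_seconds = native_index / native_fps
    return int(round(timestamp_seconds * standard_fps))


# Illustrative native frame rates; not taken from the dataset documentation.
print(native_to_standard_frame(120, native_fps=60.0))  # frame at t = 2 s
print(native_to_standard_frame(50, native_fps=25.0))   # frame at t = 2 s
```

Going through the timestamp rather than multiplying indices by a fixed ratio keeps the conversion correct for any pair of frame rates.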

EGO4D Team

Carnegie Mellon University, Pittsburgh, U.S.

Carnegie Mellon University Africa, Rwanda

King Abdullah University of Science and Technology, KSA

University of Minnesota, U.S.

- Hyun Soo Park (PI)

- Jayant Sharma

- Tien Do

- Zachary Chavis

International Institute of Information Technology, Hyderabad, India

Indiana University Bloomington, U.S.

- David Crandall (PI)

- Yuchen Wang

- Weslie Khoo

University of Pennsylvania, U.S.

- Jianbo Shi (PI)

University of Catania, Italy

University of Tokyo, Japan

Facebook AI Research, International

- Kristen Grauman (PI)

- Jitendra Malik (PI)

- Dhruv Batra

- Eugene Byrne

- Vincent Cartillier

- Morrie Doulaty

- Akshay Erapalli

- Christian Fuegen

- Rohit Girdhar

- Jackson Hamburger

- Tal Hassner

- James Hillis, FRL

- Vamsi Krishna Ithapu, FRL

- Hao Jiang

- Hanbyul Joo

- Jachym Kolar

- Satwik Kottur

- Devansh Kukreja

- Anurag Kumar, FRL

- Federico Landini

- Chao Li, FRL

- Miguel Martin

- Tullie Murrell

- Tushar Nagarajan

- Christoph Feichtenhofer

- Karttikeya Mangalam

- Richard Newcombe, FRL

- Santhosh Kumar Ramakrishnan

- Leda Sari, FRL

- Kiran Somasundaram, FRL

- Lorenzo Torresani

- Minh Vo, FRL

- Andrew Westbury

- Mingfei Yan, FRL

DOWNLOAD EGO4D

The dataset was released on 17 Feb 2022 and is now publicly available.

License forms must be signed to access videos, metadata and annotations.

Sign Ego4D Licenses ↗

Starting code and README files are available.

Start Here ↗