Yufei Ding | 丁昱菲

Research

SoFar: Language-Grounded Orientation Bridges Spatial Reasoning and Object Manipulation

Zekun Qi*, Wenyao Zhang*, Yufei Ding*, Runpei Dong, Xinqiang Yu, Jingwen Li, Lingyun Xu, Baoyu Li, Xialin He, Guofan Fan, Jiazhao Zhang, Jiawei He, Jiayuan Gu, Xin Jin, Kaisheng Ma, Zhizheng Zhang, He Wang, Li Yi

NeurIPS 2025, Spotlight

Paper / Project Page / Code / Hugging Face

We introduce the concept of semantic orientation and propose a generalizable model for language-grounded spatial reasoning and robot manipulation.

Open6DOR: Benchmarking Open-instruction 6-DoF Object Rearrangement and A VLM-based Approach

Yufei Ding*, Haoran Geng*, Chaoyi Xu, Xiaomeng Fang, Jiazhao Zhang, Songlin Wei, Qiyu Dai, Zhizheng Zhang, He Wang†

Paper / Project Page / Code / Video / Bibtex

IROS 2024, Oral Presentation

CVPR 2024 @ VLADA, Oral Presentation

ICRA 2024 @ 3D Manipulation, Spotlight

We present Open6DOR, a challenging and comprehensive benchmark for open-instruction 6-DoF object rearrangement tasks. We also propose a zero-shot method, Open6DORGPT, which achieves state-of-the-art performance and proves effective in demanding simulation environments and real-world scenarios.

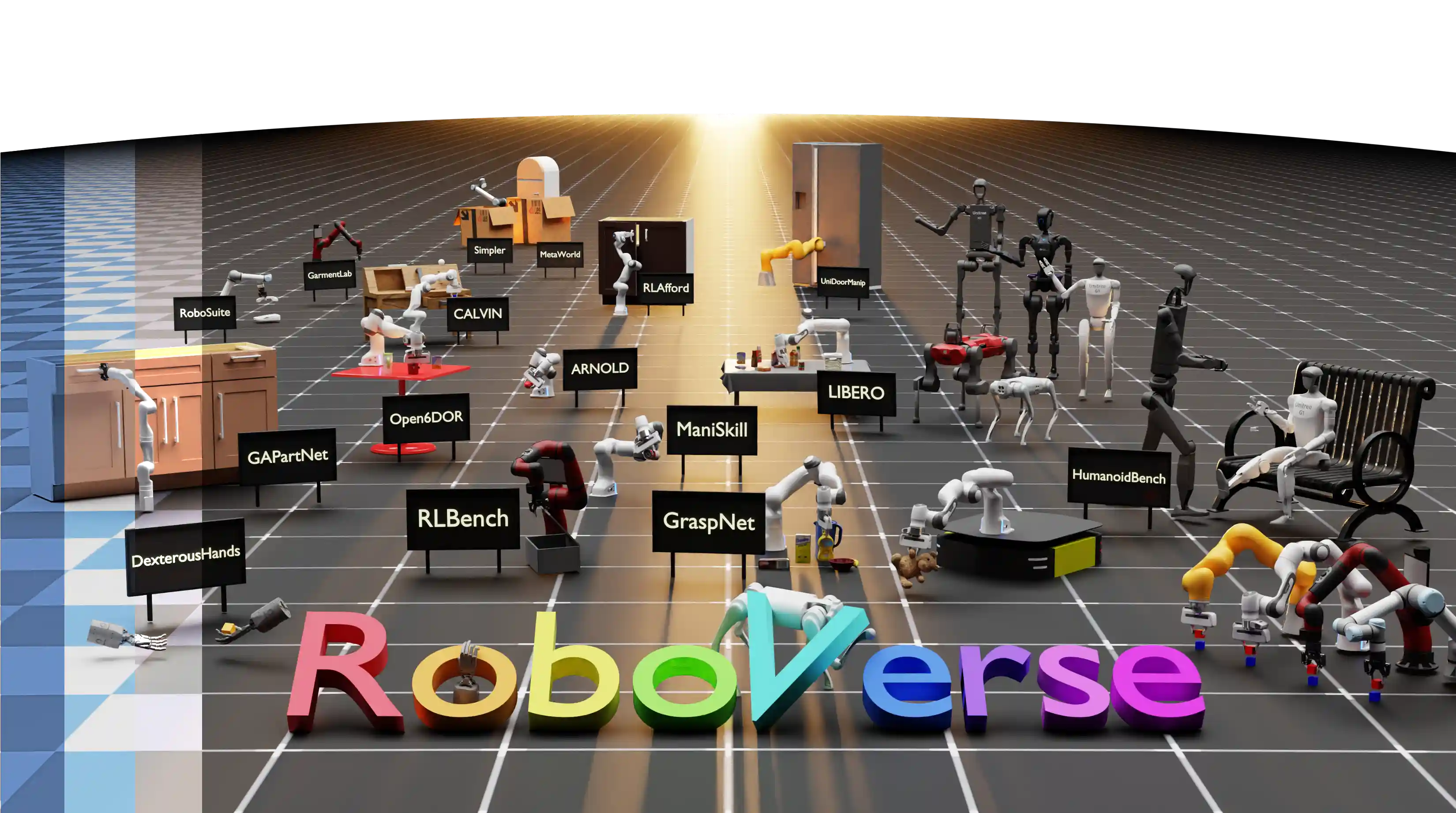

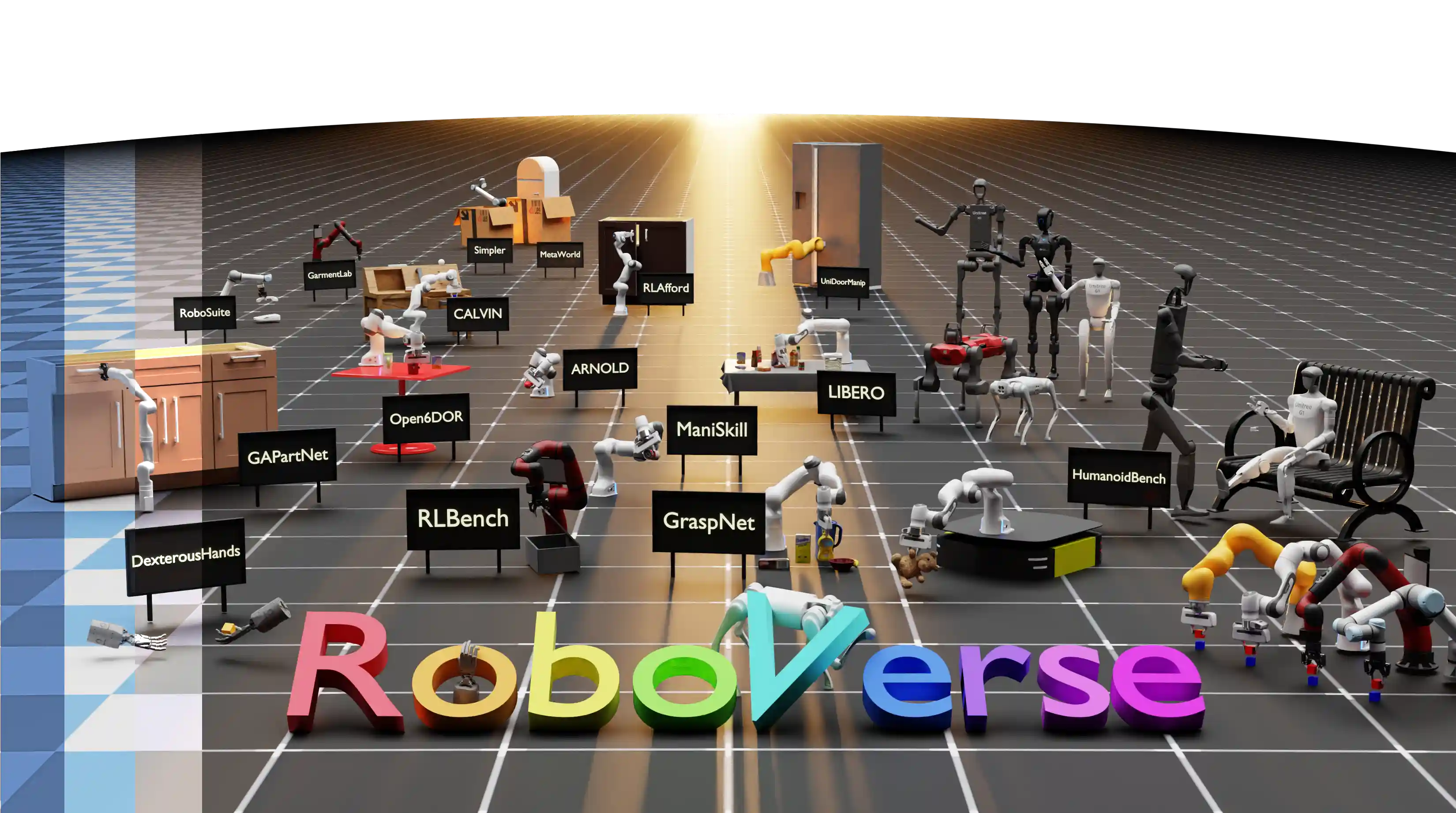

RoboVerse Team

Paper / Project / Code / Bibtex

RSS 2025

We propose RoboVerse, a comprehensive framework for advancing robotics through a simulation platform, synthetic dataset, and unified benchmarks. Its MetaSim infrastructure abstracts diverse simulators into a universal interface, ensuring interoperability and extensibility. RoboVerse improves sim-to-real transfer and enables consistent evaluation for imitation and reinforcement learning, addressing key challenges in scaling robotic data and benchmarking.

DexGraspNet 2.0: Learning Generative Dexterous Grasping in Large-scale Synthetic Cluttered Scenes

Jialiang Zhang*, Haoran Liu*, Danshi Li*, Xinqiang Yu*, Haoran Geng, Yufei Ding, Jiayi Chen, He Wang†

CoRL 2024

We synthesized a large-scale dexterous grasping dataset in cluttered scenes and designed a generative framework to learn grasping in the real world.

Simulately: Handy information and resources for physics simulators for robot learning research.

Haoran Geng, Yuyang Li, Yuzhe Qin, Ran Gong, Wensi Ai, Yuanpei Chen, Puhao Li, Junfeng Ni, Zhou Xian, Songlin Wei, Yang You, Yufei Ding, Jialiang Zhang

Website / GitHub

Open-source Project

Selected into CMU 16-831t

Simulately is a project where we gather useful information on robotics and physics simulators for cutting-edge robot learning research.

Selected Awards